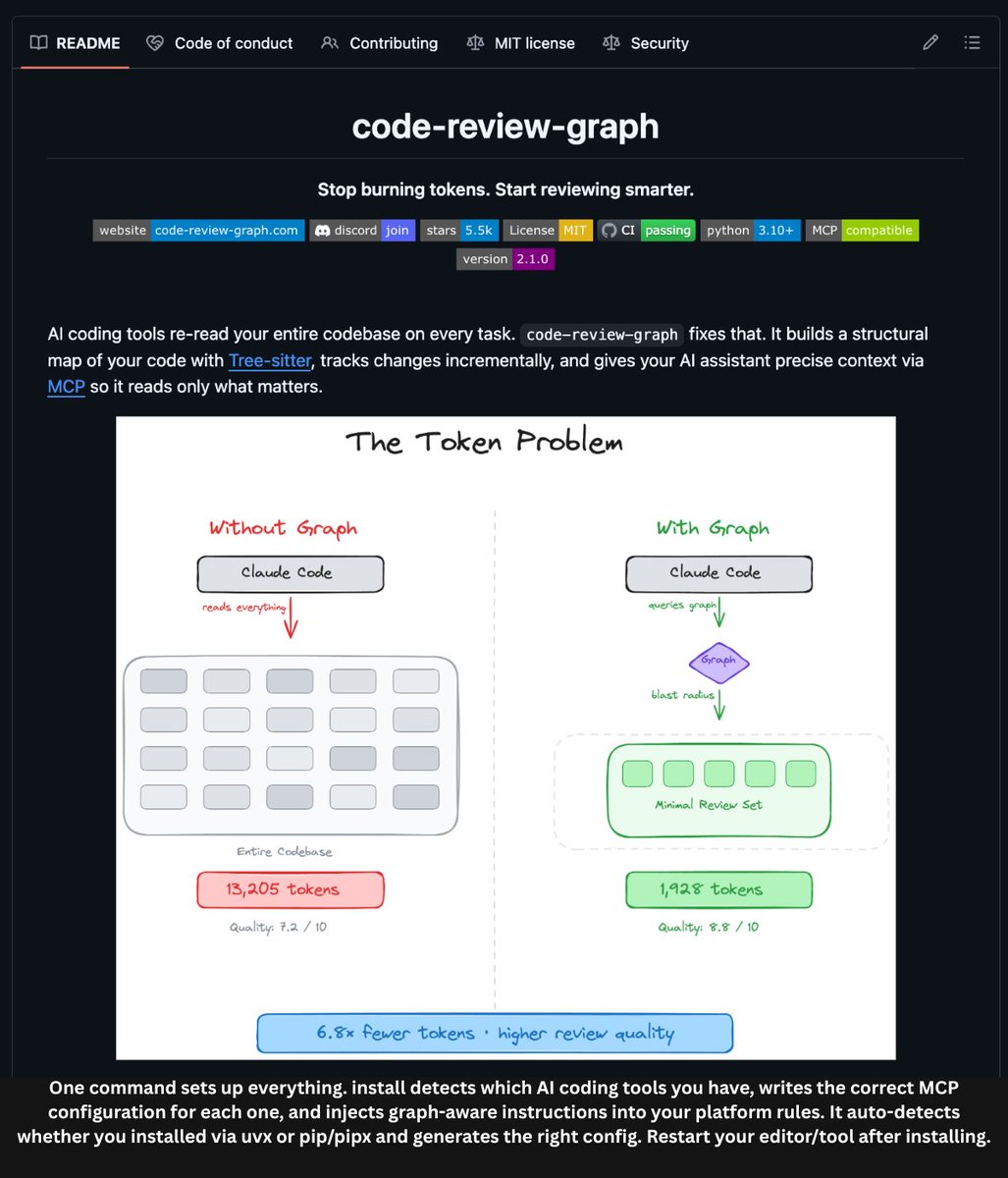

A new partnership between Google and Broadcom shifts the manufacturing model for Google's custom AI accelerators. According to a report, Broadcom will now be responsible for turning Google's Tensor Processing Unit (TPU) intellectual property into physical chips and networking systems. In this arrangement, Google earns licensing revenue from its IP without directly managing the capital-intensive hardware fabrication process.

What the Deal Entails

Under this agreement, Broadcom acts as the silicon implementation partner and systems integrator for Google's TPU designs. Google provides the chip architecture and IP: the blueprints for the specialized AI accelerators that power services like Google Search, Gemini (formerly Bard), and its cloud AI offerings. Broadcom then takes those designs through manufacturing and packaging, and integrates the resulting chips into the networking hardware (such as switches and interconnects) required for large-scale AI training clusters.

This is a significant evolution from Google's previous approach. Historically, Google has co-designed its TPUs with partners but maintained a very hands-on role in the specification and validation process. This move suggests a deeper delegation of the physical implementation and supply chain management to a dedicated semiconductor partner.

The Strategic Shift for Google

This partnership represents a classic "fabless" or "IP licensing" model, common in the semiconductor industry, but applied to a hyperscaler's most critical internal silicon. For Google, the primary benefits are:

- Capital Efficiency: It offloads the immense capital expenditure (CapEx) and operational complexity of advanced chip fabrication to a specialist.

- IP Monetization: It creates a direct revenue stream from its substantial R&D investment in TPU architecture.

- Focus: It allows Google's engineers to concentrate on core AI model and software stack development, while relying on Broadcom's manufacturing scale and networking expertise.

For Broadcom, this secures a massive, long-term design win with one of the world's largest consumers of AI chips. It leverages Broadcom's strengths in custom ASIC design, advanced packaging, and its dominant position in data center networking silicon.

The Competitive AI Silicon Landscape

This deal occurs within a fiercely competitive arena for AI compute. Google's TPUs compete directly with:

- NVIDIA's GPUs (H100, H200, Blackwell): The current market leader for training and inference.

- AMD's Instinct MI300 Series: Gaining traction as a high-performance alternative.

- AWS's Inferentia & Trainium Chips: Amazon's in-house custom silicon for its cloud.

- Microsoft's Maia AI Accelerator: Built for its Azure cloud, with design feedback from OpenAI.

Handing physical implementation and supply to Broadcom suggests Google is doubling down on its vertical integration strategy for AI infrastructure while keeping the model capital-light. The aim is a reliable, scalable supply of cutting-edge silicon to compete with the GPU-centric roadmaps of its rivals.

gentic.news Analysis

This partnership is a logical, yet pivotal, step in the maturation of the custom AI silicon ecosystem. It formalizes a trend we've been tracking: hyperscalers are moving from being mere consumers of chips to becoming architects and licensors of semiconductor IP. This follows Google's nearly decade-long journey with TPUs, which began as a secret project to accelerate search and has evolved into a strategic pillar for its entire AI empire.

The choice of Broadcom is particularly telling. Broadcom is not a leading-edge logic foundry like TSMC; it is a powerhouse in custom ASICs, networking, and system-level integration. This indicates Google is prioritizing the system-level performance of its AI clusters—the interconnection between thousands of TPUs—as much as, if not more than, pure transistor-level gains. The networking component of this deal is crucial. As AI models grow, the bottleneck increasingly shifts from raw FLOPs to communication bandwidth between chips. Broadcom's dominance in Ethernet switching and custom interconnect solutions (like its Jericho3-AI platform) is likely a key value driver here.
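To make the bandwidth point concrete, here is a rough back-of-envelope sketch in Python. It is not drawn from the report. The ring all-reduce cost is the usual bandwidth-only approximation, the "6 × parameters × tokens" rule is a common estimate for training FLOPs, and every number (model size, chip throughput, link speed, cluster size) is a hypothetical placeholder rather than a TPU or Broadcom specification.

```python
"""Back-of-envelope comparison of compute time and gradient-sync time.

All figures below are illustrative assumptions, not TPU or Broadcom specs.
"""


def ring_allreduce_seconds(payload_bytes, num_chips, link_bytes_per_s):
    """Bandwidth-only cost of a ring all-reduce.

    Each chip sends (and receives) 2 * (N - 1) / N of the payload over its
    link. Per-message latency is ignored in this simplified model.
    """
    if num_chips < 2:
        return 0.0
    return 2 * (num_chips - 1) / num_chips * payload_bytes / link_bytes_per_s


def compute_seconds(params, tokens_per_chip, peak_flops_per_s, utilization):
    """Per-step compute time using the ~6 * params * tokens rule of thumb."""
    return 6 * params * tokens_per_chip / (peak_flops_per_s * utilization)


def comm_share(compute_s, comm_s):
    """Fraction of a step spent communicating, assuming no overlap."""
    return comm_s / (compute_s + comm_s)


if __name__ == "__main__":
    params = 10e9            # hypothetical 10B-parameter model
    grad_bytes = 2 * params  # assumes 2-byte (bf16) gradients
    comm = ring_allreduce_seconds(grad_bytes, num_chips=256,
                                  link_bytes_per_s=50e9)

    # Make the chip faster while the link stays the same speed.
    for peak in (200e12, 400e12, 800e12):
        comp = compute_seconds(params, tokens_per_chip=4096,
                               peak_flops_per_s=peak, utilization=0.5)
        print(f"peak={peak:.0e} FLOP/s  compute={comp:.3f}s  "
              f"comm={comm:.3f}s  comm share={comm_share(comp, comm):.0%}")
```

In this toy model, each doubling of chip throughput halves compute time but leaves the gradient-sync time unchanged, so communication takes up a growing share of every training step. Real systems overlap communication with compute and use more elaborate topologies, so treat the output as a direction of travel, not a prediction.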

This aligns with a broader industry trend of disaggregation. Just as companies like Apple design their own chips (A-series, M-series) but contract manufacturing to TSMC, Google is now applying that model to data center AI accelerators. It also creates a new competitive dynamic. While NVIDIA sells a tightly integrated hardware-software stack (GPU + NVLink + CUDA), Google and Broadcom are offering an alternative stack: Google's TPU IP + software frameworks (JAX, TensorFlow) + Broadcom's networking. The success of this partnership will hinge on whether this stack can match or exceed the performance and developer ease-of-use of the entrenched CUDA ecosystem.

Frequently Asked Questions

What is a Tensor Processing Unit (TPU)?

A Tensor Processing Unit (TPU) is Google's custom-developed application-specific integrated circuit (ASIC) designed to accelerate machine learning workloads, particularly those using TensorFlow and JAX frameworks. It is optimized for the high-volume matrix multiplications that are fundamental to neural network training and inference.
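As a minimal illustration of the operation in question, the pure-Python sketch below multiplies two small matrices and counts the floating-point operations involved. The function names are hypothetical, and the code says nothing about how a TPU actually executes the work; it only shows why a dense neural network layer reduces to a large number of multiply-add steps.

```python
def matmul(a, b):
    """Multiply matrix a (m x k) by matrix b (k x n), both lists of rows."""
    inner = len(b)
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    cols = len(b[0])
    return [
        [sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for row in a
    ]


def matmul_flops(m, k, n):
    """Approximate FLOPs for an (m x k) @ (k x n) product.

    Each of the m * n outputs needs k multiplies and about k adds,
    which is conventionally counted as 2 * m * k * n.
    """
    return 2 * m * k * n


if __name__ == "__main__":
    # A tiny "layer": 2 input examples, 2 features, 2 output units.
    inputs = [[1, 2], [3, 4]]
    weights = [[5, 6], [7, 8]]
    print(matmul(inputs, weights))

    # The same operation at a more realistic (still hypothetical) size.
    print(matmul_flops(4096, 8192, 8192))
```

The operation count grows with the product of all three dimensions, which is why hardware built around dense matrix multiplication pays off for large models.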

Why would Google license its TPU IP instead of building the chips itself?

Google is a software and services company, not a semiconductor manufacturer. Building chips at the cutting edge requires tens of billions of dollars in fab construction and maintenance. By licensing its IP to Broadcom, Google can generate revenue from its R&D, avoid massive capital expenditure, and leverage Broadcom's specialized expertise in custom chip implementation, packaging, and high-speed data center networking.

How does this affect Google Cloud customers?

In the short term, this partnership is unlikely to cause immediate changes for Google Cloud customers using TPU v4 or v5e instances. The long-term goal is to ensure a reliable, cost-effective, and performance-competitive supply of next-generation TPUs. If successful, it could allow Google to offer more powerful or affordable AI training and inference options on Google Cloud, strengthening its position against AWS and Microsoft Azure.

Is Broadcom now a competitor to NVIDIA in AI chips?

Not directly. Broadcom is acting as a manufacturer and systems integrator for Google's proprietary design. It is not selling a "Broadcom AI Chip" on the open market. However, by enabling Google's TPU roadmap, Broadcom is indirectly supporting a major alternative to NVIDIA's GPU ecosystem, making it a strategic player in the AI infrastructure war.