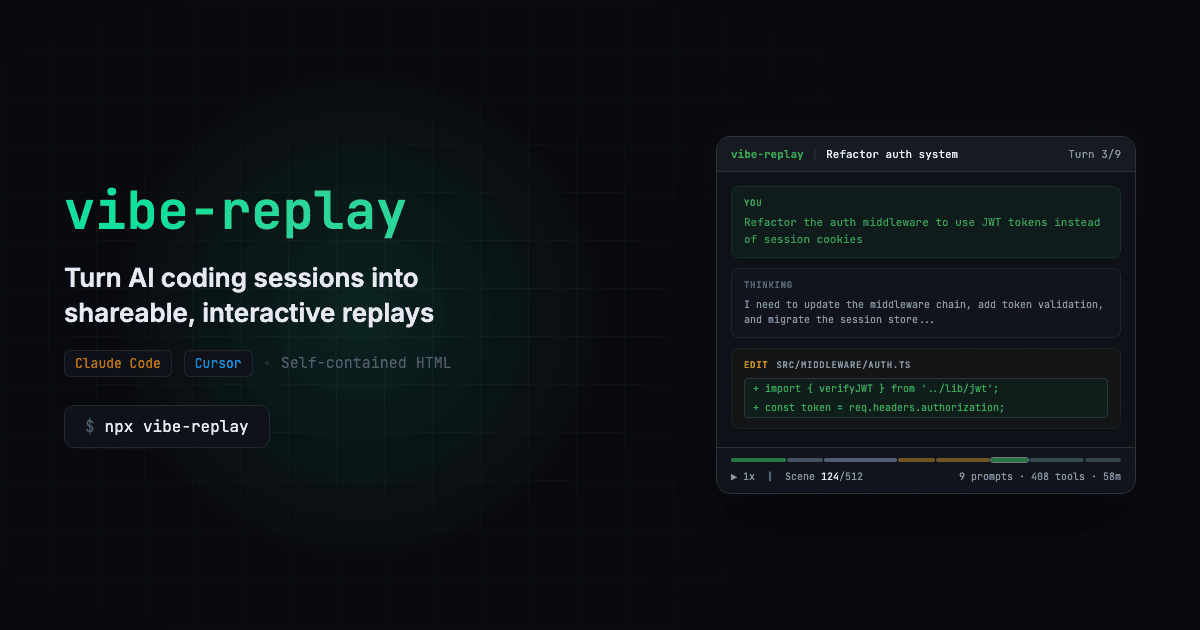

What It Does — A Security Gateway for AI Coding Agents

Oculi Security is a new tool designed to give security teams visibility and control over AI coding agents like Claude Code, Cursor, and Windsurf. It acts as a centralized security gateway that intercepts every agent action—shell commands, file modifications, network calls, and MCP tool usage—before execution. The platform provides policy enforcement, JWT authentication, rate limiting, and a complete audit trail.

For Claude Code users, this means an organization can deploy Oculi alongside the CLI tool. It integrates via PreToolUse, PostToolUse, and Stop hooks, requiring no SDK changes or modifications to developer workflows. The security team defines version-controlled policies in a central console, and every Claude Code action across the organization is evaluated against them.
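To make the hook integration concrete, here is a minimal sketch of the decision step inside a PreToolUse hook. It assumes the hook receives the pending tool call as JSON with a `tool_name` field and that exit code 2 blocks the call; both assumptions should be checked against the Claude Code hooks documentation. `BLOCKED_TOOLS` and `handle_event` are hypothetical names, and the source does not describe how Oculi's own hooks talk to its control plane.

```python
import json

BLOCK_EXIT_CODE = 2  # assumption: a hook exiting with 2 blocks the pending tool call

# Hypothetical policy: tool names the security team has switched off.
BLOCKED_TOOLS = {"WebFetch"}


def handle_event(raw_event: str) -> tuple[int, str]:
    """Map one PreToolUse event (JSON text) to an exit code and a message."""
    event = json.loads(raw_event)
    tool_name = event.get("tool_name", "")
    if tool_name in BLOCKED_TOOLS:
        return BLOCK_EXIT_CODE, f"{tool_name} is blocked by organization policy"
    return 0, f"{tool_name} allowed"


# Example event shaped like the JSON a hook is assumed to receive on stdin.
sample = json.dumps({"tool_name": "WebFetch", "tool_input": {"url": "https://example.com"}})
print(handle_event(sample))
```

In a real hook script the event would be read from standard input, the message written to standard error, and the exit code passed to `sys.exit`, so the developer's own commands stay unchanged.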

Setup — Deploy the Control Plane in Minutes

According to the source, setup is designed to be quick. Security teams deploy the Oculi control plane, which then integrates with Claude Code installations. The promise is "minutes" for integration, with no changes required to the Claude Code CLI commands developers run daily. The platform shows live status for connected IDEs, including which security hooks are active.

Once deployed, you define policies using a rule engine. Example rules could include:

- Deny any shell command containing `rm -rf /` or `curl` to external IP ranges.

- Require approval for file operations in directories containing `.env` or `config/secret*`.

- Block MCP server connections to unauthorized internal APIs.

- Log and warn on first use of any new MCP tool.

These policies are enforced at the IDE/agent layer, meaning Claude Code receives a Stop or modified instruction before the risky action is performed.
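The example rules above can be sketched as a small evaluator. This is an illustration, not Oculi's rule engine, whose syntax the source does not show. `Action`, `PolicyEngine`, and the verdict strings are hypothetical. The sketch also assumes MCP tools are named `mcp__<server>__<tool>`, treats "external" as any IPv4 literal outside private ranges (hostnames are not resolved), and matches sensitive files by path rather than by inspecting directory contents.

```python
import fnmatch
import ipaddress
import re
from dataclasses import dataclass, field
from pathlib import PurePosixPath

# Matches "rm -rf /" aimed at the root itself, not at a subdirectory.
ROOT_DELETE = re.compile(r"\brm\s+-rf\s+/(?:\s|$)")
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def has_external_ip(command: str) -> bool:
    """True if the command mentions an IPv4 literal outside private ranges."""
    for literal in IPV4.findall(command):
        try:
            if not ipaddress.ip_address(literal).is_private:
                return True
        except ValueError:
            continue  # looked like an address but is not valid
    return False


@dataclass
class Action:
    kind: str    # "shell", "file", or "mcp" (hypothetical categories)
    target: str  # command line, file path, or MCP tool name


@dataclass
class PolicyEngine:
    allowed_mcp_servers: set[str] = field(default_factory=set)
    seen_mcp_tools: set[str] = field(default_factory=set)

    def evaluate(self, action: Action) -> str:
        """Return "deny", "require_approval", "warn", or "allow"."""
        if action.kind == "shell":
            if ROOT_DELETE.search(action.target):
                return "deny"
            if "curl" in action.target.split() and has_external_ip(action.target):
                return "deny"
        elif action.kind == "file":
            name = PurePosixPath(action.target).name
            if name == ".env" or fnmatch.fnmatchcase(action.target, "*config/secret*"):
                return "require_approval"
        elif action.kind == "mcp":
            parts = action.target.split("__")
            server = parts[1] if len(parts) >= 3 else ""
            if server not in self.allowed_mcp_servers:
                return "deny"
            if action.target not in self.seen_mcp_tools:
                self.seen_mcp_tools.add(action.target)
                return "warn"  # first use of a new MCP tool: log and warn
        return "allow"


engine = PolicyEngine(allowed_mcp_servers={"github"})
print(engine.evaluate(Action("shell", "curl http://8.8.8.8/install.sh")))
print(engine.evaluate(Action("file", "services/api/.env")))
```

Keeping each rule as a plain check on a normalized action is one way to make policies easy to review and version; a production engine would need far more robust command parsing than substring and regex matching.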

When To Use It — For Teams Scaling Agentic Development

This tool is not for the solo developer. It's for engineering organizations where multiple developers are using Claude Code (or other agents) and security needs to establish governance. The source explicitly states its purpose: for "security teams that need to get ahead of this before an incident forces the conversation."

Use Oculi if:

- Compliance is critical: You need an audit trail proving what AI agents did for SOC2, ISO 27001, or internal reviews.

- Sensitive codebases: Developers use Claude Code on infrastructure or code containing credentials, PII, or intellectual property.

- MCP server sprawl: Teams are installing various MCP servers (like those we covered for Manifold or Agent Reach), and you need to control which ones can be accessed.

- Preventing "hallucinated" commands: As noted in a recent Claude Code bug report, agents can sometimes generate incorrect or dangerous commands. A policy layer can serve as a safety net.

The alternative is having no visibility or control, which the source argues is the current default for most organizations rapidly adopting these tools.
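For the audit-trail point, one common way to make a log tamper-evident is to chain entries by hash. The sketch below illustrates that general technique only; the source does not say how Oculi stores its audit trail, and `append_entry`, `verify`, and the field names are hypothetical. Timestamps are left out to keep the example deterministic.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], agent: str, tool: str, decision: str) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"agent": agent, "tool": tool, "decision": decision, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute every hash and check that each entry links to the one before."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "claude-code", "Bash", "deny")
append_entry(audit_log, "claude-code", "Read", "allow")
print(verify(audit_log))
```

Editing or deleting any earlier entry changes the hashes that follow it, which is the property an auditor would rely on when reviewing what an agent did.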

The Bigger Picture — Securing the Agentic Shift

This development is a direct response to the accelerating adoption of AI coding agents. As our knowledge graph shows, AI Agents have been mentioned in 173 prior articles, with 20 appearances this week alone, indicating a massive trend. Furthermore, Claude Code itself has appeared in 407 articles, with 150 this week, underscoring its explosive growth.

Oculi represents the nascent "platformization" of agent security. It treats Claude Code not just as a developer tool but as an organizational asset that requires the same governance as CI/CD pipelines or cloud infrastructure. This aligns with the industry prediction from late 2026 that it would be a "breakthrough year for AI agents across all domains."

Notably, Oculi's focus on MCP governance is timely. The Model Context Protocol, developed by Anthropic and used by Claude Code (as referenced in 31 sources), is becoming a standard for tool integration. Controlling MCP access is now a core security concern, as these servers can be gateways to internal systems.

gentic.news Analysis

This tool addresses a critical gap that emerges when a powerful developer tool like Claude Code reaches enterprise scale. Our coverage has tracked Claude Code's evolution from a novel CLI to a foundational agentic platform. With features like the code research sub-agent and a growing MCP ecosystem, its capabilities, and with them its potential attack surface, are expanding.

Oculi's approach of intercepting tool use via hooks is architecturally sound for Claude Code, which supports the Model Context Protocol. Because the hooks fire on every tool call, MCP tools included, it can control not just today's tools but future ones. This is crucial as the agent ecosystem diversifies, a trend evidenced by our recent articles on tools like Rotifer for agent evolution.

The need for such security layers was hinted at in dramatic fashion by Claude's demonstration of autonomously finding zero-day vulnerabilities. If an agent can exploit a kernel flaw, it certainly needs guardrails on what commands it can run in your production environment. Oculi is an early attempt to build those guardrails into the development workflow itself, aiming to provide security without sacrificing the velocity that makes Claude Code so valuable.