What It Does — Automatic Token Compression for Coding Agents

Tamp is a local HTTP proxy that sits between your coding agent (Claude Code, Aider, Cursor, etc.) and the AI provider's API. It automatically compresses tool_result blocks in API requests before forwarding them upstream, cutting input tokens by 52.6% on average. The key insight: coding agents send large amounts of structured data (JSON, arrays, line-numbered output) that can be compressed aggressively without losing meaning.

Source code and error messages pass through untouched; only verbose structured data gets compressed. This happens transparently: once your agent points at the proxy, you use it exactly as before.
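Here is a minimal Python sketch of the idea. It assumes the Anthropic Messages request shape, where tool output arrives in user messages as content blocks with "type": "tool_result", and it uses JSON minification as the only compressor. The function names are hypothetical, and this is an illustration rather than Tamp's own (Node.js) implementation. Text that does not parse as JSON, such as a traceback or a source file, is returned unchanged.

```python
import json
from typing import Any, Dict


def minify_if_json(text: str) -> str:
    """Return compact JSON if `text` is a JSON object/array; otherwise return it unchanged."""
    stripped = text.strip()
    if not stripped.startswith(("{", "[")):
        return text  # source code, error messages, prose: pass through untouched
    try:
        parsed = json.loads(stripped)
    except json.JSONDecodeError:
        return text
    return json.dumps(parsed, separators=(",", ":"), ensure_ascii=False)


def compress_tool_results(body: Dict[str, Any]) -> Dict[str, Any]:
    """Rewrite tool_result blocks of an Anthropic-style request body in place."""
    for message in body.get("messages", []):
        content = message.get("content")
        if not isinstance(content, list):
            continue  # plain-string content cannot hold tool_result blocks
        for block in content:
            if not isinstance(block, dict) or block.get("type") != "tool_result":
                continue
            inner = block.get("content")
            if isinstance(inner, str):
                block["content"] = minify_if_json(inner)
            elif isinstance(inner, list):
                # tool_result content may also be a list of text blocks
                for part in inner:
                    if isinstance(part, dict) and part.get("type") == "text":
                        part["text"] = minify_if_json(part["text"])
    return body


request = {
    "model": "example-model",
    "messages": [
        {"role": "user", "content": [
            {"type": "tool_result", "tool_use_id": "toolu_1",
             "content": '{\n  "files": [\n    "a.py",\n    "b.py"\n  ]\n}'},
            {"type": "tool_result", "tool_use_id": "toolu_2",
             "content": "NameError: name 'x' is not defined"},
        ]},
    ],
}
compress_tool_results(request)
print(request["messages"][0]["content"][0]["content"])  # {"files":["a.py","b.py"]}
```

The second block keeps its error message verbatim, which mirrors the "source code and error messages pass through untouched" behavior described above.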

Setup — Two Minutes to Start Saving Tokens

Install and run Tamp with a single command:

npx @sliday/tamp

Or install globally:

curl -fsSL https://tamp.dev/setup.sh | bash

On first launch, Tamp shows an interactive prompt letting you toggle compression methods. Use -y to skip this in CI/scripts:

npx @sliday/tamp -y

Tamp runs on localhost:7778 and auto-detects your agent's API format (Anthropic, OpenAI, Gemini).
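The article doesn't say how auto-detection works. One plausible heuristic, sketched here in Python purely as an assumption, is to look at the request path each client calls: Anthropic's Messages endpoint ends in /messages, OpenAI-compatible clients post to a path ending in /chat/completions, and Gemini uses model paths ending in :generateContent or :streamGenerateContent. The function name is hypothetical.

```python
def detect_api_format(path: str) -> str:
    """Guess the provider API family from a request path (hypothetical heuristic)."""
    route = path.split("?", 1)[0]  # ignore query strings such as ?key=... or ?alt=sse
    if route.endswith("/messages"):
        return "anthropic"
    if route.endswith("/chat/completions"):
        return "openai"
    if route.endswith(":generateContent") or route.endswith(":streamGenerateContent"):
        return "gemini"
    return "unknown"


print(detect_api_format("/v1/messages"))  # anthropic
print(detect_api_format("/chat/completions"))  # openai
print(detect_api_format("/v1beta/models/gemini-2.0-flash:generateContent?key=abc"))  # gemini
```

Matching on the path suffix rather than an exact path tolerates base URLs configured with or without a /v1 segment.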

Configure Your Agent — One Environment Variable

For Claude Code:

export ANTHROPIC_BASE_URL=http://localhost:7778

claude

For Aider:

export OPENAI_API_BASE=http://localhost:7778

aider

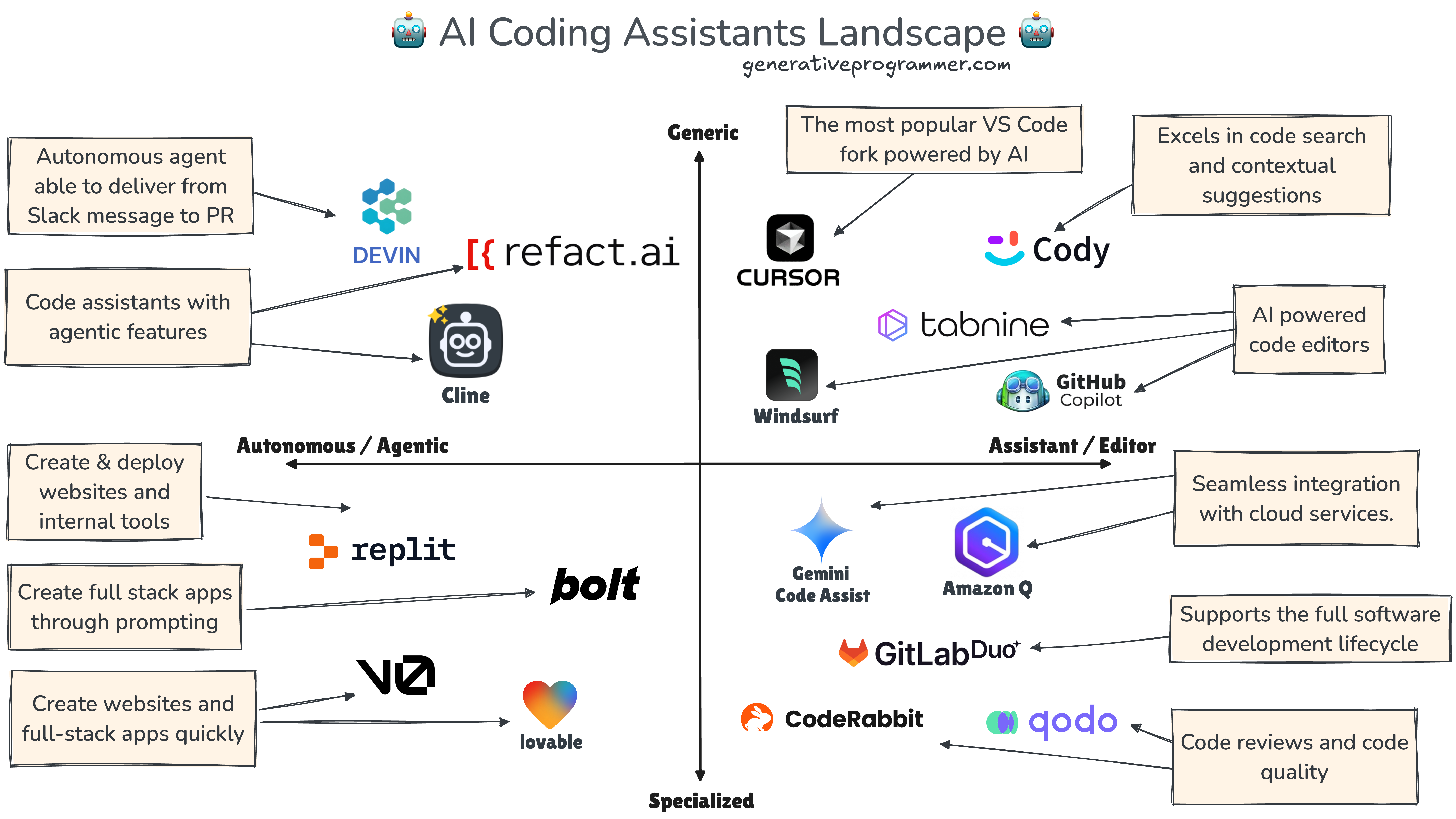

For Cursor/Cline/Windsurf: Set the API base URL to http://localhost:7778 in your editor's settings.

That's it. Tamp compresses silently in the background while you work.

How The Compression Works — Five Optimization Stages

Tamp applies multiple compression techniques, all enabled by default:

- JSON minify — Removes unnecessary whitespace from JSON structures

- TOON columnar encoding — Rewrites arrays of same-shaped objects in a columnar layout, listing the shared keys once instead of repeating them in every element

- Strip line-number prefixes — Removes 1:, 2:, etc. from numbered output

- General whitespace reduction — Compresses other structured text

- LLMLingua integration — Advanced semantic compression (requires Python)

You can choose which stages run by listing them in the TAMP_STAGES environment variable; any stage you leave out is skipped:

# Skip LLMLingua (no Python dependency)

TAMP_STAGES=minify,toon,strip-lines,whitespace npx @sliday/tamp -y
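The TAMP_STAGES value reads as a comma-separated allow-list. Below is a hedged Python sketch of how such a variable could be parsed. The four names come from the example above. The "llmlingua" key for the fifth stage, the fixed pipeline order, and the error on unknown names are assumptions, not documented behavior.

```python
import os
from typing import List, Mapping, Optional

# Pipeline order. The first four names come from the TAMP_STAGES example;
# "llmlingua" is a hypothetical key for the fifth stage.
ALL_STAGES = ["minify", "toon", "strip-lines", "whitespace", "llmlingua"]


def enabled_stages(env: Optional[Mapping[str, str]] = None) -> List[str]:
    """Return the stages to run, in pipeline order, from TAMP_STAGES."""
    env = os.environ if env is None else env
    raw = env.get("TAMP_STAGES", "")
    if not raw.strip():
        return list(ALL_STAGES)  # unset or empty: every stage is on by default
    requested = {name.strip() for name in raw.split(",") if name.strip()}
    unknown = sorted(requested - set(ALL_STAGES))
    if unknown:
        raise ValueError(f"unknown TAMP_STAGES entries: {', '.join(unknown)}")
    # Assumption: stages always run in a fixed order, whatever order they are listed in
    return [name for name in ALL_STAGES if name in requested]


print(enabled_stages({"TAMP_STAGES": "minify,toon,strip-lines,whitespace"}))
# ['minify', 'toon', 'strip-lines', 'whitespace']
```

Rejecting unknown names turns a typo such as "strip-line" into an immediate error instead of a silently disabled stage.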

Why This Matters — Compounding Savings

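Because each API call resends the full conversation history, with earlier tool results in their original uncompressed form, Tamp's compression compounds with every turn: every earlier tool result is compressed again on every request. An in-memory cache ensures identical content is compressed only once per session, so those repeats cost almost nothing to process. The longer the conversation, the larger the total savings.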
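A small Python simulation makes the turn-by-turn effect concrete. It assumes the cache is keyed by a SHA-256 hash of each block's text and lives in process memory. The article only says identical content is compressed once per session, so CompressionCache and simulate are hypothetical names, and whitespace collapsing stands in for the real compressor. Over 10 turns the proxy handles 55 tool-result blocks (one on the first turn, two on the second, and so on up to ten) but runs the compressor only 10 times.

```python
import hashlib
from typing import Callable, Dict, List, Tuple


class CompressionCache:
    """Memoize compression results by content hash for the lifetime of one session."""

    def __init__(self, compress: Callable[[str], str]) -> None:
        self._compress = compress
        self._results: Dict[str, str] = {}
        self.misses = 0  # how many times the real compressor actually ran

    def get(self, text: str) -> str:
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key not in self._results:
            self.misses += 1
            self._results[key] = self._compress(text)
        return self._results[key]


def simulate(turns: int) -> Tuple[int, int]:
    """Each turn adds one tool result, then the agent resends the whole history."""
    cache = CompressionCache(lambda s: " ".join(s.split()))  # stand-in compressor
    history: List[str] = []
    lookups = 0
    for turn in range(turns):
        history.append(f"tool output   for   turn {turn}")
        for block in history:  # every earlier block arrives again, uncompressed
            cache.get(block)
            lookups += 1
    return lookups, cache.misses


print(simulate(10))  # (55, 10)
```

Lookups grow roughly with the square of the number of turns while compressor runs grow linearly, which is why caching makes recompressing the whole history on every request affordable.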

What About Codex?

Tamp originally supported Codex CLI but pulled support because Codex uses OpenAI's Responses API (POST /v1/responses) with a different request shape than Chat Completions. Codex also sends zstd-compressed bodies, adding another layer of complexity. The developers plan to revisit support once the Responses API format stabilizes.
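To show what "a different request shape" means, here is a Python sketch of where tool output sits in each format. It is based on OpenAI's public API reference and simplified. Chat Completions carries tool output in messages with "role": "tool", while the Responses API carries it in input items with "type": "function_call_output". The function names are hypothetical, and the zstd decoding a Codex body would need first is not shown.

```python
from typing import Any, Dict, Iterator


def chat_completions_tool_outputs(body: Dict[str, Any]) -> Iterator[str]:
    """Chat Completions: each tool result is a message with role "tool"."""
    for message in body.get("messages", []):
        if message.get("role") == "tool" and isinstance(message.get("content"), str):
            yield message["content"]


def responses_tool_outputs(body: Dict[str, Any]) -> Iterator[str]:
    """Responses API: each tool result is an `input` item of type "function_call_output"."""
    items = body.get("input")
    if not isinstance(items, list):  # `input` may also be a plain string prompt
        return
    for item in items:
        if isinstance(item, dict) and item.get("type") == "function_call_output":
            output = item.get("output")
            if isinstance(output, str):
                yield output


chat_body = {
    "model": "example-model",
    "messages": [
        {"role": "user", "content": "list the files"},
        {"role": "assistant", "content": None, "tool_calls": [
            {"id": "call_1", "type": "function",
             "function": {"name": "ls", "arguments": "{}"}}]},
        {"role": "tool", "tool_call_id": "call_1", "content": '["a.py", "b.py"]'},
    ],
}
responses_body = {
    "model": "example-model",
    "input": [
        {"type": "function_call", "call_id": "call_1", "name": "ls", "arguments": "{}"},
        {"type": "function_call_output", "call_id": "call_1", "output": '["a.py", "b.py"]'},
    ],
}

print(list(chat_completions_tool_outputs(chat_body)))  # ['["a.py", "b.py"]']
print(list(chat_completions_tool_outputs(responses_body)))  # [] because there is no "messages" key
print(list(responses_tool_outputs(responses_body)))  # ['["a.py", "b.py"]']
```

A proxy that only walks Chat Completions messages would find nothing to compress in a Responses API body, so supporting Codex means a second request walker plus body decompression.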

Advanced Configuration

All configuration happens through environment variables:

- TAMP_STAGES — Controls which compression stages run

- TAMP_PORT — Changes the listening port from the default 7778

- TAMP_LOG_LEVEL — Sets log verbosity: debug, info, warn, or error
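Here is a hedged Python sketch of reading the port and log-level variables with validation. The 7778 default comes from the article. The "info" default for TAMP_LOG_LEVEL, the case-insensitive matching, and the load_settings name are assumptions.

```python
import os
from typing import Any, Dict, Mapping, Optional

LOG_LEVELS = ("debug", "info", "warn", "error")


def load_settings(env: Optional[Mapping[str, str]] = None) -> Dict[str, Any]:
    """Read TAMP_PORT and TAMP_LOG_LEVEL, falling back to defaults when unset."""
    env = os.environ if env is None else env
    port_raw = env.get("TAMP_PORT", "7778")
    try:
        port = int(port_raw)
    except ValueError:
        raise ValueError(f"TAMP_PORT must be an integer, got {port_raw!r}") from None
    if not 1 <= port <= 65535:
        raise ValueError(f"TAMP_PORT out of range: {port}")
    # Assumed default; the article lists the levels but not which one is the default.
    level = env.get("TAMP_LOG_LEVEL", "info").strip().lower()
    if level not in LOG_LEVELS:
        raise ValueError(f"TAMP_LOG_LEVEL must be one of {', '.join(LOG_LEVELS)}, got {level!r}")
    return {"port": port, "log_level": level}


print(load_settings({}))  # {'port': 7778, 'log_level': 'info'}
print(load_settings({"TAMP_PORT": "8080", "TAMP_LOG_LEVEL": "DEBUG"}))  # {'port': 8080, 'log_level': 'debug'}
```

If you change the port, the base URL you give your agent (for example ANTHROPIC_BASE_URL) has to use the same port.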

Run from source if you want to modify the compression logic:

git clone https://github.com/sliday/tamp.git

cd tamp && npm install

node bin/tamp.js

The Bottom Line

Tamp delivers immediate token savings with zero workflow disruption. For teams running Claude Code at scale, fewer input tokens per request could translate into a significant cost reduction. For individual developers, it stretches the effective context window without changing how you prompt.