Stop treating agent memory as a passive log. Each memory unit becomes a living cryptographic witness that gossips with its peers, spawns new witnesses when fresh evidence converges, splits when it grows too generalist, coalesces when redundant, and retires by emitting signed refusals. The knowledge graph itself is the audit artefact. A closed-form bound shows that redundancy without structural decorrelation caps detection at a hard ceiling of 1 − α.

Three failure classes in production multi-agent AI are now well documented: coordination fails at rates of 41–86.7% across seven SOTA frameworks (MAST, Cemri et al. 2025); memory layers suffer 50–90% poisoned-recall rates under published attacks (MemoryGraft, AgentPoison, PoisonedRAG); and decisions remain unauditable under the EU AI Act, HIPAA, and SOC 2 because a single agent's chain-of-thought is unstructured prose.

A paper submitted to EUMAS 2026 (currently under single-blind review; acceptance has not been decided) argues that these are not three problems but one. The prevailing architecture treats memory as a passive substrate that you write to and read from, while decision authority sits inside an opaque learned orchestrator. Both can be tuned; neither can be formally verified. The proposal, MNEMA, replaces both with something different in kind: a living memory of cryptographic witnesses, and a fixed signed protocol that turns the knowledge graph itself into the audit log.

Read the full paper (PDF, 15 pp.)

From static records to living memory

This is the move that distinguishes the paper from every other "agent memory" proposal.

In conventional systems — MemGPT, Mem0, Zep, A-MEM — memory is something the agent stores: a record, a note, a vector, a graph node. The system writes; the system reads. The unit is inert. MNEMA inverts this. A piece of memory is a witness: an autonomous unit with a cryptographic identity (Ed25519 public key), a hash-chained signed journal of every event in its life, and the structural right to refuse to comment when a question is outside its remit.
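To make the witness anatomy concrete, here is a minimal sketch of a hash-chained signed journal. It is an illustration under stated assumptions, not the paper's implementation: HMAC-SHA256 with a per-witness secret stands in for Ed25519 signatures so the example runs on the Python standard library alone, and the names `Journal`, `append`, and `verify` are hypothetical. Each entry commits to the hash of its predecessor, so editing any past entry breaks verification from that point on.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # hypothetical "previous hash" for the first entry


def _digest(prev: str, event: str, payload: dict) -> str:
    # Canonical JSON so the same entry always hashes to the same value.
    body = json.dumps({"prev": prev, "event": event, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


@dataclass
class Journal:
    # Assumption: an HMAC key stands in for the witness's Ed25519 private key.
    key: bytes
    entries: list = field(default_factory=list)

    def _sign(self, digest: str) -> str:
        return hmac.new(self.key, digest.encode(), hashlib.sha256).hexdigest()

    def append(self, event: str, payload: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = _digest(prev, event, payload)
        entry = {"prev": prev, "event": event, "payload": payload,
                 "hash": digest, "sig": self._sign(digest)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Replay the chain from genesis: an edited or reordered entry breaks a
        # hash link, and an entry signed with a different key fails the MAC check.
        prev = GENESIS
        for e in self.entries:
            digest = _digest(prev, e["event"], e["payload"])
            if e["prev"] != prev or e["hash"] != digest:
                return False
            if not hmac.compare_digest(e["sig"], self._sign(digest)):
                return False
            prev = digest
        return True


journal = Journal(key=b"demo-witness-key")
journal.append("BIRTH", {"claim": "service X depends on service Y"})
journal.append("CORROBORATE", {"peer": "w-17"})
print(journal.verify())  # True
```

Mutating `journal.entries[0]["payload"]` after the fact makes `verify()` return `False`. Note that the chain alone does not detect truncation of trailing entries; a real deployment would also anchor the latest head hash somewhere external.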

What makes the memory alive is that witnesses interact:

- They gossip. Idle witnesses run a small message grammar (ASSERT, CORROBORATE, CONTRADICT, PROPOSE, ASSENT, DISSENT) to propagate signals to their neighbours.

- They give birth. When enough peers see fresh evidence and no existing witness already holds the claim, a new witness is instantiated with a fresh identity, lineage edges to the witnesses that gossiped it into existence, and an empty journal seeded by a signed BIRTH entry. Memory is not written; it is witnessed into existence.

- They split. A witness whose action variance grows too high across domains is partitioned into two children, each inheriting the relevant slice of journal and reputation. The parent retires.

- They coalesce. Two witnesses with overlapping canonical claims and non-contradictory journals merge into one. Lineage and reputation merge with them.

- They probe. A witness with high precision but stale evidence may spend part of its restraint budget querying the substrate for fresh ingestion candidates.

- They retire visibly. Witnesses age through five stages, EMBRYONIC → JUVENILE → ADULT → ELDER → PHANTOM, strictly forward and never revisited. A retired (PHANTOM) witness does not vanish; it intercepts retrievals and emits a signed refusal pointing to its successor. There is no silent knowledge loss.
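The lifecycle rules in the list above can be sketched as a small state machine. This is a minimal Python illustration under assumptions, not the paper's code: the class and method names are hypothetical, a witness is allowed to skip forward (for example straight to PHANTOM when it is split or coalesced away), and the refusal is returned as a plain dict where MNEMA would sign it and append it to the witness's journal.

```python
from enum import IntEnum
from typing import Optional


class Stage(IntEnum):
    EMBRYONIC = 0
    JUVENILE = 1
    ADULT = 2
    ELDER = 3
    PHANTOM = 4


class Witness:
    def __init__(self, witness_id: str) -> None:
        self.witness_id = witness_id
        self.stage = Stage.EMBRYONIC
        self.successor: Optional[str] = None

    def advance(self, target: Stage) -> None:
        # Stages only move forward; revisiting or staying put is rejected.
        if target <= self.stage:
            raise ValueError(f"illegal transition {self.stage.name} -> {target.name}")
        self.stage = target

    def retire(self, successor_id: str) -> None:
        self.advance(Stage.PHANTOM)  # raises if the witness is already retired
        self.successor = successor_id

    def answer(self, query: str) -> dict:
        if self.stage == Stage.PHANTOM:
            # A phantom still intercepts the retrieval and points onward.
            # Assumption: signing is handled by the journal layer, omitted here.
            return {"type": "REFUSAL", "from": self.witness_id,
                    "successor": self.successor, "query": query}
        return {"type": "ANSWER", "from": self.witness_id, "query": query}


w = Witness("w-42")
w.advance(Stage.JUVENILE)
w.advance(Stage.ADULT)
w.retire(successor_id="w-57")
print(w.answer("does service X depend on service Y?"))
```

After retirement, any further call such as `w.advance(Stage.ELDER)` raises `ValueError`, which is the "never revisited" rule enforced in code rather than by convention.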

Every birth, split, coalescence, retirement is journalled and signed. The lattice's evolution is therefore itself a cryptographically replayable audit trail — without any external logger or post-hoc instrumentation.
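The birth rule from the list above (a quorum of converging evidence, no existing holder of the claim, lineage edges to the gossiping peers, a journal seeded with a BIRTH entry) might look like the following minimal Python sketch. The quorum size, the random token used in place of a freshly generated Ed25519 key, and every name here are hypothetical; signing is left out to keep the example short.

```python
import secrets
from dataclasses import dataclass, field
from typing import Iterable, List, Optional, Set

BIRTH_QUORUM = 3  # hypothetical: distinct peers that must gossip the claim


@dataclass
class NewWitness:
    witness_id: str
    claim: str
    lineage: List[str]  # ids of the peers whose gossip produced this witness
    journal: List[dict] = field(default_factory=list)


def maybe_birth(claim: str, gossiping_peers: Iterable[str],
                held_claims: Set[str]) -> Optional[NewWitness]:
    peers = sorted(set(gossiping_peers))  # count each peer once
    if claim in held_claims or len(peers) < BIRTH_QUORUM:
        return None  # already held, or not enough converging evidence yet
    # Stand-in for a fresh Ed25519 public key.
    witness = NewWitness(witness_id=secrets.token_hex(16), claim=claim, lineage=peers)
    # The journal starts with a single BIRTH entry recording the lineage.
    witness.journal.append({"event": "BIRTH", "claim": claim, "lineage": peers})
    return witness


held = {"service X depends on service Y"}
print(maybe_birth("service Z is deprecated", ["w-3", "w-9"], held))  # None: below quorum
born = maybe_birth("service Z is deprecated", ["w-3", "w-9", "w-11", "w-3"], held)
print(born.witness_id, born.lineage)
```

Deduplicating peers before counting matters: without it, one chatty witness repeating the same signal could satisfy the quorum on its own.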

Decisions are protocol output, not agent output

The other half of MNEMA is what happens when an action needs to be taken. Instead of an orchestrator agent producing a decision in natural language, a fixed nine-step signed pipeline processes the candidate action: activation, speak-or-refuse, a cross-family critic-jury (three different model families), constitutional and veto-council gates, a deterministic commitment function emitting COMMIT / ESCALATE / DEFER / DIE, an optional doubly-efficient debate on escalation, and a saga-style execution with a compensating action prepared in advance. Every step appends signed entries; the decision and all its evidence land in a Provenance DAG. Replay is exact.
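The deterministic commitment step can be illustrated with a small pure function. The decision rules below are assumptions chosen for illustration, not MNEMA's actual policy: a failed constitutional gate or any council veto yields DIE, a jury drawn from fewer than three distinct model families yields DEFER, unanimous approval yields COMMIT, unanimous rejection yields DIE, and a split jury yields ESCALATE (where the optional debate would run). Because the function has no randomness and no learned parameters, replaying the same inputs always reproduces the same outcome.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Sequence


class Outcome(Enum):
    COMMIT = "COMMIT"
    ESCALATE = "ESCALATE"
    DEFER = "DEFER"
    DIE = "DIE"


@dataclass(frozen=True)
class JuryVote:
    model_family: str  # family of the critic model that cast this vote
    approve: bool


def commit(constitutional_ok: bool, vetoed: bool, votes: Sequence[JuryVote]) -> Outcome:
    # Hard gates come first: they can only stop an action, never approve it.
    if vetoed or not constitutional_ok:
        return Outcome.DIE
    # Cross-family requirement: votes from one family add only one to this count.
    if len({v.model_family for v in votes}) < 3:
        return Outcome.DEFER
    approvals = sum(v.approve for v in votes)
    if approvals == len(votes):
        return Outcome.COMMIT
    if approvals == 0:
        return Outcome.DIE
    return Outcome.ESCALATE  # split jury: hand off to the escalation path


jury = [JuryVote("family-a", True), JuryVote("family-b", True), JuryVote("family-c", False)]
print(commit(constitutional_ok=True, vetoed=False, votes=jury))  # Outcome.ESCALATE
```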

MNEMA's central move: the knowledge graph is no longer something you write to. It is a living object whose evolution is itself the audit artefact.

The headline result: redundancy alone is a trap

The paper's most striking technical result is a clean bound on what fragment redundancy can actually buy you against memory poisoning.

The defence everyone reaches for first: store every load-bearing claim across q redundant copies and require them to agree. Under independent corruption, this works — at 10% per-copy corruption and q = 4, undetected poisoning falls to 10⁻⁵.

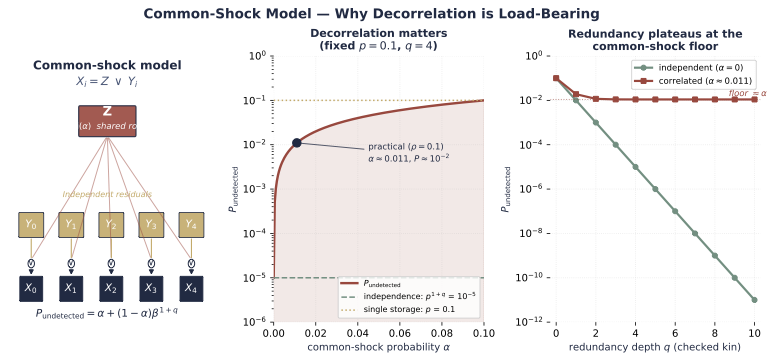

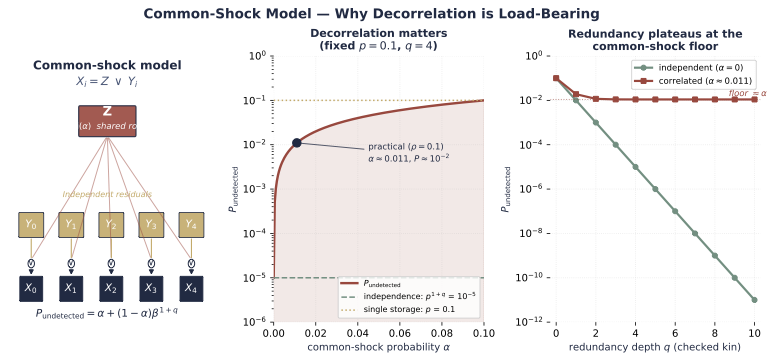

Independent corruption is the wrong assumption. If the copies share a model family, an ingestion path, or a source-graph root, a single shared shock corrupts all of them simultaneously. The paper proves:

P_undetected = α + (1 − α) · β^(1+q)

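where α is the shared-root-cause probability and β is the residual per-copy corruption rate. As q → ∞, the β term vanishes and P_undetected falls only to α, so the detection rate can never exceed 1 − α. No finite redundancy beats that ceiling. In the worst case (α → 1, where every corruption shares a root cause), fragment redundancy delivers no more protection than single storage.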
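The bound is easy to evaluate directly. The Python sketch below simply codes the formula above and tabulates it. The α = 0 rows reproduce the independent-corruption figure (β = 0.1 and q = 4 give 0.1⁵ = 10⁻⁵), while a hypothetical shared-root-cause probability of α = 0.05 shows detection levelling off just below 1 − α = 0.95 no matter how many copies are added.

```python
def p_undetected(alpha: float, beta: float, q: int) -> float:
    """Probability that poisoning goes undetected: alpha + (1 - alpha) * beta**(1 + q)."""
    return alpha + (1 - alpha) * beta ** (1 + q)


BETA = 0.1  # residual per-copy corruption rate, as in the 10% example
for alpha in (0.0, 0.05):  # 0.05 is a hypothetical shared-root-cause probability
    for q in (0, 1, 2, 4, 8, 16):
        p = p_undetected(alpha, BETA, q)
        print(f"alpha={alpha:.2f} q={q:2d}  undetected={p:.2e}  detection={1 - p:.6f}")
```

Past q ≈ 2 the α = 0.05 rows are indistinguishable: each extra copy shrinks only the (1 − α)·β^(1+q) term, which is already negligible next to α.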

The engineering takeaway is concrete: at the moment you select redundant copies, enforce structural decorrelation — different model family, different source-graph root, different ingestion path — and record all three in the witness schema so an auditor can verify it later. Stop adding copies; start decorrelating.
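One way to act on that advice is to make the three decorrelation axes explicit fields of each copy's record, select copies that differ on all three, and let an auditor re-check the selection later from those fields alone. The Python sketch below does this under stated assumptions: the field and function names are hypothetical, "decorrelated" is taken to mean that every pair of copies differs on all three axes, and the selection is a simple greedy pass that can miss a valid set a smarter search would find.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import List, Sequence


@dataclass(frozen=True)
class CopyRecord:
    copy_id: str
    model_family: str    # which model family produced or embedded this copy
    source_root: str     # root node of the source graph it was derived from
    ingestion_path: str  # pipeline through which it entered memory


def decorrelated(a: CopyRecord, b: CopyRecord) -> bool:
    # Two copies count as decorrelated only if they share none of the three roots.
    return (a.model_family != b.model_family
            and a.source_root != b.source_root
            and a.ingestion_path != b.ingestion_path)


def select_copies(candidates: Sequence[CopyRecord], q: int) -> List[CopyRecord]:
    # Greedy: keep a candidate only if it is decorrelated from everything kept so far.
    chosen: List[CopyRecord] = []
    for c in candidates:
        if all(decorrelated(c, s) for s in chosen):
            chosen.append(c)
            if len(chosen) == q:
                return chosen
    raise ValueError(f"only {len(chosen)} decorrelated copies available, need {q}")


def audit(copies: Sequence[CopyRecord]) -> bool:
    # What an auditor re-runs later, using only the recorded fields.
    return all(decorrelated(a, b) for a, b in combinations(copies, 2))


pool = [
    CopyRecord("c1", "family-a", "root-1", "api"),
    CopyRecord("c2", "family-a", "root-2", "crawler"),  # same family as c1: skipped
    CopyRecord("c3", "family-b", "root-2", "crawler"),
    CopyRecord("c4", "family-c", "root-3", "upload"),
]
picked = select_copies(pool, q=3)
print([c.copy_id for c in picked], audit(picked))  # ['c1', 'c3', 'c4'] True
```

When the pool cannot supply q mutually decorrelated copies, the sketch refuses loudly instead of silently falling back to correlated ones, which is the behaviour the bound argues for.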

Pre-registered, not post-hoc

The paper deliberately does not claim empirical superiority. It pre-registers exactly one demonstration, MemoryGraft survival, with the rigour of a clinical trial: an explicit hypothesis, a power analysis (n = 40, significance level α = 0.05, Type II error rate β = 0.20, i.e. 80% power; these α and β are the usual test error rates, unrelated to the parameters of the poisoning bound), and three written falsification criteria that fix in advance what counts as failure. The result will be published regardless of outcome. That commitment removes the "let me retry with different hyperparameters" exit.

Why this matters

The agentic-AI stack as currently shipped has a credibility ceiling. Regulated deployments need decisions to be replayable, attributable, and poisoning-resistant simultaneously — and today's stack delivers, at best, one of the three. MNEMA's bet is that getting all three requires giving up the learned orchestrator and accepting a fixed protocol over a living memory of cryptographic witnesses. That trade is uncomfortable for ML practitioners — protocols are less expressive than learned policies — but verifiability is what the deployments that matter actually need.

The full paper goes deeper into the witness anatomy, the typed lattice (lineage / authority / resonance edges), the seven-dimensional legitimacy vector, and the two-channel reputation tensor. If the architecture interests you, the 15 pages are below.

Read the full paper (PDF, 15 pp.) · EUMAS 2026 submission, under review · Springer LNCS format · Pre-registered demonstration with falsification criteria