Key Takeaways

- Five proven outer-loop workflows for using Claude Code as an AI SRE: incident triage, runbook execution, postmortem drafting, SLO investigation, and on-call handoffs.

- The bottleneck isn't the model — it's the MCP runtime.

The Context-Loading Tax Is Killing Your On-Call

It's 2:13am. PagerDuty fires. You open Datadog, find the wrong dashboard, then the right one. Then CI for recent deploys. Then Jira for open incidents. Then Slack to check if someone's already in the war room.

Eight minutes in, you have a working hypothesis. That's not incident response — that's a context-loading tax you pay before the work begins.

Claude Code already eats the inner loop (writing code, fixing bugs, refactoring). But as the team at Arcade.dev points out in their new playbook, the outer loop — operational work like incident response, runbook execution, and SLO investigation — still looks identical to how it looked five years ago.

The gap isn't the model. It's the infrastructure to run agentic tools against production with auth, scope, and audit guarantees.

Five AI SRE Workflows That Work Today

1. Incident Triage (The Archaeology Problem)

Manual triage is a parallelism problem: one engineer, five tools, sequential context loads. Claude Code flips this.

What to do: Hand the alert to Claude Code with a prompt like:

Triage this PagerDuty alert for checkout-service. Correlate with:

- Datadog metrics (p95 latency, error rate)

- Service logs (last 15 minutes)

- Deployment history (last 24 hours)

- Slack #incidents for correlated failures

Claude Code returns: the alert context in two sentences, the top three correlated signals with direct Datadog links, and the deploys most likely to matter — with commit SHAs and authors. Two to three minutes, not eight.

MCP servers needed: @pagerduty/mcp-server, @datadog/mcp-server, @slack/mcp-server, @github/mcp-server
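To make the parallelism point concrete, here is a minimal sketch of the timing difference between walking four sources one by one and querying them concurrently. It assumes nothing about how Claude Code actually schedules tool calls; `fetch_source` and the source names are hypothetical stand-ins that sleep instead of calling a real API.

```python
import asyncio
import time

# Hypothetical stand-in for an MCP tool call: sleeps to simulate network latency.
async def fetch_source(name: str, delay: float) -> tuple[str, str]:
    await asyncio.sleep(delay)
    return name, f"{name}: ok"

# Illustrative sources and latencies (assumed values, not measurements).
SOURCES = {
    "datadog_metrics": 0.05,
    "service_logs": 0.05,
    "deploy_history": 0.05,
    "slack_incidents": 0.05,
}

async def triage_sequential() -> dict[str, str]:
    # One engineer, one tab at a time: total time is the sum of all latencies.
    results = {}
    for name, delay in SOURCES.items():
        key, value = await fetch_source(name, delay)
        results[key] = value
    return results

async def triage_parallel() -> dict[str, str]:
    # Fan out all lookups at once: total time is roughly the slowest single lookup.
    pairs = await asyncio.gather(
        *(fetch_source(name, delay) for name, delay in SOURCES.items())
    )
    return dict(pairs)

def timed(coro_fn):
    # Run an async function to completion and return (result, elapsed seconds).
    start = time.perf_counter()
    result = asyncio.run(coro_fn())
    return result, time.perf_counter() - start

if __name__ == "__main__":
    _, t_seq = timed(triage_sequential)
    _, t_par = timed(triage_parallel)
    print(f"sequential: {t_seq:.2f}s, parallel: {t_par:.2f}s")
```

Both functions return the same data; only the wall-clock time differs, which is the whole argument for handing the fan-out to an agent.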

2. Runbook Execution (The Checklist Problem)

Runbooks exist for a reason — but nobody reads them during a fire. Claude Code can execute them step by step.

What to do: Point Claude Code at your runbook repo:

Run the runbook at ./runbooks/database-failover.md.

Check each step's preconditions before executing.

Log every action with timestamps.

Pause if a precondition fails.

Claude Code reads the runbook, translates each step into tool calls (SSH, kubectl, API calls), and logs every action. If a step says "Check if replica lag > 30s", it runs the query, evaluates the result, and either proceeds or surfaces the mismatch.

MCP servers needed: Custom MCP server wrapping your runbook executor, @kubernetes/mcp-server, database-access MCP server
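The check-then-execute loop described above can be sketched in a few lines. This is an illustration under stated assumptions, not the playbook's executor: the `Step` type, the step names, and the rule that promotion requires replica lag of 30 seconds or less are all hypothetical, and the actions are plain functions rather than real SSH or kubectl calls.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable

@dataclass
class Step:
    name: str
    precondition: Callable[[], bool]  # must return True before the action runs
    action: Callable[[], str]         # returns a short description of what happened

def run_runbook(steps: list[Step]) -> list[str]:
    """Run steps in order, logging each with a UTC timestamp; stop at the first failed precondition."""
    log: list[str] = []

    def record(message: str) -> None:
        log.append(f"{datetime.now(timezone.utc).isoformat()} {message}")

    for step in steps:
        if not step.precondition():
            record(f"PAUSED before '{step.name}': precondition failed")
            break  # hand control back to a human instead of pressing on
        record(f"RAN '{step.name}': {step.action()}")
    return log

# Hypothetical reading; a real executor would query the database for this.
replica_lag_seconds = 42.0

steps = [
    Step("confirm primary unhealthy", lambda: True, lambda: "primary marked unhealthy"),
    Step("promote replica", lambda: replica_lag_seconds <= 30, lambda: "replica promoted"),
    Step("repoint service", lambda: True, lambda: "connection string updated"),
]

if __name__ == "__main__":
    for line in run_runbook(steps):
        print(line)
```

With the assumed lag of 42 seconds, the first step runs, the second pauses, and the third is never attempted, so the log shows exactly where a human needs to step in.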

3. Postmortem Drafting (The Memory Problem)

Postmortems are the most skipped step in incident response. They shouldn't be — they're how you prevent the next one.

What to do: After the incident resolves:

Draft a postmortem for incident INC-4721.

Include:

- Timeline from PagerDuty and Slack

- All commands executed during the incident

- The root cause analysis from Datadog

- Three action items with owners

Claude Code assembles the timeline, pulls the CLI history from the session, and generates a structured postmortem you can drop into Google Docs or Notion.

MCP servers needed: @notion/mcp-server or @google-docs/mcp-server, @pagerduty/mcp-server, @slack/mcp-server
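Assembling the timeline is essentially a merge of several time-ordered event streams. The sketch below shows that step in isolation; the `Event` type and every event in the sample data are invented for illustration, and each stream is assumed to be already sorted by time.

```python
import heapq
from datetime import datetime
from typing import NamedTuple

class Event(NamedTuple):
    at: datetime
    source: str
    text: str

# Invented sample data; a real run would pull these from PagerDuty, Slack, and the session.
pagerduty_events = [
    Event(datetime(2024, 1, 1, 2, 13), "pagerduty", "alert triggered"),
    Event(datetime(2024, 1, 1, 2, 41), "pagerduty", "alert resolved"),
]
slack_events = [
    Event(datetime(2024, 1, 1, 2, 16), "slack", "on-call joins #incidents"),
    Event(datetime(2024, 1, 1, 2, 30), "slack", "suspect deploy identified"),
]
shell_events = [
    Event(datetime(2024, 1, 1, 2, 34), "shell", "kubectl rollout undo deployment/checkout-service"),
]

def build_timeline(*streams: list[Event]) -> list[Event]:
    # heapq.merge interleaves already-sorted inputs into one sorted sequence.
    return list(heapq.merge(*streams, key=lambda event: event.at))

def render(timeline: list[Event]) -> str:
    return "\n".join(f"{e.at:%H:%M} [{e.source}] {e.text}" for e in timeline)

if __name__ == "__main__":
    print(render(build_timeline(pagerduty_events, slack_events, shell_events)))
```

Keeping the source tag on every line makes it easy to see in the draft which claims come from the pager, which from chat, and which from commands that were actually run.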

4. SLO Investigation (The Dashboard Problem)

When an SLO burns, you need to know why, and fast. You don't have time to build a new dashboard.

What to do:

Investigate why the checkout-service error budget is 60% depleted.

Check:

- SLO definition in Datadog

- Recent deploys correlated with burn rate

- Service dependencies that had incidents in the last 7 days

Claude Code cross-references SLO burn rate with deployment cadence and dependency health, surfacing the most likely contributor in seconds.

MCP servers needed: @datadog/mcp-server, @github/mcp-server, service-catalog MCP server
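For readers who want the arithmetic behind a figure like "60% depleted", here is a small worked example. It assumes a request-based SLO where `total_requests` is the volume counted over the SLO window; the 99.9% target and the request counts are made-up numbers, not values from the playbook.

```python
def budget_consumed(slo_target: float, total_requests: int, failed_requests: int) -> float:
    """Fraction of the error budget already spent (1.0 means fully spent)."""
    allowed_failures = (1.0 - slo_target) * total_requests
    return failed_requests / allowed_failures

def burn_rate(slo_target: float, observed_error_rate: float) -> float:
    """How many times faster than 'exactly on budget' errors are arriving."""
    return observed_error_rate / (1.0 - slo_target)

if __name__ == "__main__":
    # Assumed numbers: 99.9% target, 10M requests in the window, 6,000 failures.
    consumed = budget_consumed(0.999, 10_000_000, 6_000)
    print(f"budget consumed: {consumed:.0%}")            # budget consumed: 60%
    # Assumed short-window error rate of 0.5% against a 0.1% allowance.
    print(f"burn rate: {burn_rate(0.999, 0.005):.1f}x")  # burn rate: 5.0x
```

A burn rate well above 1 in a short window that starts right after a deploy is the kind of signal the investigation prompt is asking Claude Code to look for.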

5. On-Call Handoffs (The Knowledge Loss Problem)

The worst feeling in on-call: taking over from someone who left a Slack message that says "still investigating."

What to do: At shift change:

Summarize the current state of all open incidents.

For each incident:

- What was tried (with commands executed)

- What was ruled out

- What needs immediate attention

- Links to relevant dashboards and logs

Claude Code generates a handoff document that captures the state of play, not just the state of mind.

MCP servers needed: @pagerduty/mcp-server, @slack/mcp-server, @notion/mcp-server
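One way to picture the handoff document is as a fixed structure with one slot per question in the prompt. The sketch below is a hypothetical shape, not a format the playbook prescribes; the `IncidentState` fields simply mirror the four bullets above, and the sample content is invented.

```python
from dataclasses import dataclass, field

@dataclass
class IncidentState:
    incident_id: str
    tried: list[str] = field(default_factory=list)
    ruled_out: list[str] = field(default_factory=list)
    needs_attention: list[str] = field(default_factory=list)
    links: list[str] = field(default_factory=list)

def render_handoff(incidents: list[IncidentState]) -> str:
    """Render every open incident with the same four sections, empty or not."""
    sections = []
    for inc in incidents:
        lines = [f"Incident {inc.incident_id}"]
        for title, items in (
            ("What was tried", inc.tried),
            ("What was ruled out", inc.ruled_out),
            ("Needs immediate attention", inc.needs_attention),
            ("Links", inc.links),
        ):
            lines.append(f"{title}:")
            # An empty section is printed explicitly so gaps are visible to the next engineer.
            lines.extend(f"  - {item}" for item in (items or ["(nothing recorded)"]))
        sections.append("\n".join(lines))
    return "\n\n".join(sections)

if __name__ == "__main__":
    # Invented example content for illustration only.
    print(render_handoff([
        IncidentState(
            "INC-4721",
            tried=["restarted checkout-service pods"],
            ruled_out=["database failover"],
        )
    ]))
```

Printing empty sections on purpose is the design choice here: "still investigating" turns into a visible list of what has not been written down yet.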

The Real Bottleneck: It's Not the Model, It's the Runtime

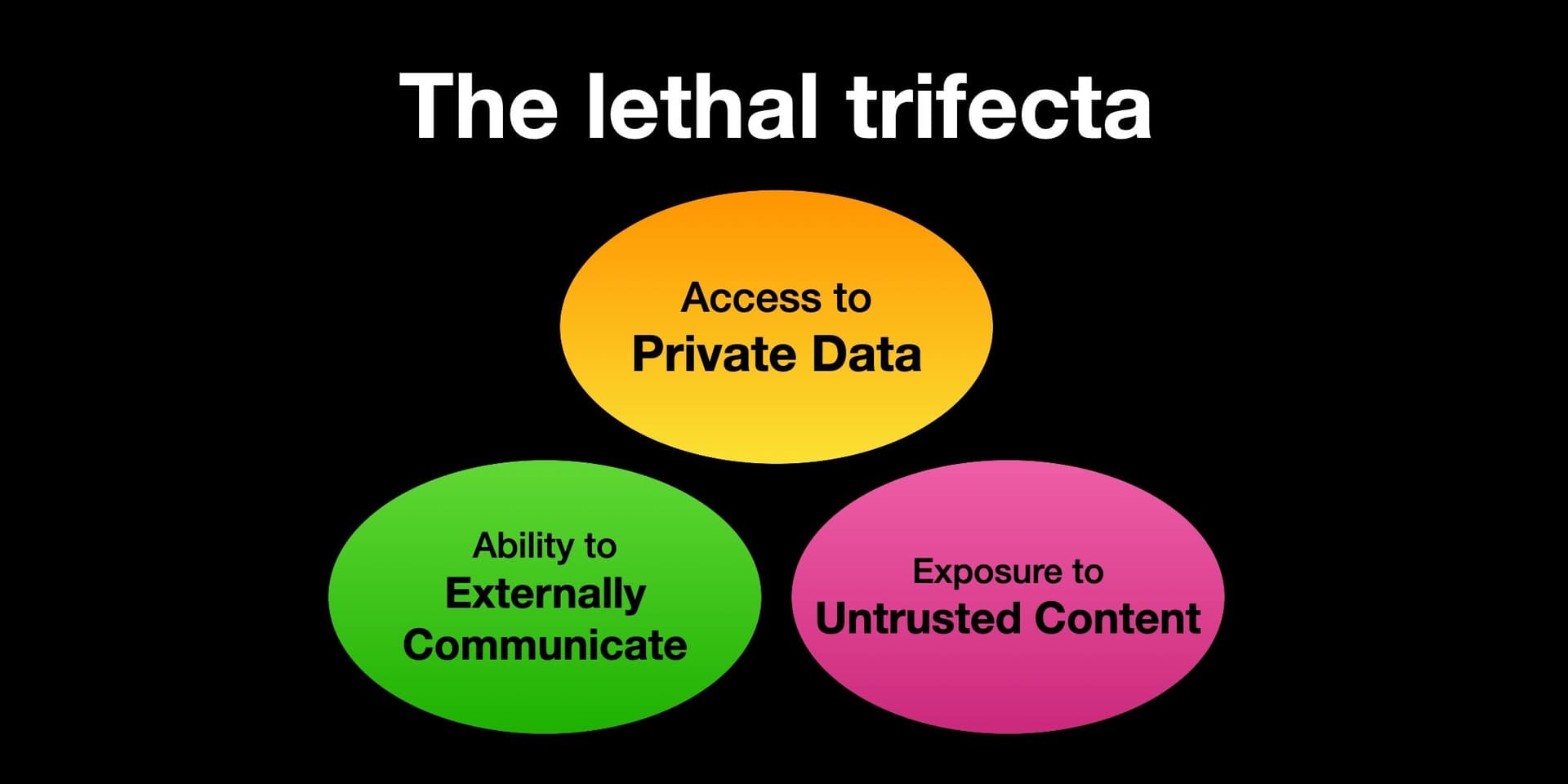

The MCP servers for most of these SaaS tools already exist. The problem is that when every engineer wires their own connection, you inherit:

- Inconsistent authorization — one engineer uses a personal token, another uses a service account

- Over-scoped credentials — the token can delete production databases even though the runbook only reads

- No audit trail — who ran what command against which system?

The gap is an MCP runtime, not a model. Managed auth, hosted compute, tool-level governance, persistent audit logs. Until something provides all four, outer-loop AI stays a party trick.

Arcade.dev positions itself as that runtime — an MCP runtime with a gateway inside it. But the pattern is what matters: you need centralized control over how Claude Code connects to production.
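As a rough illustration of what "centralized control" means in code, the sketch below puts a single chokepoint in front of every tool call: it checks a per-principal allowlist and writes an audit record whether or not the call is permitted. This is a toy model under stated assumptions, not Arcade.dev's design or any real gateway's API; `ToolGateway`, `ScopeError`, the tool names, and the two handler functions are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable

class ScopeError(PermissionError):
    """Raised when a principal calls a tool outside its granted scope."""

@dataclass
class ToolGateway:
    grants: dict[str, set[str]]  # principal -> tool names it may call
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def call(self, principal: str, tool: str, handler: Callable[..., Any], **kwargs: Any) -> Any:
        allowed = tool in self.grants.get(principal, set())
        # Record the attempt before doing anything, so denied calls are audited too.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "principal": principal,
            "tool": tool,
            "args": kwargs,
            "allowed": allowed,
        })
        if not allowed:
            raise ScopeError(f"{principal} is not granted {tool}")
        return handler(**kwargs)

# Hypothetical tools: one read-only, one destructive.
def read_replica_lag(cluster: str) -> float:
    return 4.2

def drop_database(name: str) -> str:
    return f"dropped {name}"

# The on-call agent is granted the read-only tool and nothing else.
gateway = ToolGateway(grants={"oncall-agent": {"db.read_replica_lag"}})

if __name__ == "__main__":
    print(gateway.call("oncall-agent", "db.read_replica_lag", read_replica_lag, cluster="checkout"))
    try:
        gateway.call("oncall-agent", "db.drop_database", drop_database, name="orders")
    except ScopeError as err:
        print(f"denied: {err}")
    print(len(gateway.audit_log), "audit entries")
```

The read succeeds, the destructive call is refused, and both attempts land in the log, which covers the over-scoping and audit-trail gaps from the list above in one place.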

Try It Now

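Start small. Pick one workflow (incident triage is the easiest) and wire up the MCP servers for PagerDuty, Datadog, Slack, and GitHub. The servers themselves are registered through Claude Code's MCP configuration (for example with `claude mcp add` or a project-level `.mcp.json`); CLAUDE.md is where you tell Claude Code what each one is for. Add a section like this to your CLAUDE.md: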

## On-Call MCP Servers

- @pagerduty/mcp-server: Incident context, escalation policies

- @datadog/mcp-server: Metrics, logs, dashboards

- @slack/mcp-server: Channel history, message threads

- @github/mcp-server: Deploy history, commit SHAs

Then run your first triage prompt. You'll never go back to the dashboard shuffle.
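Claude Code can read project-scoped MCP server definitions from a `.mcp.json` file with a top-level `mcpServers` map. The sketch below generates such a file's contents for the four servers named in this article. Treat it as a starting point under assumptions: the package names are copied from the article and are not verified against any registry, launching them with `npx -y` is a guess, and credentials are left out entirely.

```python
import json

# Package names as given in this article; unverified, check each vendor's docs.
SERVER_PACKAGES = {
    "pagerduty": "@pagerduty/mcp-server",
    "datadog": "@datadog/mcp-server",
    "slack": "@slack/mcp-server",
    "github": "@github/mcp-server",
}

def build_mcp_config(packages: dict[str, str]) -> dict:
    """Build a .mcp.json-style mapping with one stdio server entry per package."""
    return {
        "mcpServers": {
            # Assumption: each server can be started with `npx -y <package>`.
            name: {"command": "npx", "args": ["-y", package]}
            for name, package in packages.items()
        }
    }

if __name__ == "__main__":
    # Print rather than write, so nothing on disk changes until you have reviewed it.
    print(json.dumps(build_mcp_config(SERVER_PACKAGES), indent=2))
```

Each server will still need its own credentials, and how those are scoped is exactly the runtime question raised in the previous section.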

gentic.news Analysis

This playbook from Arcade.dev arrives at a moment when Claude Code's ecosystem is expanding rapidly. We recently covered the AWS Bedrock MCP tools (April 22) that give Claude Code native access to AWS infrastructure — a natural complement to the SRE workflows described here. The Playwright MCP Server (April 21) also fits: you could extend these workflows to include automated rollback testing.

What's notable is how this aligns with the broader trend of Claude Code moving beyond code generation into operational control. With Claude Code appearing in 56 articles this week (total: 631), the conversation is shifting from "can it write code?" to "can it run production?"

The critical missing piece — the MCP runtime — is where we expect to see startups and cloud providers rush in. AWS Bedrock's MCP tools already provide some of the auth and compute layer. Anthropic's own Claude Agent framework (announced recently) could evolve to fill the runtime gap. Watch this space.

For Claude Code users: the fastest path to value is to wire up one MCP server per workflow this week. Don't wait for the perfect runtime. A working triage bot that saves you 5 minutes per incident pays for itself in one night.