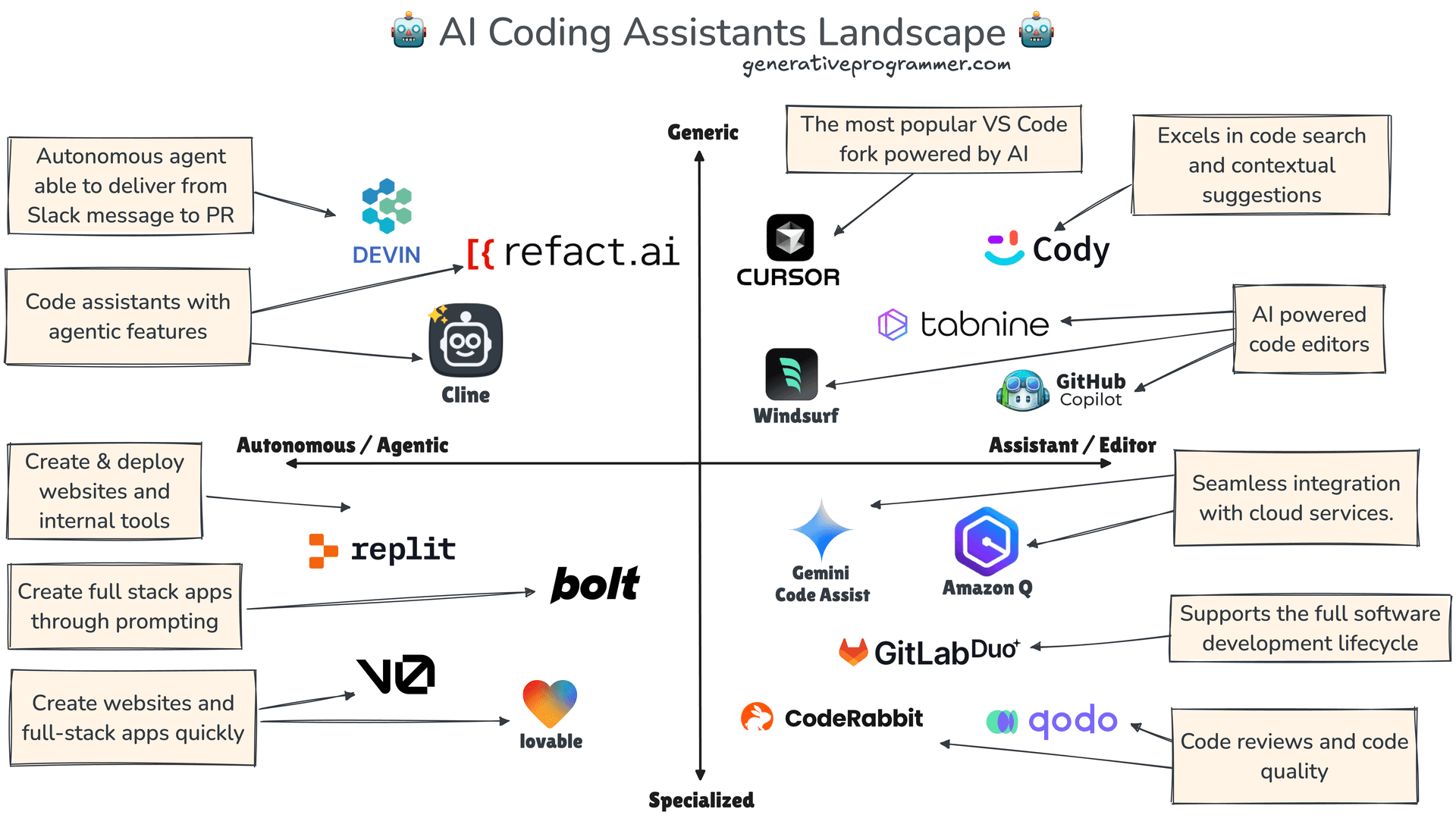

AI coding assistants like GitHub Copilot, Cursor, and Claude Code are proficient at generating functional logic and boilerplate. However, they often fail to maintain visual design consistency: they break color palettes, ignore spacing rules, and forget the rationale behind design decisions like button radii. This gap between logic and aesthetics has been a major bottleneck in fully automated front-end development.

Google has proposed a solution: Design.md. It's a single, structured file that lives in a code repository, acting as a persistent "design brain" for AI agents.

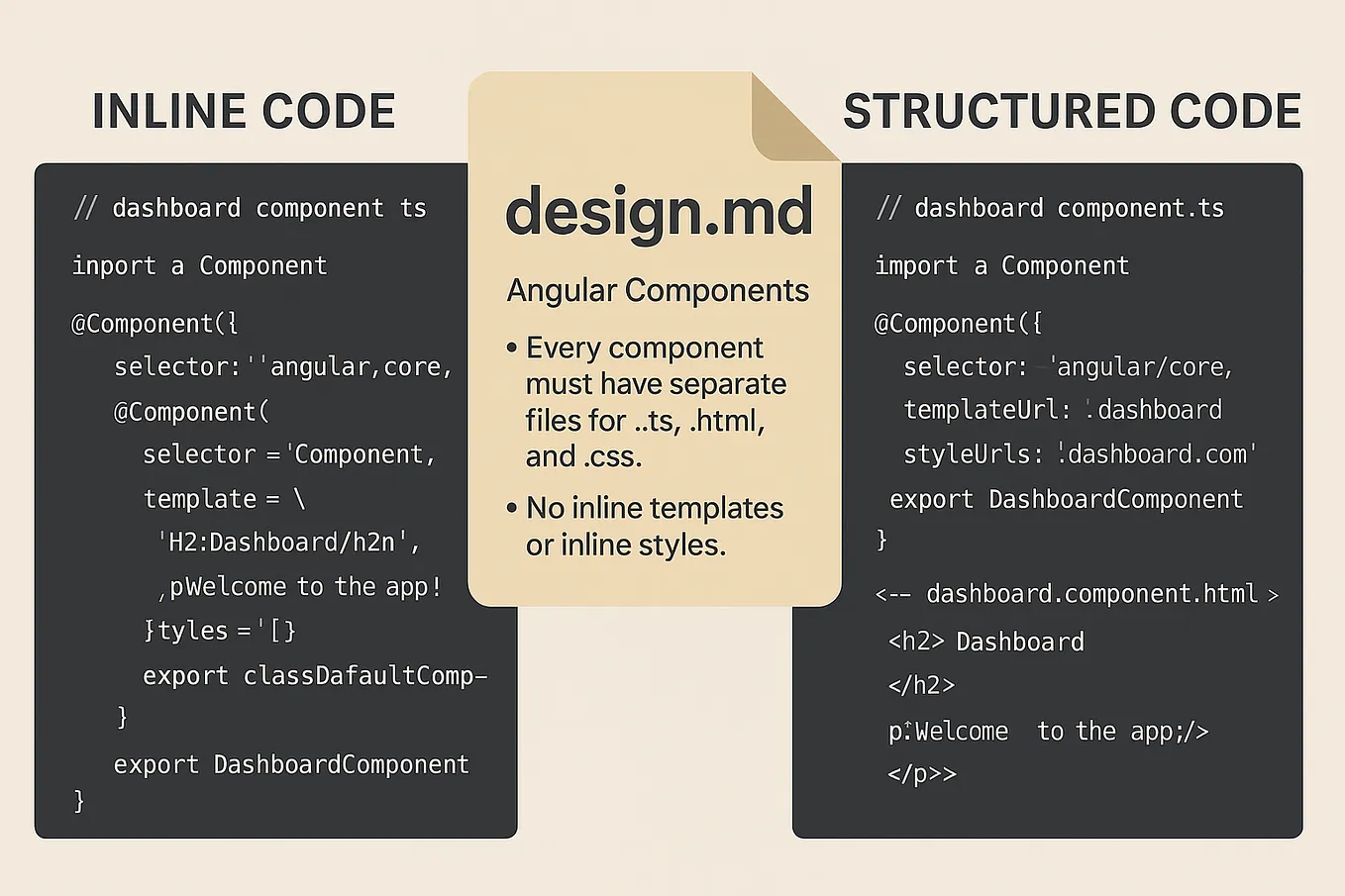

What's New: A Design Specification File for Machines

Design.md is a hybrid file format designed to be both human-readable and machine-parsable. Its core purpose is to codify a project's visual design system in a way that AI agents can reliably consume and adhere to.

The file has two main components:

- YAML Front Matter: This section holds the exact, structured design tokens: HEX color values, typography scales (font families, sizes, weights), spacing units (padding, margins, gaps), and border radii. These are the immutable constants of the design system.

- Markdown Prose: This section explains the "why" behind the design decisions. It documents the design rationale, accessibility considerations (like WCAG contrast targets), and component usage guidelines. This provides the context that agents typically lack.
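To make the two-part structure concrete, here is a minimal Python sketch that splits a Design.md-style file into front matter and prose and parses the tokens. It assumes `---` delimiters (the usual Markdown front-matter convention) and only a tiny YAML subset (nested `key: value` pairs with space indentation). The token names (`colors.primary`, `radius.button`) are hypothetical, not taken from Google's specification, and a real tool would use a full YAML parser.

```python
def split_front_matter(text: str) -> tuple[str, str]:
    """Return (front_matter, prose); front matter is empty if absent."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return "", text
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            return "\n".join(lines[1:i]), "\n".join(lines[i + 1:]).strip()
    raise ValueError("front matter is missing its closing '---'")


def parse_simple_yaml(block: str) -> dict:
    """Parse nested 'key: value' lines (a tiny YAML subset) into dicts."""
    root: dict = {}
    stack = [(-1, root)]  # (indentation, mapping) pairs, innermost last
    for raw in block.splitlines():
        if not raw.strip() or raw.lstrip().startswith("#"):
            continue  # skip blank lines and comment lines
        indent = len(raw) - len(raw.lstrip(" "))
        key, _, value = raw.strip().partition(":")
        value = value.strip().strip("\"'")
        # Pop back to the mapping that owns this indentation level.
        while stack[-1][0] >= indent:
            stack.pop()
        parent = stack[-1][1]
        if value:
            parent[key.strip()] = value
        else:
            child: dict = {}
            parent[key.strip()] = child
            stack.append((indent, child))
    return root


# Hex values are quoted because an unquoted " #" starts a YAML comment.
doc = """---
colors:
  primary: "#0B57D0"
  surface: "#FFFFFF"
radius:
  button: 8px
---
# Rationale
Buttons use an 8px radius to match card corners.
"""

front_matter, prose = split_front_matter(doc)
tokens = parse_simple_yaml(front_matter)
print(tokens["colors"]["primary"])  # #0B57D0
print(prose.splitlines()[0])        # # Rationale
```

Keeping the exact values in structured front matter and the reasoning in prose means a tool can read the tokens mechanically while the agent still gets the "why" as plain text.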

The workflow is simple: an AI agent reads the Design.md file once at the start of a coding session and references it throughout, eliminating guesswork about visual rules.

Technical Details and Developer Workflow

Beyond being a static reference, Design.md is built for a development pipeline. Google's proposal includes tooling to:

- Lint It: Automatically catch broken token references and WCAG contrast failures before code is committed.

- Diff Versions: Compare two versions of a Design.md file to catch visual regressions during design system updates.

- Export: Generate a Tailwind configuration or Design Tokens Community Group (DTCG) format JSON with a single command, bridging the gap between design and development environments.
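The linting step can be approximated in a few lines. This sketch is not Google's linter: it assumes a hypothetical `{group.name}` reference syntax for component tokens, and it applies the WCAG 2.x relative-luminance and contrast-ratio formulas with the 4.5:1 AA threshold for normal text.

```python
import re

# Hypothetical tokens; component values point at colors via "{group.name}".
TOKENS = {
    "colors": {
        "primary": "#0B57D0",
        "on-primary": "#FFFFFF",
        "muted": "#BDBDBD",
        "surface": "#FFFFFF",
    },
    "components": {
        "button": {"background": "{colors.primary}", "text": "{colors.on-primary}"},
        "caption": {"background": "{colors.surface}", "text": "{colors.muted}"},
        "link": {"background": "{colors.surface}", "text": "{colors.accent}"},
    },
}

REF = re.compile(r"^\{([\w.-]+)\}$")  # matches e.g. "{colors.primary}"


def relative_luminance(hex_color: str) -> float:
    """WCAG 2.x relative luminance of a #RRGGBB color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) / 255 for i in (0, 2, 4))

    def linearize(c: float) -> float:
        # For 8-bit channels, the 0.04045 and older 0.03928 cutoffs agree.
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    return 0.2126 * linearize(r) + 0.7152 * linearize(g) + 0.0722 * linearize(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1 to 21."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)


def resolve(tokens: dict, path: str):
    """Follow a dotted path; return None if any segment is missing."""
    node = tokens
    for part in path.split("."):
        if not isinstance(node, dict) or part not in node:
            return None
        node = node[part]
    return node if isinstance(node, str) else None


def lint(tokens: dict, min_ratio: float = 4.5) -> list[str]:
    """Report broken references and text/background pairs below min_ratio."""
    problems = []
    for name, props in tokens.get("components", {}).items():
        colors = {}
        for prop, value in props.items():
            match = REF.match(value)
            if match:
                target = resolve(tokens, match.group(1))
                if target is None:
                    problems.append(f"{name}.{prop}: broken reference {value}")
                    continue
                value = target
            colors[prop] = value
        # Check contrast only when both colors resolved.
        if "text" in colors and "background" in colors:
            ratio = contrast_ratio(colors["text"], colors["background"])
            if ratio < min_ratio:
                problems.append(f"{name}: contrast {ratio:.2f}:1 is below {min_ratio}:1")
    return problems


problems = lint(TOKENS)
for problem in problems:
    print(problem)
```

Here the caption's light gray text on white fails the contrast check and the link points at a token that does not exist, which are exactly the two failure classes the proposal says its linter should catch before commit.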

The file sits directly in the repository (e.g., at the root or in a /docs folder). The intent is for agents to pick it up automatically, the same way they already read a README.md or package.json file. This integrates design governance directly into the existing AI-assisted coding workflow.
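As an illustration of that lookup, a tool could check the two locations the article mentions. The search order, the exact filename casing, and the `find_design_md` name are assumptions; how real agents discover the file is up to each tool.

```python
from __future__ import annotations

import tempfile
from pathlib import Path

# Hypothetical search order: repository root first, then a docs/ folder.
CANDIDATES = ("Design.md", "docs/Design.md")


def find_design_md(repo_root: str | Path) -> Path | None:
    """Return the first Design.md found under repo_root, or None."""
    root = Path(repo_root)
    for relative in CANDIDATES:
        path = root / relative
        if path.is_file():
            return path
    return None


# Usage: a throwaway repo with the file in docs/, and an empty one.
with tempfile.TemporaryDirectory() as repo:
    (Path(repo) / "docs").mkdir()
    (Path(repo) / "docs" / "Design.md").write_text("---\n---\n", encoding="utf-8")
    found = find_design_md(repo)
    found_relative = found.relative_to(repo).as_posix() if found else None

with tempfile.TemporaryDirectory() as empty_repo:
    missing = find_design_md(empty_repo)

print(found_relative, missing)
```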

How It Compares: From Ad-Hoc Prompts to Systematic Governance

Currently, developers using AI coding assistants have two flawed options for enforcing design:

- Verbose, In-Prompt Instructions: Repeating design rules in every chat prompt is inefficient, prone to omission, and consumes valuable context window space.

- Post-Generation Review & Fix: Manually correcting AI-generated UI code defeats the purpose of automation and is a significant time sink.

Design.md introduces a third, systematic approach: declarative design governance. It moves design rules out of ephemeral chat context and into version-controlled, lintable, project-level configuration, much as ESLint configuration files codify code-style rules.

| Approach | Consistency | Context efficiency | Enforcement |
|---|---|---|---|
| Design.md file | High (single source of truth) | High (read once) | Automated, version-controlled |
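Because the tokens are plain, version-controlled data, the version diffing listed among the proposed tooling can be sketched by flattening both versions into dotted paths and comparing them. This is an illustrative approach, not Google's diff tool, and the token names are hypothetical.

```python
def flatten(tokens: dict, prefix: str = "") -> dict:
    """Turn nested groups into {"colors.primary": "#0B57D0", ...}."""
    flat = {}
    for key, value in tokens.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def diff_tokens(old: dict, new: dict) -> dict:
    """Report tokens added, removed, or changed between two versions."""
    a, b = flatten(old), flatten(new)
    return {
        "added": sorted(b.keys() - a.keys()),
        "removed": sorted(a.keys() - b.keys()),
        "changed": {k: (a[k], b[k]) for k in sorted(a.keys() & b.keys()) if a[k] != b[k]},
    }


old = {"colors": {"primary": "#0B57D0", "accent": "#E8710A"}, "radius": {"button": "8px"}}
new = {"colors": {"primary": "#0B57D0"}, "radius": {"button": "20px"}, "spacing": {"md": "16px"}}

report = diff_tokens(old, new)
print(report)
```

A removed token is the interesting case for regressions: any component or generated code that still refers to `colors.accent` would break after the update.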

What to Watch: Adoption and Agent Integration

The success of Design.md hinges on two factors:

- Adoption by AI Agent Developers: The makers of tools like Cursor and Windsurf, along with teams such as GitHub Next, need to build native support for reading and prioritizing rules from Design.md files. Without this, it remains a manual reference.

- Design Toolchain Integration: The value multiplies if design tools like Figma can export to or sync with Design.md, creating a seamless bridge from design mockups to AI-enforced code.
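One plausible bridge is the DTCG JSON format that the proposed export tooling targets. The sketch below converts nested tokens into DTCG-style groups in which each token is an object with a `$value` and an optional `$type`. It uses the older plain-string value form and only tags six-digit hex strings as `color`; value encodings for colors and dimensions have changed between DTCG versions, so check the current specification before relying on this shape.

```python
import json
import re

HEX_COLOR = re.compile(r"^#[0-9A-Fa-f]{6}$")


def to_dtcg(tokens: dict) -> dict:
    """Convert nested tokens into DTCG-style groups of {"$value", "$type"} objects."""
    out = {}
    for key, value in tokens.items():
        if isinstance(value, dict):
            out[key] = to_dtcg(value)  # a nested mapping becomes a DTCG group
        else:
            token = {"$value": value}
            if isinstance(value, str) and HEX_COLOR.match(value):
                token["$type"] = "color"
            out[key] = token
    return out


tokens = {"colors": {"primary": "#0B57D0", "surface": "#FFFFFF"}, "radius": {"button": "8px"}}
dtcg = to_dtcg(tokens)
print(json.dumps(dtcg, indent=2))
```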

The initial proposal is lightweight and open, suggesting Google may be aiming to establish a de facto standard rather than a proprietary product. If widely adopted, it could significantly increase the reliability and utility of AI agents for front-end and full-stack development tasks.

gentic.news Analysis

This move by Google is a direct and pragmatic response to a well-documented pain point in the AI-assisted development lifecycle. It follows a clear trend we've covered where the industry is shifting from building more capable generic models to creating scaffolding and interfaces that make existing models more reliable and usable in specific domains. For instance, our coverage of Smol Agents highlighted the move towards smaller, more constrained models for deterministic tasks. Design.md applies a similar principle of constraint, directed not at the model but at its operating environment, to improve output quality.

This aligns with Google's broader strategy to own the infrastructure layer of AI development. Rather than just competing on model scale (Gemini), they are investing in the tools and protocols that organize how models interact with the real world. Think of this as complementary to their Gemini Code Assist and Project IDX initiatives, which aim to deeply integrate AI into the entire developer environment. If Design.md gains traction, it could become a subtle but powerful lock-in mechanism, establishing a Google-proposed standard for a critical part of the AI dev stack.

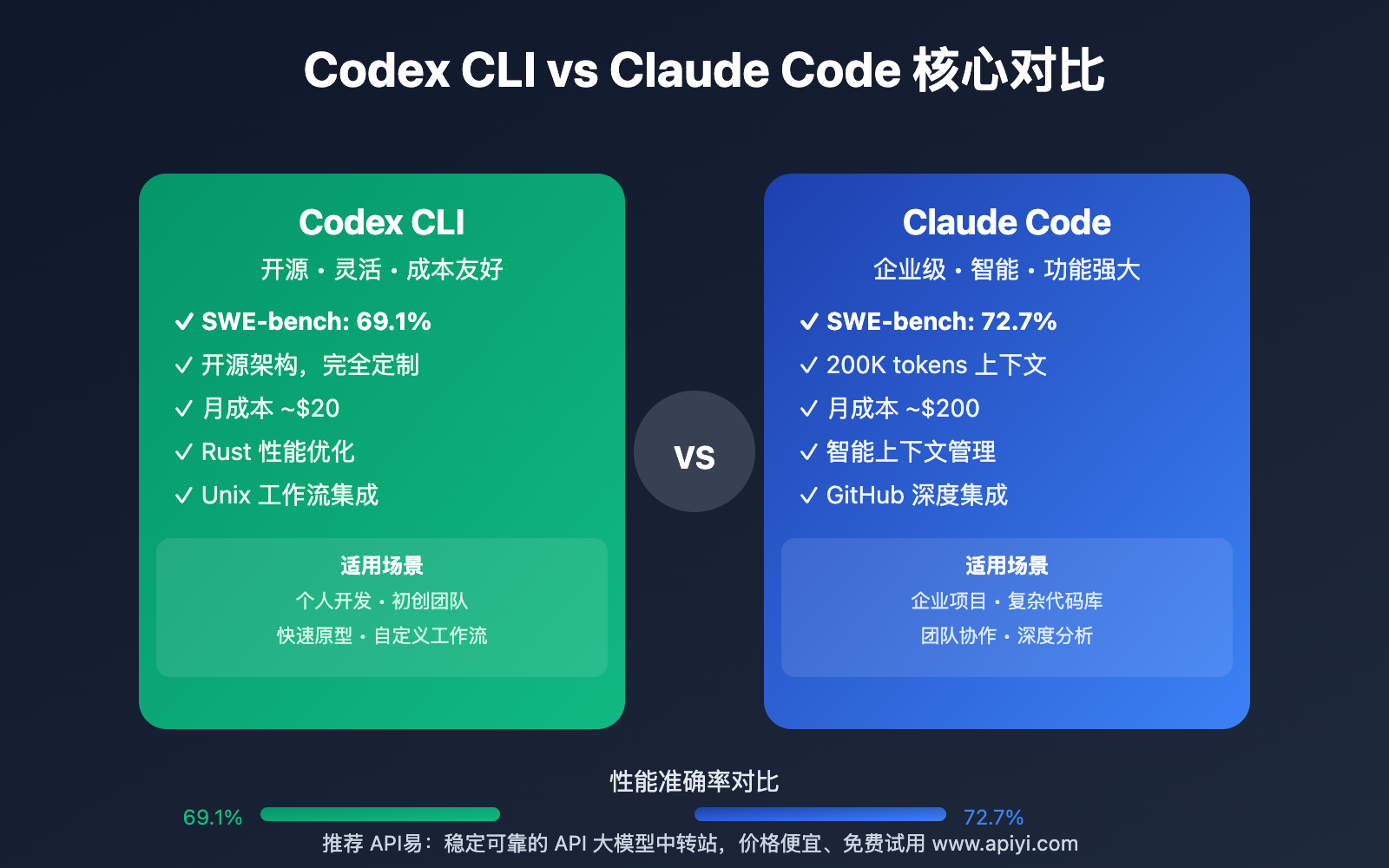

Furthermore, this addresses a key limitation highlighted in our analysis of SWE-bench results, where even top-performing models struggled with holistic tasks requiring consistent application of non-functional requirements like styling. Design.md effectively externalizes that requirement into a verifiable file, turning a "reasoning" problem for the LLM into a simpler "lookup and apply" problem. This is a smart engineering workaround that delivers immediate practical value while the research community continues to work on improving long-context reasoning and consistency in foundational models.
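The "lookup and apply" framing also makes the output checkable. As a minimal sketch, assuming a hypothetical palette taken from the front matter, generated CSS can be scanned for hex colors that are not in the palette. The regular expression is deliberately simple: it ignores 8-digit hex with alpha and `rgb()` values, and it could misfire on ID selectors that happen to look like hex.

```python
import re

# Hypothetical palette, e.g. the color values from Design.md front matter.
PALETTE = {"#0B57D0", "#FFFFFF", "#1F1F1F"}

# 6- or 3-digit hex literals; 8-digit (alpha) hex is deliberately not handled.
HEX_LITERAL = re.compile(r"#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b")


def off_palette_colors(css: str, palette: set[str]) -> list[str]:
    """Return hex colors in css that are not in the palette."""
    allowed = {color.upper() for color in palette}
    found = []
    for match in HEX_LITERAL.finditer(css):
        color = match.group(0).upper()
        if len(color) == 4:  # expand shorthand like #FFF to #FFFFFF
            color = "#" + "".join(ch * 2 for ch in color[1:])
        if color not in allowed:
            found.append(match.group(0))
    return found


generated_css = """
.button { background: #0b57d0; color: #fff; border-radius: 8px; }
.card { background: #fafafa; color: #1F1F1F; }
"""
violations = off_palette_colors(generated_css, PALETTE)
print(violations)  # ['#fafafa']
```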

Frequently Asked Questions

What is a design token?

A design token is a name-value pair that stores a fundamental visual design attribute, such as a color (--color-primary: #3b82f6), a spacing unit (--spacing-md: 1rem), or a font size (--text-lg: 1.125rem). They are the atomic building blocks of a design system, and Design.md uses them to give AI agents precise, unchanging values to use.
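Nested tokens map naturally onto the CSS custom properties used in the examples above. A minimal sketch, assuming a nested dictionary of tokens (such as one parsed from the front matter) and joining key paths with hyphens; the function name is hypothetical.

```python
def to_css_variables(tokens: dict, selector: str = ":root") -> str:
    """Render nested tokens as CSS custom properties, joining key paths with '-'."""
    declarations = []

    def walk(node: dict, path: list[str]) -> None:
        for key, value in node.items():
            if isinstance(value, dict):
                walk(value, path + [key])
            else:
                declarations.append(f"  --{'-'.join(path + [key])}: {value};")

    walk(tokens, [])
    return selector + " {\n" + "\n".join(declarations) + "\n}"


tokens = {
    "color": {"primary": "#3b82f6"},
    "spacing": {"md": "1rem"},
    "text": {"lg": "1.125rem"},
}
css = to_css_variables(tokens)
print(css)
```

This prints a `:root` block declaring `--color-primary`, `--spacing-md`, and `--text-lg`, so generated components can reference `var(--color-primary)` instead of repeating raw values.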

How is Design.md different from a Figma file or a style guide?

A Figma file or PDF style guide is designed for human interpretation. An AI agent cannot reliably parse a complex Figma frame to extract exact tokens and rules. Design.md is a machine-first, plain-text format with a strict structure (YAML + Markdown) that an AI can read accurately and completely, leaving far less room for ambiguity in the design rules.

Do I need a specific AI agent to use Design.md?

Currently, Design.md is a proposal and specification. For it to work automatically, your AI coding assistant (e.g., Cursor, Windsurf) needs to build in support for it. However, even without native support, a developer can manually copy rules from the Design.md file into their chat prompts, which still centralizes the information.
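For that manual route, a small helper can render the tokens as text to paste into a prompt. The preamble wording and the `prompt_preamble` name are hypothetical; the point is that the pasted text is generated from the single Design.md source rather than retyped.

```python
def prompt_preamble(tokens: dict, rationale: str = "") -> str:
    """Render tokens (and optional rationale) as text to paste into a chat prompt."""
    lines = ["Use only these design tokens; do not introduce new values:"]

    def walk(node: dict, path: list[str]) -> None:
        for key, value in node.items():
            if isinstance(value, dict):
                walk(value, path + [key])
            else:
                lines.append(f"- {'.'.join(path + [key])} = {value}")

    walk(tokens, [])
    if rationale:
        lines += ["", "Design rationale:", rationale.strip()]
    return "\n".join(lines)


tokens = {"colors": {"primary": "#0B57D0"}, "radius": {"button": "8px"}}
preamble = prompt_preamble(tokens, "Buttons use an 8px radius to match card corners.")
print(preamble)
```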

Can Design.md handle complex responsive design or dark mode?

The YAML front matter can be structured to define design tokens for different modes or breakpoints (e.g., colors.light.primary, colors.dark.primary, spacing.mobile.base, spacing.desktop.base). The accompanying markdown prose would then explain the logic for when to apply which set of tokens, providing the necessary context to the AI agent.
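A sketch of that approach, assuming the `colors.light`/`colors.dark` and `spacing.mobile`/`spacing.desktop` layout described above: one function selects a variant per group, and another emits the light values as the default with the dark values under a `prefers-color-scheme` media query. The function names and token values are illustrative.

```python
TOKENS = {
    "colors": {
        "light": {"primary": "#0B57D0", "surface": "#FFFFFF"},
        "dark": {"primary": "#A8C7FA", "surface": "#1F1F1F"},
    },
    "spacing": {"mobile": {"base": "12px"}, "desktop": {"base": "16px"}},
    "radius": {"button": "8px"},  # not split by mode or breakpoint
}


def resolve(tokens: dict, **selected: str) -> dict:
    """Pick one variant per group, e.g. resolve(t, colors="dark", spacing="mobile")."""
    resolved = {}
    for group, values in tokens.items():
        choice = selected.get(group)
        if choice is None:
            resolved[group] = values  # no variant requested for this group
        elif choice in values:
            resolved[group] = values[choice]
        else:
            raise KeyError(f"{group} has no variant {choice!r}")
    return resolved


def color_scheme_css(colors: dict) -> str:
    """Light values as the default, dark values under prefers-color-scheme."""
    def declarations(values: dict, indent: str) -> str:
        return "\n".join(f"{indent}--color-{k}: {v};" for k, v in values.items())

    return (
        ":root {\n" + declarations(colors["light"], "  ") + "\n}\n"
        "@media (prefers-color-scheme: dark) {\n"
        "  :root {\n" + declarations(colors["dark"], "    ") + "\n  }\n}"
    )


mobile_dark = resolve(TOKENS, colors="dark", spacing="mobile")
print(mobile_dark)
print(color_scheme_css(TOKENS["colors"]))
```

The prose section would then tell the agent which of these outputs to use, for example to rely on the media query rather than hard-coding dark values into components.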