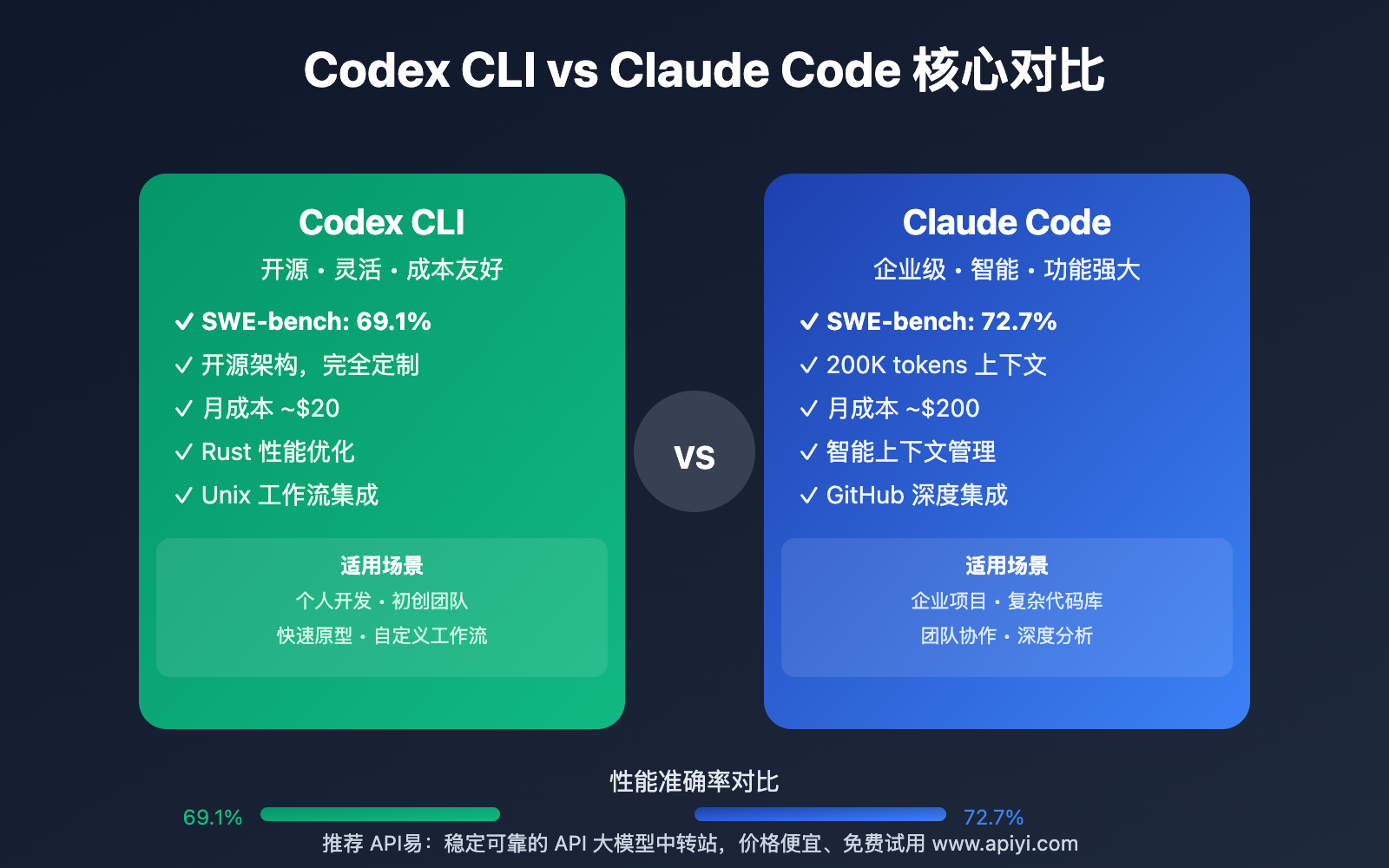

The Benchmark Landscape

Independent comparisons, like the one referenced in the Medium article, consistently place Claude Sonnet 4.6 among the top models for complex reasoning and coding tasks. While the article provides a general breakdown, the critical takeaway for developers is this: Sonnet 4.6 is a validated, high-performance engine for Claude Code. This isn't just marketing; it's data that confirms the model powering your terminal agent is competitive with the best from Google and OpenAI.

What It Means For Your Claude Code Sessions

You don't need to switch models. The benchmark validation means you can confidently rely on Sonnet 4.6's reasoning for the complex, multi-step tasks Claude Code is built for—like refactoring across files, debugging obscure errors, or designing new system architectures. Its performance in these head-to-head tests suggests it will handle the nuanced logic and context retention your work requires.

More importantly, Claude Code is built for Claude models. Using Sonnet 4.6 isn't just about raw capability; it's about native integration. Features like the underlying Model Context Protocol (MCP) architecture and the recently launched Tool Search, which defers loading MCP tool definitions until they are needed and cuts the context tokens those definitions consume by about 90%, are optimized for Anthropic's models. Trying to force another model through the same pipeline would likely sacrifice the smooth tool use and system access that make Claude Code effective.

How To Leverage This Now

Trust the Agent with Complex Tasks: Don't break down a large feature request into tiny prompts. Given Sonnet 4.6's strong reasoning score, you can present a substantial problem statement and let Claude Code plan and execute the steps. Example:

claude "Refactor the authentication module to use JWT. The current session-based logic is in `auth/legacy.py`. Update the API routes in `app/routes/` and ensure the frontend token handling in `src/auth.js` is compatible."

Optimize for Claude's Strengths: Benchmarks highlight strengths in reasoning and instruction following. In your CLAUDE.md, be direct and strategic in your instructions rather than verbose. Following Anthropic's own guidance from April 1, avoid elaborate personas: they waste tokens and don't improve output for a model of this caliber.

Double Down on MCP: Claude Code's edge is its tool-use framework. Install MCP servers for your database (mcp-server-postgres), cloud infrastructure (mcp-server-aws), or project management tools. Sonnet 4.6's ability to use these tools reliably, consistent with its strong benchmark performance, turns Claude Code from a code generator into a true system agent.
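To make the last two tips concrete, here is a minimal sketch that writes a terse CLAUDE.md and a project-scoped `.mcp.json` at the repository root. Treat everything in it as an assumption: the CLAUDE.md rules (`pytest -q`, the `migrations/` directory) are hypothetical examples for the auth refactor above, the connection string `postgresql://localhost/mydb` is a placeholder, and `@modelcontextprotocol/server-postgres` is the reference Postgres server from the MCP servers project, which may not be the same package as the `mcp-server-postgres` named above. Check the current Claude Code and MCP docs before relying on the exact file format.

```shell
# Sketch only: bootstrap project-level Claude Code config files.
# Assumptions: CLAUDE.md rules are hypothetical; the MCP package name and
# the Postgres connection string are placeholders to replace.
set -eu

# 1. A short, directive CLAUDE.md: concrete rules and commands, no persona.
cat > CLAUDE.md <<'EOF'
# Project notes for Claude Code

- Backend code lives in `app/`; frontend code lives in `src/`.
- Run `pytest -q` before proposing a commit.
- Keep auth changes compatible with `auth/legacy.py` until the JWT migration is done.
- Ask before editing anything under `migrations/`.
EOF

# 2. A project-scoped MCP config registering a (placeholder) Postgres server.
cat > .mcp.json <<'EOF'
{
  "mcpServers": {
    "postgres": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-postgres", "postgresql://localhost/mydb"]
    }
  }
}
EOF

echo "Wrote CLAUDE.md and .mcp.json"
```

The script overwrites both files, so run it in a fresh project or merge the contents by hand. Claude Code's `claude mcp add` subcommand can also register servers for you; run `claude mcp add --help` to see the options your installed version supports.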

The Bottom Line

Forget the model wars. As a Claude Code user, your advantage is the synergy between a top-tier model and a purpose-built, tool-using agent. The benchmarks confirm Sonnet 4.6 has the brains. Your job is to leverage Claude Code's brawn—its direct access to your filesystem, shell, and growing MCP ecosystem—to ship faster.

gentic.news Analysis

This benchmark data reinforces a trend we've been tracking: Claude Code's rise is tied both to Anthropic's model advancements and to the open MCP architecture. The recent launch of Tool Search (April 8) directly addresses token efficiency, a constant concern when using powerful, context-hungry models like Sonnet 4.6 for complex tasks. This follows the broader architectural shift noted on March 30, when Claude Code fully embraced MCP to connect to various backends, though its primary optimization is clearly for its own family of models.

The data also highlights the competitive context. While Gemini and GPT are formidable, Claude Code's strategy isn't just raw model performance—it's deep vertical integration. The model, the agent framework, and the tool protocol are designed together. This contrasts with more generic code assistants that might swap between different backends. For developers, the lesson is to invest in learning Claude Code's native capabilities—like MCP server use and precise CLAUDE.md instructions—rather than wondering if another model might be slightly better. The holistic system is where the real productivity gain lies, as explored in our recent article on building with Claude Managed Agents.