Key Takeaways

- A new arXiv paper proposes using LLM-based 'customer digital twins' (CDTs) — agents built from individual Reddit review histories via RAG — to perform conjoint analysis.

- The CDTs predict actual user preferences with 87.73% accuracy in a computer monitor case study, offering a scalable alternative to traditional market research.

What Happened

A research paper submitted to arXiv on March 6, 2026, proposes a radical simplification of conjoint analysis — the gold-standard market research method for estimating consumer preferences. Instead of recruiting human respondents, the authors build LLM-based 'customer digital twins' (CDTs) from individual users' Reddit review histories.

In the paper's case study on computer monitors, CDTs predicted the actual preferences of real users with 87.73% accuracy and quantified trade-offs between attributes such as panel type and resolution.

Technical Details

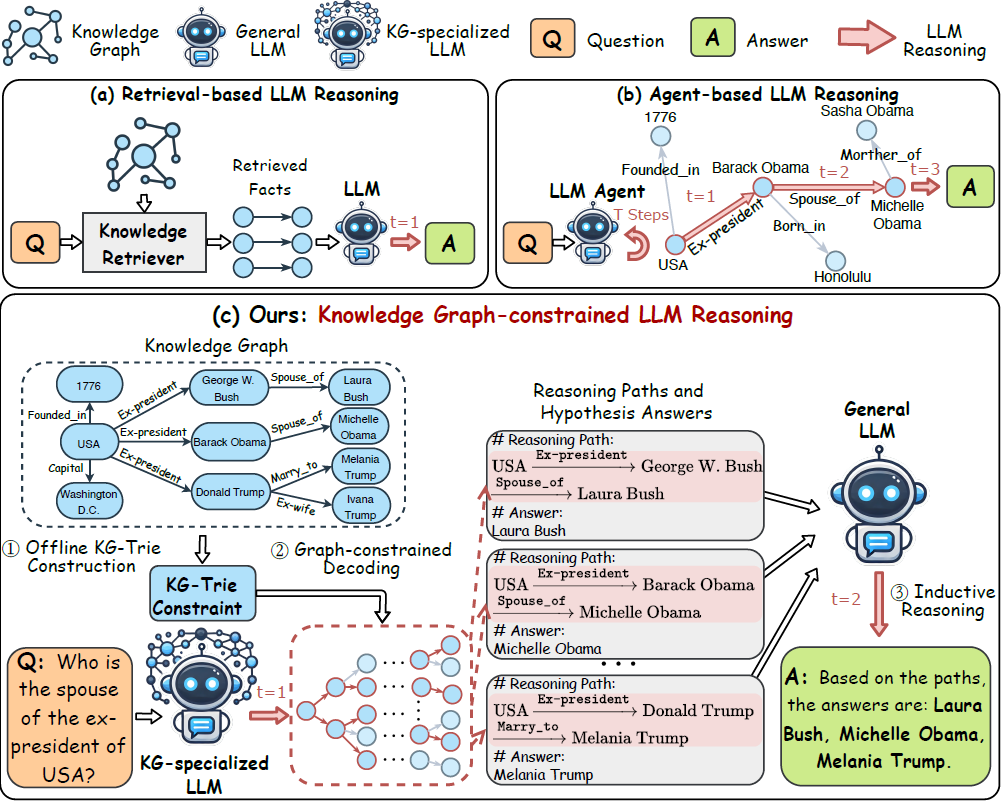

The framework operates in four steps:

- User identification: Active Reddit users with comprehensive review histories are identified.

- Vector database construction: Each user's review history is aggregated into an individualized vector database.

- Agent creation: Using retrieval-augmented generation (RAG) and prompt engineering, the system creates a customer agent that can dynamically retrieve and reason upon its specific past preferences and constraints.

- Conjoint analysis execution: CDTs perform pairwise comparison tasks on product profiles generated via fractional factorial design. The resulting choice data is analyzed via logistic regression to estimate part-worth utilities.
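The final step above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: the three binary attributes, the made-up utility rule standing in for a CDT's answers, and the hyperparameters are all assumptions. It fits a no-intercept logistic regression on the difference between the two profiles in each pair, so each fitted weight can be read as a part-worth utility.

```python
import itertools
import math

# Hypothetical binary attributes for a monitor profile (1 = level present).
ATTRIBUTES = ["ips_panel", "resolution_4k", "high_price"]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_part_worths(pairs, choices, lr=0.5, epochs=500, l2=0.01):
    """Fit a no-intercept logistic regression on profile differences.

    pairs   -- list of (profile_a, profile_b), each a tuple of 0/1 levels
    choices -- list of 1 (A chosen) or 0 (B chosen)
    """
    n_features = len(pairs[0][0])
    weights = [0.0] * n_features
    for _ in range(epochs):
        grad = [0.0] * n_features
        for (a, b), y in zip(pairs, choices):
            diff = [ai - bi for ai, bi in zip(a, b)]
            p = sigmoid(sum(w * d for w, d in zip(weights, diff)))
            for j, d in enumerate(diff):
                grad[j] += (y - p) * d
        # Gradient ascent on the L2-penalised mean log-likelihood.
        weights = [
            w + lr * (g / len(pairs) - l2 * w) for w, g in zip(weights, grad)
        ]
    return dict(zip(ATTRIBUTES, weights))


def simulated_choice(a, b):
    """Stand-in for a CDT's answer: a fixed, made-up utility rule."""
    true_utility = (1.0, 2.0, -1.5)  # assumed, for illustration only
    ua = sum(u * x for u, x in zip(true_utility, a))
    ub = sum(u * x for u, x in zip(true_utility, b))
    return 1 if ua > ub else 0


# All 8 profiles and all 28 pairs; a real study would use a reduced design.
profiles = list(itertools.product([0, 1], repeat=3))
pairs = list(itertools.combinations(profiles, 2))
choices = [simulated_choice(a, b) for a, b in pairs]
part_worths = fit_part_worths(pairs, choices)
```

In a real pipeline, `simulated_choice` would be replaced by the CDT's response to each pairwise prompt, and the small L2 penalty is only there to keep the weights finite when the choices are perfectly consistent.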

RAG is the critical enabler here. As noted in our April 22, 2026 coverage, it has been positioned as the go-to technique for dynamic, fact-heavy applications with frequently changing information. Rather than fine-tuning a model on each user, the system retrieves the relevant review context at inference time, which makes it more scalable and keeps each answer grounded in the preferences the user actually expressed.
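To make the retrieval step concrete, here is a toy sketch of how a per-user review store might feed a pairwise-choice prompt. Everything in it is an assumption for illustration: the bag-of-words "embedding" stands in for a learned embedding model and vector database, the review texts are invented, and the prompt wording is not taken from the paper. No LLM is called; the sketch stops at the assembled prompt.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words 'embedding'; a real system would use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(u, v):
    dot = sum(u[t] * v[t] for t in u if t in v)
    norm = math.sqrt(sum(c * c for c in u.values())) * math.sqrt(
        sum(c * c for c in v.values())
    )
    return dot / norm if norm else 0.0


def retrieve(reviews, query, k=2):
    """Return the k reviews most similar to the query."""
    q = embed(query)
    ranked = sorted(reviews, key=lambda r: cosine(embed(r), q), reverse=True)
    return ranked[:k]


def build_prompt(reviews, profile_a, profile_b, k=2):
    """Assemble a pairwise-choice prompt grounded in retrieved reviews."""
    context = retrieve(reviews, profile_a + " " + profile_b, k)
    lines = ["You are answering as the author of these reviews:"]
    lines += ["- " + r for r in context]
    lines += [
        "Option A: " + profile_a,
        "Option B: " + profile_b,
        "Which option would this user choose? Answer A or B.",
    ]
    return "\n".join(lines)


# Made-up review history for one hypothetical user.
user_reviews = [
    "The IPS panel on my old monitor had great colors for photo editing.",
    "Returned a TN panel monitor because the viewing angles were poor.",
    "This mechanical keyboard is loud but very satisfying to type on.",
]
prompt = build_prompt(
    user_reviews,
    "27 inch IPS panel 4K monitor",
    "27 inch TN panel 1080p monitor",
)
```

Only the two monitor reviews reach the prompt; the keyboard review shares no terms with the query and is left out, which is the behavior that keeps each twin focused on evidence relevant to the product at hand.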

Why This Matters for Retail & Luxury

Conjoint analysis is the backbone of product assortment decisions, pricing strategy, and feature prioritization at every major retailer and luxury house. But traditional conjoint studies are slow (2-4 weeks), expensive ($20k-$100k+ per study), and suffer from respondent fatigue — especially for luxury goods where target customers are hard to recruit.

LLM-based CDTs address all three pain points:

- Speed: Studies that take weeks could be run in hours.

- Cost: Marginal cost per 'respondent' drops to near-zero.

- Scale: Thousands of synthetic respondents can be queried simultaneously.

For luxury houses like LVMH, Kering, or Richemont, the implications are particularly interesting. Their customers are notoriously difficult to survey — high-net-worth individuals rarely participate in market research panels. But they do leave reviews on forums like PurseForum, Reddit's r/fragrance, or brand-specific communities. A CDT built from these reviews could surface preference signals that traditional methods cannot reach.

Business Impact

- 87.73% accuracy in predicting actual user preferences — strong enough for many product decisions, though not a complete replacement for human validation.

- Scalable to thousands of 'respondents' simultaneously, enabling micro-segmentation (e.g., preferences of 'luxury watch enthusiasts who also collect sneakers').

- Continuous updating — as users post new reviews, their CDT can be refreshed without re-running a study.

However, the paper's case study was limited to computer monitors — a category with well-understood attributes (panel type, resolution, refresh rate). Translating this to luxury categories (handbag leather quality, watch movement type, fragrance notes) will require richer review data and more nuanced attribute definitions.

Implementation Approach

Technical requirements:

- Access to user review data (public forums, first-party review platforms)

- Vector database infrastructure (Pinecone, Weaviate, or similar)

- LLM API access (the paper does not specify which model, but GPT-4 or Claude-class models are implied)

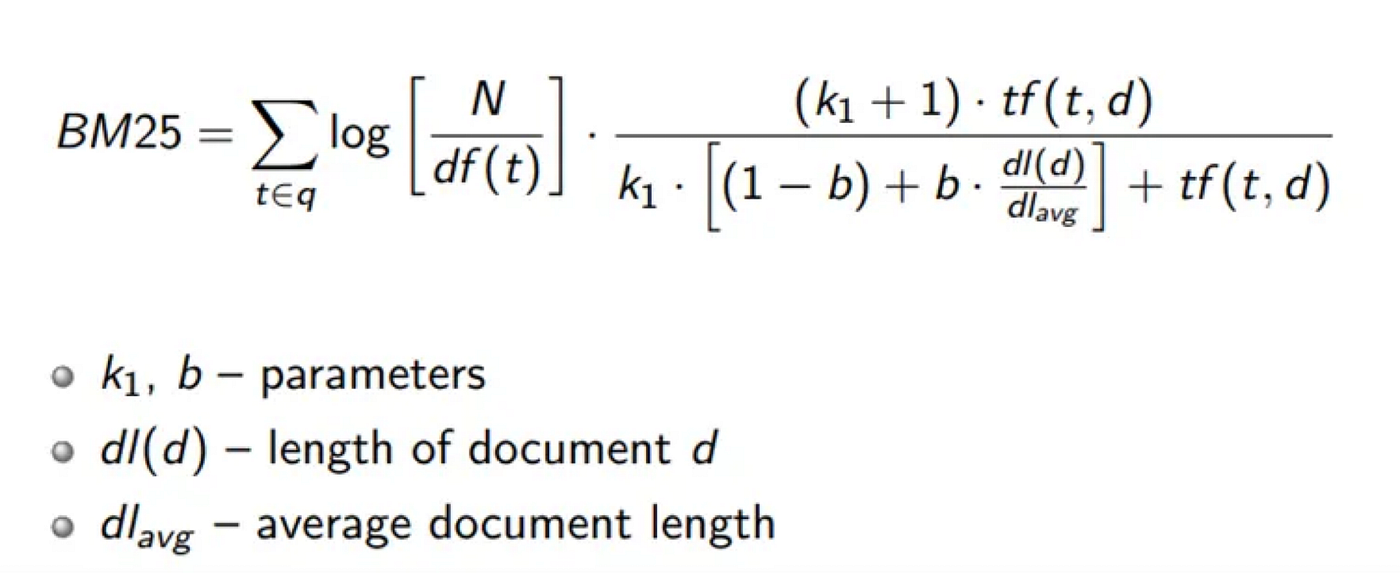

- Conjoint analysis pipeline (fractional factorial design + logistic regression)

Complexity level: Medium-high. The individual components are well-understood, but integrating them into a reliable, production-grade system requires significant engineering effort.

Effort estimate: 3-6 months for an initial prototype, depending on existing data infrastructure.
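For the fractional factorial design named in the requirements, a small example may help show why it reduces the number of profiles. The sketch below builds a half-fraction of a three-attribute, two-level design (4 runs instead of 8) using the generator C = A x B. The attribute levels are assumed for illustration, and the paper's actual design may differ.

```python
import itertools

# Hypothetical two-level attributes; labels are illustrative only.
LEVELS = {
    "panel": ("TN", "IPS"),
    "resolution": ("1080p", "4K"),
    "refresh_rate": ("60 Hz", "144 Hz"),
}


def half_fraction():
    """Build a 2^(3-1) design: full factorial in A and B, with C = A * B."""
    runs = []
    for a, b in itertools.product((-1, 1), repeat=2):
        runs.append((a, b, a * b))
    return runs


def to_profiles(runs):
    """Translate -1/+1 codes into readable product profiles."""
    names = list(LEVELS)
    return [
        {name: LEVELS[name][(code + 1) // 2] for name, code in zip(names, run)}
        for run in runs
    ]


design = half_fraction()
profiles = to_profiles(design)
```

Each attribute level appears equally often and the three columns are mutually orthogonal, so main effects can still be estimated from half the profiles. The trade-off is that interactions between attributes are confounded with main effects in a design this small.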

Governance & Risk Assessment

- Privacy: Public Reddit data is easy to access, but whether a given use is permitted depends on the platform's terms and applicable law, and the ethical line is blurry. Users did not consent to being 'digital twinned.' Luxury brands using first-party review data should obtain explicit consent.

- Bias: Reddit users skew young, male, and tech-savvy. CDTs built from Reddit data will not represent a brand's actual customer base. Brands must validate against their own customer data.

- Maturity: This is a research paper, not a production system. Expect 12-18 months before commercial offerings emerge.

- Hallucination risk: LLMs may generate preferences consistent with a user's past reviews but inconsistent with their actual current preferences. The paper's 87.73% accuracy still leaves an error rate of roughly 12%.

gentic.news Analysis

This paper sits at an interesting intersection of two trends we've been tracking. First, RAG continues to prove itself as the dominant paradigm for grounding LLMs in specific user data — this follows our April 21 coverage of agentic hybrid retrieval systems for dataset search and our April 22 note positioning RAG as the go-to technique for dynamic, fact-heavy applications. Second, the paper's use of synthetic respondents echoes the broader industry push toward simulation-based decision-making.

The key question for retail AI leaders: Does 87.73% accuracy cross the threshold for business decisions? For low-stakes choices (e.g., which monitor stand color to offer), absolutely. For high-stakes decisions (e.g., which $5,000 handbag to launch next season), probably not yet, but the trajectory is clear.

Luxury houses should watch this space closely. The ability to simulate customer preferences without recruiting actual respondents would be transformative for an industry where customer research is notoriously difficult. The first brand to build a reliable CDT system from its own CRM and review data will have a significant competitive advantage in product development speed and accuracy.

However, the gap between a computer monitor case study and a luxury handbag launch is non-trivial. Monitor attributes are objective (resolution, refresh rate). Luxury attributes are subjective (craftsmanship, brand heritage, exclusivity). CDTs will need to demonstrate they can capture these nuances before they become a primary decision-making tool for luxury product teams.

We'll be watching for follow-up papers that extend this method to fashion, beauty, and hard luxury categories — those would be real signal.