What Happened

A new preprint, "Cold-Starts in Generative Recommendation: A Reproducibility Study," was posted to arXiv on March 31, 2026. The paper addresses a core, persistent challenge in recommendation systems: the cold-start problem. This occurs when a system must make recommendations to newly registered users (user cold-start) or recommend newly introduced items to existing users (item cold-start) with little to no historical interaction data.

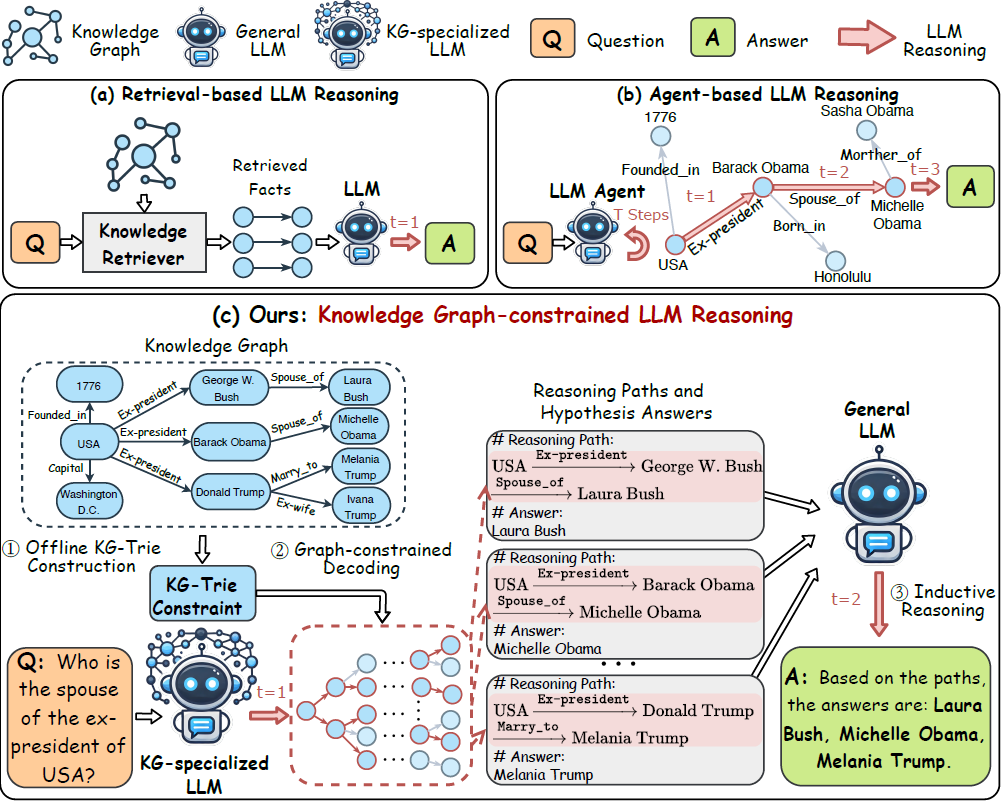

The research focuses specifically on a newer class of systems: generative recommenders built on top of pre-trained language models (PLMs). These models are often touted for their potential to mitigate cold-start issues by leveraging rich semantic information from item titles and descriptions, and by conditioning recommendations on limited, contextual user signals at test time.

However, the study's central argument is that the purported advantages of these generative systems in cold-start settings are poorly understood and difficult to verify. The authors contend that cold-start is rarely treated as a primary evaluation setting in existing literature. More critically, they identify a methodological flaw: reported performance improvements are confounded because researchers frequently change multiple key design variables simultaneously. These include model scale, the design of user/item identifiers, and the overall training strategy.

To address this, the paper presents a systematic reproducibility study under a unified suite of cold-start evaluation protocols. The goal is to disentangle the effects of individual design choices and provide a clearer, more honest assessment of whether and how generative PLM-based recommenders actually improve cold-start performance.
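The article does not spell out the paper's evaluation protocols, so the following is only a minimal sketch of one common way to build an item cold-start test set: a temporal split in which any item first seen at or after a cutoff is treated as "new" and never appears in training. The function name `cold_item_split` and the `(user_id, item_id, timestamp)` tuple layout are hypothetical choices for illustration.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical record layout: (user_id, item_id, timestamp)
Interaction = Tuple[str, str, int]


def cold_item_split(
    interactions: List[Interaction], cutoff_ts: int
) -> Tuple[List[Interaction], List[Interaction]]:
    """Split interactions so that no test item ever appears in training.

    Items whose first interaction occurs at or after cutoff_ts are treated
    as cold (newly launched) items; all of their interactions form the test
    set. Warm items' interactions after the cutoff are simply dropped here.
    """
    first_seen: Dict[str, int] = {}
    for _, item, ts in interactions:
        if item not in first_seen or ts < first_seen[item]:
            first_seen[item] = ts

    cold_items: Set[str] = {i for i, ts in first_seen.items() if ts >= cutoff_ts}
    train = [x for x in interactions if x[1] not in cold_items and x[2] < cutoff_ts]
    test = [x for x in interactions if x[1] in cold_items]
    return train, test
```

Fixing a split like this once and reusing it for every model variant is what makes comparisons between design choices meaningful; a user cold-start split would partition by user first-seen time instead.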

Technical Details

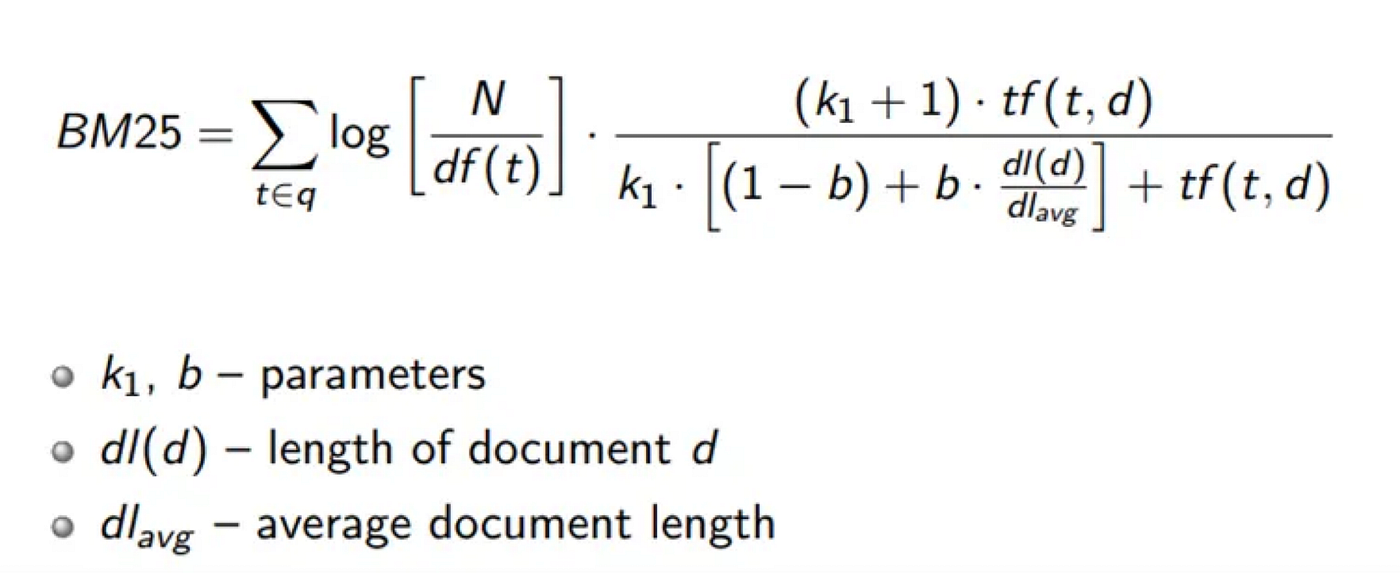

While the full paper details the experimental framework, the core technical premise revolves around the architecture of generative recommendation systems. Unlike traditional collaborative filtering or two-tower embedding models, generative recommenders often frame the task as a sequence-to-sequence or next-token prediction problem. A model might be trained to generate a sequence of item IDs (or their semantic representations) that a user is likely to engage with, conditioned on their history and item metadata.
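To make the "generate a sequence of item IDs" idea concrete, here is a minimal sketch of one widely used pattern (not necessarily the one studied in the paper): each item is assigned a short tuple of discrete tokens (a "semantic ID"), and decoding is constrained by a prefix trie so the model can only emit IDs that exist in the catalog. The scorer `next_token_scores` is a hypothetical stand-in for a PLM's next-token distribution, and the sketch assumes every ID has the same length.

```python
from typing import Callable, Dict, List, Sequence, Set, Tuple

SemanticID = Tuple[int, ...]


def build_trie(item_ids: Dict[str, SemanticID]) -> Dict[SemanticID, List[int]]:
    """Map each ID prefix to the sorted tokens that may legally follow it."""
    trie: Dict[SemanticID, Set[int]] = {}
    for code in item_ids.values():
        for k in range(len(code)):
            trie.setdefault(code[:k], set()).add(code[k])
    return {prefix: sorted(tokens) for prefix, tokens in trie.items()}


def greedy_decode(
    next_token_scores: Callable[[Sequence[str], SemanticID], Dict[int, float]],
    history: Sequence[str],
    trie: Dict[SemanticID, List[int]],
    id_length: int,
) -> SemanticID:
    """Greedily generate one item's semantic ID, token by token.

    At each step only tokens that keep the prefix inside the trie are
    considered, so the result is always an ID of an existing item.
    """
    prefix: SemanticID = ()
    for _ in range(id_length):
        scores = next_token_scores(history, prefix)
        allowed = trie[prefix]
        best = max(allowed, key=lambda t: scores.get(t, float("-inf")))
        prefix = prefix + (best,)
    return prefix
```

In a toy catalog such as `{"turtleneck": (0, 1), "cardigan": (0, 2), "tote": (1, 0)}`, a scorer that rates an invalid token highest is simply ignored, because that token never appears among the allowed continuations. Real systems typically use beam search rather than greedy decoding to return a ranked list.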

For cold-start scenarios:

- Item Cold-Start: The model must rely almost entirely on the textual description of a new item (e.g., "cashmere blend turtleneck, slim fit, midnight blue") to position it near semantically similar items in its representation space and recommend it to users whose profiles suggest an affinity for such items.

- User Cold-Start: With no clickstream history, the model might condition recommendations on minimal signals gathered during onboarding (e.g., answered preference questions, stated interests, or even the session context) combined with the semantic understanding of items.
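Both scenarios above reduce to matching text against text when interaction data is missing. The sketch below illustrates that idea for a brand-new user and brand-new items, using a deliberately crude bag-of-words cosine similarity as a stand-in for PLM text embeddings. This substitution is an assumption for illustration only; the function names and the onboarding-answer format are hypothetical.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def bag_of_words(text: str) -> Counter:
    """Lowercased word counts: a crude stand-in for a PLM text embedding."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def rank_new_items(
    onboarding_answers: List[str], new_items: Dict[str, str], k: int = 3
) -> List[Tuple[str, float]]:
    """Rank never-interacted items for a user with no click history.

    Both sides are represented only by text: the user's onboarding answers
    and each item's product description.
    """
    profile = bag_of_words(" ".join(onboarding_answers))
    scored = [(item_id, cosine(profile, bag_of_words(desc))) for item_id, desc in new_items.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

For example, a user who answers "I like cashmere knitwear" would see the "cashmere blend turtleneck, slim fit, midnight blue" item ranked above an unrelated leather tote. A PLM encoder would also capture synonyms (e.g., "knitwear" vs. "turtleneck") that word overlap misses, which is exactly the advantage the study sets out to verify.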

The reproducibility study likely constructs controlled experiments where variables like PLM backbone size (e.g., 100M vs. 1B parameters), the method of incorporating item IDs (e.g., learned embeddings vs. textual descriptions), and training data regimes are varied independently. This allows the researchers to attribute performance changes on cold-start benchmarks to specific factors rather than a bundle of upgrades.
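The "one variable at a time" logic can be sketched as a small experiment planner: start from a baseline configuration, create variants that each change exactly one factor, and flag any pair of runs that differ in more than one factor, since their score difference cannot be attributed to a single design choice. The factor names and values below (including the training regimes) are hypothetical placeholders mirroring the examples in the text.

```python
from itertools import combinations
from typing import Dict, List, Tuple

Config = Dict[str, str]


def one_factor_at_a_time(baseline: Config, options: Dict[str, List[str]]) -> List[Config]:
    """Return the baseline plus variants that each change exactly one factor."""
    runs = [dict(baseline)]
    for factor, values in options.items():
        for value in values:
            if value != baseline[factor]:
                runs.append({**baseline, factor: value})
    return runs


def changed_factors(a: Config, b: Config) -> List[str]:
    """Names of the design factors on which two configurations differ."""
    return sorted(k for k in a if a[k] != b[k])


def confounded_pairs(runs: List[Config]) -> List[Tuple[int, int]]:
    """Index pairs whose score difference cannot be pinned on one factor."""
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(runs), 2)
        if len(changed_factors(a, b)) > 1
    ]


# Hypothetical factors and values, for illustration only.
baseline = {"backbone": "100M", "item_id": "learned_embedding", "training": "regime_A"}
options = {
    "backbone": ["100M", "1B"],
    "item_id": ["learned_embedding", "text_description"],
    "training": ["regime_A", "regime_B"],
}
runs = one_factor_at_a_time(baseline, options)
```

Each variant differs from the baseline in one factor, so comparing it against the baseline isolates that factor; comparing two variants with each other changes two factors at once, which is the kind of bundled upgrade the study warns about.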

Retail & Luxury Implications

The cold-start problem is not an academic concern; it is a multi-million-dollar operational challenge for luxury and retail. Every season brings new collections (item cold-start), and high-value customer acquisition campaigns constantly introduce new users (user cold-start). The promise of AI that can accurately recommend a just-launched handbag or personalize a homepage for a first-time visitor based on minimal signals is the holy grail of digital merchandising.

If the study's findings hold, they suggest that the industry should be highly skeptical of blanket claims that "generative AI solves cold-start." The reality is more nuanced:

- Performance May Be Overstated: Early reported gains from switching to generative architectures may be due to increased model scale or other concurrent changes, not the generative paradigm itself. A luxury brand investing in a bespoke generative recommender needs to isolate the value of the architecture from simply using a larger, more expensive model.

- The Need for Rigorous Evaluation: This research underscores that brands must develop their own rigorous, scenario-specific evaluation protocols. Benchmarking a new system requires A/B tests that specifically measure lift in new-user conversion and new-product sell-through, not just overall site-wide metrics.

- Semantic Understanding is Key (But Not a Panacea): The generative approach's strength is its native use of language. For luxury, where product descriptions are carefully crafted narratives about craftsmanship, material, and heritage, this semantic layer is invaluable. A model that truly understands "Goyard St. Louis tote" versus "artisanal vegetable-tanned leather satchel" has a better chance of making nuanced cold-start recommendations. However, the study implies this advantage must be proven, not assumed.
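The point about segment-specific A/B metrics can be illustrated with a minimal sketch that computes relative conversion lift within a chosen segment (here, first-time visitors) instead of site-wide. The `Session` record and its fields are hypothetical, and the sketch omits significance testing and confidence intervals, which a real experiment analysis would need.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Session:
    arm: str            # "control" or "treatment" (hypothetical labels)
    is_new_user: bool   # first visit, no prior interaction history
    converted: bool


def conversion_rate(
    sessions: List[Session], arm: str, segment: Callable[[Session], bool]
) -> Optional[float]:
    """Conversion rate for one arm, restricted to sessions in the segment."""
    pool = [s for s in sessions if s.arm == arm and segment(s)]
    if not pool:
        return None
    return sum(s.converted for s in pool) / len(pool)


def relative_lift(
    sessions: List[Session], segment: Callable[[Session], bool] = lambda s: True
) -> Optional[float]:
    """Treatment conversion rate relative to control within one segment."""
    control = conversion_rate(sessions, "control", segment)
    treatment = conversion_rate(sessions, "treatment", segment)
    if control is None or treatment is None or control == 0:
        return None
    return treatment / control - 1.0
```

With made-up numbers where returning users convert equally in both arms but new users convert twice as often under treatment, the site-wide lift comes out under 5% while the new-user lift is 100%: the aggregate metric dilutes exactly the effect a cold-start system is supposed to deliver.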

In practice, a technically rigorous approach would involve phased testing: first validating that a generative model using rich product attributes outperforms a traditional model on cold-item scenarios in offline tests, before committing to a full, costly production deployment.
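The first, offline phase might look like the sketch below: compute HitRate@k on held-out cold items for a traditional baseline and for a generative candidate, and only proceed to production testing if the candidate clears a chosen margin. HitRate@k is one common offline metric among several; the function names and the `min_abs_gain` threshold are hypothetical.

```python
from typing import Dict, List, Set


def hit_rate_at_k(recs: Dict[str, List[str]], truth: Dict[str, Set[str]], k: int) -> float:
    """Fraction of test users with at least one held-out cold item in their top-k."""
    users = [u for u in truth if truth[u]]
    if not users:
        return 0.0
    hits = sum(1 for u in users if set(recs.get(u, [])[:k]) & truth[u])
    return hits / len(users)


def passes_offline_gate(
    baseline_recs: Dict[str, List[str]],
    candidate_recs: Dict[str, List[str]],
    cold_truth: Dict[str, Set[str]],
    k: int = 10,
    min_abs_gain: float = 0.0,
) -> bool:
    """True if the candidate beats the baseline on cold items by more than the margin."""
    base = hit_rate_at_k(baseline_recs, cold_truth, k)
    cand = hit_rate_at_k(candidate_recs, cold_truth, k)
    return cand - base > min_abs_gain
```

Restricting `cold_truth` to items that never appeared in training keeps the comparison focused on the cold-item scenario rather than on overall accuracy.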