Collider-Bench, released on arXiv on May 13, tests whether LLM agents can reproduce Large Hadron Collider (LHC) analyses from published papers. On average, no general-purpose coding agent reliably beats a physicist-in-the-loop solution, the paper reports.

Key facts

- Released on arXiv on May 13, 2026

- Tasks require building executable simulation-and-selection pipelines from published LHC analyses

- On average, no agent reliably beats a physicist-in-the-loop solution

- Reports the computational cost of each agent on each task

- Uses an LLM judge to flag fabrications, hallucinations, and duplications

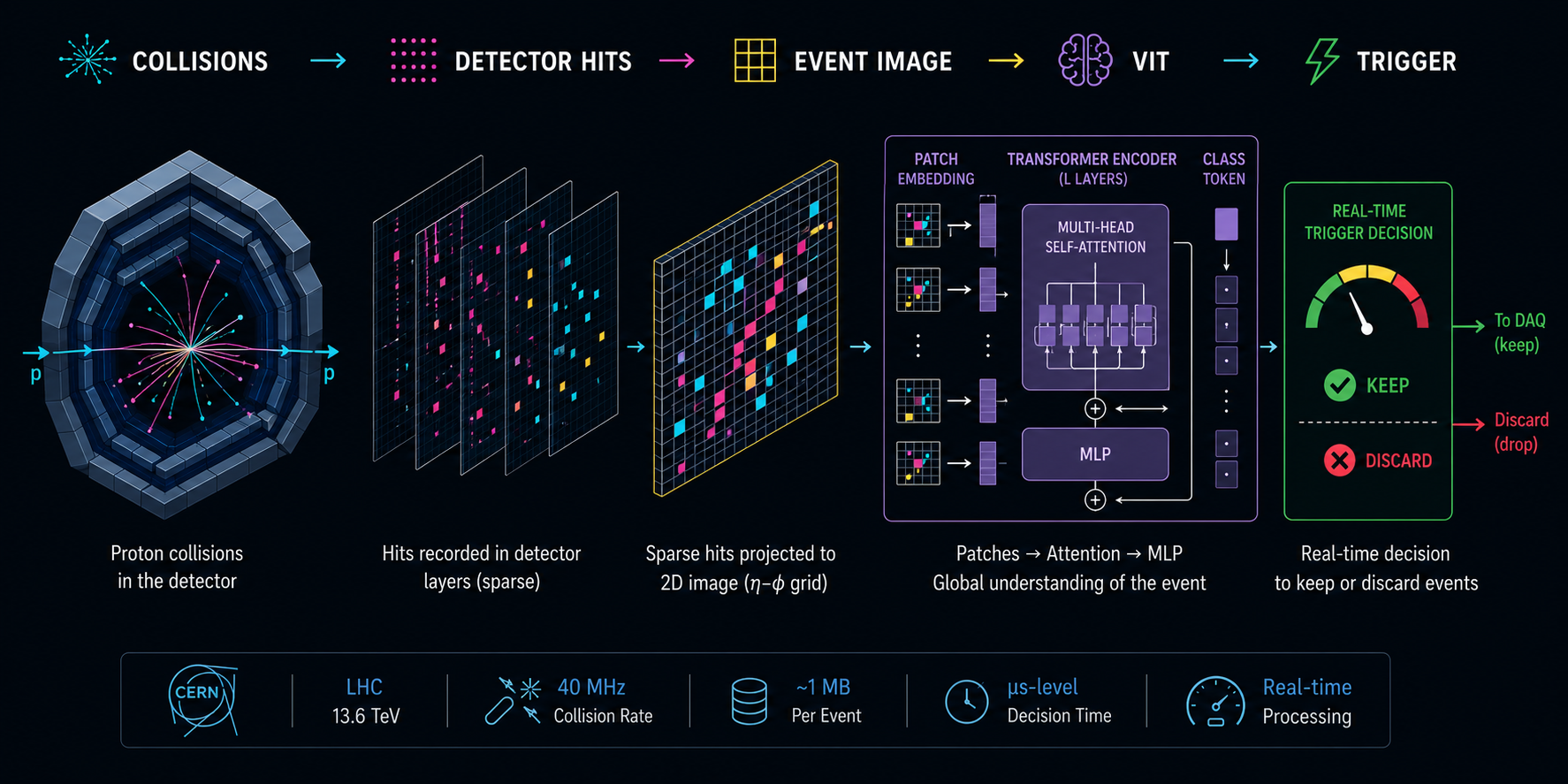

Collider-Bench, a benchmark released on arXiv on May 13, 2026, evaluates whether LLM agents can reproduce experimental analyses from the Large Hadron Collider (LHC) using only public papers and open scientific software. Each task requires an agent to convert a published analysis into an executable simulation-and-selection pipeline and submit predicted collision event yields in specified signal regions. These predictions are scored with standard histogram metrics, which produce continuous fidelity scores without a hand-written rubric [According to Collider-Bench].
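The abstract does not name the specific histogram metrics, so the scoring step above can only be sketched under assumptions. This minimal illustration treats each signal region as one histogram bin, computes a chi-square per region (falling back to Poisson-like sqrt(N) uncertainties when none are supplied), maps it to a score in (0, 1], and adds a normalized shape distance. The function names, the exp(-chi2/2) mapping, and the example yields are hypothetical, not Collider-Bench's actual scoring code.

```python
import math
from typing import Dict, Optional

# Hypothetical format: {signal_region_name: predicted or reference event yield}
Yields = Dict[str, float]


def chi2_per_region(predicted: Yields, reference: Yields,
                    ref_unc: Optional[Yields] = None) -> float:
    """Mean chi-square contribution per signal region.

    Assumption: without explicit uncertainties, use sqrt(reference yield),
    floored at 1 event, as a Poisson-like uncertainty.
    """
    if predicted.keys() != reference.keys():
        raise ValueError("predicted and reference must cover the same signal regions")
    total = 0.0
    for sr, ref in reference.items():
        sigma = ref_unc[sr] if ref_unc is not None else math.sqrt(max(ref, 1.0))
        total += ((predicted[sr] - ref) / sigma) ** 2
    return total / len(reference)


def shape_distance(predicted: Yields, reference: Yields) -> float:
    """Half the L1 distance between unit-normalized histograms, in [0, 1]."""
    p_sum = sum(predicted.values())
    r_sum = sum(reference.values())
    if p_sum <= 0 or r_sum <= 0:
        raise ValueError("histograms must have positive total yield")
    return 0.5 * sum(abs(predicted[sr] / p_sum - reference[sr] / r_sum)
                     for sr in reference)


def fidelity_score(predicted: Yields, reference: Yields) -> float:
    """Continuous score in (0, 1]; 1.0 means exact agreement (illustrative mapping)."""
    return math.exp(-0.5 * chi2_per_region(predicted, reference))


if __name__ == "__main__":
    # Hypothetical yields in three signal regions, not taken from the paper.
    reference = {"SR1": 100.0, "SR2": 25.0, "SR3": 4.0}
    predicted = {"SR1": 110.0, "SR2": 20.0, "SR3": 4.0}
    print("chi2/region:", chi2_per_region(predicted, reference))
    print("shape distance:", shape_distance(predicted, reference))
    print("fidelity:", fidelity_score(predicted, reference))
```

A continuous score like this rewards a pipeline that is close but not exact, which is the property the benchmark's rubric-free evaluation relies on; the real metric choice may differ.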

The benchmark also reports the computational cost incurred by each agent per task, and uses an LLM judge to evaluate the codebase and full session trace for qualitative failure modes such as fabrications, hallucinations, and duplications. The authors release an initial set of tasks drawn from LHC searches, together with a containerized sandbox and event simulation tools [per the arXiv preprint].
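The abstract also does not say how computational cost is measured. The per-agent, per-task accounting described above can be sketched assuming cost is derived from logged token counts and per-token prices. The `SessionStep` log schema, the agent names, and the `PRICES` table are hypothetical placeholders, not figures from the paper.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass
class SessionStep:
    """One logged model call in an agent session (hypothetical log schema)."""
    agent: str
    task: str
    input_tokens: int
    output_tokens: int


# Hypothetical USD prices per million tokens as (input, output); not from the paper.
PRICES: Dict[str, Tuple[float, float]] = {
    "agent-a": (3.0, 15.0),
    "agent-b": (0.5, 1.5),
}


def cost_per_agent_task(steps: Iterable[SessionStep]) -> Dict[Tuple[str, str], float]:
    """Sum the dollar cost of every logged call, keyed by (agent, task)."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for s in steps:
        in_price, out_price = PRICES[s.agent]
        totals[(s.agent, s.task)] += (
            s.input_tokens * in_price + s.output_tokens * out_price
        ) / 1e6
    return dict(totals)


if __name__ == "__main__":
    log = [
        SessionStep("agent-a", "task-1", 200_000, 10_000),
        SessionStep("agent-a", "task-1", 100_000, 5_000),
        SessionStep("agent-b", "task-1", 400_000, 20_000),
    ]
    for (agent, task), usd in sorted(cost_per_agent_task(log).items()):
        print(f"{agent} on {task}: ${usd:.3f}")
```

Keying totals by (agent, task) keeps cost comparable to the per-task fidelity scores, so a cheaper agent and a more accurate one can be weighed side by side.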

Why this matters

Existing benchmarks for long-horizon tool-use tasks rarely capture the complexity and nuance of real scientific work. Collider-Bench addresses this by requiring agents to use physical reasoning, domain knowledge, and trial-and-error to supply implementation details that published papers inevitably omit but that a faithful reconstruction needs. On average, no agent reliably beats the physicist-in-the-loop solution, underscoring the distance between current AI agents and expert human performance in scientific domains [According to Collider-Bench].

Performance and failure modes

The paper evaluates general-purpose coding agents across a capability ladder, but the abstract does not disclose specific model names or scores. The LLM judge screens each codebase and session trace for qualitative failure modes, including fabrications, hallucinations, and duplications, while the benchmark separately tracks each agent's computational cost per task. The use of an LLM judge for qualitative evaluation is notable, echoing recent work on LLM-as-a-Judge frameworks [as previously reported on arXiv].

Key Takeaways

- Collider-Bench tests LLM agents on reproducing LHC analyses from papers.

- On average, no agent reliably beats the physicist-in-the-loop solution, highlighting the gap between current agents and expert physicists.

What to watch

Watch for the specific agent scores and model names reported in the full paper, and for whether subsequent benchmarks extend Collider-Bench to other experimental physics domains or higher-fidelity toolchains.