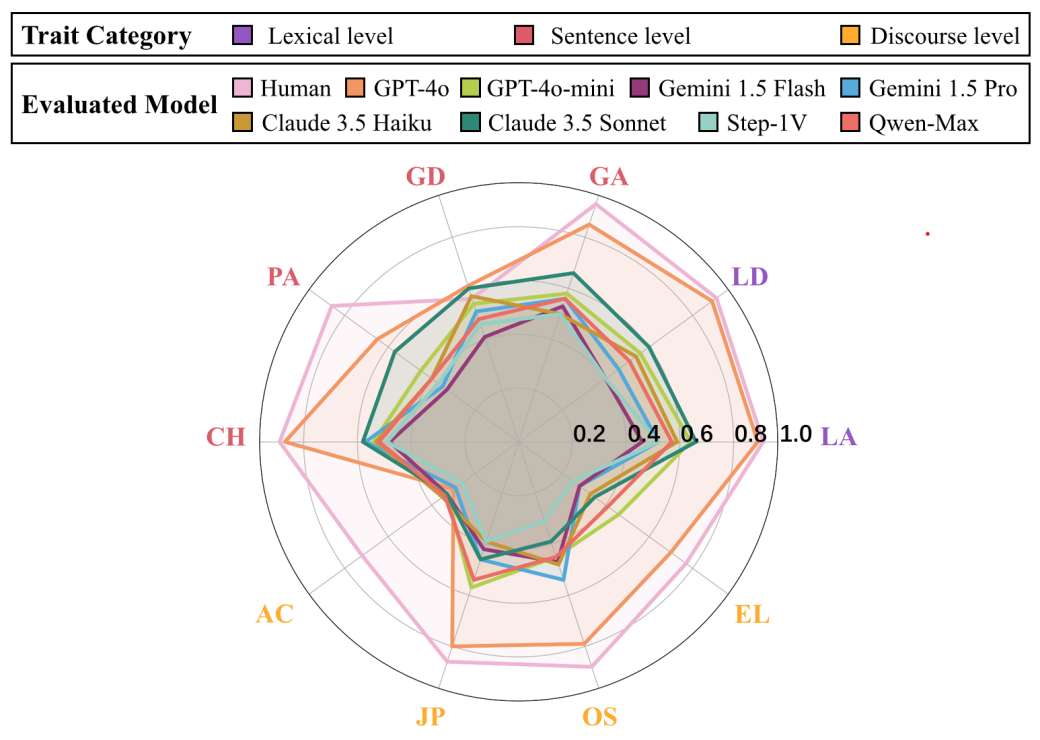

A new benchmark finds that even the strongest frontier MLLM picks both the best and the worst image correctly in only 26.5% of aesthetic comparison tasks. On the Visual Aesthetic Benchmark (VAB), that leaves a 42.4-point gap to human experts, who reach 68.9%.

Key facts

- Top MLLM scores 26.5% on VAB vs. 68.9% for human experts.

- VAB includes 400 tasks and 1,195 images.

- Labels come from the consensus of 10 expert judges per task.

- Fine-tuning a 35B model on 2,000 expert examples brings it close to a 397B model.

- 20 frontier MLLMs and 6 reward models evaluated.

Key Takeaways

- Even the best frontier MLLM reaches only 26.5% on VAB, far below the 68.9% achieved by human experts.

- Targeted fine-tuning on expert comparisons narrows the gap between smaller and much larger models.

The Problem with Scalar Scores

Most aesthetic evaluation systems reduce judgment to a single scalar score per image. The VAB authors first tested this approach with eight expert annotators: score-derived rankings aligned poorly with the same annotators' direct comparisons. Direct ranking yielded substantially higher inter-annotator agreement on best- and worst-image labels [According to Visual Aesthetic Benchmark].
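To make that comparison concrete, here is a minimal Python sketch of one way to check score-derived rankings against direct judgments: take an annotator's scalar scores for a candidate set, read off the implied best and worst image, and count how often that pair matches the same annotator's direct choice. The function names, the exact-match agreement rule, and the data are hypothetical; the VAB authors' actual analysis may use different statistics.

```python
from collections.abc import Sequence


def score_derived_best_worst(scores: Sequence[float]) -> tuple[int, int]:
    """Return (best_index, worst_index) implied by per-image scalar scores.

    Ties resolve to the lowest index, since max/min keep the first extreme.
    """
    indices = range(len(scores))
    best = max(indices, key=lambda i: scores[i])
    worst = min(indices, key=lambda i: scores[i])
    return best, worst


def agreement_rate(
    score_sets: Sequence[Sequence[float]],
    direct_labels: Sequence[tuple[int, int]],
) -> float:
    """Fraction of tasks where the score-derived (best, worst) pair
    exactly matches the annotator's direct (best, worst) choice."""
    matches = sum(
        score_derived_best_worst(scores) == tuple(direct)
        for scores, direct in zip(score_sets, direct_labels)
    )
    return matches / len(score_sets)


# Hypothetical data: one annotator, three tasks with three images each.
scores = [[7.0, 5.5, 6.0], [4.0, 8.0, 6.5], [6.0, 6.5, 3.0]]
direct = [(0, 1), (1, 0), (0, 2)]
print(agreement_rate(scores, direct))  # prints 0.6666666666666666 (2 of 3 tasks agree)
```

In the third toy task the annotator scored image 1 highest but directly chose image 0 as best, which is the kind of inconsistency the authors report between scoring and direct comparison.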

VAB Design and Results

VAB casts aesthetic evaluation as comparative selection over candidate sets with matched subject matter. The benchmark contains 400 tasks and 1,195 images spanning fine art, photography, and illustration. Labels come from the consensus of 10 independent expert judges per task [per the arXiv preprint].
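The source does not say how the 10 judgments are combined into a consensus label. As an illustration only, the sketch below assumes a simple plurality vote over the judges' picks and treats a tie for first place as "no clear consensus"; the function name and vote data are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def consensus_label(votes: Sequence[int]) -> Optional[int]:
    """Plurality vote over judges' picks (image indices).

    Returns None when there are no votes or the top count is tied,
    i.e. when the judges reach no clear consensus.
    ASSUMPTION: VAB's actual aggregation rule may differ.
    """
    ranked = Counter(votes).most_common()
    if not ranked:
        return None
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]


# Hypothetical votes from 10 judges for one three-image task.
best_votes = [0, 0, 2, 0, 1, 0, 0, 2, 0, 0]
worst_votes = [1, 1, 2, 1, 2, 2, 1, 2, 1, 2]
print(consensus_label(best_votes))   # 0 (7 of 10 votes)
print(consensus_label(worst_votes))  # None (5-5 tie)
```

A benchmark built this way would need a policy for tied tasks, such as dropping them or collecting more judgments; which one VAB uses, if any, is not stated here.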

Across 20 frontier MLLMs and six dedicated visual-quality reward models, the strongest system identified both the best and the worst image correctly, in all three random permutations of the candidates, in only 26.5% of tasks. Human experts achieved 68.9% accuracy on the same tasks, a gap of 42.4 percentage points.
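This scoring rule is much stricter than single-shot accuracy. The minimal Python sketch below illustrates it, assuming a hypothetical `judge` callable that receives image ids in some order and returns the positions of its best and worst picks. Each task is presented in three random orders and counts as solved only if both picks are correct every time. Shuffling presumably guards against position bias; the seeding scheme, function names, and toy data are assumptions, not the authors' evaluation harness.

```python
import random
from typing import Callable, Sequence, Tuple

# Hypothetical judge interface: given image ids in a presentation order,
# return the positions (best_pos, worst_pos) it selects within that order.
Judge = Callable[[Sequence[str]], Tuple[int, int]]


def task_correct(images: Sequence[str], best_id: str, worst_id: str,
                 judge: Judge, n_perms: int = 3, seed: int = 0) -> bool:
    """Solved only if best AND worst are right in every permutation."""
    rng = random.Random(seed)
    for _ in range(n_perms):
        order = list(images)
        rng.shuffle(order)  # present candidates in a fresh random order
        best_pos, worst_pos = judge(order)
        if order[best_pos] != best_id or order[worst_pos] != worst_id:
            return False
    return True


def benchmark_accuracy(tasks: Sequence[Tuple[Sequence[str], str, str]],
                       judge: Judge, n_perms: int = 3) -> float:
    """tasks: (image_ids, consensus_best_id, consensus_worst_id) triples."""
    solved = [task_correct(imgs, b, w, judge, n_perms, seed=i)
              for i, (imgs, b, w) in enumerate(tasks)]
    return sum(solved) / len(solved)


# Toy oracle that knows a hidden quality score for each image id.
quality = {"a": 0.9, "b": 0.2, "c": 0.5, "d": 0.7, "e": 0.1, "f": 0.4}


def oracle(order: Sequence[str]) -> Tuple[int, int]:
    best = max(range(len(order)), key=lambda i: quality[order[i]])
    worst = min(range(len(order)), key=lambda i: quality[order[i]])
    return best, worst


tasks = [(["a", "b", "c"], "a", "b"), (["d", "e", "f"], "d", "e")]
print(benchmark_accuracy(tasks, oracle))  # 1.0
```

A judge that always answers by position, for example "first is best, last is worst," is right only by chance under shuffling, and requiring all three permutations to be correct drives that chance rate close to zero.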

Fine-Tuning Transfer

Fine-tuning a 35B-parameter model on 2,000 expert examples brought its accuracy close to that of a 397B-parameter open-weight model. This suggests the comparative signal in VAB is transferable, and that smaller, specialized models can approach the performance of far larger ones with targeted data [According to Visual Aesthetic Benchmark].

What This Means for Deployment

Multimodal large language models are routinely deployed for visual understanding, generation, and curation, and many of these applications require explicit aesthetic judgment. The VAB results expose a structural weakness in current approaches: scalar scores capture comparative preference poorly, and even the best models fall far short of expert consensus.

What to watch

Watch whether open-weight models fine-tuned on VAB data approach human-level performance in the next six months. Also track whether commercial API providers (OpenAI, Google, Anthropic) publish VAB scores or adopt comparative evaluation for aesthetic tasks.