Key Takeaways

- A 40-author survey introduces a 'levels × laws' framework for world models in AI agents. The framework spans 3 capability levels and 4 law regimes, and the survey synthesizes 400+ works.

- It provides a shared vocabulary for designing and evaluating world models across traditionally siloed research communities.

What Happened

A 40-author survey paper on "Agentic World Modeling" has been released, proposing a unified taxonomy for world models in agent research. The paper introduces a "levels × laws" framework that categorizes world models by three capability levels and four law regimes, synthesizing over 400 works and 100+ representative systems.

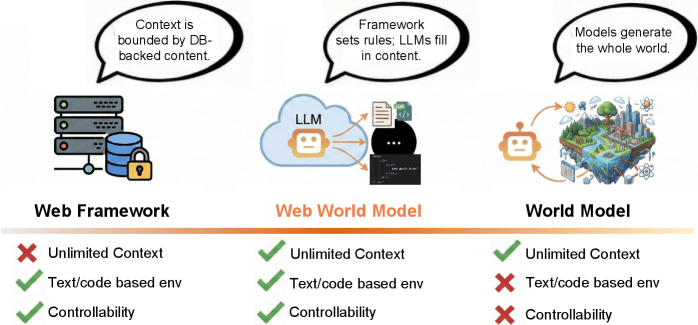

The framework is designed to give builders a shared vocabulary for designing and evaluating world models across communities that have been working in isolation—including model-based reinforcement learning, video generation, web/GUI agents, multi-agent simulation, and scientific discovery.

The Framework: Three Levels of World Model Capability

The paper defines three levels of world model capability:

L1 Predictors — Models that perform one-step transitions. These are the simplest form of world models, capable of predicting the next state given a current state and action, but unable to simulate multiple steps.

L2 Simulators — Models that perform multi-step action-conditioned rollouts. These can simulate sequences of actions and their consequences over time, enabling planning and reasoning about future states.

L3 Evolvers — Models that self-revise as the world changes. These are adaptive world models that can update their understanding of the world as new information arrives, handling non-stationary environments and shifting dynamics.
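The three levels can be read as progressively richer interfaces. The following is a toy illustration, not code from the survey: it assumes a scalar world with hypothetical dynamics next_state = drift * state + action, and the class names Predictor, Simulator and Evolver are made up to mirror the levels. The Evolver's exponentially weighted least-squares update is just one simple way to "self-revise"; the paper does not prescribe it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Predictor:
    """L1 sketch: one-step transition model for a toy scalar world."""
    drift: float  # hypothetical parameter: next_state = drift * state + action

    def predict(self, state: float, action: float) -> float:
        return self.drift * state + action


@dataclass
class Simulator:
    """L2 sketch: multi-step, action-conditioned rollout built on a one-step model."""
    model: Predictor

    def rollout(self, state: float, actions: List[float]) -> List[float]:
        trajectory = [state]
        for action in actions:
            # Each prediction becomes the input state for the next step.
            state = self.model.predict(state, action)
            trajectory.append(state)
        return trajectory


@dataclass
class Evolver:
    """L3 sketch: revises its model's drift from observed transitions."""
    model: Predictor
    decay: float = 0.9  # forgetting factor: older evidence counts less
    sxx: float = 0.0    # weighted sum of state * state
    sxy: float = 0.0    # weighted sum of state * (next_state - action)

    def observe(self, state: float, action: float, next_state: float) -> None:
        # Exponentially weighted least-squares estimate of drift.
        self.sxx = self.decay * self.sxx + state * state
        self.sxy = self.decay * self.sxy + state * (next_state - action)
        if self.sxx > 0.0:
            self.model.drift = self.sxy / self.sxx


# Usage: the world's true drift has shifted from 0.5 to 0.8.
model = Predictor(drift=0.5)
evolver = Evolver(model)
state = 1.0
for _ in range(5):
    next_state = 0.8 * state + 0.1
    evolver.observe(state, 0.1, next_state)
    state = next_state

print(round(model.drift, 6))
print(Simulator(model).rollout(1.0, [0.0, 0.0]))
```

Because the Simulator wraps the same Predictor instance that the Evolver updates, its rollouts pick up the revised dynamics without any extra wiring; the decay factor is what lets the estimate track a world whose rules keep shifting.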

The Four Law Regimes

The framework also identifies four law regimes, which classify the kind of rules governing the environment a world model must capture:

- Physical — Models that operate according to physical laws (physics, mechanics, thermodynamics)

- Digital — Models that operate in digital environments (software, web, virtual worlds)

- Social — Models that simulate social dynamics (human behavior, group interactions, cultural norms)

- Scientific — Models that encode scientific knowledge (mathematics, formal systems, scientific theories)
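Taken together, the levels and regimes form a 3 × 4 grid. One way to make that concrete, for instance when tagging systems or benchmarks in an internal catalog, is a pair of enums. The names Level, LawRegime, GRID and describe are hypothetical, chosen for illustration rather than taken from the paper.

```python
from enum import Enum
from itertools import product


class Level(Enum):
    L1_PREDICTOR = "one-step transitions"
    L2_SIMULATOR = "multi-step action-conditioned rollouts"
    L3_EVOLVER = "self-revision as the world changes"


class LawRegime(Enum):
    PHYSICAL = "physical"
    DIGITAL = "digital"
    SOCIAL = "social"
    SCIENTIFIC = "scientific"


# Every (level, regime) pair is one cell of the 3 x 4 taxonomy.
GRID = list(product(Level, LawRegime))


def describe(level: Level, regime: LawRegime) -> str:
    """Human-readable label for one cell of the grid."""
    return f"{level.name} in the {regime.value} regime: {level.value}"


print(len(GRID))
print(describe(Level.L2_SIMULATOR, LawRegime.DIGITAL))
```

A web or GUI agent that plans several clicks ahead would land in the L2 × Digital cell; tagging systems this way makes it easy to spot which cells a team's work leaves uncovered.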

Why It Matters

As agents shift from chatbots to goal-accomplishers, the bottleneck moves from language understanding to modeling the environment. This paper offers what may be the first comprehensive, cross-community framework for world models, addressing a critical gap in agent research.

The survey identifies failure modes and proposes evaluation principles for each level, giving practitioners concrete guidance for building and testing world models. It synthesizes work from model-based RL, video generation, web/GUI agents, multi-agent simulation, and scientific discovery—communities that have largely operated independently.

What This Means in Practice

For AI engineers building agents, this framework provides:

- A shared vocabulary to discuss world model capabilities across teams and projects

- Clear evaluation principles for each capability level

- Identification of failure modes specific to each level and law regime

- A roadmap for advancing from simple predictors to adaptive evolvers

Key Numbers

- 40 authors contributed to the survey

- 400+ works synthesized

- 100+ representative systems analyzed

- 3 capability levels (Predictors, Simulators, Evolvers)

- 4 law regimes (Physical, Digital, Social, Scientific)

gentic.news Analysis

This survey arrives at a critical inflection point in agent AI. The field has been fragmenting: model-based RL researchers build world models for continuous control, video generation teams build diffusion-based simulators, and web agent developers build action-conditioned transformers—all solving similar problems with different vocabularies.

The "levels × laws" framework provides the missing Rosetta Stone. Practitioners can now map their work onto a shared spectrum, from simple one-step predictors (sufficient for static environments) to adaptive evolvers (necessary for dynamic, real-world deployments). This is particularly timely given the rapid shift toward autonomous agents that must operate in open-ended environments.

The failure mode analysis is especially valuable. Many current agent systems fail not because of language model limitations but because their world models cannot handle environmental shifts—a problem the framework explicitly addresses at the L3 Evolver level.

Frequently Asked Questions

What is a world model in AI agents?

A world model is an internal representation that an AI agent uses to predict how its environment will change in response to actions. It's distinct from language understanding—it's about modeling cause and effect in the world the agent operates in.

How does the 'levels × laws' framework help builders?

It provides a shared vocabulary for specifying what kind of world model an agent needs. Builders can identify whether they need a simple predictor (L1), a multi-step simulator (L2), or an adaptive evolver (L3), and which law regime applies (physical, digital, social, or scientific).

What are the failure modes identified for each level?

The paper identifies specific failure modes for each level: L1 predictors fail on multi-step dependencies, L2 simulators fail on distribution shift and compounding errors, and L3 evolvers face challenges in maintaining consistency while adapting to change.
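The L2 failure mode of compounding error is easy to see numerically: when a simulator feeds its own predictions back in as inputs, a small per-step error gets multiplied at every step. The toy below assumes a scalar linear system and hypothetical drift values; it illustrates the general phenomenon, not an experiment from the survey.

```python
from typing import List


def rollout(drift: float, state: float, steps: int) -> List[float]:
    """Roll a scalar linear model next_state = drift * state forward."""
    trajectory = [state]
    for _ in range(steps):
        state = drift * state  # the model's own output is the next input
        trajectory.append(state)
    return trajectory


TRUE_DRIFT = 1.05     # hypothetical real dynamics
LEARNED_DRIFT = 1.06  # hypothetical learned model, slightly off per step

truth = rollout(TRUE_DRIFT, 1.0, 20)
model = rollout(LEARNED_DRIFT, 1.0, 20)
errors = [abs(m - t) for m, t in zip(model, truth)]

# Compare the one-step error with the error after 20 self-fed steps.
print(f"step 1: {errors[1]:.4f}, step 20: {errors[20]:.4f}")
```

The one-step error is 0.01, but after 20 steps the gap is more than an order of magnitude larger, even though the model never gets "worse" at single-step prediction.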

How does this relate to current agent systems like AutoGPT or BabyAGI?

Most current agent systems operate at the L2 (Simulator) level, using language models as implicit world models. The framework suggests that advancing to L3 (Evolver) capability, where agents can revise their world model as conditions change, is the next frontier for robust autonomous agents.

Paper: Agentic World Modeling: A Survey of World Models for AI Agents