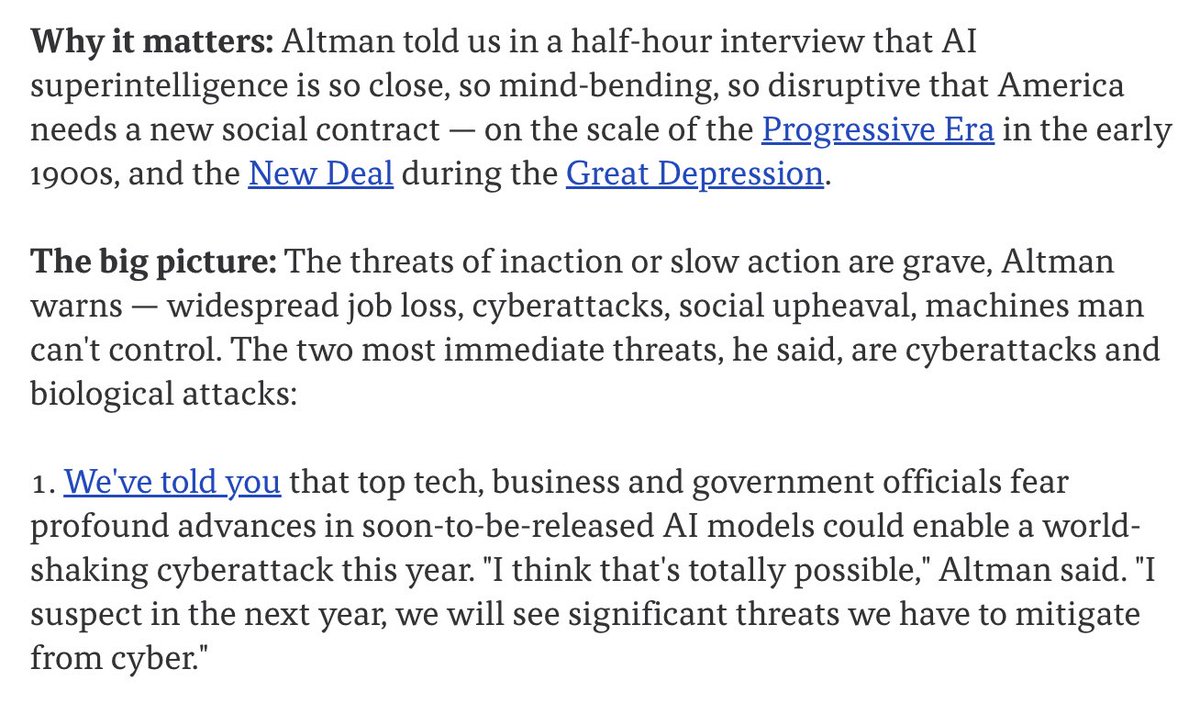

OpenAI CEO Sam Altman has drawn a stark historical parallel, stating that the current moment in artificial intelligence development feels "like early Feb 2020," when researchers at his company recognized the impending scale of the COVID-19 pandemic while most of society continued with business as usual.

In a recent statement, Altman recalled that OpenAI researchers at the time "saw COVID coming early, planned for remote work & got mocked as most people still acted like life was normal." He now applies this analogy to AI: "Now AI has already crossed key thresholds but society does not see it yet."

What Happened

Altman's comparison points to a perceived gap between technological reality and public awareness. While he didn't specify which "key thresholds" AI has crossed, the implication is that capabilities exist within research labs—particularly at OpenAI—that fundamentally change what's possible, yet this shift hasn't been widely recognized or integrated into societal planning.

The reference to February 2020 is particularly pointed. At that time, while some organizations and researchers were preparing for a major disruption, public discourse and government responses in many countries remained in a pre-pandemic mindset. Altman suggests a similar dynamic is unfolding with AI: insiders see transformative change coming, while broader systems operate on outdated assumptions.

Context

This isn't the first time Altman has warned about rapid, underappreciated AI advancement. However, framing it through the specific lens of COVID-19 preparedness, an event with globally understood consequences, represents a more urgent rhetorical strategy. It suggests he believes the coming AI transition could be similarly disruptive to daily life and economic structures, and that society is similarly underprepared.

The statement comes amid ongoing debates about AI safety, capability ceilings, and appropriate governance. Other AI leaders have offered timelines that range from immediate concern to decades-long horizons. Altman has consistently sat at the near-term end of that range, arguing that transformative impact is close.

gentic.news Analysis

Altman's analogy is strategically chosen for its emotional resonance and clarity. Everyone remembers the sudden, global shift of March 2020. By invoking that specific moment of realization—when abstract warnings became concrete reality—he's attempting to shortcut more abstract technical debates about AI capabilities. The message is less about specific benchmarks and more about psychological and institutional preparedness.

This follows a pattern of increasing urgency in Altman's public communications over the past year. Since OpenAI's leadership crisis in late 2023, where safety-focused board members were replaced, Altman has walked a tightrope: advocating for rapid development while calling for serious societal preparation. This statement leans heavily into the preparation side, perhaps responding to criticism that his company's pace outstrips safety planning.

The "key thresholds" language is deliberately vague but likely refers to internal metrics at OpenAI related to general reasoning, autonomous task execution, or economic productivity. We've previously covered how GPT-5 demonstrated unexpected multimodal reasoning leaps in late 2025, and how internal projections at major labs suggest AI could automate significant portions of cognitive work by 2027-2028. Altman's warning suggests those projections may be conservative, or that the foundational capabilities for such automation are already demonstrable in controlled environments.

What the analogy glosses over is the absence of a single trigger. COVID-19 was a single, identifiable pathogen with a defined transmission mechanism. AI advancement is a multifaceted, continuous process without one triggering event. Society may not experience a discrete "AI March 2020" moment, but rather a gradual accumulation of changes that eventually force recognition. Altman's warning might be most relevant for policymakers and corporate strategists who need to build flexible systems for a future whose exact contours are unclear but whose direction is increasingly certain.

Frequently Asked Questions

What thresholds has AI crossed according to Sam Altman?

Altman did not specify the exact thresholds in his statement. Based on context from other OpenAI communications and recent AI research, he is likely referring to capabilities in complex reasoning, long-horizon planning, or real-world task execution that were previously thought to be years away. These would be internal benchmarks demonstrating that AI can perform economically valuable cognitive work at a high level.

Is this comparison to COVID-19 accurate?

The analogy is more about the psychology of foresight and institutional preparedness than a direct equivalence between a pandemic and a technological shift. In early 2020, some organizations heeded early warnings and adapted their operations, giving them an advantage. Altman argues a similar dynamic is occurring with AI: those who recognize and prepare for its near-term impacts will be better positioned than those who dismiss the possibility of rapid change.

What should companies or individuals do in response to this warning?

For technical leaders, the implication is to audit which business processes or assumptions are based on the current limitations of AI. The pace of change in language models, agents, and multimodal systems suggests that workflows involving analysis, drafting, coding, or design could be augmented or automated sooner than expected. Developing internal expertise and pilot projects is a prudent step, rather than waiting for a market-wide shock.

How does this align with other AI leaders' views?

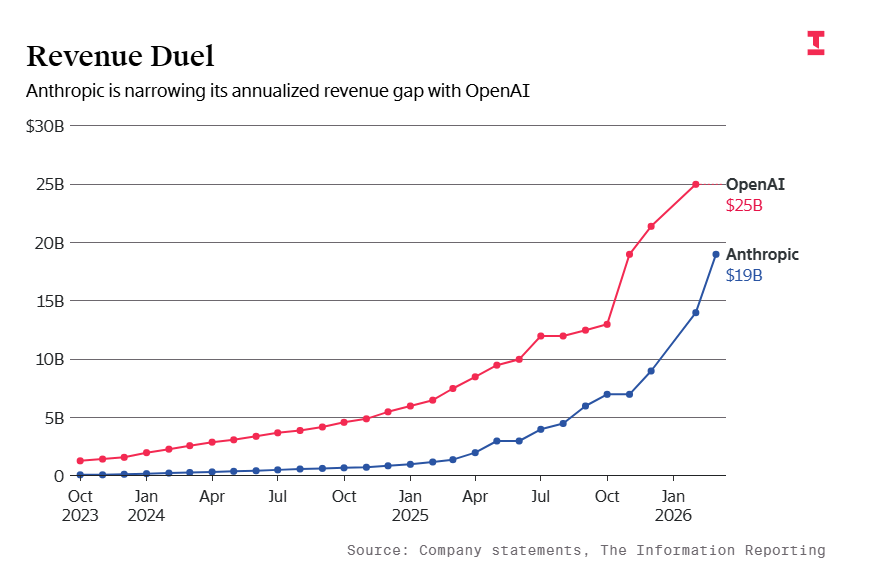

Views differ significantly. Some researchers at Anthropic and Google DeepMind have expressed concerns about rapid capability gains, while others in academia and industry believe progress will be more gradual and manageable. Altman's position is among the more urgent, though he typically focuses on societal adaptation rather than pausing development. The lack of consensus itself is a reason for organizations to develop their own informed perspectives.