World2Agent open-sourced a protocol standardizing real-world perception for AI agents. The protocol lets developers install sensors to feed environmental data directly into agentic workflows.

Key facts

- World2Agent open-sourced a perception protocol for AI agents.

- Protocol standardizes sensor-to-agent data flow.

- No adoption metrics or partner names disclosed.

- Competes with ROS 2, MQTT, and vendor SDKs.

- Announced via X on an unspecified date in 2026.

World2Agent just open-sourced a protocol that standardizes how AI agents perceive the real world. The announcement, posted on X by @rohanpaul_ai, describes a way to connect physical sensors to AI agents so they can ingest environmental data such as temperature, motion, or visual inputs through a unified interface.

The protocol targets the growing gap between digital AI agents and physical environments. As agents increasingly control robots, drones, and IoT devices, a standardized perception layer becomes critical for interoperability. World2Agent's approach lets developers "install a sensor" and have agents access its data without custom integration work (according to @rohanpaul_ai).
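The announcement does not describe the protocol's actual interface, so what follows is only a hypothetical Python sketch of the general idea behind a unified perception layer. Each sensor is registered once and every reading is normalized to a common shape, so an agent queries by data kind rather than by device. The names `Reading`, `PerceptionRegistry`, `install`, and `observe` are invented for illustration and are not World2Agent's API.

```python
# Hypothetical sketch of a unified sensor-to-agent perception layer.
# NOT World2Agent's API: all names here are invented for illustration.
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Reading:
    """One normalized observation; every sensor emits this same shape."""
    sensor_id: str
    kind: str         # e.g. "temperature", "motion", "image"
    value: Any
    unit: str
    timestamp: float  # seconds since the epoch, taken at observation time


class PerceptionRegistry:
    """Sensors are installed once; agents then query readings by kind,
    without any sensor-specific integration code."""

    def __init__(self) -> None:
        # sensor_id -> (kind, unit, zero-argument function that reads the device)
        self._sensors: Dict[str, Tuple[str, str, Callable[[], Any]]] = {}

    def install(self, sensor_id: str, kind: str, unit: str,
                read_fn: Callable[[], Any]) -> None:
        """Register a sensor; IDs must be unique."""
        if sensor_id in self._sensors:
            raise ValueError(f"sensor {sensor_id!r} is already installed")
        self._sensors[sensor_id] = (kind, unit, read_fn)

    def observe(self, kind: Optional[str] = None) -> List[Reading]:
        """Poll every installed sensor (optionally only one kind) and
        return normalized readings sharing a single timestamp."""
        now = time.time()
        return [
            Reading(sid, k, read_fn(), unit, now)
            for sid, (k, unit, read_fn) in self._sensors.items()
            if kind is None or k == kind
        ]


# Usage: stub lambdas stand in for real device drivers.
registry = PerceptionRegistry()
registry.install("thermo-1", "temperature", "celsius", lambda: 21.5)
registry.install("pir-1", "motion", "bool", lambda: True)
print([r.value for r in registry.observe("temperature")])  # [21.5]
```

The point of the sketch is that integration cost shrinks to a single `install` call per sensor. Everything a real protocol would also need, such as transport, device discovery, latency guarantees, and authentication, is deliberately left out.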

Why This Matters

This is an infrastructure play rather than a product launch. By open-sourcing the protocol, World2Agent is positioning itself as a standard-setter for agent-environment communication, much as MQTT standardized IoT messaging and Anthropic's Model Context Protocol (MCP) standardized tool access for LLMs. The protocol could become a Rosetta Stone for agentic perception, but only if hardware vendors and agent frameworks implement it.

No adoption metrics, pricing, or partner names were disclosed in the announcement. The source tweet is thin on technical details: it includes no GitHub repo link, API spec, or sensor compatibility list. Community response on X remains speculative, with some users comparing the protocol to ROS 2 and others likening it to an OpenAPI for sensors.

The Competitive Landscape

World2Agent enters a fragmented space. Existing solutions like ROS 2 for robotics, MQTT for IoT, and various vendor SDKs (Bosch, Texas Instruments) each solve parts of the problem but lack a universal agent-facing standard. If World2Agent's protocol gains traction, it could reduce integration costs for developers building multi-sensor agent systems, especially in warehouse automation, smart buildings, and autonomous vehicles.

What's Missing

The announcement lacks specifics: sensor types supported, latency benchmarks, security model, and whether it works with cloud-based or edge agents. Without these, the protocol remains a concept rather than a deployable tool. Developers should watch for a GitHub release or technical whitepaper in the coming weeks.

Key Takeaways

- World2Agent open-sourced a protocol to standardize how AI agents perceive the real world via sensors.

- No adoption metrics or technical details were disclosed.

What to watch

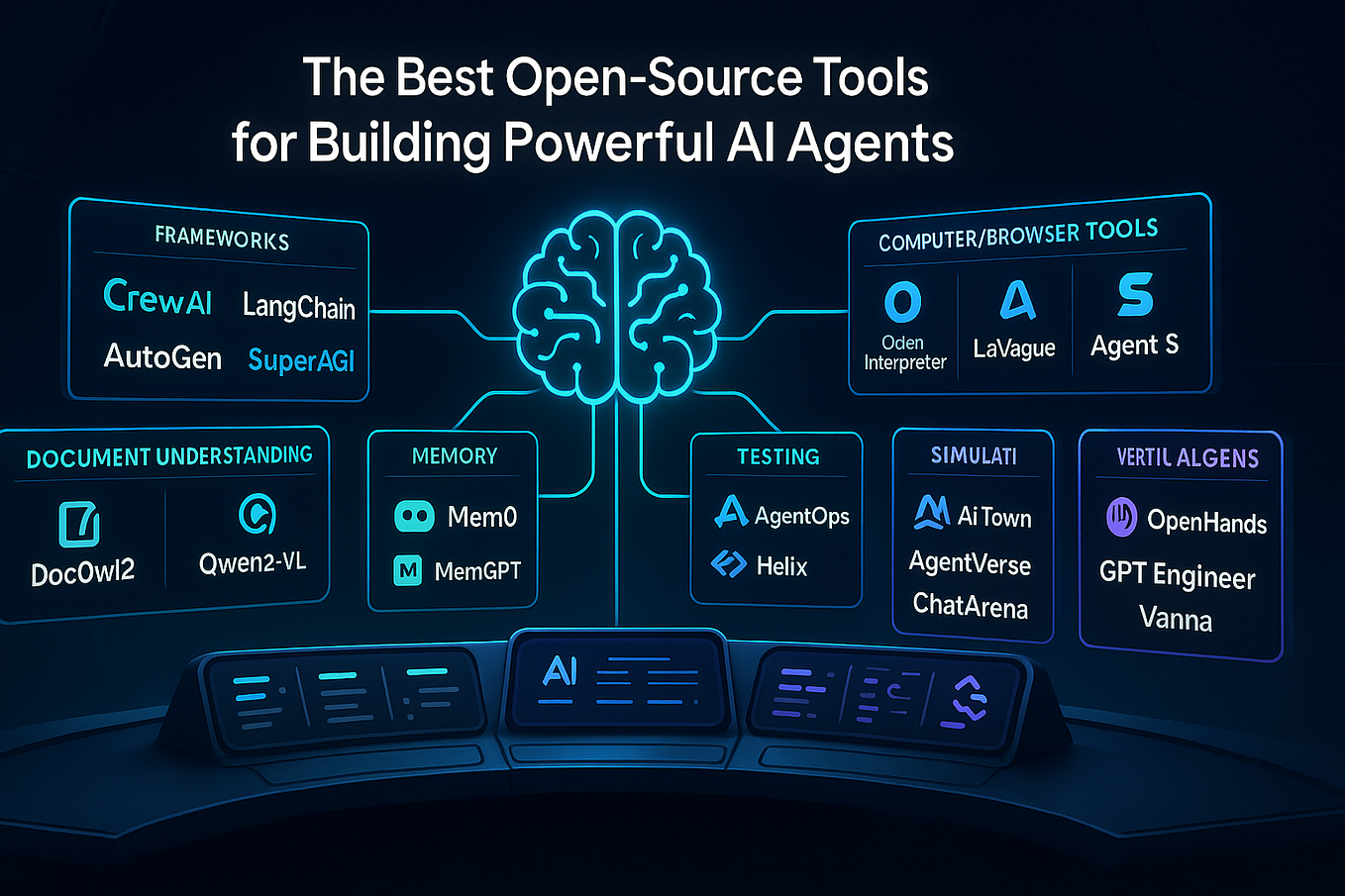

Watch for a GitHub repository release or technical whitepaper from World2Agent detailing supported sensors, latency, and security. Also monitor whether agent frameworks such as LangChain or CrewAI integrate the protocol; that would signal real adoption.