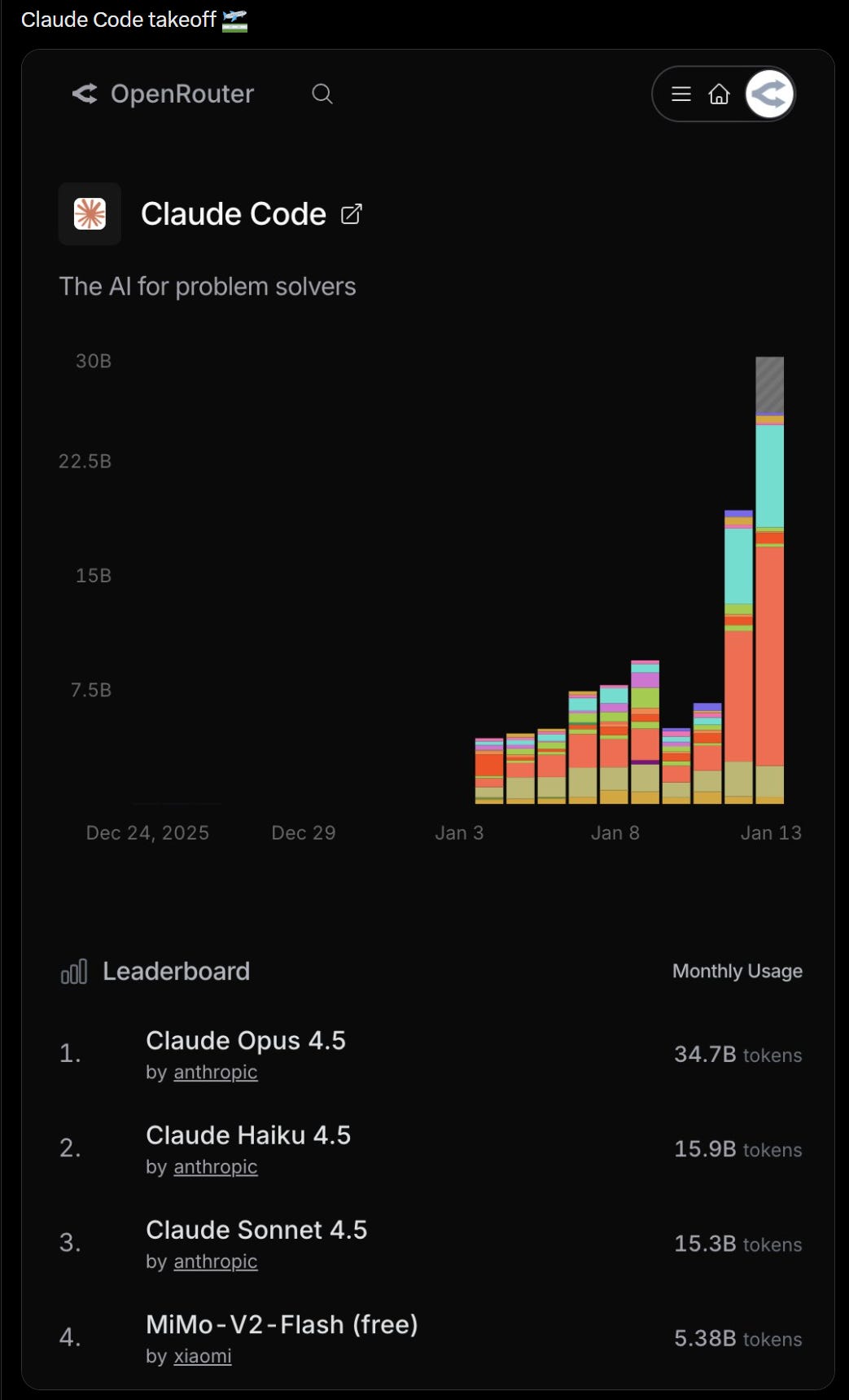

A new study revisiting a classic economics experiment has found that AI agents produce research results with dramatically less disagreement than human teams. When given the same dataset, AI models like Claude Code and Codex landed near the median of human economist responses but with a far tighter dispersion and no extreme outliers.

Key Takeaways

- A new study finds AI agents like Claude Code and Codex produce economic analyses with far less disagreement than human teams, landing near the human median but with no extreme outliers.

- The tight clustering of AI results points to AI's potential for scalable, consistent research support.

What Happened

The original 2015 study, "Economics: Is Reproducibility Practical?" published in Science, gave 146 teams of economists the same dataset and research question. The results showed wild variation in analytical choices, model specifications, and final conclusions, highlighting the subjective nature of economic research.

The new paper, referenced by researcher Ethan Mollick, reran this experiment using "agentic AI": AI systems capable of performing multi-step research tasks. The AI agents were given the same prompt and data as the human teams.

Key Findings

The core finding is one of consistency over creativity. While the AI-generated analyses clustered around the human median result, the key difference was in the spread:

- Human Teams: Produced a wide range of answers, with significant extremes on both ends of the spectrum.

- AI Agents (Claude Code, Codex): Produced answers with "far tighter dispersion" and "no extremes."

This suggests AI doesn't necessarily find a "correct" answer that eludes humans, but rather converges on a narrow band of reasonable, consensus-like interpretations.
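To make "near the median, but with tighter dispersion" concrete, here is a minimal Python sketch of how two sets of estimates could be compared. The numbers are hypothetical and invented purely for illustration; they are not taken from either study, and the `spread_summary` helper is an assumed name, not something the paper defines.

```python
from statistics import median, quantiles


def spread_summary(estimates):
    """Return the median, interquartile range, and full range of a list of estimates."""
    q1, _, q3 = quantiles(estimates, n=4)
    return {
        "median": median(estimates),
        "iqr": q3 - q1,
        "range": max(estimates) - min(estimates),
    }


# HYPOTHETICAL effect estimates, invented for illustration only.
# The "human" list has extreme values at both ends; the "AI" list does not.
human_estimates = [-0.40, -0.05, 0.02, 0.08, 0.10, 0.12, 0.15, 0.22, 0.35, 0.90]
ai_estimates = [0.07, 0.09, 0.10, 0.10, 0.11, 0.11, 0.12, 0.13]

human = spread_summary(human_estimates)
ai = spread_summary(ai_estimates)

for label, summary in (("human", human), ("ai", ai)):
    print(
        f"{label}: median={summary['median']:.3f} "
        f"iqr={summary['iqr']:.3f} range={summary['range']:.3f}"
    )
```

In this toy data the two medians are close while the AI interquartile range and full range are much smaller, which is the pattern the study describes. The median and interquartile range are used here because they are not dragged around by a few extreme values.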

Context & Implications

The original study was a landmark in the reproducibility crisis discussion, showing how researcher degrees of freedom lead to inconsistent findings even with identical starting points. The AI result points to a different kind of tool: not an oracle, but a consistency engine.
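A toy Python sketch can show how researcher degrees of freedom produce different answers from identical data. The dataset and the three analytic choices below are hypothetical, invented for illustration, and far simpler than anything in the original study.

```python
from statistics import mean, median

# HYPOTHETICAL outcome data for two groups, invented for illustration only.
treated = [12.0, 14.0, 15.0, 13.0, 16.0, 48.0]  # contains one unusually large value
control = [11.0, 12.0, 13.0, 12.0, 14.0, 13.0]


def drop_max(values):
    """Drop the single largest observation (one possible outlier rule among many)."""
    return sorted(values)[:-1]


# Three defensible ways to answer "how much higher is the treated group?"
specifications = {
    "difference in means, all data": lambda t, c: mean(t) - mean(c),
    "difference in means, largest value dropped": lambda t, c: mean(drop_max(t)) - mean(drop_max(c)),
    "difference in medians, all data": lambda t, c: median(t) - median(c),
}

estimates = {name: spec(treated, control) for name, spec in specifications.items()}

for name, value in estimates.items():
    print(f"{name}: {value:.2f}")
```

Each specification is a reasonable choice on its own, yet the estimated effect differs several-fold depending on which one an analyst picks. Multiply that across data cleaning, model form, and statistical technique, and wide disagreement between teams becomes unsurprising.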

Mollick notes this "suggests that AI is now useful for doing scalable research." The value proposition isn't that AI is smarter than top economists, but that it can perform competent, repeatable analysis at scale without the high variance of human judgment.

gentic.news Analysis

This study touches a nerve in the ongoing evolution of AI from a curiosity to a research tool. We've moved past the phase of asking if AI can do research (see our coverage of AI-assisted systematic reviews) to examining how it does it differently. The lack of extreme outliers is particularly telling. Human researchers are incentivized to find novel, publishable results, which can push analyses toward the edges. AI, trained on the corpus of existing literature, naturally regresses toward the methodological mean.

This aligns with a trend we noted in our analysis of Claude 3.5 Sonnet's coding capabilities: AI excels at producing competent, conventional solutions faster and more consistently than humans, but breakthrough innovation remains a human forte. For economic policy or business analysis where consistency and scalability matter more than novelty, this AI characteristic is a feature, not a bug.

However, this consistency comes with a caveat: it could calcify methodological consensus. If all AI tools are trained on similar data and converge on similar techniques, we risk enshrining current best practices as permanent, potentially slowing methodological innovation. The next phase of this research should involve deliberately prompting AI to generate divergent analyses to see if it can simulate the creative friction that drives science forward.

Frequently Asked Questions

What was the original economics study about?

The 2015 study, published in Science, gave 146 teams of professional economists the same dataset and research question to analyze. Despite identical starting points, the teams produced wildly different results due to subjective choices in data cleaning, model specification, and statistical technique, highlighting the reproducibility challenges in social science.

Which AI models were tested in the new study?

The new paper specifically mentions testing "Claude Code" and "Codex." Claude Code is Anthropic's agentic coding tool built on its Claude models, while Codex is OpenAI's coding agent; the Codex name was also used for the earlier OpenAI model that originally powered GitHub Copilot. Both can take natural language prompts, write and run code, and produce analytical outputs.

Does this mean AI is better at economic research than humans?

Not necessarily. The AI agents landed near the median of human responses, not necessarily the best or most accurate ones. The key finding is about consistency, not superiority. AI produced tightly clustered results without extreme outliers, making it potentially valuable for scalable, standardized analysis where reducing variance is more important than chasing novel insights.

What does "agentic AI" mean in this context?

"Agentic AI" refers to AI systems that can perform multi-step tasks autonomously, such as reading a research prompt, accessing and cleaning a dataset, selecting appropriate analytical methods, running the analysis, and interpreting the results. This goes beyond simple text generation to encompass a workflow that mimics human research activity.