What Happened

A viral post by analyst George Pu (@TheGeorgePu) has crystallized a growing concern in AI circles: the pricing of frontier AI models is creating a widening global access gap. Pu's analysis shows that while $200/month represents just 0.3% of median monthly income in the United States, it balloons to 5% in the Philippines, 6% in India, and approaches 15% in Nigeria.

Both Anthropic and OpenAI have recently pushed users toward $200/month tiers — Anthropic's Max plan and OpenAI's Pro tier — arguing that the $20/month consumer plans don't cover the cost of frontier model inference. Pu's thread, which has garnered significant engagement, points out that even the "escape hatch" of self-hosting is increasingly out of reach: a Mac Studio capable of running local models costs more than many people's annual salary in developing economies.

The Numbers

| Country | Approx. median income (USD) | $200/month as share of income |
| --- | --- | --- |
| United States | ~$66,667 | 0.3% |
| Philippines | ~$4,000 | 5% |
| India | ~$3,333 | 6% |
| Nigeria | ~$1,333 | 15% |

These figures are rough approximations based on World Bank and national statistics data, but the order-of-magnitude differences are clear. For a Nigerian knowledge worker, a $200/month AI subscription would consume roughly 15% of gross income, or about one-seventh.
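The percentages above can be reproduced by dividing the $200 price by each income figure. The sketch below takes the listed income figures at face value; the function and variable names are illustrative and not from Pu's thread.

```python
SUBSCRIPTION_USD = 200  # price of the top consumer tier cited in the article

# Approximate income figures exactly as listed above (USD).
income_usd = {
    "United States": 66_667,
    "Philippines": 4_000,
    "India": 3_333,
    "Nigeria": 1_333,
}


def share_of_income(price: float, income: float) -> float:
    """Return `price` as a percentage of `income`."""
    return 100.0 * price / income


# Percentage of the listed income that one $200 payment represents.
shares = {
    country: share_of_income(SUBSCRIPTION_USD, income)
    for country, income in income_usd.items()
}

for country, pct in shares.items():
    print(f"{country:<14} {pct:5.1f}%")
```

Rounded to one decimal place, the results match the 0.3%, 5%, 6%, and 15% shares quoted above, so the quoted percentages and income figures are at least arithmetically consistent with each other.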

The Frontier Cost Problem

The core tension is simple: frontier AI models are expensive to run. Inference costs for models like GPT-4o, Claude Opus, and Gemini Ultra remain high due to their size and the computational resources required. Both Anthropic and OpenAI have been transparent that their $20/month tiers are not profitable for heavy users.

However, the pricing structure creates a bifurcated market:

- Wealthy users in developed economies can access cutting-edge AI for a trivial fraction of income

- Price-sensitive users globally are priced out of frontier capabilities

- Self-hosting requires capital expenditure on hardware that may exceed annual income

This isn't just about consumer pricing. Many AI applications in healthcare, education, and agriculture, the sectors where developing economies need the most help, require the frontier-level reasoning that the $200/month tiers provide.

Industry Context

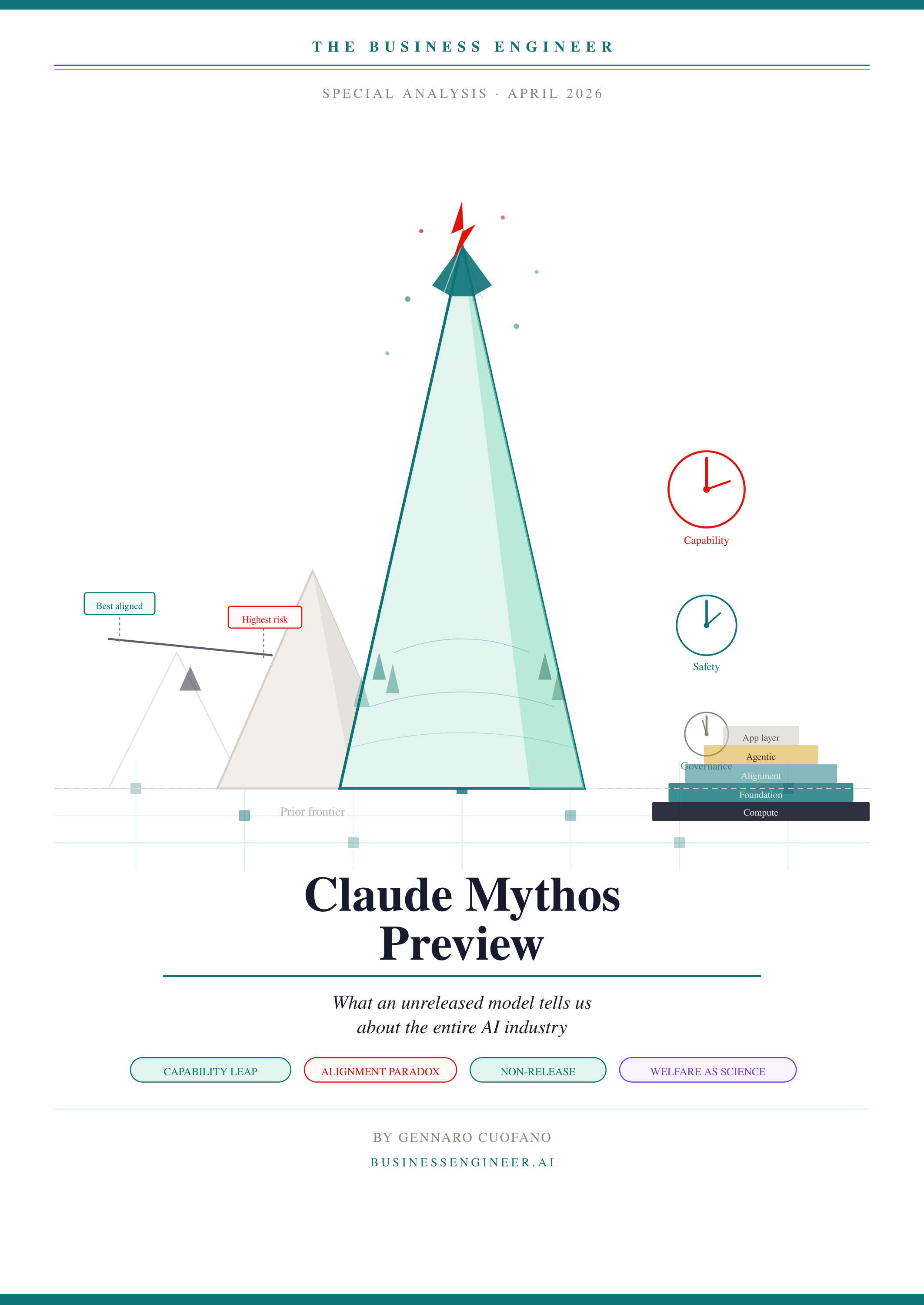

The pricing landscape has shifted rapidly since early 2023:

- OpenAI made GPT-4 available through its $20/month ChatGPT Plus plan in early 2023, then introduced a $200/month Pro tier in late 2024

- Anthropic followed with Claude Pro at $20/month and Claude Max at $200/month in early 2025

- Google offers Gemini Advanced at $20/month as part of Google One, with no $200 tier yet

- Meta continues to release open-weight models (Llama 3, Llama 4) that can be self-hosted, but require significant hardware

The open-weight alternative from Meta provides some relief, but running Llama 4-class models locally demands hardware (e.g., Mac Studio with 128GB+ unified memory, or multi-GPU setups) that costs $4,000–$10,000 — prohibitive for most individuals globally.

What This Means in Practice

For AI practitioners and businesses in developing economies, the calculus is stark:

- A Nigerian startup paying $200/user/month for 10 employees would spend $24,000/year — potentially exceeding the entire software budget

- A Philippine university wanting to provide frontier AI access to 100 students would face $240,000/year in subscription costs

- Self-hosting a frontier-class model requires capital expenditure of $10,000+ for a single-user workstation
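The subscription figures in this list follow from a flat price per seat multiplied out over a year. A minimal sketch of that arithmetic, using only the numbers quoted above (the helper names are hypothetical, and the hardware comparison ignores electricity, maintenance, and resale value):

```python
MONTHS_PER_YEAR = 12


def annual_subscription_cost(price_per_seat_month: float, seats: int) -> float:
    """Yearly cost of `seats` subscriptions at a flat monthly price."""
    return price_per_seat_month * seats * MONTHS_PER_YEAR


def months_of_subscription(hardware_usd: float, price_per_month: float) -> float:
    """Number of months of one subscription that a hardware purchase equals."""
    return hardware_usd / price_per_month


startup_cost = annual_subscription_cost(200, seats=10)
university_cost = annual_subscription_cost(200, seats=100)
workstation_months = months_of_subscription(10_000, 200)

print(f"Startup, 10 seats:      ${startup_cost:,.0f}/year")
print(f"University, 100 seats:  ${university_cost:,.0f}/year")
print(f"$10,000 workstation:    {workstation_months:.0f} months of one $200 plan")
```

On these assumptions, a $10,000 workstation costs as much as 50 months of a single $200 subscription, which is why neither route is cheap for a buyer with limited capital.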

The alternative — using smaller, cheaper models — means accepting lower capability. For tasks requiring complex reasoning, code generation, or multimodal understanding, the gap between frontier and mid-tier models remains significant.

Broader Implications

This pricing dynamic has several downstream effects:

Talent migration: Knowledge workers in developing economies may be at a competitive disadvantage if they cannot access the same AI tools as their counterparts in wealthier countries

Research inequality: AI research institutions in developing economies face higher barriers to frontier model access, potentially concentrating AI progress in wealthy nations

Market distortion: AI companies optimize for the highest-value users (enterprises in developed economies), potentially neglecting use cases specific to developing markets

Open-source pressure: The pricing gap increases demand for truly capable open-weight models that can run on consumer hardware — a bar that Meta's Llama series and Mistral's models are approaching but haven't fully met

gentic.news Analysis

This analysis from George Pu crystallizes a concern that has been building for months. We've previously covered Anthropic's pricing strategy shifts and the broader trend of AI companies moving toward usage-based and tiered pricing models. The fundamental issue is that frontier AI inference is genuinely expensive — estimates suggest GPT-4-class models cost $0.03–$0.06 per 1K tokens to run, meaning a heavy user consuming millions of tokens per month can easily exceed $200 in compute costs alone.
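To make the "millions of tokens" claim concrete, the sketch below works out how many tokens a user would need to consume before compute cost reaches a given subscription price. It assumes the $0.03 to $0.06 per 1K tokens estimate quoted above is a flat rate, which is a simplification; the function name is illustrative.

```python
def tokens_for_budget(budget_usd: float, cost_per_1k_tokens: float) -> float:
    """Tokens that can be served before compute cost reaches `budget_usd`."""
    return budget_usd / cost_per_1k_tokens * 1000


# Estimated cost range per 1K tokens, as quoted in the article.
LOW_COST, HIGH_COST = 0.03, 0.06

for plan_price in (20, 200):
    at_high_cost = tokens_for_budget(plan_price, HIGH_COST)
    at_low_cost = tokens_for_budget(plan_price, LOW_COST)
    print(
        f"${plan_price}/month covers roughly "
        f"{at_high_cost / 1e6:.2f}M to {at_low_cost / 1e6:.2f}M tokens"
    )
```

Under these assumptions the $20 plan stops covering compute somewhere between about a third and two-thirds of a million tokens per month, while the $200 plan breaks even between roughly 3.3 and 6.7 million tokens.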

But the market dynamics here are more complex than simple economics. AI companies are currently in a land-grab phase, prioritizing user acquisition over profitability. The $20/month tier acts as a loss leader — capturing users who may upgrade to $200/month as they become dependent on the tools. This strategy works in wealthy markets but creates an access cliff in developing ones.

The comparison to self-hosting is particularly revealing. A Mac Studio M2 Ultra with 192GB unified memory costs $8,999, roughly 7 months of median Nigerian income based on the ~$1,333 figure cited earlier. Even if it could run a frontier-class model (and current frontier models need more memory than 192GB provides), the capital cost is prohibitive. The "just self-host" argument, while technically valid, ignores the reality of global income inequality.
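The "7 months" figure can be checked with one division. Note the assumption this sketch makes explicit: it treats the ~$1,333 Nigerian income figure from the table as a monthly amount, which is what the 7-month claim implies. The names are illustrative.

```python
MAC_STUDIO_USD = 8_999           # hardware price quoted in the article
NIGERIA_MONTHLY_INCOME = 1_333   # assumption: the table figure read as monthly income


def months_of_income(price_usd: float, monthly_income_usd: float) -> float:
    """How many months of gross income a purchase represents."""
    return price_usd / monthly_income_usd


months = months_of_income(MAC_STUDIO_USD, NIGERIA_MONTHLY_INCOME)
print(f"Mac Studio = {months:.1f} months of income")
```

The result is a little under seven months of gross income, before accounting for living costs, taxes, or import duties.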

This pattern mirrors earlier technology access gaps — from mobile phones to cloud computing — but the stakes are higher with AI. Unlike previous technologies, frontier AI represents a qualitative leap in capability that may compound inequality: those who can afford it gain disproportionate productivity advantages, while those who cannot fall further behind.

Frequently Asked Questions

Why are AI companies charging $200/month?

Both Anthropic and OpenAI have stated that the $20/month consumer plans are not profitable for heavy users. Frontier model inference requires significant computational resources — estimates suggest GPT-4-class models cost $0.03–$0.06 per 1K tokens to run. Heavy users consuming millions of tokens monthly can cost the provider more than $20 in compute alone. The $200/month tier aligns pricing more closely with actual usage costs.

Can I get frontier AI capabilities for free or cheaper?

Several options exist but with trade-offs. Meta's open-weight Llama 3 and 4 models can be self-hosted on capable hardware (expect $4,000+ for a suitable machine). Smaller providers like Mistral offer competitive models at lower prices. Google's Gemini Advanced at $20/month includes some frontier capabilities. However, for the most capable models (GPT-4o, Claude Opus), there are currently no free or significantly cheaper alternatives.

How does this compare to previous technology access gaps?

The pattern is similar to earlier disparities in internet access, mobile phone adoption, and cloud computing — but the gap may be more consequential. AI tools directly enhance cognitive work and productivity. A knowledge worker with access to frontier AI may be 2-5x more productive in coding, analysis, and content creation tasks compared to one without. This compounds existing educational and economic inequalities.

Will AI pricing decrease over time?

Historical trends suggest inference costs will decrease as hardware improves and models become more efficient. However, frontier models are also becoming more capable and larger, which may offset efficiency gains. The current trajectory points to a tiered market: cheap or free access to capable but not frontier models, with premium pricing for the most advanced capabilities. This is similar to the GPU market, where high-end hardware remains expensive even as mid-range options become more affordable.