What's New

A new OpenAI model, reportedly called Spud (GPT-5.5), signals a strategic shift in AI development. According to a post by @kimmonismus, AI labs are moving away from relying on long chains of reasoning tokens (test-time compute) and instead focusing on making models smarter during pretraining.

Spud is described as smarter "out of the box" from pretraining, requiring fewer tokens for good answers. This means faster responses and lower costs per query, as it doesn't need to burn through extensive reasoning chains. The post also mentions Anthropic's Mythos as a similar model reflecting this trend.

Technical Details

While specific architecture details are not disclosed, the core idea is straightforward: instead of making models think harder at inference time (like OpenAI's o1 or o3), Spud is designed to be more capable from the start. This approach reduces latency and computational overhead, making it more efficient for real-world applications.

Fewer tokens per query directly translates to lower operational costs and faster response times, which is critical for scaling AI deployment.

How It Compares

This contrasts with recent trends in the industry, where models like OpenAI's o1 and DeepSeek-R1 rely heavily on chain-of-thought reasoning to improve accuracy. Spud's approach suggests a return to pretraining-focused innovation, potentially offering a more efficient path to better performance.

What to Watch

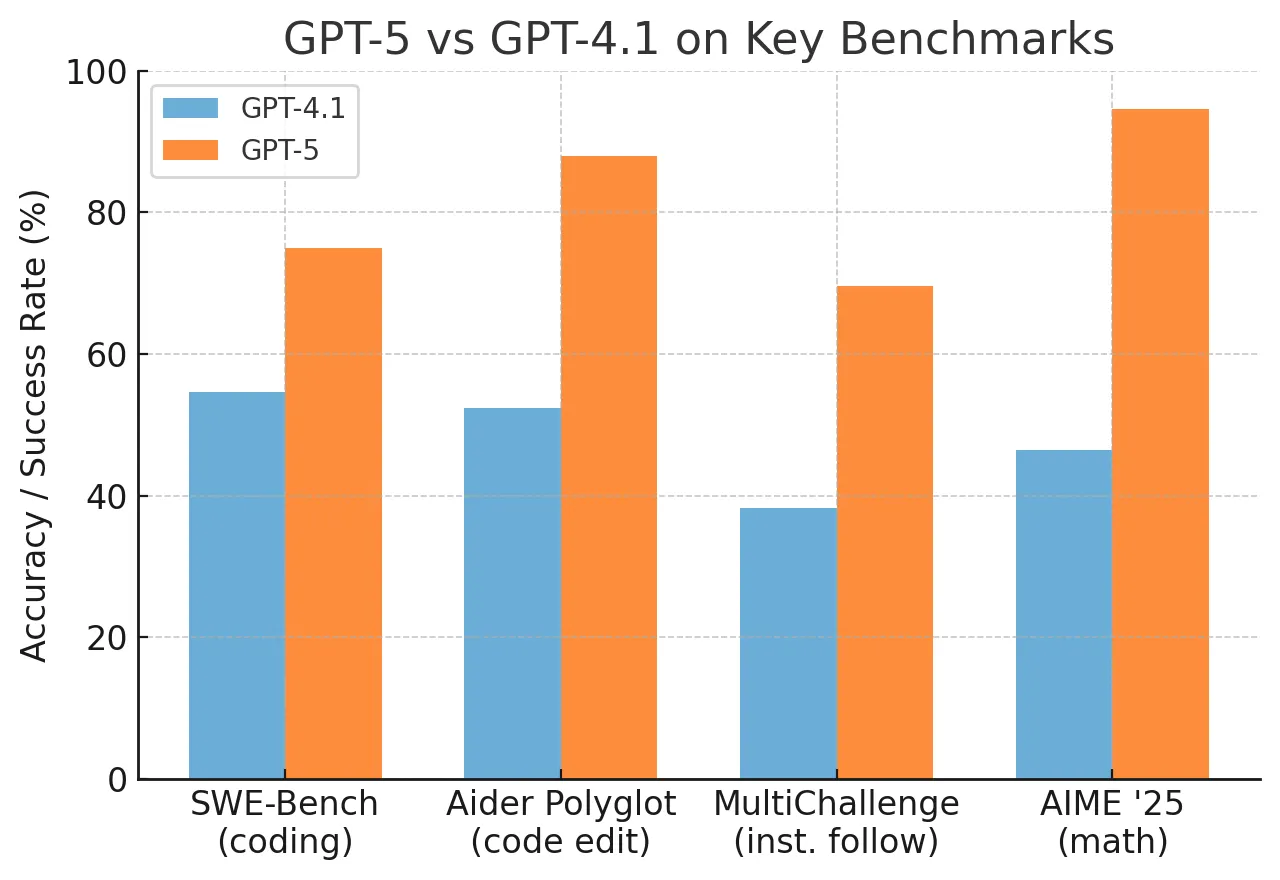

- Benchmarks: We need to see actual performance numbers to evaluate if this approach matches or exceeds reasoning-heavy models.

- Caveats: The source is a single post; official details from OpenAI are pending.

- Implications: If successful, this could reshape the cost structure of AI services, making advanced models more accessible.

gentic.news Analysis

This development reflects a broader industry trend of optimizing for efficiency. After the release of reasoning-heavy models like o1 and DeepSeek-R1, which showed impressive gains but at high computational costs, labs are now exploring pretraining improvements as a complementary path.

Our coverage of DeepSeek-R1's 79.8% on SWE-Bench Verified highlighted the power of test-time compute, but also noted the token overhead. Spud's approach could be seen as a response to that limitation, aiming for similar gains without the cost.

This also ties into the ongoing competition between OpenAI and Anthropic, with both reportedly working on models that prioritize pretraining. The mention of Anthropic's Mythos suggests this is a shared strategic direction, not an isolated experiment.

Frequently Asked Questions

What is GPT-5.5 Spud?

Spud is a rumored new model from OpenAI that focuses on improving performance through pretraining rather than relying on long chains of reasoning tokens at inference time.

How does Spud differ from GPT-5?

While GPT-5 likely uses reasoning-heavy approaches (like chain-of-thought), Spud is designed to be smarter from the start, requiring fewer tokens for good answers.

Why does fewer tokens matter?

Fewer tokens per query means faster response times and lower operational costs, making the model cheaper to run and more scalable for applications.

Is this confirmed by OpenAI?

No, this information comes from a post by @kimmonismus and has not been officially confirmed by OpenAI.