A recent analysis of Anthropic's rollout of its "adaptive thinking" feature posits that the development is less about user demand and more about a fundamental strategic and infrastructural challenge: a shortage of compute. The feature, which allows Claude models to dynamically allocate reasoning tokens per request, is framed as Anthropic's answer to OpenAI's model-routing system for GPT-5, but with a critical twist driven by resource limitations.

What's New: Dynamic Reasoning, Not Dynamic Models

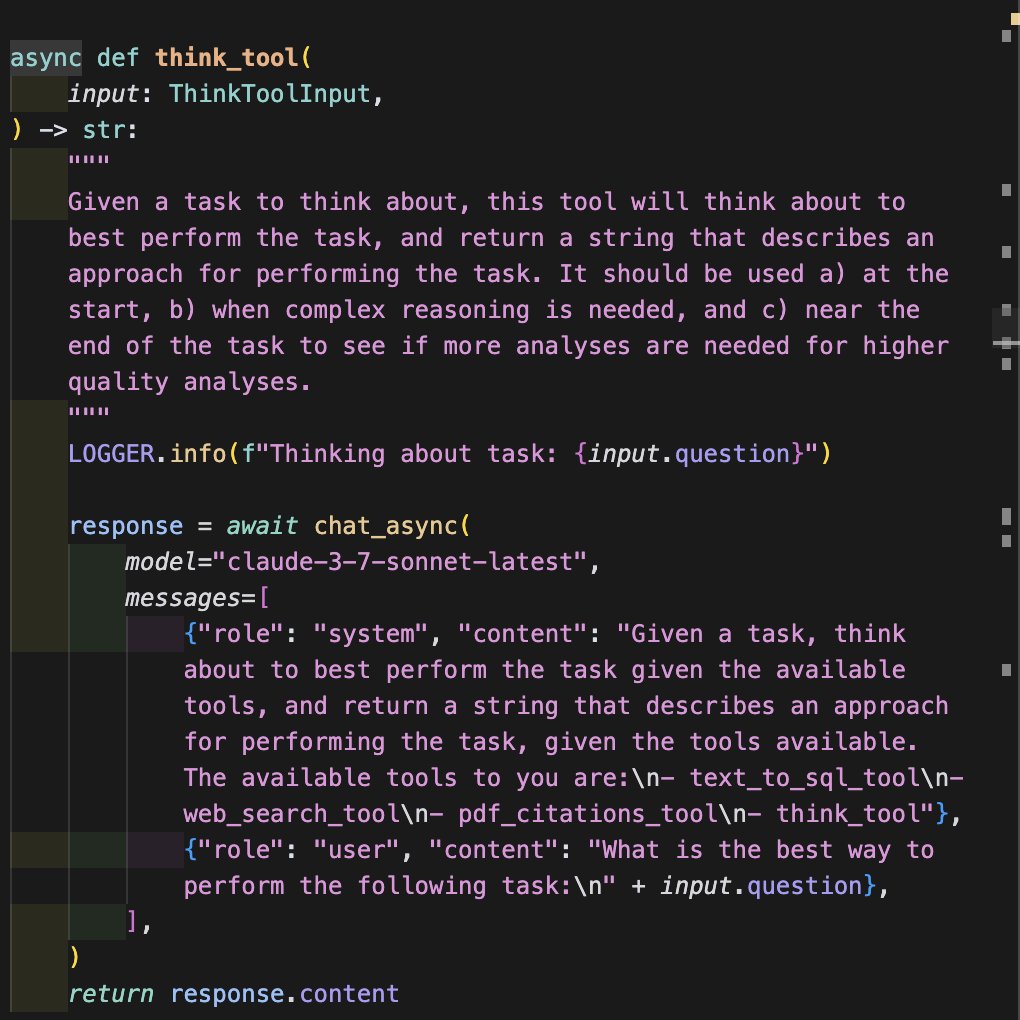

Unlike OpenAI's approach, which reportedly routes simple queries to a faster "Instant" model and complex ones to a more capable "Thinking" model, Anthropic's adaptive thinking keeps the user on a single model instance. That model decides internally how much reasoning to do on a given prompt, and therefore how many reasoning tokens to spend. The stated goal is operational efficiency: using just enough compute to answer a query satisfactorily, thereby maximizing throughput from a finite pool of GPU resources.
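To make the contrast concrete, here is a minimal toy sketch in Python. Nothing in it reflects either company's actual implementation: the difficulty heuristic, the 8,000-token ceiling, the model labels, and every function name are assumptions made up for illustration. It compares a two-model router with a single model that scales its reasoning budget, and counts how many requests fit into a fixed token pool.

```python
from dataclasses import dataclass
from typing import Sequence

# Hypothetical ceiling on reasoning tokens per request (illustrative only).
MAX_REASONING_TOKENS = 8000


@dataclass
class Plan:
    model: str             # which (hypothetical) model serves the request
    reasoning_tokens: int  # reasoning budget assigned to the request


def estimate_difficulty_pct(prompt: str) -> int:
    """Stand-in heuristic: score 0-100, where longer prompts count as harder.

    A real system would not use word count; this only gives the toy
    example a deterministic signal to work with.
    """
    return min(len(prompt.split()) * 2, 100)


def route_between_models(prompt: str) -> Plan:
    """Router style: choose one of two separate models with fixed budgets."""
    if estimate_difficulty_pct(prompt) < 50:
        return Plan(model="instant", reasoning_tokens=0)
    return Plan(model="thinking", reasoning_tokens=MAX_REASONING_TOKENS)


def adapt_within_model(prompt: str) -> Plan:
    """Adaptive style: one model, budget scaled with estimated difficulty."""
    budget = MAX_REASONING_TOKENS * estimate_difficulty_pct(prompt) // 100
    return Plan(model="single-model", reasoning_tokens=budget)


def requests_served(token_pool: int, plans: Sequence[Plan]) -> int:
    """Count how many plans, taken in order, fit into a fixed token pool."""
    served = 0
    for plan in plans:
        if plan.reasoning_tokens > token_pool:
            break
        token_pool -= plan.reasoning_tokens
        served += 1
    return served
```

In this sketch the router makes a binary choice while the adaptive function produces a continuous budget. Under these made-up numbers, a pool that covers only one request at the full ceiling covers several requests once simple prompts are given smaller budgets, which is the throughput argument in miniature.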

The Strategic Context: Enterprise Focus and Compute Pressure

The analysis connects this technical feature directly to business realities. Anthropic has consistently positioned itself as a leader in the enterprise and business sectors, where reliability and predictable costs are paramount. However, the consumer-facing side of its API has been plagued by frequent "rate limit exhausted" errors, which compares poorly in public perception with competitors that more often raise or reset usage caps.

This tension came to a head with the Claude 3.5 Sonnet release. According to the analysis, the underlying driver is an assessment attributed to a leaked OpenAI memo: that Anthropic faces a "significant shortage of compute" and miscalculated its procurement needs. On this reading, adaptive thinking is less a user-facing innovation than an infrastructural lever to stretch existing compute further, particularly for the high-value enterprise workloads that are Anthropic's profit center.

The Quality Trade-off and Industry Parallels

The initial implementation has raised concerns about consistent output quality, mirroring the early user experience with OpenAI's automated routing. Users reported OpenAI's system initially felt like a "bug," leading to the later introduction of a manual toggle for extended reasoning. The analysis expresses hope that Anthropic will follow a similar path, offering users more control, as purely automated efficiency gains can come at the cost of predictable performance.
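As a rough sketch of what "more control" could look like, the Python function below combines an automatically chosen reasoning budget with an optional user-set floor. It is purely hypothetical: the function name, its parameters, and the 8,000-token ceiling are assumptions for illustration, not a description of how either vendor's toggle works.

```python
from typing import Optional


def resolve_reasoning_budget(
    auto_budget: int,
    user_minimum: Optional[int] = None,
    ceiling: int = 8000,
) -> int:
    """Combine an automatic budget with an optional user floor.

    auto_budget:  tokens the (hypothetical) model chose on its own
    user_minimum: floor requested by the user, or None for fully automatic
    ceiling:      hard cap imposed by the (hypothetical) provider
    """
    if auto_budget < 0 or ceiling < 0:
        raise ValueError("budgets must be non-negative")
    floor = 0 if user_minimum is None else max(user_minimum, 0)
    # Raise the automatic choice to the user's floor, then apply the cap.
    return min(max(auto_budget, floor), ceiling)
```

With no floor set, the automatic choice passes through unchanged, preserving the efficiency gain. With a floor, the user trades some of that efficiency for more predictable depth, which is the trade-off described above.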

gentic.news Analysis

This analysis aligns with a persistent trend we've tracked: the growing primacy of compute sovereignty in the foundation model war. Anthropic's strategic pivot to enterprise, which we covered in depth following its major funding rounds from Google and Amazon, was always going to strain resources against a dual-front competition with OpenAI (on capability) and open-source models (on cost).

The mention of the leaked OpenAI memo referencing Anthropic's compute shortage is particularly telling. A competitor's memo is not proof, but it reinforces the industry's worst-kept secret: that securing and financing massive GPU clusters is now the primary bottleneck, surpassing even algorithmic innovation. This development is less about a novel AI technique and more about a necessary rationing system. It echoes the rationale behind OpenAI's tiered model routing, a strategy we analyzed when GPT-5 launched, designed to manage inference costs at scale.

For practitioners, this signals a new phase. Model providers are no longer just selling capability but are actively managing the economic and physical constraints of delivering that capability. Features like adaptive thinking are the first visible symptoms of this backend reality. The risk, as noted, is user experience degradation. The opportunity for Anthropic is to turn this constraint into a refined efficiency product for its enterprise clients, who care deeply about total cost of operation.

Frequently Asked Questions

What is Anthropic's adaptive thinking?

Adaptive thinking is a feature for Claude models where the AI itself determines how much internal reasoning, measured in reasoning tokens, to use when answering a query. It aims to optimize computational efficiency by not over-using resources on simple questions and allocating more to complex ones, all within a single model instance.

Why did Anthropic implement adaptive thinking?

According to industry analysis, the primary driver is not user demand but a strategic need to manage limited computing resources (GPU shortages) and improve operational efficiency. It's a response to competitive pressure and a focus on serving high-margin enterprise customers reliably, while mitigating poor PR from constant rate limits on the consumer API.

How does adaptive thinking differ from OpenAI's approach?

OpenAI's system for GPT-5 reportedly uses "routing," directing a query to one of two different model versions (a faster "Instant" or a deeper "Thinking" model). Anthropic's adaptive thinking uses the same model but varies its internal computational effort. Both aim for efficiency but through different architectural choices.

Are there quality concerns with adaptive thinking?

Early feedback suggests it can lead to inconsistent output quality, as the model's automatic decision on compute allocation may not always match user expectations. This mirrors early issues with OpenAI's automated routing, which later led to the introduction of a manual toggle for users who want guaranteed deeper reasoning.