Ethan Mollick, a professor at The Wharton School and prominent AI researcher, has introduced a new informal benchmark called PerfectSquashBench, designed to measure how stubbornly image generation models anchor to initial prompts. The test points to a notable behavioral difference between modalities: image models tend to get "much more stuck" on a particular direction than text models, often requiring users to clear the context window entirely to achieve meaningful variation.

The benchmark is simple in concept but revealing in practice. Users repeatedly prompt an image model to generate variations on a theme, in this case creating "the perfect squash." Mollick's observation, shared on the social media platform X, is that "the squash remains merely fine after many attempts," indicating that the model struggles to break away from its initial interpretation or stylistic direction, even with iterative prompting.

What the Test Reveals

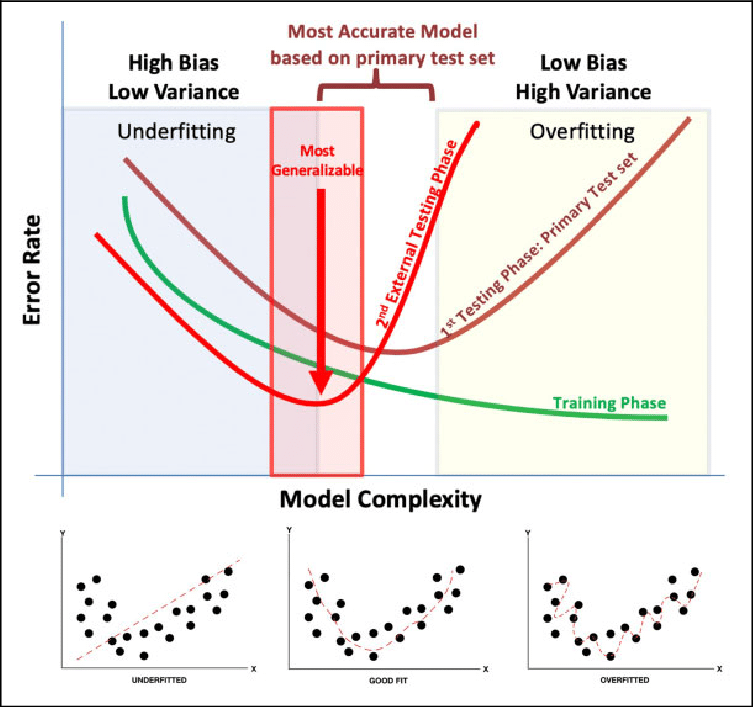

Anchoring bias—where an initial piece of information (the "anchor") disproportionately influences subsequent decisions—is a well-documented cognitive phenomenon in humans. Mollick's work suggests it's also a measurable characteristic in AI models, particularly in their generative behavior.

Key Finding: In Mollick's informal testing, image models show a stronger and more persistent anchoring effect than their text-only counterparts. When a text model receives a series of related prompts, it can more easily explore semantic variations, adjust tone, or shift perspective. An image model, however, often latches onto specific visual attributes from the first prompt (composition, color palette, art style, or object representation) and resists significant deviation in subsequent generations within the same session.

This has a direct impact on practical workflow. As Mollick notes, it often "requires clearing the context window fairly often" to get an image model to try a genuinely new approach. Simply asking for "a different version" or "more realistic" may produce only minor tweaks rather than a conceptual reset.

Context and Implications for Practitioners

This behavioral quirk isn't merely academic. For developers, designers, and researchers using image generation tools and APIs (such as those from OpenAI, Midjourney, or Stability AI), understanding this anchoring tendency is crucial for efficient prompting.

- Workflow Impact: Users may be wasting time and computational resources (and API credits) on long chains of iterative image prompts that yield diminishing returns. The more effective strategy, according to this finding, might be to start fresh conversations or sessions when seeking a substantially different visual direction.

- Benchmarking Model "Creativity": PerfectSquashBench could become a quick, qualitative tool for comparing different image models. A model that shows less anchoring and more flexibility across a prompt series might be considered more creatively responsive, all else being equal.

- Prompt Engineering: This highlights a difference in optimal prompt strategy between text and image generation. While text models can benefit from long, nuanced conversations building on context, image models might require a "reset and restart" approach for divergent ideas.
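
The "reset and restart" idea in the points above can be sketched as a simple stopping rule. This is only an illustration, not something Mollick describes: it assumes you already have a similarity score between each new image and the one before it (for instance, a cosine similarity of image embeddings), and the function name `should_reset`, the threshold, and the patience value are all hypothetical.

```python
from typing import Sequence


def should_reset(similarities: Sequence[float],
                 threshold: float = 0.9,
                 patience: int = 3) -> bool:
    """Return True when the last `patience` generations were all near-duplicates.

    `similarities[i]` is an assumed similarity in [0, 1] between generation
    i+1 and generation i in the same session. Both default values are
    illustrative guesses, not tuned or published numbers.
    """
    if patience <= 0:
        raise ValueError("patience must be positive")
    if len(similarities) < patience:
        return False  # not enough history to judge yet
    recent = similarities[-patience:]
    return all(s >= threshold for s in recent)


# Hypothetical session: outputs keep converging on the same squash.
session = [0.72, 0.91, 0.93, 0.95]
print(should_reset(session))  # True: the last three steps barely changed
```

A rule like this trades a little bookkeeping for fewer wasted generations; the right threshold would depend on the similarity metric and the model, so it should be calibrated rather than taken from this sketch.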

gentic.news Analysis

Mollick's informal benchmark touches on a growing area of research: quantifying the behavioral and psychological characteristics of AI models, not just their raw accuracy or fidelity. This aligns with trends we've covered, such as the push for more nuanced LLM evaluations beyond multiple-choice question benchmarks. It's less about "can the model draw a squash?" and more about "how does the model behave when asked to rethink the squash?"

The finding that image models anchor more strongly than text models is technically plausible. Text generation operates in a high-dimensional, discrete token space where synonyms, sentence structures, and narrative pivots offer many paths away from an anchor. Image generation, while also high-dimensional, often relies on latent spaces where initial noise seeds and early diffusion steps heavily constrain the final output. A model's visual representation of "squash" may therefore occupy a narrower, more rigid cluster than the representation a text model draws on when describing one.

For practitioners, this is a useful, practical heuristic. It suggests that the common practice of using a single, high-quality image as a "style reference" or starting point for a series (for example, via Midjourney's --sref parameter) essentially formalizes and leverages this anchoring bias. The challenge, as Mollick points out, arises when the bias works against the user's goal of exploration.

Looking forward, we expect to see more research into "prompt adherence" versus "prompt flexibility" as desirable but competing model traits. Future model cards might even report metrics on anchoring bias, giving users a clearer expectation of how a model will behave in an extended creative session.

Frequently Asked Questions

What is anchoring bias in AI?

Anchoring bias in AI refers to a model's tendency to be overly influenced by the initial information or prompt it receives in a session, making it difficult to steer its outputs in a significantly different direction through follow-up prompts alone. It's an analogy to the cognitive bias of the same name in human psychology.

How can I get an image model to break its anchoring?

Based on Mollick's observation, the most reliable method is to clear the context window. This means starting a brand new chat session or generation thread. Simply adding negative prompts or asking for "a completely different style" within the same session is often less effective, because each new generation is still conditioned on the earlier prompts and images that remain in context.

Does this mean text models don't have anchoring bias?

No, text models can also exhibit anchoring, but Mollick's observation suggests the effect is noticeably stronger in image models. Text models may fixate on a writing style, genre, or perspective, but they generally offer more degrees of freedom for semantic change without requiring a full reset.

Is PerfectSquashBench a formal, quantitative benchmark?

Not currently. As introduced, it is an informal, qualitative test and observation. However, it provides a clear framework that could be developed into a quantitative benchmark by, for example, measuring the visual similarity (via CLIP scores or other metrics) across a series of iterative prompts compared to a reset baseline.
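
One way such a quantitative version might look is sketched below. It is not part of Mollick's benchmark: it assumes each generated image has already been turned into an embedding vector (for example by a CLIP image encoder, which is not included here), and the names `mean_pairwise_similarity` and `anchoring_gap` are hypothetical. The idea is to compare how alike the images from one long session are with how alike images from fresh sessions are.

```python
import math
from itertools import combinations
from typing import Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("zero-length embedding")
    return dot / norm


def mean_pairwise_similarity(embeddings: Sequence[Vector]) -> float:
    """Average cosine similarity over every pair of images in a series."""
    pairs = list(combinations(embeddings, 2))
    if not pairs:
        raise ValueError("need at least two embeddings")
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


def anchoring_gap(iterative: Sequence[Vector], reset: Sequence[Vector]) -> float:
    """Similarity within one session minus similarity across fresh sessions.

    A positive gap means same-session images resemble each other more than
    images from reset sessions do, which is what anchoring would predict.
    """
    return mean_pairwise_similarity(iterative) - mean_pairwise_similarity(reset)


# Toy 2-D "embeddings" standing in for real image features (assumed data).
same_session = [(1.0, 0.0), (1.0, 0.0), (1.0, 0.0)]
fresh_sessions = [(1.0, 0.0), (0.0, 1.0)]
print(anchoring_gap(same_session, fresh_sessions))  # 1.0
```

Reporting the gap against a reset baseline, rather than raw similarity, separates anchoring from the fact that any two pictures of a squash will look somewhat alike.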