Unverified social media reports are circulating that OpenAI has begun a limited, unannounced rollout of a model referred to as "GPT-5.5" for select users. The claims, originating from a post by user @kimmonismus on X, suggest the model is being "stealth tested."

The primary claim from these early, anonymous testers is that GPT-5.5 "outperforms Opus 4.7 for them." Opus 4.7 refers to a version of Claude Opus, Anthropic's flagship model line. The source post explicitly notes that the tasks or benchmarks behind this alleged outperformance are unknown ("don’t know in which tasks tho"). No screenshots, benchmark results, API identifiers, or other technical details were provided to substantiate the claim.

The post also speculates about a potential public release timeline, with the user hoping a release does not occur on an upcoming Monday due to travel plans. This is purely anecdotal and not an indication of any official schedule.

Key Takeaways

- Social media reports suggest OpenAI may be conducting limited, unannounced testing of GPT-5.5.

- Initial, unverified claims from testers indicate it outperforms Anthropic's Claude Opus 4.7 on unspecified tasks.

What This Means in Practice

If these user reports are accurate (a significant if), they would indicate OpenAI is following its established pattern of limited external testing, such as red teaming or an early-access preview, before a broader release. This phase is typically used to gather feedback on real-world performance, safety, and usability beyond internal evaluations.

For developers and enterprise users, a GPT-5.5 release would represent the next iterative update in OpenAI's product line, building on GPT-5 (released in August 2025) and its subsequent point releases. Judging by past releases such as GPT-3.5 and GPT-4.5, a ".5" designation has typically signaled a substantial mid-cycle upgrade within an existing generation rather than an entirely new one; such upgrades can target capability, cost, or latency.

The Competitive Context

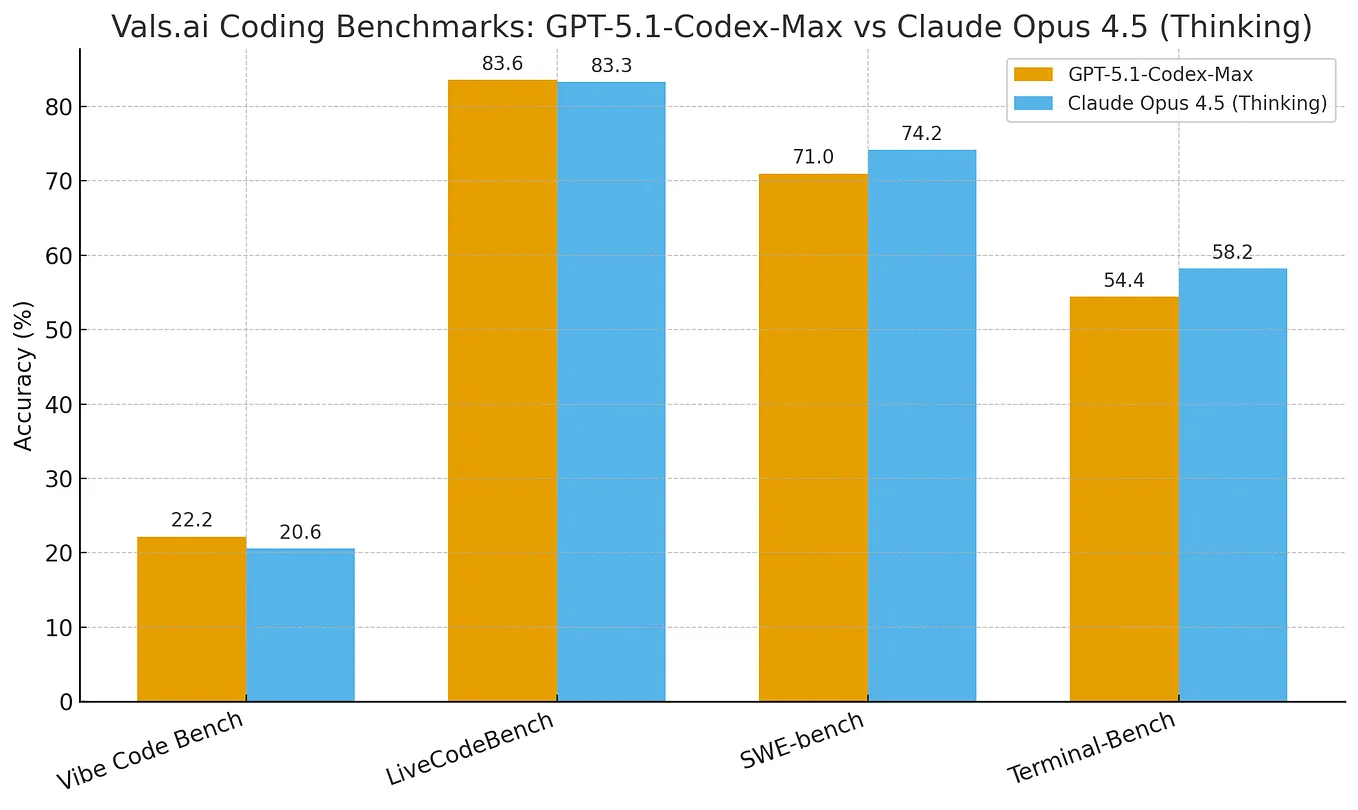

The immediate comparison drawn by testers is to Anthropic's Claude Opus 4.7. Anthropic's Opus models have been strong competitors in the frontier model space, particularly praised for their reasoning, coding, and long-context capabilities. Any claim of outperforming Opus 4.7 would need to be validated across a standard suite of benchmarks (e.g., MMLU, GPQA, MATH, HumanEval) to be meaningful.

It is critical to treat these reports as unconfirmed rumors. OpenAI has made no official announcement regarding GPT-5.5. The company's standard practice is to announce model releases through its blog and developer documentation, as it did for GPT-5 in August 2025. Until such an announcement appears, even the "GPT-5.5" name itself should be treated as unverified.

gentic.news Analysis

This rumor aligns with the established competitive cadence and testing patterns of frontier AI labs. OpenAI and Anthropic have been in a tight, iterative race since the release of Claude 3 Opus in March 2024, which was quickly answered by OpenAI's GPT-4 Turbo update and then the multimodal GPT-4o. Since then, Anthropic has drawn attention with Claude 3.5 Sonnet and later the Claude Opus 4.x series, which made significant strides in coding and agentic workflows, while OpenAI has responded with its o-series reasoning models and GPT-5. A stealth test of a new OpenAI model would fit this pattern of direct competitive response.

Historically, credible leaks about OpenAI models, like details preceding the GPT-4 API release, have often originated from developers with early API access. However, performance claims without task specifics are of limited value. The mention of "Opus 4.7", a specific and recent version number, suggests the poster follows the landscape closely and lends the report a slight air of credibility, but it is far from proof.

For our readers—AI engineers and technical leaders—the actionable takeaway is to monitor the official OpenAI developer platform and blog for any updates. Should a model like GPT-5.5 be released, the key evaluation metrics will be its published benchmarks, API pricing, rate limits, and context window. Until then, these reports remain in the realm of speculation.

Frequently Asked Questions

Is GPT-5.5 officially announced by OpenAI?

No. As of now, there is no official announcement from OpenAI regarding a model named GPT-5.5. The information comes solely from unverified user reports on social media.

What would GPT-5.5 likely improve over GPT-4o?

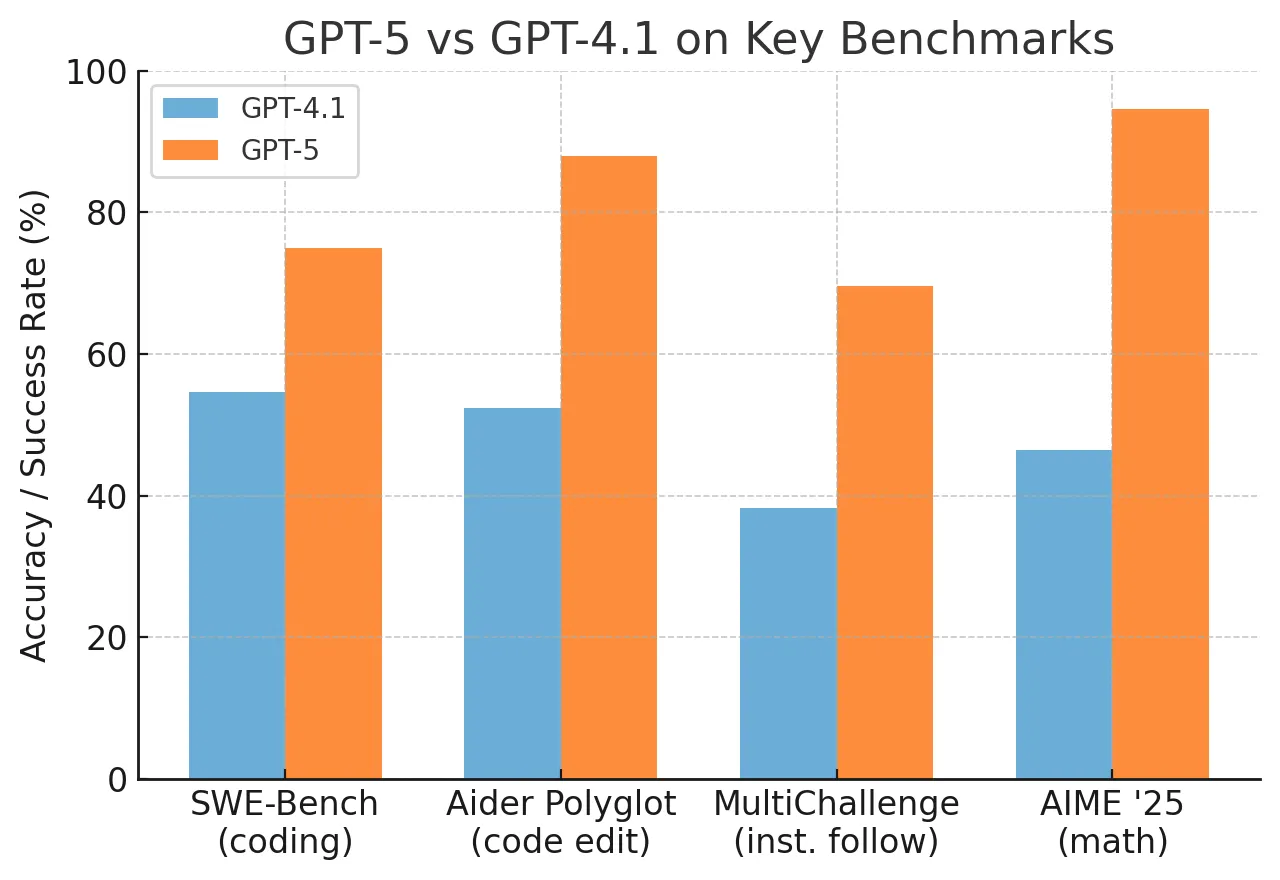

Based on OpenAI's past ".5" iterations and the current competitive landscape, a hypothetical GPT-5.5 would likely target improvements in complex reasoning, coding proficiency (challenging Claude 3.5 Sonnet/Opus), multimodal understanding, and efficiency (lower cost/token or faster inference). It might also expand context length beyond GPT-4o's 128K tokens.

How can I get access to test new OpenAI models early?

OpenAI typically grants early access to a select group of researchers, large enterprise partners, and developers in its red teaming network for safety and evaluation purposes. General developers can sometimes access new models through the API playground shortly before broad release, but structured early access programs are by invitation.

How does this rumor fit with OpenAI's release pattern?

A "GPT-5.5" would suggest an intermediate release within the GPT-5 generation, much as GPT-4.5 followed GPT-4. OpenAI has already shipped point updates such as GPT-5.1, so a 5.5 label could signal either a larger mid-cycle upgrade ahead of a future GPT-6 or a continuation of OpenAI's shift toward more frequent, incremental capability upgrades rather than monolithic generations.