Alibaba's Qwen team has released Qwen 3.6, a new iteration of its open-source large language model. The release, announced via social media, emphasizes three core attributes: it is free, ships with open weights, and comes from China. The announcement positions it as a tool for "agentic coding on a small chip," highlighting its ability to run locally on consumer hardware such as a laptop.

The release follows a user's account of running the previous version, Qwen 3.5, on a personal laptop for months and finding it "good enough" for coding tasks. The announcement frames Qwen 3.6 as a counterpoint to proprietary models from U.S. firms such as Anthropic and OpenAI, which it says require identity verification ("wants my ID") for access to their advanced coding models, Mythos and Codex, respectively. The core argument is one of trust and ownership: "The AI I trust isn't on a rented server. It's on a drive I own."

Key Takeaways

- Alibaba's Qwen team released Qwen 3.6, an open-weights AI model for local deployment.

- The model offers a free, private alternative to ID-verified models such as Anthropic's Mythos and OpenAI's Codex.

What Happened

The Qwen team, under Alibaba Cloud, has shipped the Qwen 3.6 model. As a direct successor to Qwen 3.5, it continues the project's commitment to providing open-weight models. The key value proposition is immediate, free access without the need for identity verification, coupled with the ability for full local deployment. This allows developers and researchers to run the model on their own hardware, ensuring data privacy and operational control.

Context

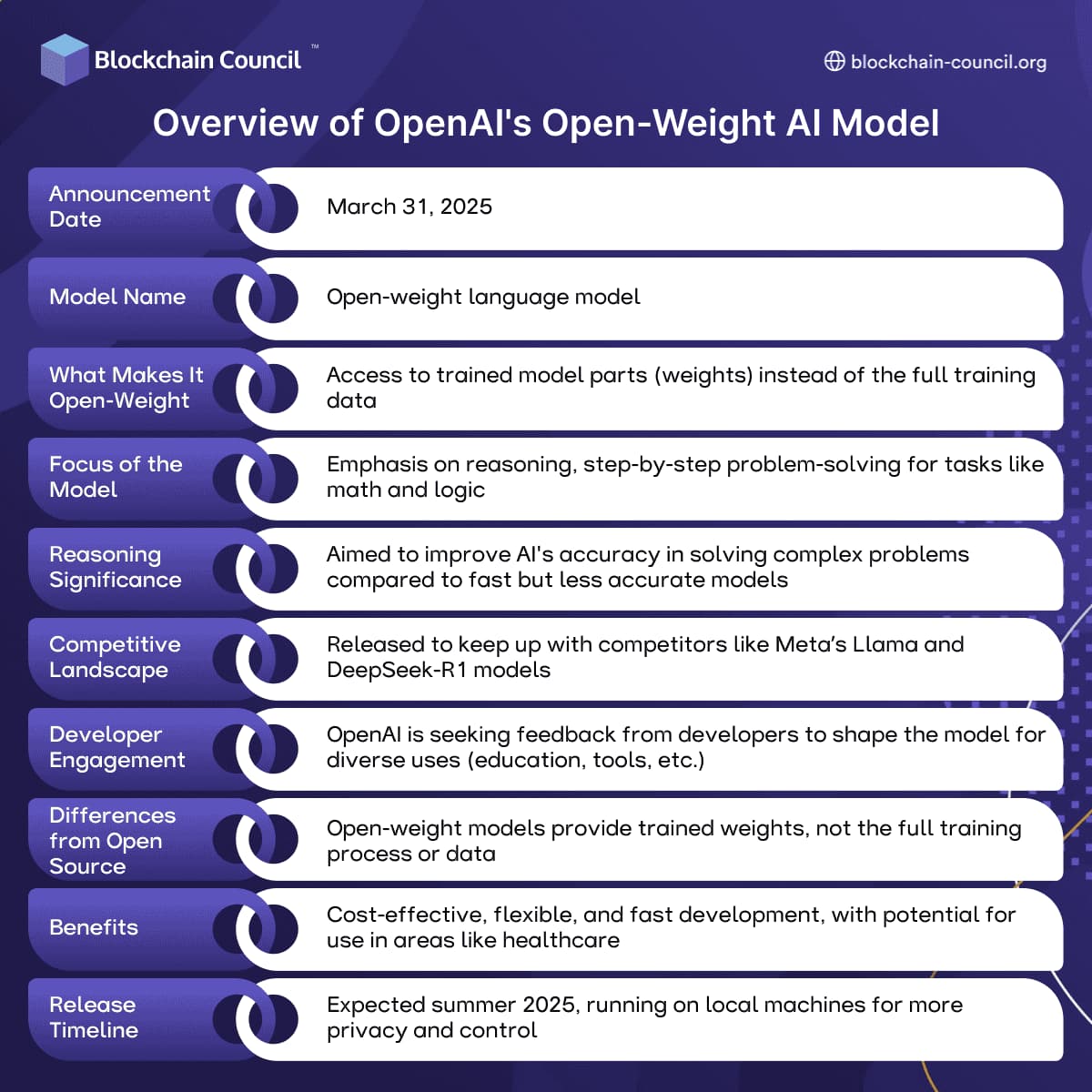

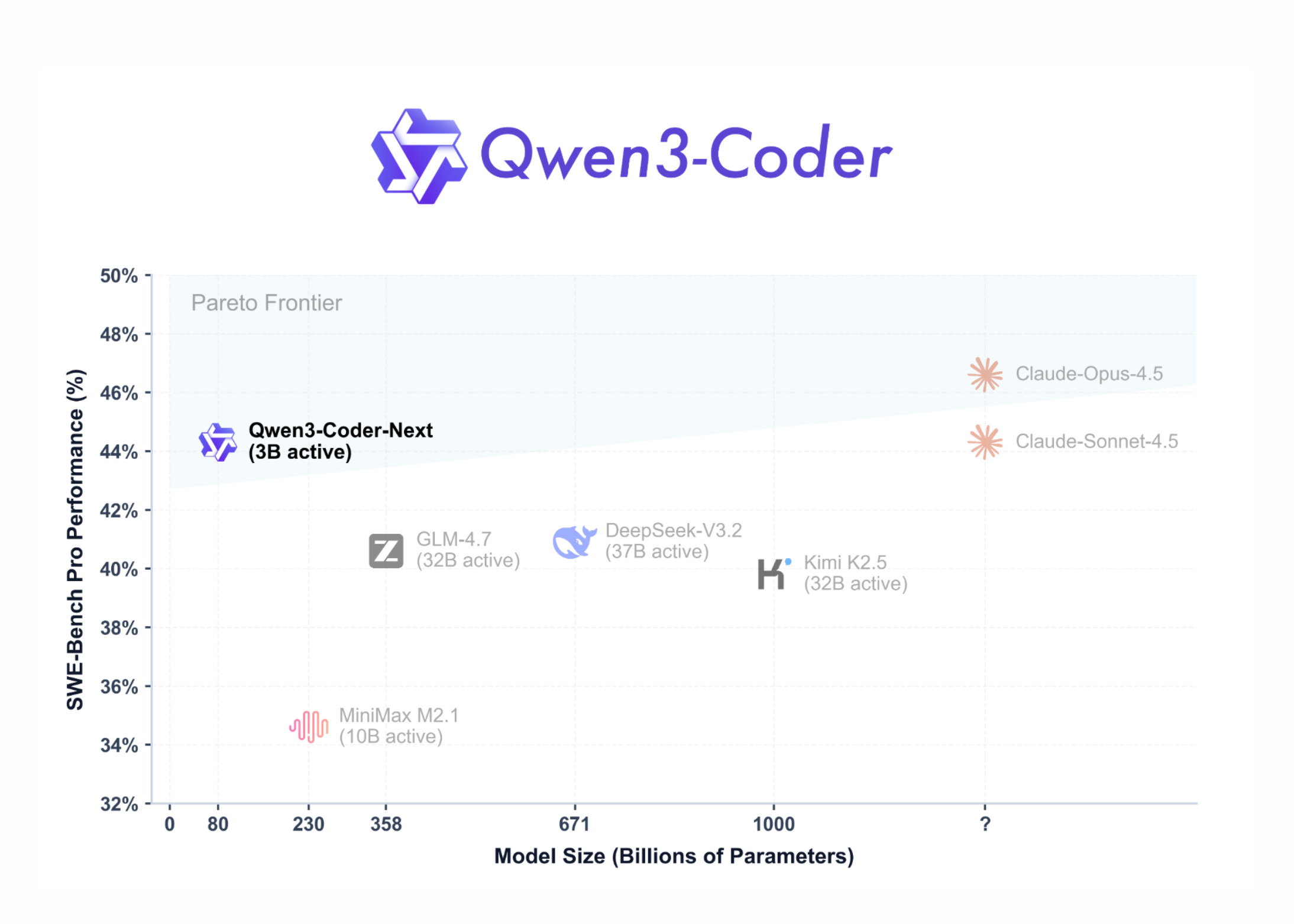

The Qwen series has been a significant player in the open-source LLM landscape, particularly noted for its strong coding capabilities. The release of Qwen 3.6 continues a trend of increasingly capable models being made available under permissive licenses, challenging the dominant API-based, closed-model paradigm led by OpenAI, Anthropic, and Google.

The mention of "agentic coding" refers to the model's ability to perform multi-step coding tasks, potentially involving planning, tool use, and execution. This is a key frontier in AI-assisted software development. The ability to do this locally, without sending code to a third-party server, is a major draw for developers working with proprietary codebases or under strict data governance rules.
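
The plan, tool-use, and execute cycle described above can be sketched as a short loop. This is a minimal illustration only: `stub_model`, the tool names, and the `tool:argument` action format are all hypothetical stand-ins, not Qwen's actual interface. In practice, the stub would be replaced by a call to a locally hosted model.

```python
from typing import Callable, Dict, List

# Hypothetical tools; a real agent would read files and run commands locally.
def read_file(arg: str) -> str:
    return f"<contents of {arg}>"

def run_tests(arg: str) -> str:
    return "2 passed, 0 failed"

TOOLS: Dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "run_tests": run_tests,
}

def stub_model(history: List[str]) -> str:
    """Stand-in for a local model (assumption): returns 'tool:arg' or 'done:summary'."""
    script = ["read_file:app.py", "run_tests:tests/", "done:tests pass"]
    steps_taken = sum(1 for h in history if h.startswith("observation:"))
    return script[min(steps_taken, len(script) - 1)]

def agent_loop(task: str,
               model: Callable[[List[str]], str] = stub_model,
               max_steps: int = 5) -> str:
    """Ask the model for an action, run the tool, feed the result back, repeat."""
    history = [f"task: {task}"]
    for _ in range(max_steps):
        action, _, arg = model(history).partition(":")
        if action == "done":
            return arg
        tool = TOOLS.get(action)
        observation = tool(arg) if tool else f"unknown tool {action}"
        history.append(f"observation: {observation}")
    return "step limit reached"

print(agent_loop("fix the failing test"))
```

Because every step in this loop runs on the developer's machine, neither the task description nor the tool output leaves it, which is the privacy property the announcement emphasizes.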

gentic.news Analysis

This release is a direct shot across the bow of the Western AI giants' commercialization strategies. While OpenAI's Codex (powering GitHub Copilot) and Anthropic's newly launched Mythos coder model operate behind paywalls and identity checks, Qwen provides a frictionless, private alternative. This aligns with a broader geopolitical and technological trend we've been tracking: the decoupling of AI stacks and the rise of sovereign, locally-runnable models. As we covered in our analysis of China's 2025 AI Sovereignty Directive, there is a concerted push to develop and deploy domestic AI infrastructure that reduces dependency on foreign technology.

The timing is also notable. It follows closely on the heels of Meta's release of Llama 3.2, another major open-weight model family, and Anthropic's launch of the Claude 3.5 model family and Mythos. The AI landscape is rapidly bifurcating into two camps: the closed, API-driven models optimized for revenue and control (OpenAI, Anthropic, Google Gemini) and the open-weight models optimized for adoption, customization, and local deployment (Meta's Llama, Qwen, Mistral AI). Qwen 3.6 strengthens the latter camp, particularly for the coding vertical.

For practitioners, the message is clear: high-performance coding AI no longer requires a credit card or a passport. The barrier to entry for private, agentic coding assistance is now the cost of a consumer GPU or even a high-end laptop. This will accelerate experimentation and integration of AI into local development workflows, especially in enterprises and regions with data residency laws. The trade-off remains, however: while Qwen is free and private, users must shoulder the computational cost and complexity of local deployment, and the model's performance may not match the very latest frontier models from OpenAI or Anthropic on every benchmark.

Frequently Asked Questions

What is Qwen 3.6?

Qwen 3.6 is the latest large language model released by Alibaba's Qwen team. It is a free, open-weights model designed for tasks like coding and can be downloaded and run on local hardware, such as a personal laptop or server.

How does Qwen 3.6 differ from OpenAI's Codex or Anthropic's Mythos?

The primary differences are access, cost, and deployment. Qwen 3.6 is free and its model weights are publicly available, allowing for local execution. In contrast, Codex (via GitHub Copilot) and Anthropic's Mythos are proprietary services accessed via API, typically requiring payment and identity verification, with users' code and data processed on the provider's servers.

What does "agentic coding on a small chip" mean?

This phrase suggests that Qwen 3.6 is capable of performing complex, multi-step programming tasks ("agentic" behavior) on hardware with limited computational resources, like a laptop's CPU or an integrated GPU ("a small chip"). It highlights the model's efficiency and suitability for local deployment.
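
Whether a model fits on "a small chip" depends largely on how much memory its weights need. As a rough guide, that is the parameter count times the bits per weight, divided by eight. The sketch below applies this to a hypothetical 7-billion-parameter model. The announcement does not state Qwen 3.6's size, so that figure is an assumption for illustration. The estimate covers weights only and ignores runtime overhead such as the context cache.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only: parameters x bits / 8 bytes."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30  # convert bytes to GiB

# 7 billion parameters is a hypothetical size, not a published Qwen 3.6 figure.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gib(7, bits):.1f} GiB")
```

Halving the bits per weight halves the footprint, which is why quantized builds are the usual route to running models of this class on laptop hardware.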

Where can I download Qwen 3.6?

The model is likely available through the official Qwen project repositories, such as on Hugging Face or ModelScope. Users should search for "Qwen 3.6" on these platforms or visit the official project page from Alibaba Cloud for download links and documentation.