OpenAI has released GPT-5.4, the latest iteration in its flagship model series, introducing a significant new capability: direct computer control through an API. The model represents a push toward agentic AI that can perform tasks on a user's behalf by interacting with a graphical user interface. However, benchmark data reveals it continues to trail Anthropic's Claude 3.5 Sonnet on the demanding SWE-Bench coding evaluation, highlighting persistent competition in the frontier model space.

Key Takeaways

- OpenAI launched GPT-5.4, featuring a 'Computer Use' API that lets the model control a user's desktop.

- Despite improvements, it scores 78.5% on SWE-Bench Verified, behind Claude 3.5 Sonnet's 81.2%.

What's New: The "Computer Use" API

The headline feature of GPT-5.4 is the Computer Use API, currently in limited beta. This allows the model to take screenshots of a user's desktop, process the visual information, and then execute actions via simulated mouse and keyboard inputs. The intended use case is automating multi-step digital tasks that involve navigating between applications, filling forms, or retrieving information from a visual interface.

According to the release notes, the system is designed with safeguards, requiring explicit user permission for each session and operating within a sandboxed environment. Early examples shown include automating data entry from a PDF into a spreadsheet and configuring software settings through a GUI.

Technical Details and Performance

OpenAI has not disclosed the precise architecture or scale of GPT-5.4. It is described as a multimodal model that processes both text and visual inputs from screen captures. The company has published a set of benchmark scores comparing it to its predecessor, GPT-4 Turbo, and key competitors.

Key Benchmark Results

| Benchmark | GPT-5.4 | GPT-4 Turbo | Claude 3.5 Sonnet |
| --- | --- | --- | --- |
| MMLU (Knowledge) | 87.2% | 86.5% | 85.9% |
| GPQA Diamond (Expert QA) | 58.1% | 52.3% | 56.7% |
| MATH | 88.5% | 87.1% | 89.1% |
| SWE-Bench Verified (Coding) | 78.5% | 75.1% | 81.2% |
| HumanEval (Python) | 92.7% | 90.2% | 94.3% |

The data shows a clear pattern: GPT-5.4 makes strong gains in knowledge and reasoning tasks (MMLU, GPQA) but remains narrowly behind Claude 3.5 Sonnet in mathematical and, most notably, software engineering benchmarks. The 2.7 percentage point gap on SWE-Bench Verified is particularly significant for developers evaluating coding assistants.
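The head-to-head gaps can be recomputed directly from the published scores. The short sketch below does that arithmetic; it assumes the three score columns are, in order, GPT-5.4, GPT-4 Turbo, and Claude 3.5 Sonnet, and the names `SCORES` and `gap_vs_claude` are illustrative only.

```python
# Scores (percent) as listed in the benchmark results above.
# Assumed column order: GPT-5.4, GPT-4 Turbo, Claude 3.5 Sonnet.
SCORES = {
    "MMLU": (87.2, 86.5, 85.9),
    "GPQA Diamond": (58.1, 52.3, 56.7),
    "MATH": (88.5, 87.1, 89.1),
    "SWE-Bench Verified": (78.5, 75.1, 81.2),
    "HumanEval": (92.7, 90.2, 94.3),
}


def gap_vs_claude(benchmark: str) -> float:
    """Return GPT-5.4 minus Claude 3.5 Sonnet, in percentage points."""
    gpt54, _gpt4_turbo, claude = SCORES[benchmark]
    return round(gpt54 - claude, 1)


if __name__ == "__main__":
    for name in SCORES:
        # A positive value means GPT-5.4 is ahead on that benchmark.
        print(f"{name}: {gap_vs_claude(name):+.1f} pts")
```

On these figures GPT-5.4 is ahead on MMLU and GPQA Diamond and behind on MATH, SWE-Bench Verified, and HumanEval, with the SWE-Bench Verified deficit at 2.7 points.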

How the Computer Control Works

The technical approach involves a specialized vision encoder that converts screen pixels into a latent representation the language model can reason about. The model then outputs a structured action plan, which is translated into operating system-level commands via the API client. This is a step beyond previous "code interpreter" or function-calling features, as it deals with the unstructured, pixel-based reality of a desktop environment.
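The screenshot, plan, and execute cycle described above can be pictured as a simple loop. The sketch below is a toy model of that flow, not OpenAI's client: the `Action` schema, the `run_session` function, and the command strings are all hypothetical, and the model call is replaced by a caller-supplied `plan` function.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Action:
    """One step of a structured action plan (hypothetical schema)."""
    kind: str  # "click", "type", or "done"
    x: int = 0
    y: int = 0
    text: str = ""


def to_os_command(action: Action) -> str:
    """Translate a structured action into a client-side command string."""
    if action.kind == "click":
        return f"mouse.click({action.x}, {action.y})"
    if action.kind == "type":
        return f"keyboard.type({action.text!r})"
    raise ValueError(f"unsupported action: {action.kind}")


def run_session(
    capture: Callable[[], bytes],
    plan: Callable[[bytes], List[Action]],
    execute: Callable[[str], None],
    user_approved: bool,
    max_steps: int = 10,
) -> int:
    """Loop: capture the screen, ask for a plan, execute it. Returns the action count."""
    if not user_approved:
        # Mirrors the per-session permission requirement mentioned in the release notes.
        raise PermissionError("session requires explicit user permission")
    executed = 0
    for _ in range(max_steps):
        screenshot = capture()          # pixels in
        for action in plan(screenshot):  # structured plan out
            if action.kind == "done":
                return executed
            execute(to_os_command(action))
            executed += 1
    return executed


if __name__ == "__main__":
    scripted = iter([
        [Action("click", x=120, y=48), Action("type", text="Q3 totals")],
        [Action("done")],
    ])
    log: List[str] = []
    steps = run_session(lambda: b"fake-pixels", lambda _s: next(scripted), log.append, True)
    print(steps, log)
```

Keeping the translation step (`to_os_command`) on the client side reflects the article's description that the model emits a plan and the API client turns it into operating system commands.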

Training likely involved a combination of traditional web text, code data, and a novel dataset of screen recordings paired with corresponding action sequences. This aligns with a broader industry trend of training models on "agent trajectories" to teach sequential decision-making in digital environments.

Limitations and Access

OpenAI explicitly notes the model's limitations. The computer control feature is not designed for real-time, high-precision tasks like gaming. Hallucination remains a risk—the model might misinterpret screen elements or attempt incorrect actions. Rate limits are strict in the beta phase.
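One way a client could reduce the impact of a misread screen is to check each proposed action before executing it. The following is a minimal sketch of such a guard under assumed conventions; the allowlist, the function name `rejection_reason`, and the action fields are hypothetical and not part of any documented API.

```python
from typing import Optional

# Hypothetical allowlist of action kinds the client is willing to execute.
ALLOWED_KINDS = {"click", "type"}


def rejection_reason(
    kind: str, x: int, y: int, screen_w: int, screen_h: int
) -> Optional[str]:
    """Return why a proposed action should be refused, or None if it passes."""
    if kind not in ALLOWED_KINDS:
        return f"action kind {kind!r} is not allowed"
    # Clicks must land inside the captured screen area.
    if kind == "click" and not (0 <= x < screen_w and 0 <= y < screen_h):
        return f"click at ({x}, {y}) is outside the {screen_w}x{screen_h} screen"
    return None


if __name__ == "__main__":
    print(rejection_reason("click", 100, 200, 1920, 1080))
    print(rejection_reason("click", 5000, 200, 1920, 1080))
    print(rejection_reason("drag", 0, 0, 1920, 1080))
```

A check like this cannot catch a click on the wrong but valid button, so it complements rather than replaces the session consent and sandboxing described earlier.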

Pricing for the standard text/vision API is set at $0.005 per 1K input tokens and $0.015 per 1K output tokens. The Computer Use API carries a 20% surcharge. The model is available now in the API and is rolling out to ChatGPT Plus and Enterprise tiers over the coming week.
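To make the pricing concrete, the sketch below estimates the cost of a single request from the quoted rates. It assumes the 20% Computer Use surcharge applies to the combined input and output token cost, which the announcement does not specify; the function name `estimate_cost` is illustrative.

```python
INPUT_PRICE_PER_1K = 0.005     # USD per 1K input tokens (quoted rate)
OUTPUT_PRICE_PER_1K = 0.015    # USD per 1K output tokens (quoted rate)
COMPUTER_USE_SURCHARGE = 0.20  # assumed to apply to the total token cost


def estimate_cost(
    input_tokens: int, output_tokens: int, computer_use: bool = False
) -> float:
    """Estimate the USD cost of one request at the quoted per-token rates."""
    cost = (
        (input_tokens / 1000) * INPUT_PRICE_PER_1K
        + (output_tokens / 1000) * OUTPUT_PRICE_PER_1K
    )
    if computer_use:
        cost *= 1 + COMPUTER_USE_SURCHARGE
    return round(cost, 6)


if __name__ == "__main__":
    print(estimate_cost(10_000, 2_000))
    print(estimate_cost(10_000, 2_000, computer_use=True))
```

Under that assumption, a request with 10,000 input tokens and 2,000 output tokens comes to $0.08 on the standard API and $0.096 with the Computer Use surcharge.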

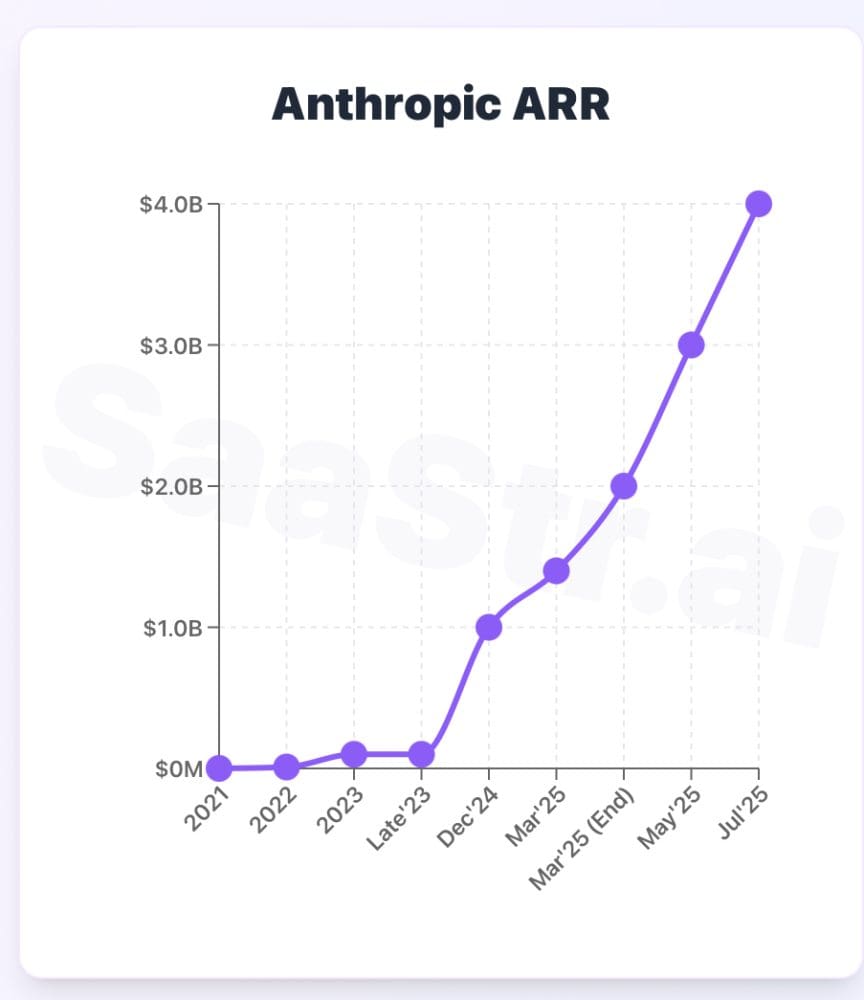

gentic.news Analysis

GPT-5.4's launch continues the direct, benchmark-for-benchmark competition between OpenAI and Anthropic that has defined the last 18 months. This follows Anthropic's release of Claude 3.5 Sonnet in June 2024, which itself was a direct response to OpenAI's GPT-4o. The persistent Claude lead on SWE-Bench, a benchmark based on real GitHub issues, underscores Anthropic's continued strength in coding, a critical battleground for developer adoption and revenue.

The Computer Use API represents the most concrete step yet toward commercial AI agent products from a major lab. This aligns with trends we've covered, including Google's Project Astra demos and xAI's Grok integrating real-time web search. However, OpenAI is taking a more API-centric, tool-integration approach compared to Google's assistant-like vision. The success of this feature will depend less on benchmark scores and more on reliability, safety, and the cost-effectiveness of automating tasks versus human labor.

For practitioners, the message is clear: model selection is becoming increasingly task-specific. GPT-5.4 may be the preferred choice for research or agentic workflows requiring computer control, while Claude 3.5 Sonnet retains an edge for pure code generation and math-heavy tasks. The gap is narrowing, but specialization remains.

Frequently Asked Questions

What is the GPT-5.4 Computer Use API?

It is a new API endpoint that allows GPT-5.4 to take screenshots of a desktop, understand the visual context, and perform actions by simulating mouse clicks and keyboard typing. It is designed to automate multi-step digital tasks within a secured, user-permissioned session.

How does GPT-5.4 compare to Claude 3.5 Sonnet for coding?

Based on published benchmarks, Claude 3.5 Sonnet still holds a lead on coding-specific evaluations. It scores 81.2% versus GPT-5.4's 78.5% on SWE-Bench Verified, and 94.3% versus 92.7% on HumanEval. For developers focused solely on code generation, Claude may still be the stronger option.

Is GPT-5.4 available to the public?

Yes, the model is available via the OpenAI API now. The Computer Use feature is in limited beta, requiring a separate access request. The standard text/vision capabilities are rolling out to ChatGPT Plus and Enterprise subscribers throughout the week of April 7, 2026.

What are the main risks of the computer control feature?

The primary risks are hallucination (the model misinterpreting the screen and taking wrong actions) and security. OpenAI has implemented safeguards like session-specific user consent and sandboxing, but users should avoid granting access to sensitive systems or financial accounts during the beta period.