OpenAI has formally announced GPT-5.4-Cyber, a specialized cybersecurity variant of its GPT-5.4 model. Unlike a standard product launch, this model will not be publicly available. Instead, OpenAI is restricting access to a select group of verified cybersecurity professionals and organizations through its Trusted Access for Cyber (TAC) framework, citing the sensitive dual-use nature of advanced cyber capabilities.

This move mirrors a broader industry shift, exemplified by Anthropic's recent Claude Mythos Preview, where the most powerful AI models are being deployed through controlled, trust-based channels rather than open APIs.

Key Takeaways

- OpenAI has released GPT-5.4-Cyber, a fine-tuned version of its flagship model optimized for cybersecurity tasks.

- Access is strictly limited to verified defenders through OpenAI's trust-based Trusted Access for Cyber (TAC) framework, continuing a trend of controlled high-capability AI releases.

What OpenAI Announced

GPT-5.4-Cyber is not a new foundation model. It is a fine-tuned version of GPT-5.4, OpenAI's current flagship model, specifically optimized for cybersecurity workflows. According to the announcement, the fine-tuning changes the model in two key ways:

- Enhanced Capability: The model has been trained to excel at security-specific tasks like vulnerability research, threat analysis, and defensive programming.

- Relaxed Safeguards: The model's internal safety filters have been selectively adjusted to allow it to respond to queries and perform actions related to cybersecurity that a standard model would refuse. This includes discussing exploit techniques, analyzing malware, or simulating attacks for defensive purposes.

The core of the announcement is not the model's specs, but its governance model. OpenAI is explicitly withholding GPT-5.4-Cyber from general availability to prevent misuse.

The Trusted Access for Cyber (TAC) Framework

OpenAI is managing access through its TAC framework, described as an "identity- and trust-based system." The goal is to provide enhanced AI tools exclusively to verified defenders.

- Expanded Reach: OpenAI states it is expanding TAC to "thousands of verified individual defenders" and "hundreds of teams" defending critical software.

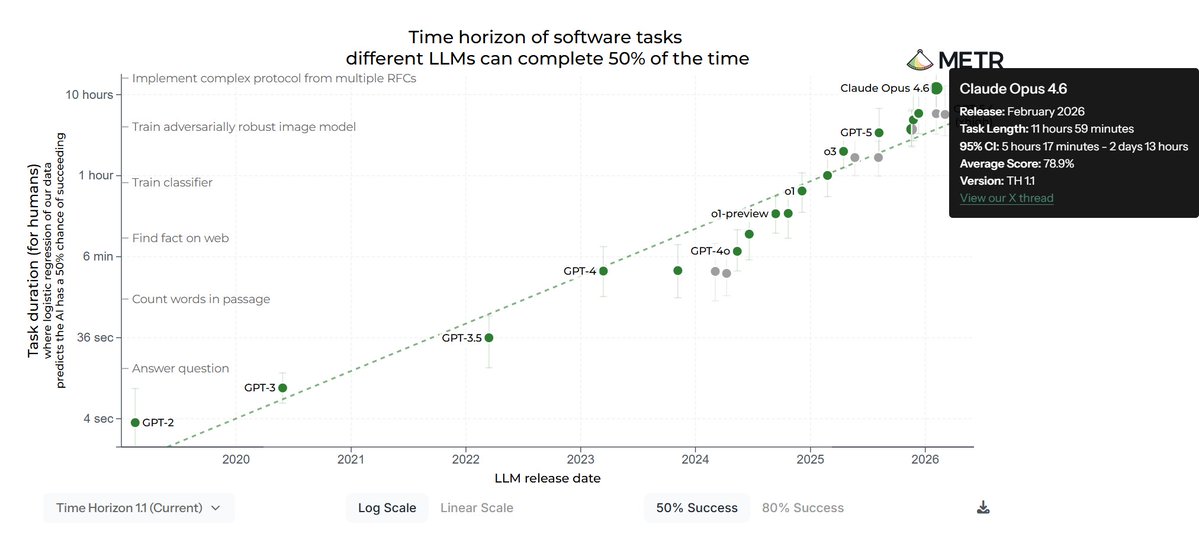

- Pyramid of Access: TAC functions like a tiered pyramid. Broader access is granted to less-capable models (like GPT-5.2 variants), while GPT-5.4-Cyber sits at the top tier.

- Eligibility: Currently, only existing TAC customers who undergo further authentication to prove they are "legitimate cyber defenders" are eligible to request access to GPT-5.4-Cyber. There is no guarantee of approval.
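The tiered gating described above can be sketched in a few lines. This is only an illustration of the idea that a higher trust tier unlocks a more capable model. The tier names, the model identifiers, and the `is_eligible` helper are all hypothetical and are not taken from OpenAI's announcement or API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical trust tiers; the names are illustrative, not OpenAI's."""
    NONE = 0
    VERIFIED = 1
    ADVANCED_VERIFIED = 2


# Hypothetical mapping from a model identifier to the minimum tier it requires.
REQUIRED_TIER = {
    "gpt-5.2-variant": Tier.VERIFIED,
    "gpt-5.4-cyber": Tier.ADVANCED_VERIFIED,
}


@dataclass(frozen=True)
class Applicant:
    name: str
    tier: Tier


def is_eligible(applicant: Applicant, model: str) -> bool:
    """Return True if the applicant's tier meets the model's minimum tier.

    Eligibility only permits a request; approval is a separate decision.
    """
    required = REQUIRED_TIER.get(model)
    if required is None:
        return False  # unknown models are denied by default
    return applicant.tier >= required


if __name__ == "__main__":
    analyst = Applicant("analyst", Tier.VERIFIED)
    print(is_eligible(analyst, "gpt-5.2-variant"))
    print(is_eligible(analyst, "gpt-5.4-cyber"))
```

In this sketch a caller at the lower verified tier qualifies for the broader model but not for the top-tier one, and an unrecognized model name is denied by default.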

How to Request Access (If Eligible)

For those in the top tier of TAC, OpenAI describes a direct request process: eligible users contact the company through a dedicated channel. The public announcement does not specify the exact point of contact.

The Stated Use Cases

OpenAI positions GPT-5.4-Cyber for legitimate security work:

- Security Education

- Defensive Programming

- Responsible Vulnerability Research

The model is intended to act as an advanced assistant for professionals who are already operating in these domains, not as a tool for novice exploration of cyber techniques.

gentic.news Analysis

This announcement is a significant data point in the evolving strategy of frontier AI labs. Its significance lies less in any technical breakthrough than in policy and deployment. OpenAI is institutionalizing a model where capability is directly gated by identity and trust, moving beyond pure API rate limits or cost barriers.

This follows a clear pattern we've tracked. In late 2025, Anthropic launched its Claude Mythos Preview under similar restrictive terms, exclusively for partnered firms in cybersecurity. OpenAI's TAC framework appears to be a more structured and scalable version of this concept. The trend indicates that labs now view their most capable models not as commodities, but as controlled instruments for specific high-stakes industries.

Technically, the mention of "relaxed safeguards" for dual-use cyber activity is the most critical detail. It acknowledges a fundamental tension: the same reasoning that makes an AI powerful for a defender (understanding attack chains) makes it dangerous in the wrong hands. OpenAI's solution is to shift the vetting of the "right hands" to a rigorous identity framework rather than trying to solve the problem entirely within the model itself.

For practitioners, the message is clear: the era of easily accessing the absolute cutting-edge of AI capability via a credit card is ending for certain domains. Strategic partnerships and verified professional status are becoming key to unlocking the most powerful tools. This will likely accelerate the formation of AI-powered security consortiums and could create a two-tier ecosystem within the infosec industry.

Frequently Asked Questions

What is GPT-5.4-Cyber?

GPT-5.4-Cyber is a specialized version of OpenAI's GPT-5.4 model that has been fine-tuned for cybersecurity tasks. It has enhanced capabilities for security analysis and relaxed internal safeguards to allow it to engage with sensitive dual-use topics that standard models would refuse.

How can I get access to GPT-5.4-Cyber?

Access is severely restricted. You must first be an existing customer within OpenAI's Trusted Access for Cyber (TAC) framework. From there, you must undergo further authentication to prove you are a legitimate cybersecurity defender and formally request access from OpenAI. Approval is not guaranteed.

Why is OpenAI not releasing GPT-5.4-Cyber to the public?

OpenAI states that the advanced cyber capabilities of the model are "deemed way too sensitive to be available to everyone." The company aims to prevent misuse by limiting access to vetted professionals and organizations dedicated to defensive security work.

How does this compare to Anthropic's Claude Mythos?

The strategies are similar. Both Anthropic's Claude Mythos Preview and OpenAI's GPT-5.4-Cyber represent top-tier AI models restricted to verified entities in cybersecurity. OpenAI's TAC framework appears to be a more formalized and potentially larger-scale program, while Anthropic's initial approach seemed more partnership-focused.