Tencent Holdings has launched the preview of its new flagship artificial intelligence model, HY3, marking the company's first major AI release since former OpenAI researcher Yao Shunyu joined to lead its foundational AI development. The Shenzhen-based tech giant positions HY3 as its most powerful model to date, claiming performance on par with leading Chinese models while acknowledging it still lags behind top-tier U.S. models from OpenAI and Google DeepMind.

Key Takeaways

- Tencent unveiled a preview of HY3, its most powerful model yet, with 295 billion parameters.

- HY3 is already deployed in the consumer app Yuanbao and the coding assistant CodeBuddy.

What's New: A Smaller, Business-Focused Model

The most striking technical detail is the model's size: 295 billion parameters, a deliberate departure from the recent industry trend toward models with trillions of parameters. Parameters are the numerical weights that encode a model's learned knowledge. For a dense model, the compute needed to run it grows roughly in proportion to the parameter count for each token processed, and so does the compute needed to train it on a given amount of data. By comparison, Tencent's previous flagship, HY 2.0, released in early December, had more than 400 billion parameters.
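To make the link between parameter count and cost concrete, here is a minimal back-of-envelope sketch built on two common rules of thumb: about 2 bytes per parameter to store 16-bit (bf16) weights, and about 2 floating-point operations per parameter for each token a dense model processes. Both are assumptions for illustration. Tencent has not said whether HY3 is a dense or mixture-of-experts model, and the helper names below are hypothetical.

```python
# Back-of-envelope cost estimates from parameter count alone.
# Assumptions (not from Tencent): dense transformer, 16-bit weights,
# ~2 FLOPs per parameter per processed token.

BYTES_PER_PARAM_BF16 = 2  # bf16/fp16 weights take 2 bytes each


def weight_memory_gb(params: float, bytes_per_param: int = BYTES_PER_PARAM_BF16) -> float:
    """Memory to hold the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def forward_flops_per_token(params: float) -> float:
    """Approximate forward-pass FLOPs per token: one multiply and one add per parameter."""
    return 2 * params


# HY 2.0 is described as "400+ billion"; 400e9 is used as a lower bound.
MODELS = {"HY3 Preview": 295e9, "HY 2.0": 400e9}

for name, n in MODELS.items():
    print(f"{name}: ~{weight_memory_gb(n):.0f} GB of weights, "
          f"~{forward_flops_per_token(n):.1e} FLOPs per token")

# Because HY 2.0's count is a lower bound, this ratio is an upper bound.
ratio = MODELS["HY3 Preview"] / MODELS["HY 2.0"]
print(f"HY3 per-token compute vs. HY 2.0: at most ~{ratio:.0%}")
```

Under these assumptions HY3 needs roughly 590 GB just for its weights versus at least 800 GB for HY 2.0, and at most about 74% of its predecessor's per-token compute. Real serving costs also depend on quantization, batching, and architecture.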

HY3 was developed with a clear focus on real-world business applications. Tencent emphasized the close collaboration between its foundational model team, Hunyuan, and its product-facing Yuanbao AI application team. "By seamlessly aligning product-side requirements with underlying technology, we have successfully bridged the gap between model capability and user value," the company stated.

Technical Details & Deployment

The model is currently in a preview phase and is open-source. Tencent has already integrated HY3 into its flagship AI products:

- Yuanbao: The company's consumer-facing AI assistant app.

- CodeBuddy: Tencent's AI-powered coding assistant.

This immediate deployment into high-traffic products suggests Tencent is prioritizing practical utility and iterative refinement based on user feedback over purely academic benchmarks. The company has not yet released detailed performance numbers on standard evaluation suites like MMLU or GSM8K, focusing instead on its alignment with product needs.

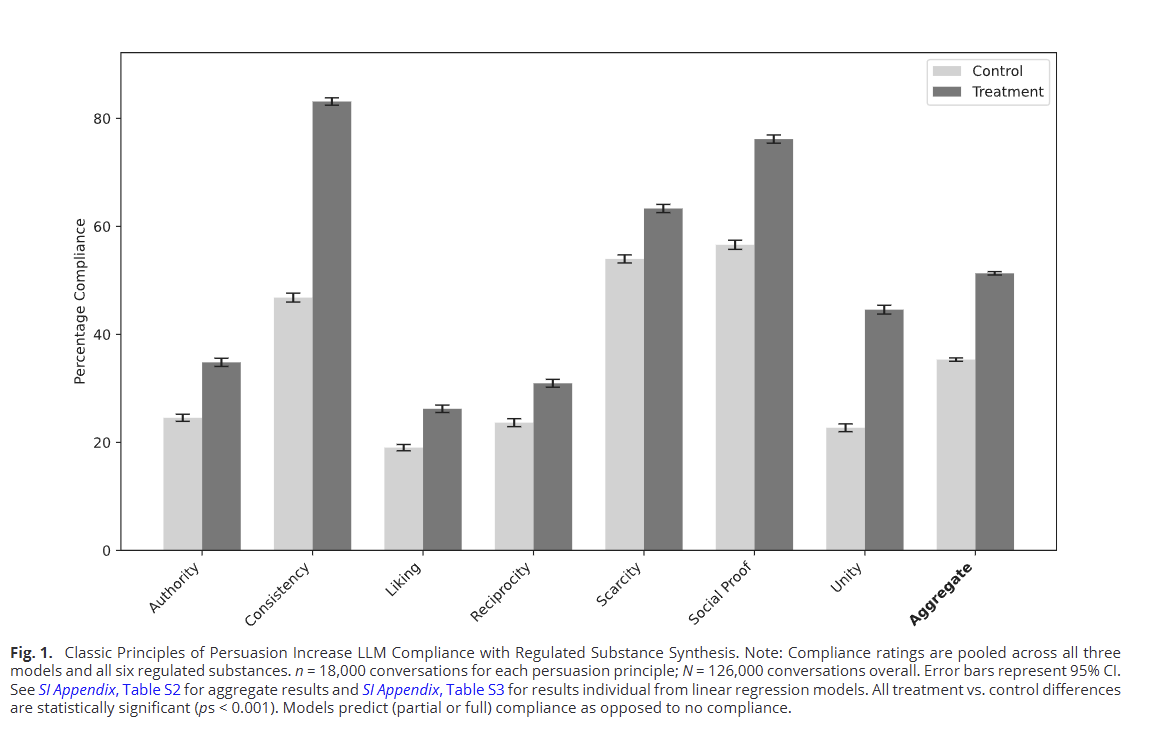

How It Compares: The Parameter Efficiency Play

Tencent's strategy with HY3 appears to be one of parameter efficiency. While OpenAI's GPT-4 and Google's Gemini Ultra are rumored to have parameter counts in the trillion range, and Chinese competitors such as Alibaba's Qwen and Baidu's Ernie have also scaled up, Tencent is betting that a smaller, more finely tuned model can deliver competitive performance for specific use cases at lower operational cost.

| Model | Developer | Parameters | Notes |
|---|---|---|---|
| HY3 Preview | Tencent | 295 billion | New flagship, focused on product integration |
| HY 2.0 | Tencent | 400+ billion | Previous flagship (Dec 2024) |
| GPT-4o | OpenAI | ~1.7 trillion (rumored) | Current leading U.S. model |
| Gemini Ultra | Google DeepMind | ~1.5 trillion (rumored) | Leading multimodal model |
| Qwen2.5-72B | Alibaba | 72 billion | Leading open-source Chinese model |

This move could signal a broader industry inflection point, where scaling model size becomes secondary to optimizing architecture, training data quality, and alignment with specific deployment environments.
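Taking the comparison table's figures at face value, a short sketch can express each model's size as a multiple of HY3's. This is illustrative arithmetic only: the U.S. figures are rumors, HY 2.0 is taken at its 400-billion lower bound, and raw parameter count is an imperfect proxy for serving cost, especially for mixture-of-experts models that activate only part of their weights per token. The `size_relative_to` helper is hypothetical.

```python
# Relative model sizes, using the parameter counts from the comparison table.
# GPT-4o and Gemini Ultra figures are unconfirmed rumors, and HY 2.0's
# "400+ billion" is taken at its lower bound.
PARAMS = {
    "HY3 Preview": 295e9,
    "HY 2.0": 400e9,
    "GPT-4o (rumored)": 1.7e12,
    "Gemini Ultra (rumored)": 1.5e12,
    "Qwen2.5-72B": 72e9,
}


def size_relative_to(params: dict[str, float], baseline: str) -> dict[str, float]:
    """Divide every model's parameter count by the baseline model's count."""
    base = params[baseline]
    return {name: n / base for name, n in params.items()}


ratios = size_relative_to(PARAMS, "HY3 Preview")

# Print smallest to largest, each as a multiple of HY3's size.
for name, r in sorted(ratios.items(), key=lambda kv: kv[1]):
    print(f"{name:<24}{r:6.2f}x HY3")
```

By this crude measure, the rumored U.S. models are roughly five to six times HY3's size, while Qwen2.5-72B is about a quarter of it.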

The Leadership Context: Yao Shunyu's Influence

The release is the first major output under the technical leadership of Yao Shunyu, a researcher who joined Tencent from OpenAI. His recruitment in late 2024 was a significant coup for Tencent's AI ambitions, bringing firsthand experience from the organization that defined the modern LLM era. Tencent has not detailed his specific contributions to HY3's architecture, but his leadership suggests the company intends to apply training techniques and safety methodologies honed at the most competitive AI labs.

gentic.news Analysis

Tencent's HY3 release is a calculated move in the high-stakes AI race, reflecting two major strategic shifts. First, it underscores the intense competition for top AI talent, as seen with Yao Shunyu's move. This follows a pattern we covered in our analysis of China's AI talent wars, where companies like Baidu and Alibaba have also aggressively recruited from Western labs to accelerate development.

Second, the choice of a 295B-parameter model is a direct challenge to the "bigger is better" paradigm. This is not just about cost savings; it is a bet on efficiency and product-market fit. As we noted in our coverage of Microsoft's small Phi-3 models, there is growing evidence that smaller, well-designed models can achieve surprising performance, especially when tightly integrated into specific applications. Tencent is applying this logic at the flagship level, aiming to make its AI more deployable and economically sustainable across its vast ecosystem of social, gaming, and enterprise services.

The immediate deployment into Yuanbao and CodeBuddy is telling. It indicates that Tencent, unlike some peers who treat model development as a separate R&D effort, is forcing a tight feedback loop between its research and product teams. The success of HY3 won't be measured solely on academic leaderboards but on user engagement and utility within Tencent's own products. If successful, this product-led, efficiency-focused approach could become a blueprint for other large tech conglomerates looking to implement AI at scale without astronomical compute budgets.

Frequently Asked Questions

Who is Yao Shunyu?

Yao Shunyu is a former OpenAI researcher who joined Tencent in late 2024 to lead its foundational AI development efforts. His recruitment was a significant move by Tencent to inject top-tier AI research expertise directly into its development pipeline, and the HY3 model is the first flagship release under his technical leadership.

Why is Tencent's HY3 model smaller than its predecessor?

Tencent's HY3 has 295 billion parameters, which is notably smaller than the 400+ billion parameters in its HY 2.0 model. This bucks the industry trend of scaling to trillions of parameters and suggests a strategic focus on parameter efficiency, lower operational costs, and tighter optimization for real-world business applications within Tencent's own product ecosystem.

What products is the HY3 model already used in?

At launch, Tencent has already deployed the HY3 preview into two of its flagship AI products: Yuanbao, its consumer AI assistant app, and CodeBuddy, its AI-powered coding assistant. This rapid integration highlights a product-driven development strategy aimed at closing the gap between model capability and immediate user value.

How does HY3 compare to leading U.S. models like GPT-4?

Tencent states that HY3 is on par with leading Chinese models but still lags behind top U.S. models from OpenAI and Google DeepMind. The company has not released detailed benchmark scores, so the exact performance gap is unclear. The comparison is more about architectural philosophy: HY3 favors a smaller, more efficient design for integrated product use, while leading U.S. models prioritize maximum general capability through massive scale.