Last week, OpenAI launched three interconnected products in a coordinated release, shifting focus from its flagship ChatGPT to specialized, high-stakes enterprise applications. The releases are a life sciences model, a cybersecurity variant of GPT-5.4, and an infrastructure update to the Agents SDK. Together they represent a strategic pivot toward vertical AI solutions.

Key Takeaways

- OpenAI launched GPT-Rosalind, a life sciences model whose best-of-ten predictions ranked above the 95th percentile of human experts on unpublished RNA sequences, and GPT-5.4-Cyber, a cybersecurity variant of GPT-5.4.

- These releases, alongside a major Agents SDK update, signal a pivot from general AI to specialized, high-stakes enterprise domains.

What's New: Three Pillars of a Vertical Strategy

OpenAI introduced three core offerings:

- GPT-Rosalind: A purpose-built life sciences model designed for multi-step scientific workflows.

- GPT-5.4-Cyber: A variant of GPT-5.4 optimized for cybersecurity defense, with lowered refusal boundaries for legitimate security work.

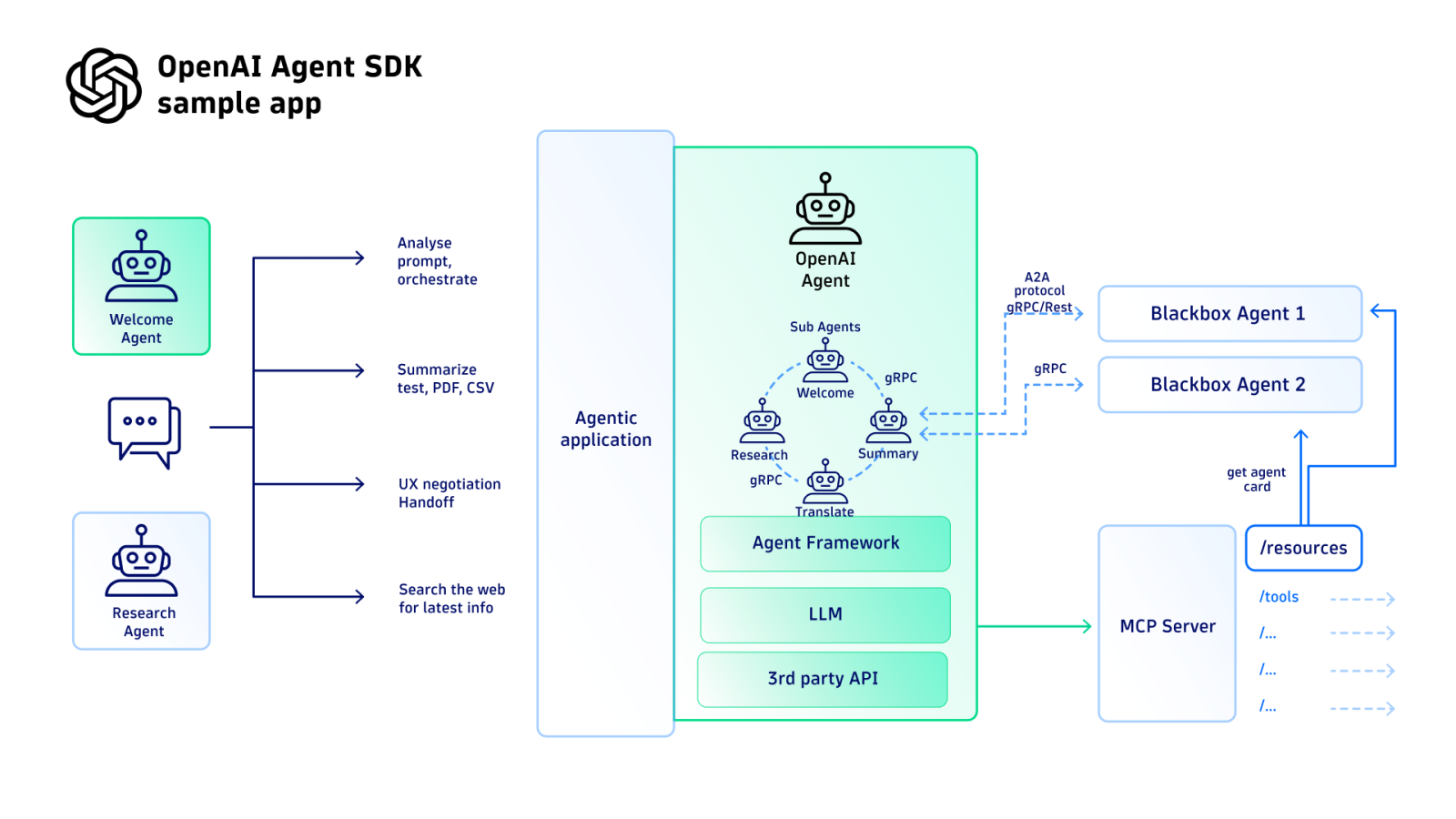

- Agents SDK Overhaul: A major update providing native infrastructure for building and deploying AI agents that can operate across files and tools on a computer.

These are not isolated experiments. They form a coherent stack: the specialized models provide domain-specific intelligence, while the updated SDK provides the essential "plumbing" to deploy them in real-world, agentic workflows.

GPT-Rosalind: A Model for the Lab

The Problem & The Build

Applying general AI to life sciences research is hampered by fragmented workflows. A researcher must chain together literature review, data analysis, experimental planning, and tool use—a process poorly served by generalist models. GPT-Rosalind is built specifically for this chain, with training focused on reasoning over molecules, proteins, genes, and pathways, and enhanced ability to use scientific tools and databases.

Key Results: Beating Human Experts on Novel Data

The most compelling result comes from an evaluation by Dyno Therapeutics. The model was tested on RNA sequence-to-function prediction using unpublished sequences. Because this data was absent from any public training set, memorization is an unlikely explanation for the results.

- Prediction Tasks: The model's best-of-ten submissions ranked above the 95th percentile of human experts.

- Sequence Generation: It reached the 84th percentile of human experts.

This performance on never-before-seen biological data suggests genuine utility in novel research, not just benchmark optimization.
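The article does not describe how the percentile figures were computed. As a rough illustration of what a "best-of-ten percentile against human experts" could mean, the sketch below ranks the best of several model scores against a distribution of human scores. The function names and all numbers are hypothetical and are not taken from the evaluation.

```python
from bisect import bisect_left


def percentile_rank(score: float, reference: list[float]) -> float:
    """Percentage of reference scores strictly below `score`."""
    ordered = sorted(reference)
    return 100.0 * bisect_left(ordered, score) / len(ordered)


def best_of_n_percentile(model_scores: list[float], human_scores: list[float]) -> float:
    """Rank the model's single best submission against the human scores."""
    return percentile_rank(max(model_scores), human_scores)


# Hypothetical scores for illustration only (higher is better).
human_scores = [0.41, 0.48, 0.52, 0.55, 0.57, 0.60, 0.62, 0.66, 0.70, 0.74,
                0.43, 0.50, 0.53, 0.56, 0.58, 0.61, 0.63, 0.67, 0.71, 0.79]
model_scores = [0.59, 0.64, 0.68, 0.61, 0.72, 0.77, 0.66, 0.70, 0.63, 0.75]

print(best_of_n_percentile(model_scores, human_scores))
```

Note that a best-of-ten figure is more generous than a single-attempt figure, because only the strongest of ten submissions is ranked.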

Deployment & Implications

Access is restricted to qualified enterprise customers in the U.S. and is subject to governance and beneficial use verification. OpenAI is already in production partnerships with Amgen, Moderna, Thermo Fisher Scientific, the Allen Institute, and Los Alamos National Laboratory for applications in drug candidate identification and protein design.

Given that drug development typically takes 10-15 years, a model that accelerates the front-end analytical work could have a compounding effect on timelines. However, its capability also introduces serious biosecurity considerations, which the gated access is designed to mitigate.

GPT-5.4-Cyber: Democratizing Defensive AI

Capabilities & Philosophy

GPT-5.4-Cyber is engineered for advanced defensive workflows. Its standout capability is binary reverse engineering: analyzing compiled software for vulnerabilities without access to the source code. This addresses a major gap, as most real-world security work involves proprietary or unavailable source code.

This launch is a direct counter to Anthropic's Project Glasswing, a tightly controlled coalition offering exclusive access to a top-tier model for 12 partners. OpenAI is pursuing a different philosophy: broad, tiered access scaled to thousands of vetted security professionals, using identity verification and monitoring instead of manual gatekeeping.

The company points to the track record of its Codex Security program, which has contributed to fixing over 3,000 critical vulnerabilities, as evidence that broad access can generate significant defensive value. The open question is whether identity verification and monitoring reduce misuse risk in proportion to the wider access they enable.
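To make the idea of analyzing compiled software without source code more concrete, here is a deliberately simple sketch of one classic first step in binary triage: pulling printable strings out of raw bytes and checking them against a watchlist of risky C library calls. It says nothing about how GPT-5.4-Cyber works internally. The watchlist, function names, and sample bytes are all assumptions made for illustration.

```python
import re

# Hypothetical watchlist: C library calls that commonly lead to memory-safety bugs.
RISKY_CALLS = {b"gets", b"strcpy", b"strcat", b"sprintf"}


def printable_strings(blob: bytes, min_len: int = 4) -> list[bytes]:
    """Return runs of printable ASCII that are at least `min_len` bytes long."""
    pattern = rb"[\x20-\x7e]{%d,}" % min_len
    return re.findall(pattern, blob)


def flag_risky_calls(blob: bytes) -> set[bytes]:
    """Return the extracted strings that exactly match a watchlist entry."""
    return {s for s in printable_strings(blob) if s in RISKY_CALLS}


# Made-up bytes standing in for a compiled binary (not a valid executable).
sample = b"\x7fELF\x02\x01\x00\x00strcpy\x00printf\x00gets\x00\x01\x02/bin/sh\x00"

print(printable_strings(sample))
print(flag_risky_calls(sample))
```

Real reverse engineering goes much further (disassembly, control-flow recovery, data-flow analysis), but even this step shows that a binary carries usable signal when no source is available.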

The Agents SDK: The Glue That Holds It Together

The less-heralded but critical update is to the Agents SDK. It introduces a model-native harness for cross-file tool use, a new sandbox execution environment, and configurable memory systems. This significantly lowers the engineering barrier to deploying complex, persistent AI agents.

This infrastructure is what makes the specialized models operable. GPT-Rosalind needs to query databases and run analyses; GPT-5.4-Cyber needs persistent state across long binary analysis sessions. The SDK provides the necessary scaffolding, while also strategically tying developers more deeply into OpenAI's ecosystem.
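The following is a library-free sketch of the general pattern described above: an agent that dispatches named tools and keeps state across steps. It does not use or reproduce the Agents SDK's actual API. The `Memory` class, the `TOOLS` registry, and `run_agent` are hypothetical names, the plan is fixed rather than chosen by a model, and sandboxing is not modeled at all.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Memory:
    """Hypothetical persistent state carried across the steps of a session."""
    notes: list[str] = field(default_factory=list)

    def remember(self, note: str) -> None:
        self.notes.append(note)


# Hypothetical tool registry: tool name -> function from string to string.
TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
    "upper": lambda text: text.upper(),
}


def run_agent(plan: list[tuple[str, str]], memory: Memory) -> list[str]:
    """Run a fixed plan of (tool, argument) steps, logging each result to memory.

    A real agent would ask a model to pick the next step; here the plan is given.
    """
    results = []
    for tool_name, arg in plan:
        tool = TOOLS.get(tool_name)
        if tool is None:
            output = f"error: unknown tool {tool_name!r}"
        else:
            output = tool(arg)
        memory.remember(f"{tool_name}({arg!r}) -> {output}")
        results.append(output)
    return results


memory = Memory()
outputs = run_agent([("word_count", "a b c"), ("upper", "ok"), ("missing", "x")], memory)
print(outputs)
print(memory.notes)
```

The point of the sketch is the division of labor: the model supplies decisions, while the harness handles dispatch, error reporting, and state that outlives any single step.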

Context: A Week of Extremes

The technical launches were overshadowed by a violent incident. On April 10, a 20-year-old man was arrested after throwing an incendiary device at the gate of CEO Sam Altman's San Francisco home and attempting to breach OpenAI's headquarters. He was carrying a document expressing opposition to AI and a list of AI executives' addresses.

While the attack was an isolated extremist act, it occurred against a backdrop of rapidly accelerating, visibly powerful AI releases from multiple companies, which are generating palpable public anxiety. The event starkly illustrates the real-world tensions now surrounding AI development.

gentic.news Analysis

OpenAI's triple launch is a definitive move from horizontal platform to vertical solution provider, directly challenging both specialized AI biotech firms and cybersecurity incumbents. The GPT-Rosalind launch, following the pattern of its earlier Mythos model for finance, shows OpenAI's strategy of creating domain-specific variants with controlled access for high-risk, high-reward fields. This aligns with a broader industry trend we covered in March 2026, where Google DeepMind and Meta also announced specialized science models, though OpenAI's reported performance on novel biological data is particularly striking.

The GPT-5.4-Cyber release is a clear, rapid competitive response to Anthropic's Project Glasswing, revealing a fundamental philosophical schism in AI safety strategy: exclusive control versus verified democratization. This competition is intensifying as the stakes grow. Notably, this launch week for OpenAI occurred amidst reports that Anthropic's revenue has surged, potentially edging out OpenAI's own and fueling speculation of a trillion-dollar valuation. This financial context makes the battle for enterprise dominance in sectors like cybersecurity and biotech even more urgent for both firms.

The underlying Agents SDK push is perhaps the most strategically significant. By owning the agentic infrastructure layer, OpenAI is not just selling model calls; it's building the operational environment for next-generation AI applications. This creates powerful lock-in and positions the company as the foundational platform for automated, multi-step professional work. The week's events—from lab-ready models to a physical attack—encapsulate the dual reality of modern AI: its potential to reshape critical industries is maturing just as the societal and security pressures surrounding it reach a boiling point.

Frequently Asked Questions

What is GPT-Rosalind?

GPT-Rosalind is a specialized AI model from OpenAI built for life sciences and drug discovery. It is designed to reason over biological data like proteins and genes, use scientific tools, and assist in multi-step research workflows such as literature review and experimental planning. In an evaluation by Dyno Therapeutics on unpublished RNA sequences, its best-of-ten predictions ranked above the 95th percentile of human experts.

How can I access GPT-5.4-Cyber?

Access to GPT-5.4-Cyber is currently available to vetted cybersecurity professionals. OpenAI employs a tiered access system with identity verification and activity monitoring, rather than an exclusive partnership model. Interested defense teams likely need to apply through OpenAI's enterprise security program.

What does the updated OpenAI Agents SDK do?

The updated Agents SDK provides developers with native infrastructure to build and deploy AI agents that can operate tools and work across files directly on a computer. It includes a sandbox execution environment, configurable memory systems, and improved orchestration, significantly reducing the custom engineering required to create reliable, secure agentic systems.

How does OpenAI's approach to AI security differ from Anthropic's?

OpenAI favors a broad-access model for defensive AI (GPT-5.4-Cyber), aiming to scale tools to thousands of verified defenders. Anthropic's Project Glasswing uses a tightly controlled, exclusive coalition model, granting deep access to a small group of hand-picked partners. OpenAI bets on scale and verification; Anthropic bets on concentrated capability and maximum control per partner.