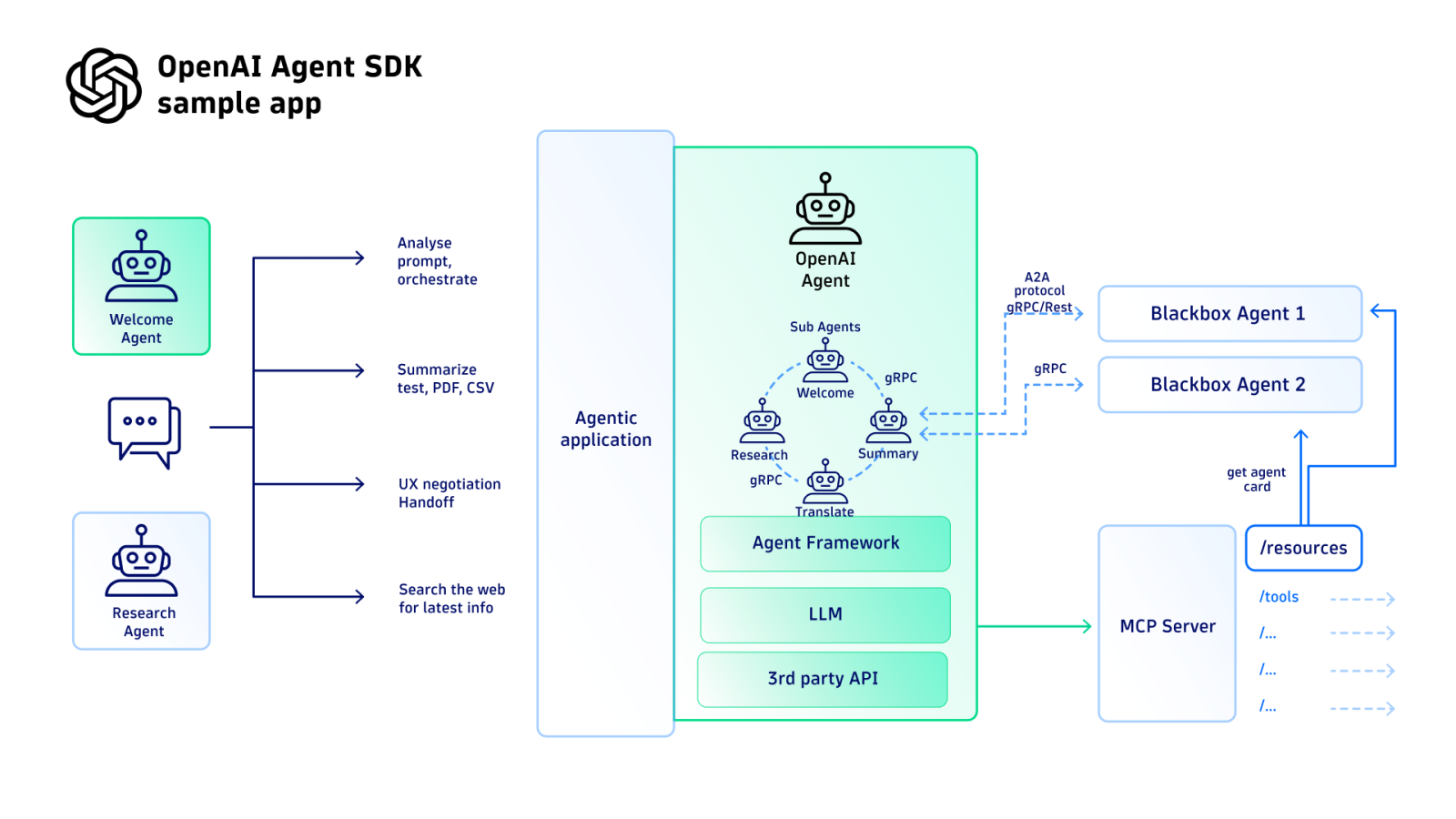

OpenAI has announced new capabilities for its Agents SDK, providing developers with more control over the execution of long-running AI agents. The update, announced via the official @OpenAIDevs account, introduces features aimed at improving the reliability and manageability of agentic workflows.

What's New

The new capabilities focus on two primary areas: execution environment and execution control.

- Run agents in containers: Agents can now be executed within isolated container environments. This addresses a key challenge in production agent deployments by providing a standardized, reproducible runtime that encapsulates dependencies and improves security.

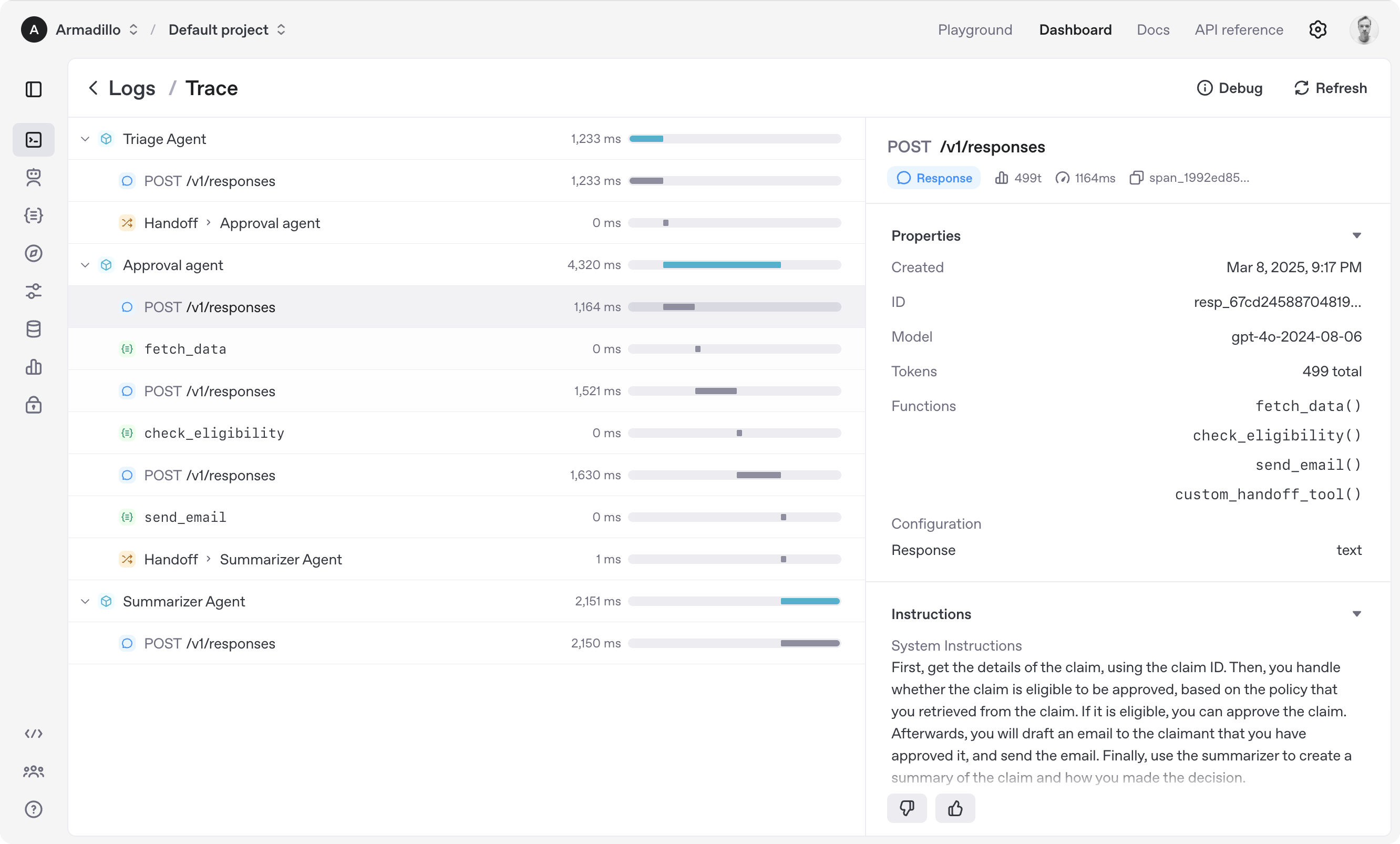

- Step-by-step execution control: Developers gain fine-grained control over an agent's execution flow. This allows for manual intervention, debugging, and state inspection at each step of an agent's reasoning or action loop, moving beyond a simple "run to completion" model.
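As a rough illustration of what stepping through a run could look like, the sketch below uses a plain Python generator that pauses after each step so the caller can inspect state before continuing. It is not the Agents SDK API: `Step`, `run_stepwise`, and the scripted plan are hypothetical names standing in for a real model and real tools.

```python
from dataclasses import dataclass
from typing import Iterator


@dataclass
class Step:
    index: int        # position of the step in the run
    action: str       # what the agent proposes to do
    observation: str  # result recorded after the action runs


def run_stepwise(plan: list[str]) -> Iterator[Step]:
    """Yield one Step at a time; the caller decides when to resume.

    `plan` is a scripted stand-in for what a model would decide (assumption).
    """
    for index, action in enumerate(plan):
        # A real agent would call a tool here; this sketch just echoes.
        observation = f"done: {action}"
        yield Step(index, action, observation)  # execution pauses here


# Hypothetical three-step task.
run = run_stepwise(["search docs", "write summary", "send report"])

first = next(run)      # advance exactly one step, then stop
print(first)           # inspect state before deciding to continue

remaining = list(run)  # resume and run the rest to completion
```

The point of the generator is that nothing past the `yield` runs until the caller asks for the next step, which is the opposite of a "run to completion" call.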

Technical Context

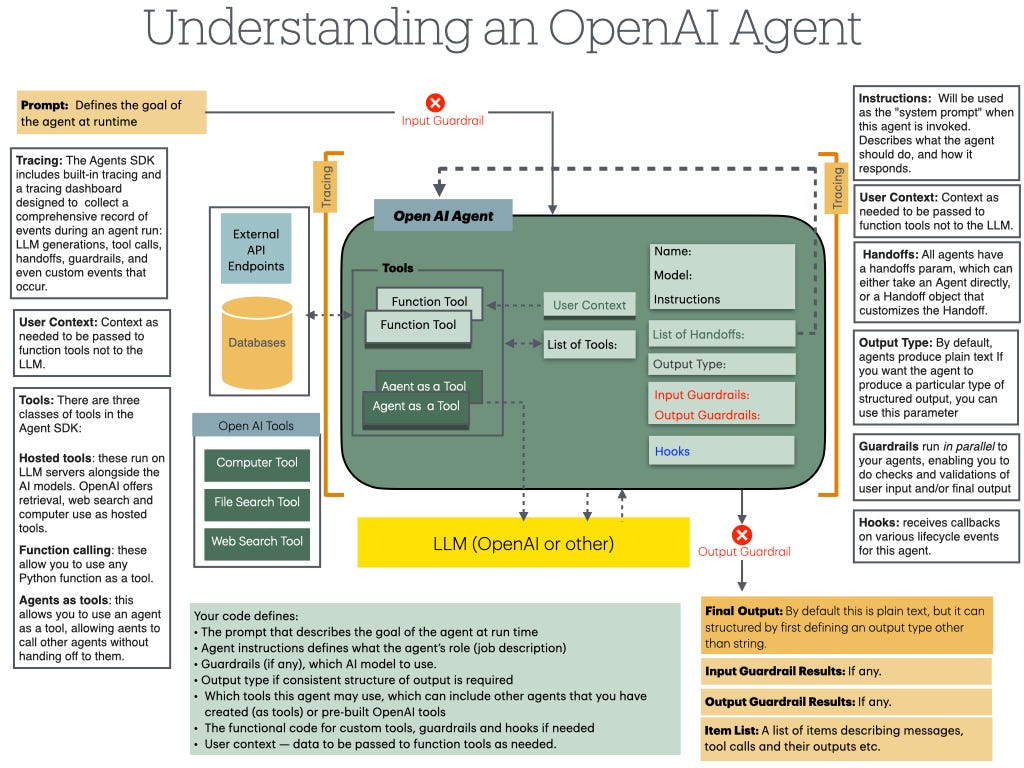

OpenAI's Agents SDK is a framework for building applications where an LLM-powered agent can execute multi-step tasks using tools (like code interpreters, web search, or custom APIs). Until now, managing the lifecycle and state of these agents, especially for complex, long-running tasks, has been a significant engineering hurdle.

Containerization addresses the "it works on my machine" problem for agents by keeping the runtime consistent from development to production. Step control, meanwhile, is critical for debugging complex agent failures and for building human-in-the-loop workflows where a user might need to approve or correct an agent's proposed action before it proceeds.

What This Means in Practice

For developers building with the Agents SDK, this update means:

- Increased Reliability: Containerized runs reduce environment-specific bugs.

- Better Debugging: Step control allows developers to pause an agent, examine its planned actions, and inject corrections.

- Production Readiness: These features are foundational for moving agents from prototypes to dependable, operational systems.

gentic.news Analysis

This update responds to the main friction point developers have faced with the Agents SDK and with similar frameworks such as LangChain and LlamaIndex: operationalizing agents is hard. Prototyping a simple agent is straightforward; deploying one that runs reliably for hours or days, handles errors gracefully, and can be debugged is a different challenge entirely. OpenAI is tackling the infrastructure layer head-on.

This move aligns with a clear industry trend we've been tracking: the shift from standalone LLM calls to orchestrated, stateful agent systems. As we covered in our analysis of Anthropic's Claude 3.5 Sonnet agentic capabilities, the competitive frontier is no longer just benchmark scores but the tools and frameworks that make AI reliably useful. OpenAI's containerization play also mirrors the approach of startups like Cognition Labs, whose Devin AI agent operates in a sandboxed environment for safety and control.

The step-by-step control feature is particularly significant. It represents a maturation from viewing the LLM as a black-box generator to treating the agent as a debuggable process. This is essential for enterprise adoption, where audit trails, compliance, and the ability to intervene are non-negotiable. It also opens the door for more sophisticated human-AI collaboration patterns, a topic of increasing research focus.

Frequently Asked Questions

What is the OpenAI Agents SDK?

The OpenAI Agents SDK is an open-source framework, available for Python and TypeScript, that helps developers build applications where an AI agent, powered by models like GPT-4, can plan and execute multi-step tasks by using tools (e.g., running code, searching the web, calling APIs). It provides the scaffolding for the agent's reasoning loop, memory, and tool integration.
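The reasoning loop, memory, and tool integration mentioned above can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not the SDK's implementation: `scripted_model` stands in for an LLM call, and `TOOLS`, `run_agent`, and the message format are hypothetical.

```python
from typing import Callable

# Tool registry: name -> plain Python function (hypothetical tools).
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
    "upper": lambda arg: arg.upper(),
}


def scripted_model(memory: list[dict]) -> dict:
    """Stand-in for an LLM: picks the next move from the conversation so far."""
    tool_results = [m for m in memory if m["role"] == "tool"]
    if not tool_results:
        # No tool output yet, so ask for a calculation.
        return {"type": "tool_call", "tool": "add", "arg": "2+3"}
    # A tool result is available, so finish with an answer.
    return {"type": "final", "text": f"The sum is {tool_results[-1]['content']}"}


def run_agent(task: str, max_steps: int = 5) -> tuple[str, list[dict]]:
    """Loop: ask the model, run the requested tool, store the result in memory."""
    memory = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        decision = scripted_model(memory)
        if decision["type"] == "final":
            return decision["text"], memory
        result = TOOLS[decision["tool"]](decision["arg"])
        memory.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


answer, memory = run_agent("What is 2 + 3?")
print(answer)
```

The `max_steps` cap is one simple guard against a loop that never reaches a final answer, a concern that grows with long-running agents.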

How does containerization help with AI agents?

Containerization (using technologies like Docker) packages an agent's code, runtime, system tools, and libraries into a single, isolated unit. This ensures the agent runs identically in development, testing, and production, eliminating bugs caused by differing system environments. It also enhances security by limiting the agent's access to the host system.
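To make the isolation point concrete, here is a sketch that assembles a locked-down `docker run` command in Python. The flags are standard Docker CLI options; the image name `agent-runtime:latest`, the helper `build_docker_command`, and the resource limits are placeholders. The announcement does not describe how the Agents SDK launches containers, so treat this as general Docker practice rather than the SDK's mechanism.

```python
import shlex


def build_docker_command(image: str, agent_cmd: list[str],
                         memory: str = "512m", cpus: str = "1.0") -> list[str]:
    """Return an argv list for running an agent in a restricted container."""
    return [
        "docker", "run",
        "--rm",                 # delete the container when it exits
        "--network", "none",    # no network access from inside the container
        "--read-only",          # root filesystem is mounted read-only
        "--memory", memory,     # cap RAM usage
        "--cpus", cpus,         # cap CPU usage
        image,
        *agent_cmd,
    ]


# Placeholder image and entry point (assumptions for illustration).
cmd = build_docker_command("agent-runtime:latest", ["python", "agent.py"])
print(shlex.join(cmd))
```

Passing `cmd` to `subprocess.run(cmd, check=True)` would launch the container on a machine with Docker installed. Note that `--network none` only suits code that needs no outbound access; an agent that calls a hosted model would need a more permissive network setting.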

What can I use step-by-step execution control for?

Step control is useful in several scenarios: debugging a failing agent by inspecting its state before it makes an error; creating approval workflows where a human must sign off on a consequential action (like sending an email or making a purchase); and teaching, where observers watch how an agent breaks down and solves a complex problem.
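An approval workflow of the kind described here might look like the following sketch. Everything in it is hypothetical: the `CONSEQUENTIAL` set, `execute_with_approval`, and the `cautious_reviewer` callback (a stand-in for a human) are illustrative names, not part of the Agents SDK.

```python
from typing import Callable

# Actions that must not run without sign-off (hypothetical names).
CONSEQUENTIAL = {"send_email", "make_purchase"}


def execute_with_approval(action: str, payload: str,
                          approve: Callable[[str, str], bool],
                          audit_log: list[str]) -> str:
    """Run an action, asking the approver first if it is consequential."""
    if action in CONSEQUENTIAL and not approve(action, payload):
        audit_log.append(f"REJECTED {action}: {payload}")
        return "skipped"
    audit_log.append(f"EXECUTED {action}: {payload}")
    # A real system would perform the action here; the sketch only records it.
    return "done"


def cautious_reviewer(action: str, payload: str) -> bool:
    """Stand-in for a human: allows email, blocks purchases."""
    return action != "make_purchase"


log: list[str] = []
results = [
    execute_with_approval("web_search", "container docs", cautious_reviewer, log),
    execute_with_approval("send_email", "weekly report", cautious_reviewer, log),
    execute_with_approval("make_purchase", "GPU credits", cautious_reviewer, log),
]
print(results)
print(log)
```

Low-risk actions such as the search pass straight through, while the log keeps a record of every decision, approved or not.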

Is the Agents SDK free to use?

The Agents SDK itself is free to use. However, running agents built with it incurs costs for the underlying OpenAI API calls (e.g., to GPT-4 or GPT-4o), billed at standard OpenAI rates for input and output tokens and for any optional features used.
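Since billing is per token, a back-of-the-envelope estimate is simple arithmetic. The sketch below shows the calculation with placeholder prices; the rates are invented for illustration and are not OpenAI's actual pricing, and `estimate_cost` is a hypothetical helper.

```python
def estimate_cost(input_tokens: int, output_tokens: int,
                  input_price_per_million: float,
                  output_price_per_million: float) -> float:
    """Return the estimated cost in dollars for one agent run."""
    return (input_tokens / 1_000_000 * input_price_per_million
            + output_tokens / 1_000_000 * output_price_per_million)


# Placeholder prices (NOT real OpenAI rates):
# $2 per 1M input tokens, $8 per 1M output tokens.
cost = estimate_cost(input_tokens=400_000, output_tokens=50_000,
                     input_price_per_million=2.0,
                     output_price_per_million=8.0)
print(f"${cost:.2f}")  # prints $1.20
```

Long-running agents tend to accumulate input tokens quickly because each step typically resends prior context, so the input side of the estimate often dominates.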