A new data-free method called Deep Neural Lesion (DNL) locates a handful of critical parameters within a neural network. Flipping the sign bits of just these parameters can cause catastrophic model failure. In experiments, flipping two sign bits reduced ResNet-50's ImageNet accuracy from 76.1% to 0.3%, a 99.8% relative drop. For the 30-billion-parameter Qwen3 language model, the same attack dropped its GSM8K math-reasoning score from 84.5% to 0%.

The research, conducted by teams from IBM, Technion, and NVIDIA, introduces a novel vulnerability class for deployed AI models. Unlike traditional adversarial attacks that manipulate inputs, DNL directly targets the model's stored weights, requiring no access to training data or the model's inference API.

Key Takeaways

- Researchers introduced Deep Neural Lesion (DNL), a method to find critical parameters.

- Flipping just two sign bits reduced ResNet-50 accuracy by 99.8% and Qwen3-30B reasoning to 0%.

What the Researchers Built

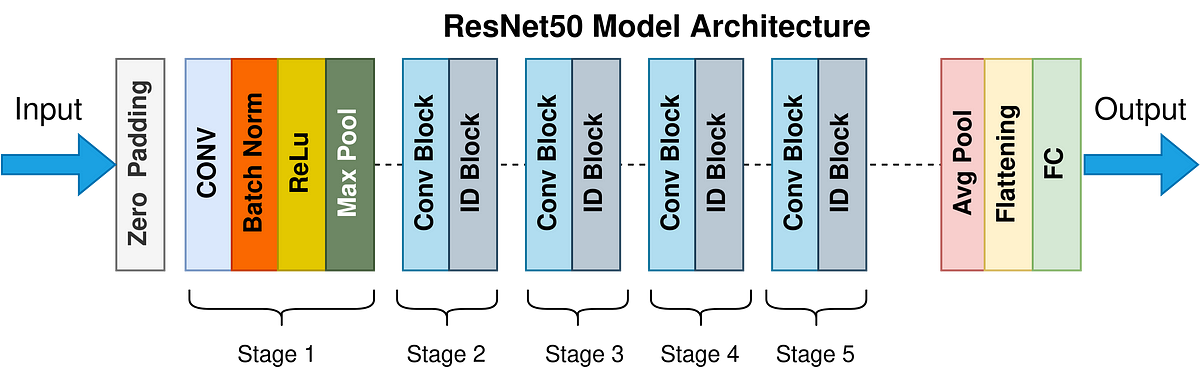

The team developed Deep Neural Lesion (DNL), a method to identify the most critical individual parameters in a pre-trained neural network. The core insight is that not all parameters contribute equally to model performance. A tiny subset, often parameters whose sign (positive or negative) is crucial, acts as the network's linchpins. Corrupting them causes disproportionate damage.

DNL operates in a data-free setting. It does not require any original training data or even a representative dataset. Instead, it analyzes the model's weight distribution and structure to score each parameter's estimated impact on the loss function if its sign were flipped.

Key Results

The paper demonstrates the attack's potency across vision and language models.

| Model | Task | Baseline | After attack | Relative drop |
|---|---|---|---|---|
| ResNet-50 | ImageNet Classification | 76.1% (Top-1 Acc.) | 0.3% | 99.8% |
| Qwen3-30B | GSM8K (Math Reasoning) | 84.5% | 0.0% | 100.0% |
| ViT-B/16 | ImageNet Classification | 81.8% | 0.4% | 99.5% |

For larger models, slightly more bits are needed, but the number remains astonishingly low. Corrupting 15 bits in a CLIP ViT-L/14 model reduced its zero-shot ImageNet accuracy from 76.2% to 0.6%.

How It Works: The Technical Explanation

DNL's attack has two phases: identification and corruption.

Identification (Scoring Critical Bits): The method scores each parameter $w_i$ by estimating the expected increase in loss if its sign bit were flipped. The researchers derive a first-order approximation:

$$\mathrm{Score}(w_i) \approx |w_i \cdot g_i|$$

where $g_i$ is an approximation of the gradient. Since the attack is data-free, $g_i$ is estimated using synthetic data generated via a lightweight generator or, in some cases, derived from the model's own batch normalization statistics (for vision models).

Corruption (Bit-Flip Attack): The attacker flips the sign bits of the top-k highest-scoring parameters. In a quantized model, this corresponds to changing a single bit in the parameter's binary representation. In floating-point models, it's equivalent to multiplying the parameter by -1.
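A score of the form $|w_i \cdot g_i|$ can be motivated as follows. This is only a sketch, assuming a first-order Taylor expansion of the loss $L$ around the current weights; the article does not reproduce the paper's own derivation. Flipping the sign replaces $w_i$ with $-w_i$, a change of $\Delta w_i = -2 w_i$, so:

$$\Delta L \approx g_i \, \Delta w_i = -2\, w_i\, g_i \quad\Longrightarrow\quad |\Delta L| \approx 2\,|w_i \cdot g_i| \;\propto\; \mathrm{Score}(w_i)$$

Under this assumption, ranking parameters by $|w_i \cdot g_i|$ is the same as ranking them by the estimated magnitude of the loss change a sign flip would cause.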

The attack is particularly effective because it targets the sign bit, which is typically the most significant bit in a parameter's representation. A single flip creates a large, semantically opposite perturbation in the model's function.
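The two phases can be sketched in a few lines of NumPy. This is an illustrative sketch, not the authors' implementation: the function names are hypothetical, the weights are assumed to be stored as float32, and `grads` is assumed to be whatever gradient estimate is available (the data-free estimation step described above is not reproduced here).

```python
import numpy as np

def sign_flip_scores(weights: np.ndarray, grads: np.ndarray) -> np.ndarray:
    """First-order score |w_i * g_i| for every parameter."""
    return np.abs(weights * grads)

def flip_top_k_sign_bits(weights: np.ndarray, grads: np.ndarray, k: int):
    """Return a float32 copy of `weights` with the sign bits of the k
    highest-scoring entries flipped, plus the flat indices that were flipped."""
    # np.array copies by default, so the caller's tensor is left untouched.
    w = np.array(weights, dtype=np.float32, order="C")
    g = np.asarray(grads, dtype=np.float32)
    scores = sign_flip_scores(w, g).ravel()
    # Indices of the k largest scores, highest first.
    top = np.argsort(scores)[::-1][:k]
    # Reinterpret the float32 storage as uint32 so single bits can be toggled.
    bits = w.view(np.uint32).reshape(-1)
    bits[top] ^= np.uint32(0x80000000)  # bit 31 is the IEEE 754 sign bit
    return w, top

# Toy usage: random tensors stand in for a layer's weights and gradient estimate.
rng = np.random.default_rng(0)
w = rng.normal(size=(4, 4)).astype(np.float32)
g = rng.normal(size=(4, 4)).astype(np.float32)
w_attacked, flipped = flip_top_k_sign_bits(w, g, k=2)
print("flipped flat indices:", flipped)
```

Toggling bit 31 through a `uint32` view, rather than multiplying by -1, mirrors the point that the corruption is a single-bit change in storage.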

Why It Matters: A New Attack Surface

This research exposes a severe and practical vulnerability in neural network deployment.

- Physical Attack Vector: The attack could be executed on models stored in DRAM memory, which is susceptible to bit-flip errors from RowHammer or hardware fault injection attacks. An adversary with brief physical access could potentially corrupt a model irreversibly.

- Model Integrity & Security: It challenges the integrity of models distributed as weight files (.pt, .safetensors, .bin). A malicious actor could subtly corrupt a widely-downloaded model checkpoint on a repository like Hugging Face, causing it to fail mysteriously for all users.

- Data-Free & Query-Free: DNL requires no query access to the model (unlike evasion attacks) and no original data (unlike poisoning attacks). It needs only a copy of the model weights, which makes it a white-box attack in access terms and a potent threat for stolen or leaked models.

gentic.news Analysis

This work, from teams at IBM, Technion, and NVIDIA, directly connects to the growing field of machine learning security and hardware-aware attacks. It follows a trend of research demonstrating that AI models are surprisingly brittle at the parameter level. For instance, in late 2025, we covered research from Google DeepMind on "Sleeper Agents": models with backdoors triggered by specific weight patterns. DNL shows that even without a planted backdoor, models have inherent critical failure points.

The involvement of NVIDIA is significant. As the dominant provider of AI hardware (GPUs) and software (CUDA, AI Enterprise), NVIDIA has a vested interest in understanding and mitigating threats to the entire AI stack. This research likely informs their work on hardware security features for next-generation GPUs and trusted execution environments for AI models.

Practically, this paper is a wake-up call for MLOps and platform engineers. Deploying a model is no longer just about latency and throughput; it now requires model integrity checks. Expect new tools for weight-file checksumming and runtime anomaly detection for parameter states, and perhaps cryptographic signing of model checkpoints as standard practice. The finding that two bits can destroy a 30B-parameter model will force a re-evaluation of how we store and verify these immensely valuable assets.

Frequently Asked Questions

What is a sign bit flip?

In computing, numbers are stored in a binary format. The sign bit is a single bit that determines whether a number is positive (often 0) or negative (often 1). Flipping this bit changes a parameter's value from, for example, +0.005 to -0.005, fundamentally altering its contribution to the network's calculations.
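To make this concrete, here is a small sketch that flips the sign bit of a single value, assuming the parameter is stored as an IEEE 754 32-bit float (where bit 31 is the sign bit). The helper names are hypothetical and chosen for illustration only.

```python
import struct

def float32_bits(x: float) -> str:
    """Return the 32-bit IEEE 754 encoding of x as a string of 0s and 1s."""
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    return f"{bits:032b}"

def flip_sign_bit(x: float) -> float:
    """Toggle bit 31 (the sign bit) of x's float32 encoding."""
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    (flipped,) = struct.unpack("<f", struct.pack("<I", bits ^ 0x80000000))
    return flipped

before = 0.005
after = flip_sign_bit(before)
print(float32_bits(before))  # leading bit is 0 (positive)
print(float32_bits(after))   # leading bit is 1 (negative), the rest is unchanged
print(after)                 # approximately -0.005, up to float32 rounding
```

Only the leading bit differs between the two encodings; the exponent and mantissa bits are identical, which is why the magnitude is preserved while the sign inverts.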

Is my deployed model vulnerable to this attack?

If an attacker can gain write access to the memory or storage where your model's weights are held, then yes, in principle. The primary risk is to models running on physical hardware an attacker can access (e.g., edge devices) or to model checkpoint files distributed online. Cloud API models where users cannot access the weights directly are less immediately vulnerable.

How can I defend against a DNL-style attack?

The paper suggests several defenses: 1) Weight regularization during training to reduce the concentration of criticality in a few parameters, 2) Randomized sign bits as a form of obfuscation, and 3) Runtime monitoring that checks for sudden, catastrophic drops in model confidence, which could indicate corruption. Implementing robust model integrity checks and secure boot processes for AI hardware will be crucial.
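As one illustration of a model integrity check, the sketch below verifies a weight file against a known SHA-256 digest before loading it. This is not one of the paper's listed defenses; the function names and the idea of a trusted, separately stored digest are assumptions made for the example. A check like this covers files on disk or in transit, not bits flipped in memory after the model has been loaded.

```python
import hashlib
import hmac

def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        # Read in chunks so multi-gigabyte checkpoints do not need to fit in RAM.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_checkpoint(path: str, expected_hex: str) -> bool:
    """Return True only if the file's digest matches the trusted digest."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())
```

Because a cryptographic hash changes unpredictably when any input bit changes, even a single flipped sign bit in the file makes `verify_checkpoint` return `False`, provided the expected digest comes from a source the attacker cannot modify.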

Does this affect all types of neural networks?

The paper demonstrated success on convolutional networks (ResNet), vision transformers (ViT), multimodal models (CLIP), and large language models (Qwen). The method is architecture-agnostic as it operates directly on the weight tensors, suggesting broad applicability across deep learning models.