Researchers from University College London (UCL) have reverse-engineered the leaked source code for Anthropic's Claude Code, revealing an architectural philosophy that inverts contemporary agent design. The key finding: only 1.6% of the codebase is dedicated to AI decision logic. The remaining 98.4% is operational infrastructure: permission gates, tool routing, context compaction, recovery logic, and session persistence. The analysis, detailed in the paper "Dive into Claude Code" (arXiv:2604.14228), suggests that as frontier coding models converge in raw ability, the quality of the deterministic "harness" surrounding them becomes the primary differentiator.

Key Takeaways

- A reverse-engineering analysis of Claude Code reveals only 1.6% of its codebase is AI decision logic, with the rest being operational infrastructure.

- This challenges current agent design paradigms by prioritizing a robust deterministic harness over complex model routing.

What the Researchers Found: The Harness is the Product

The core thesis uncovered by the UCL team is that Claude Code's architecture is built on a clear separation of concerns: the model reasons, the harness does everything else. This stands in direct opposition to popular frameworks like LangChain/LangGraph, which route model outputs through explicit, developer-defined state machines, or approaches like Cognition AI's Devin, which bolt heavy planning modules onto operational scaffolding.

Claude Code's design gives the model maximum decision latitude inside a rich, deterministic operational harness. The investment is overwhelmingly in engineering that harness.

The Deceptively Simple Core Loop

At its heart, Claude Code operates on a straightforward loop:

    while True:
        # 1. Call the model
        # 2. Parse and run tools
        # 3. Repeat

The sophistication lies entirely in the systems built around this loop.
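To make the loop concrete, here is a minimal runnable sketch. It is not Claude Code's implementation: the message format, the `run_agent` and `fake_model` names, the `max_turns` guard, and the stop condition (no tool calls in the reply) are all assumptions chosen for illustration.

```python
def run_agent(call_model, tools, user_prompt, max_turns=20):
    """Hypothetical agent loop: call the model, run requested tools, repeat."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_turns):
        # 1. Call the model with the full conversation so far.
        reply = call_model(messages)
        messages.append({"role": "assistant", "content": reply["text"]})

        # Assumed stop condition: a reply without tool calls is final.
        tool_calls = reply.get("tool_calls", [])
        if not tool_calls:
            return reply["text"]

        # 2. Parse and run each requested tool, feeding results back.
        for call in tool_calls:
            result = tools[call["name"]](**call["args"])
            messages.append(
                {"role": "tool", "name": call["name"], "content": str(result)}
            )
        # 3. Repeat.
    raise RuntimeError("turn limit reached without a final answer")


def fake_model(messages):
    """Scripted stand-in for a real model, used only to exercise the loop."""
    if messages[-1]["role"] == "tool":
        return {"text": f"The answer is {messages[-1]['content']}."}
    return {
        "text": "Let me add those.",
        "tool_calls": [{"name": "add", "args": {"a": 2, "b": 3}}],
    }


answer = run_agent(fake_model, {"add": lambda a, b: a + b}, "What is 2 + 3?")
print(answer)
```

Everything the article goes on to describe (permissions, compaction, recovery) would wrap the three numbered steps rather than change them.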

Key Systems of the Operational Harness

The reverse-engineering highlights several meticulously engineered subsystems that handle the complexities of real-world agent operation.

1. A Multi-Layered Permission System

The system employs 7 permission modes backed by an ML classifier. A critical insight from the data is that users approve 93% of permission prompts anyway, so an additional warning adds friction without adding much protection. Instead of adding more obstructive warnings, the architecture compensates with automated, layered security checks, streamlining user experience while maintaining safety.
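One way to picture layered checks is as a chain where each layer either settles the decision or passes it on. The sketch below is an assumption-heavy illustration: the article does not name the seven modes, so none are modeled, and `check_permission`, the rule patterns, the `risk_threshold`, and the keyword-based `classify_risk` (a stand-in for the ML classifier) are all hypothetical.

```python
from enum import Enum
from fnmatch import fnmatch


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"


def classify_risk(command):
    """Stand-in for the ML classifier: a keyword heuristic returning 0..1."""
    risky_tokens = ("rm -rf", "sudo", "| sh")
    return 1.0 if any(token in command for token in risky_tokens) else 0.1


def check_permission(command, deny_rules, allow_rules, risk_threshold=0.5):
    """Hypothetical layered gate; the user is prompted only as a last resort."""
    # Layer 1: explicit deny rules always win.
    if any(fnmatch(command, pattern) for pattern in deny_rules):
        return Decision.DENY
    # Layer 2: explicit allow rules skip the prompt entirely.
    if any(fnmatch(command, pattern) for pattern in allow_rules):
        return Decision.ALLOW
    # Layer 3: the classifier auto-approves low-risk commands.
    if classify_risk(command) < risk_threshold:
        return Decision.ALLOW
    # Layer 4: anything still undecided goes to the user.
    return Decision.ASK


deny = ["git push*"]
allow = ["git *"]
print(check_permission("git push --force", deny, allow))
print(check_permission("git status", deny, allow))
print(check_permission("sudo rm -rf /tmp/x", [], []))
```

The ordering reflects the article's point: if users approve most prompts anyway, the automated layers have to carry the safety load before a prompt is ever shown.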

2. A 5-Stage Context Compaction Pipeline

To manage context window limits efficiently, Claude Code uses a tiered compaction strategy where each layer runs only when cheaper ones fail:

- Budget Reduction: Initial lightweight trimming.

- Snip: Removing non-essential sections.

- Microcompact: Aggressive compression of verbose sections.

- Context Collapse: Summarizing entire blocks of history.

- Auto-Compact: A final, comprehensive compression pass.

This ensures the most cost-effective compaction method is always attempted first.
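The "cheapest first, escalate only on failure" pattern can be sketched as a loop over ordered stages that stops as soon as the context fits. This is a toy under stated assumptions: tokens are approximated by word counts, only three placeholder stages stand in for the five named above, and the stage bodies (`trim_long`, `drop_oldest_half`, `summarize_all`) are invented for illustration rather than taken from the paper.

```python
def count_tokens(messages):
    """Crude proxy for token count: whitespace-separated words."""
    return sum(len(m.split()) for m in messages)


def compact(messages, budget, stages):
    """Apply stages in order of cost, stopping once the context fits."""
    applied = []
    for name, stage in stages:
        if count_tokens(messages) <= budget:
            break  # cheaper stages were enough; skip the expensive ones
        messages = stage(messages)
        applied.append(name)
    return messages, applied


# Placeholder stage bodies, cheapest first (hypothetical behavior).
def trim_long(messages):
    return [" ".join(m.split()[:8]) for m in messages]


def drop_oldest_half(messages):
    return messages[len(messages) // 2:]


def summarize_all(messages):
    return [f"[summary of {len(messages)} messages]"]


STAGES = [
    ("budget_reduction", trim_long),
    ("snip", drop_oldest_half),
    ("auto_compact", summarize_all),
]

history = ["word " * 20 for _ in range(4)]  # 4 messages, 20 words each

light, light_stages = compact(history, budget=40, stages=STAGES)
heavy, heavy_stages = compact(history, budget=10, stages=STAGES)
print(light_stages)
print(heavy_stages)
```

With a generous budget only the first stage runs; with a tight one the pipeline escalates through every stage, which is the behavior the tiered design is meant to guarantee.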

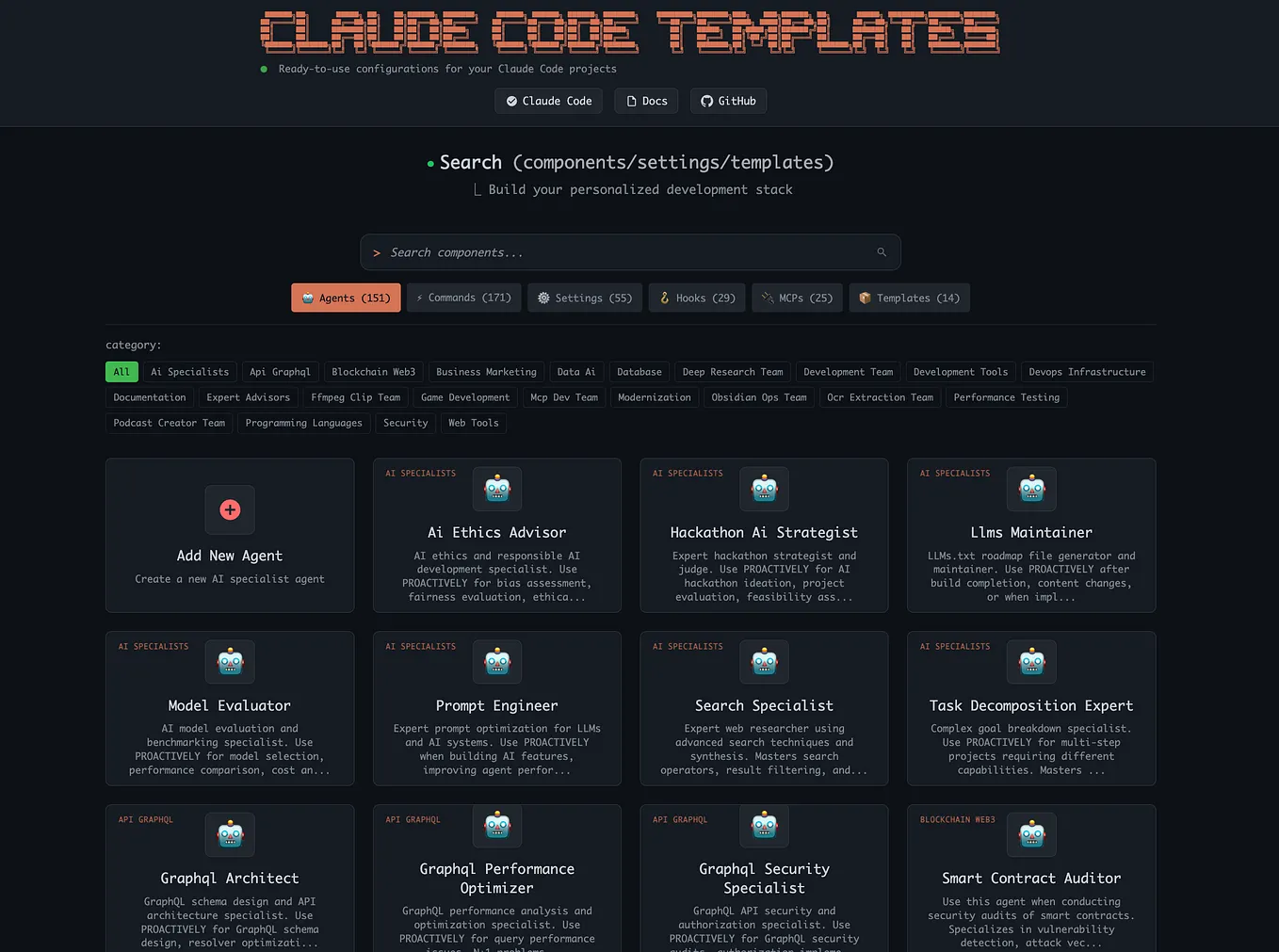

3. Four Extension Mechanisms, Ordered by Cost

Integrations are categorized by their context token cost, each solving a different problem:

- Hooks: Zero-context-cost triggers for specific events.

- Skills: Low-cost, reusable function calls.

- Plugins: Medium-cost, external service integrations.

- MCP (Model Context Protocol): High-cost, rich external tool use.

This hierarchy forces developers to choose the most context-efficient integration method.

4. Subagent Architecture with Sidechains

When Claude Code spawns subagents, only summary text is returned to the parent agent. The full interaction transcripts are stored in separate "sidechain" files. This keeps the main context clean but introduces overhead: the research notes that agent teams still cost roughly 7x the tokens of a standard session.
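A minimal sketch of the sidechain idea follows, assuming a JSON Lines file per subagent and a summary taken from the subagent's last message. The file layout, the `run_subagent` name, and the canned transcript are hypothetical; the article only states that transcripts live in separate files and that the parent receives summary text.

```python
import json
import tempfile
from pathlib import Path


def run_subagent(task, sidechain_dir, agent_id):
    """Hypothetical subagent run: persist the transcript, return a summary."""
    # Canned transcript standing in for a real model/tool interaction.
    transcript = [
        {"role": "user", "content": task},
        {"role": "assistant", "content": "Searching the repository..."},
        {"role": "tool", "content": "found 3 matches in src/"},
        {"role": "assistant", "content": "Done: 3 call sites need updating."},
    ]
    # The full transcript goes to a sidechain file, not to the parent.
    sidechain_path = Path(sidechain_dir) / f"{agent_id}.jsonl"
    with sidechain_path.open("w", encoding="utf-8") as f:
        for entry in transcript:
            f.write(json.dumps(entry) + "\n")
    # Only the final message travels back as the summary (an assumption).
    return transcript[-1]["content"], sidechain_path


parent_context = ["user: rename the helper function"]
with tempfile.TemporaryDirectory() as tmp:
    summary, path = run_subagent("find call sites", tmp, "subagent-1")
    parent_context.append(f"subagent: {summary}")
    sidechain_lines = path.read_text(encoding="utf-8").splitlines()

print(parent_context)
print(len(sidechain_lines))
```

The parent's context grows by one line while the sidechain holds all four entries. The subagent still consumed tokens to produce them, which is why this pattern keeps context clean without making agent teams cheap.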

5. Session-Scoped Trust Model

The resume function deliberately does not restore session-scoped permissions. Trust must be re-established at the start of each new session. The analysis posits that this friction is intentional—a security feature, not an oversight.
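The behavior described here can be illustrated with a session object whose saved form simply omits approvals. The `Session` class, its fields, and the permission label are hypothetical names for this sketch, not Claude Code's data model.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    history: list = field(default_factory=list)
    granted: set = field(default_factory=set)  # session-scoped approvals

    def save(self):
        # Only the conversation is persisted; approvals are left out on purpose.
        return {"history": list(self.history)}

    @classmethod
    def resume(cls, saved):
        # A resumed session starts with an empty approval set.
        return cls(history=list(saved["history"]))


first = Session()
first.history.append("user: run the tests")
first.granted.add("run:npm test")  # hypothetical permission label

resumed = Session.resume(first.save())
print(resumed.history)
print(resumed.granted)
```

Because the approvals never enter the saved state, there is no code path by which a resumed session could inherit them, which matches the paper's reading of the friction as a deliberate security property.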

The Underlying Bet: Harness as Differentiator

The paper concludes that Claude Code's architecture embodies a strategic bet: as frontier models like Claude 3.5 Sonnet, GPT-4o, and Gemini Advanced converge on similar raw coding benchmarks (e.g., SWE-Bench, HumanEval), the quality, reliability, and safety of the operational harness will become the key competitive moat, not minor model capability increments.

This shifts the focus from "How smart is the agent?" to "How well does the agent operate in a real, messy, multi-step environment?"

gentic.news Analysis

This reverse-engineering provides concrete evidence for a trend we've been tracking: the professionalization and infrastructuralization of AI agents. For years, the field has been dominated by a focus on model capabilities—bigger contexts, better reasoning. Claude Code's architecture, as revealed here, represents a maturation. The battle is moving up the stack from the model itself to the deterministic software that manages it.

This aligns with our previous coverage of Anthropic's mid-2024 launch of Claude 3.5 Sonnet and its Artifacts feature, which emphasized persistent, interactive workspaces. That product direction is consistent with the harness philosophy detailed in this code. It also contrasts sharply with the approach of OpenAI's o1 model family, which invests heavily in internal search and reasoning processes within the model. We are seeing two distinct paradigms emerge: the self-contained reasoner (OpenAI) versus the orchestrated specialist (Anthropic).

The finding that agent teams cost ~7x the tokens of a standard session is a crucial, hard number for the industry. It quantifies the often-overlooked economic burden of agentic workflows and explains why companies like Microsoft with AutoGen and Google with Vertex AI Agent Builder are intensely focused on optimization and routing layers. This research validates their engineering focus.

Finally, the emphasis on a deterministic harness speaks to the enterprise adoption cycle. Businesses cannot deploy systems where the failure modes are opaque and unpredictable. A harness that provides clear permission gates, recovery logic, and session persistence is not just a technical choice; it's a compliance and operational necessity. This analysis suggests Anthropic is building for that enterprise reality from the ground up.

Frequently Asked Questions

What is Claude Code?

Claude Code is Anthropic's AI system specialized for software development tasks. It is an "agentic" AI, meaning it can plan and execute multi-step coding operations, interact with tools, and manage long-running sessions, going beyond simple chat-based code generation.

What does "98.4% operational harness" mean for developers?

It means that the vast majority of the engineering effort in a production-ready AI agent goes into building the reliable, safe, and efficient scaffolding around the AI model—not the prompting or reasoning logic itself. For developers building agents, this suggests prioritizing infrastructure like state management, error handling, tool routing, and context management over fine-tuning complex reasoning loops.

How is Claude Code's design different from LangGraph?

LangGraph (and frameworks like it) require developers to explicitly define a state machine that controls the agent's flow. Claude Code's design, as described in the analysis, uses a simple core loop but invests heavily in a deterministic harness that manages permissions, context, and tools. The model has more latitude to decide the sequence of actions within the guardrails of the harness, whereas LangGraph more strictly prescribes the possible pathways.

Does this mean AI model quality doesn't matter?

No. A powerful model is still the essential engine. The research argues that as top models from different providers reach similar capability plateaus on key benchmarks, the differentiator for a product shifts to the quality of the operational harness that allows the model to work reliably, safely, and efficiently over long, complex tasks.