The Announcement

According to reports from The Economic Times and Indiatimes, Elon Musk's social media platform X (formerly Twitter) is set to begin integrating its proprietary Grok AI chatbot into its core recommendation algorithm as early as next week. This move represents a significant shift in how the platform's "For You" feed and other recommendation systems will operate, moving beyond traditional collaborative filtering and engagement-based ranking to incorporate the semantic understanding and real-time reasoning capabilities of a large language model (LLM).

While specific technical details of the integration were not disclosed in the source reports, the core implication is clear: Grok, developed by Musk's xAI, will be used to interpret user intent, understand the nuanced context of posts, and potentially generate or refine ranking signals in real-time. This is not merely adding a chatbot feature to the sidebar; it's embedding an LLM into the foundational machinery that decides what hundreds of millions of users see.

Why This Matters for Retail & Luxury

For retail and luxury AI leaders, this development is a high-stakes, public experiment in a critical domain: using generative AI for real-time, personalized ranking at scale. While your use case is product discovery rather than content discovery, the underlying technical challenge is analogous.

From Engagement to Intent: Traditional recommendation engines in e-commerce often rely heavily on historical behavior (clicks, purchases, dwell time). An LLM-powered system, as X is attempting, aims to infer a user's deeper, possibly unstated, intent from their current activity and broader context. For a luxury retailer, this could mean moving from "users who bought this bag also viewed..." to understanding a user browsing a "quiet luxury" lookbook is in a specific mindset, seeking understated elegance, and ranking products accordingly.

Content & Context Understanding: Grok will need to parse the meaning of billions of posts, images, and videos. Similarly, a luxury AI agent must understand product descriptions, campaign narratives, brand heritage, and visual aesthetics. X's push tests an LLM's ability to perform this semantic understanding reliably at the speed of a scrolling feed.

The Agentic Potential: This integration is a step toward what we've covered as agentic systems. Google's recent launch of an Agentic Sizing Protocol for retail AI (March 25-26) points to the industry direction of creating AI that can take multi-step, reasoned actions. X's Grok integration could be seen as an early, monolithic agent for content curation. The lessons learned about reliability, latency, and cost will inform how retail builds more specialized agents for styling, sizing, and customer service.
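To make the "engagement to intent" shift concrete, here is a minimal sketch of blending a historical behavior score with a semantic intent score. Everything in it is an illustrative assumption: the two-dimensional toy embeddings, the product names, the `alpha` weight, and the `rerank` function are hypothetical and do not describe how X or Grok actually ranks content. In a real system the embeddings and the intent vector would come from a language or multimodal model.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rerank(candidates, intent_vec, alpha=0.5):
    """Order candidates by a weighted mix of behavior score and intent similarity.

    alpha=0.0 reproduces a purely engagement-based ranking;
    alpha=1.0 ranks purely on similarity to the inferred intent.
    """
    scored = []
    for c in candidates:
        intent_score = cosine(c["embedding"], intent_vec)
        final = (1 - alpha) * c["behavior_score"] + alpha * intent_score
        scored.append((final, c["id"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [item_id for _, item_id in scored]


# Hypothetical toy data: axis 0 = "understated", axis 1 = "logo-heavy".
CANDIDATES = [
    {"id": "logo_tote", "behavior_score": 0.9, "embedding": [0.0, 1.0]},
    {"id": "plain_cashmere_coat", "behavior_score": 0.4, "embedding": [1.0, 0.0]},
    {"id": "minimal_leather_bag", "behavior_score": 0.5, "embedding": [0.8, 0.6]},
]
QUIET_LUXURY_INTENT = [1.0, 0.0]  # assumed intent inferred from the current session

print(rerank(CANDIDATES, QUIET_LUXURY_INTENT, alpha=0.0))
# ['logo_tote', 'minimal_leather_bag', 'plain_cashmere_coat']
print(rerank(CANDIDATES, QUIET_LUXURY_INTENT, alpha=0.5))
# ['plain_cashmere_coat', 'minimal_leather_bag', 'logo_tote']
```

With `alpha=0.0` the historically popular logo tote wins; once the inferred "quiet luxury" intent carries weight, the understated pieces move to the top.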

Business Impact & Implementation Complexity

The expected business impact for X is higher user engagement and satisfaction through more relevant, interesting feeds. For retail, the parallel ambition is higher conversion rates and average order value through more accurate product discovery.

However, the implementation complexity is extreme and serves as a cautionary tale:

- Latency: LLM inference is computationally expensive, yet ranking must happen in milliseconds. X will either need to heavily optimize Grok (potentially using techniques like the Google TurboQuant KV cache compression we covered on March 25) or use it as a secondary, offline signal generator.

- Cost: Running an LLM on every ranking decision for hundreds of millions of daily active users is astronomically costly compared to traditional models. The ROI must be proven.

- Hallucination & Safety: An LLM misunderstanding context could promote harmful or irrelevant content. In retail, a misunderstanding could misattribute brand values or suggest wildly inappropriate products, damaging brand equity.

- Evaluation: Measuring the success of an LLM-driven ranker is harder than A/B testing click-through rates. It requires nuanced metrics for user satisfaction and long-term value.
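One way to picture the "secondary, offline signal generator" option from the latency point is the sketch below. It assumes a batch job scores items ahead of time and the request path only does a dictionary lookup. `stand_in_llm_score` is a placeholder for an expensive model call, not a real Grok or xAI API, and the `weight` value, item texts, and scores are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    item_id: str
    fast_score: float  # score from the existing low-latency ranker


def stand_in_llm_score(text: str) -> float:
    """Placeholder for an expensive LLM call; NOT a real Grok or xAI API."""
    return 1.0 if "heritage" in text.lower() else 0.0


def precompute_signals(item_texts, score_fn):
    """Offline batch job: score every item once and return a lookup table."""
    return {item_id: score_fn(text) for item_id, text in item_texts.items()}


def blend_scores(candidates, signal_cache, weight=0.3, default_signal=0.0):
    """Request-time ranking: a cheap cache lookup, with no model call in the hot path.

    Items missing from the cache fall back to default_signal, so a cold
    cache degrades to the traditional ranking instead of failing.
    """
    ranked = []
    for c in candidates:
        signal = signal_cache.get(c.item_id, default_signal)
        ranked.append((c.fast_score + weight * signal, c.item_id))
    ranked.sort(key=lambda pair: pair[0], reverse=True)
    return [item_id for _, item_id in ranked]


# Hypothetical catalogue snippets and fast-ranker scores.
ITEM_TEXTS = {
    "a": "Heritage atelier craftsmanship story",
    "b": "Generic promotional blast",
}
SIGNALS = precompute_signals(ITEM_TEXTS, stand_in_llm_score)
CANDIDATES_FAST = [Candidate("a", 0.50), Candidate("b", 0.60), Candidate("c", 0.55)]

print(blend_scores(CANDIDATES_FAST, SIGNALS, weight=0.0))  # ['b', 'c', 'a']
print(blend_scores(CANDIDATES_FAST, SIGNALS, weight=0.3))  # ['a', 'b', 'c']
```

The design trade-off is freshness for speed and cost: the model runs once per item rather than once per ranking decision, but its signal cannot react to what the user did seconds ago.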

Governance & Risk Assessment

For a luxury brand considering a similar architectural shift, the risks are magnified by the need for brand safety and consistency.

- Brand Voice Dilution: An LLM must be meticulously fine-tuned to understand and reflect a brand's unique heritage and aesthetic. Off-the-shelf models lack this nuance.

- Bias Amplification: LLMs trained on broad internet data can perpetuate societal biases. For luxury, this could manifest in skewed product recommendations based on gender, age, or ethnicity, leading to reputational damage.

- Data Privacy: Using an LLM to reason about user intent requires processing potentially sensitive browsing data. Governance frameworks must be airtight, especially under regulations like GDPR.

- Maturity Level: X's integration is a bold, early-stage production experiment. The retail and luxury sector should observe its public rollout for lessons on stability and performance before committing to a core-system overhaul. Pilot projects in lower-stakes areas (e.g., email campaign personalization, search query understanding) are a more prudent first step.
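As an illustration of how the bias risk above might be monitored during a pilot, the sketch below compares how often a product category is shown to different customer segments. The segment labels, category names, impression log, and the idea of using a simple rate gap as the alert metric are all assumptions for illustration; a production audit would need statistically sound sample sizes and legal review of which attributes may be processed.

```python
from collections import defaultdict


def exposure_rates(impressions, category):
    """Share of each segment's impressions that showed the given category.

    impressions: iterable of (segment, shown_category) pairs.
    """
    shown = defaultdict(int)
    total = defaultdict(int)
    for segment, shown_category in impressions:
        total[segment] += 1
        if shown_category == category:
            shown[segment] += 1
    return {segment: shown[segment] / total[segment] for segment in total}


def max_exposure_gap(rates):
    """Largest difference in exposure rate between any two segments."""
    return max(rates.values()) - min(rates.values())


# Hypothetical impression log: (customer segment, category recommended).
IMPRESSIONS = [
    ("segment_a", "fine_jewelry"), ("segment_a", "fine_jewelry"),
    ("segment_a", "leather_goods"), ("segment_a", "fragrance"),
    ("segment_b", "fine_jewelry"), ("segment_b", "leather_goods"),
    ("segment_b", "fragrance"), ("segment_b", "fragrance"),
]

RATES = exposure_rates(IMPRESSIONS, "fine_jewelry")
print(RATES)                    # {'segment_a': 0.5, 'segment_b': 0.25}
print(max_exposure_gap(RATES))  # 0.25
```

A gap like this is not proof of bias on its own, since segments may differ in genuine preferences, but tracking it over time gives governance teams a concrete signal to investigate.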

gentic.news Analysis

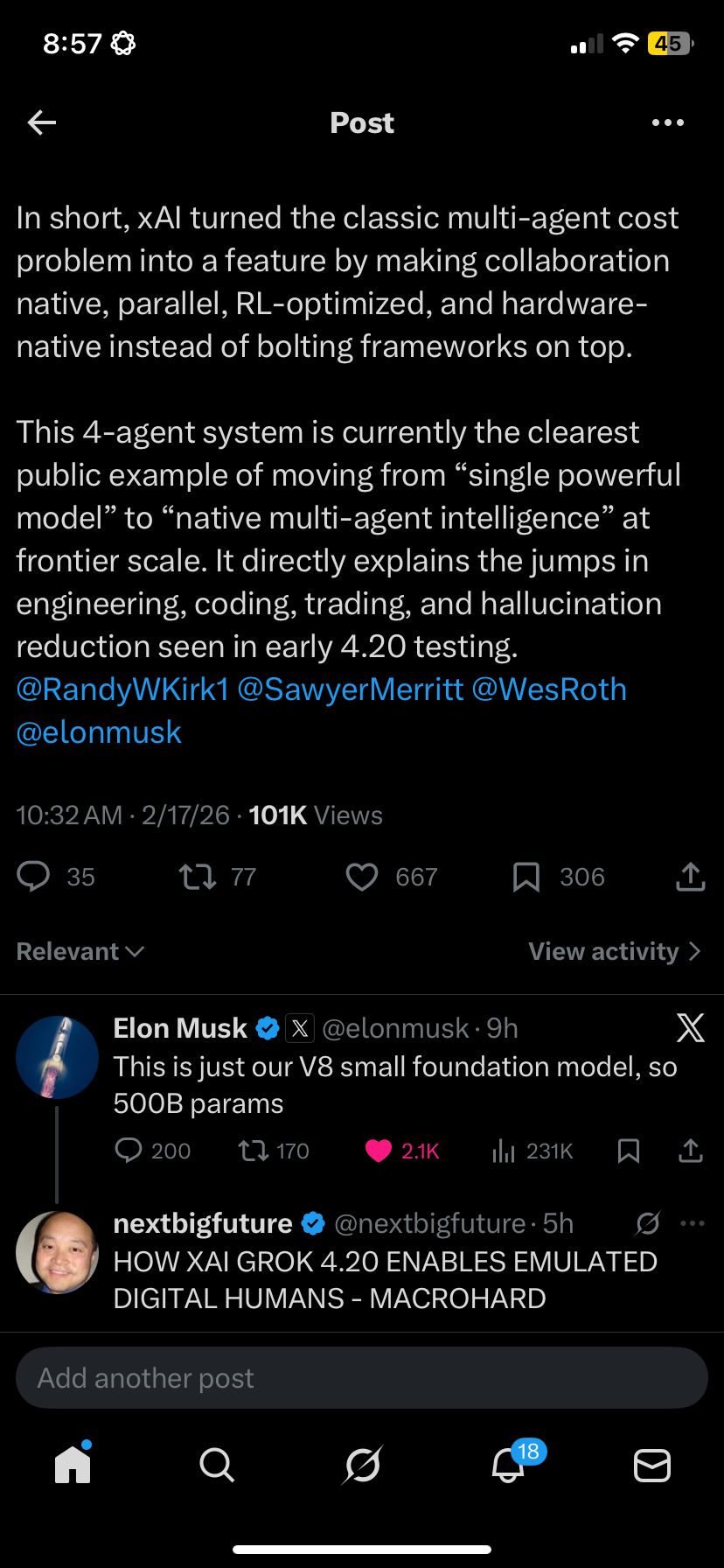

This move by X and xAI must be seen within the fierce competitive landscape of AI infrastructure. Elon Musk's xAI, which developed Grok, is directly competing with Google, OpenAI, and Anthropic (our knowledge graph records these entities as repeated competitors). By deploying Grok at the heart of a major consumer platform, Musk is not just improving a product; he is creating a massive, real-world training and validation loop for his AI model. The data generated from this integration will be invaluable for refining Grok's next iterations.

This follows a pattern of platform owners leveraging their distribution to advance their AI ambitions, similar to how Google integrates its Gemini models across Search and Workspace. The timing is also notable, coming in a week where Google has been particularly active, launching retail-specific AI protocols and novel quantization techniques (TurboQuant).

For our audience, the connection to our prior coverage is clear. Last week, we analyzed "DIET: A New Framework for Continually Distilling Streaming Datasets in Recommender Systems" and "MCLMR: A Model-Agnostic Causal Framework for Multi-Behavior Recommendation". These research papers address the very challenges X will now face at scale: efficiently updating recommendation models with streaming data and disentangling causal user intent. X's Grok experiment will be a live stress test of whether a single, large generative model can solve these problems more effectively than specialized, distilled architectures.

The bottom line for retail AI leaders: Watch X's rollout closely. Note the user feedback, the reported technical hurdles, and the performance metrics if they are shared. It is a rare, public testbed for the next generation of recommendation technology. The winning approaches that emerge, whether pure LLM, hybrid systems, or new distillation methods, will define the tools you will be evaluating for your own digital clienteling and discovery engines within the next 18-24 months.