A new benchmark from Handshake AI and McGill University has delivered a sobering reality check for the AI industry. BankerToolBench pits top models against the actual workflows of junior investment bankers — building Excel models, drafting PowerPoint decks, and parsing SEC filings — and the results are emphatic: not a single output from any model was rated ready to send to a client.

The study enlisted around 500 current and former investment bankers from firms including Goldman Sachs, JPMorgan, Evercore, Morgan Stanley, and Lazard. Of those, 172 designed the 100 tasks themselves, logging more than 5,700 hours of work. Each task took a human banker an average of five hours, with some running up to 21 hours.

Key Takeaways

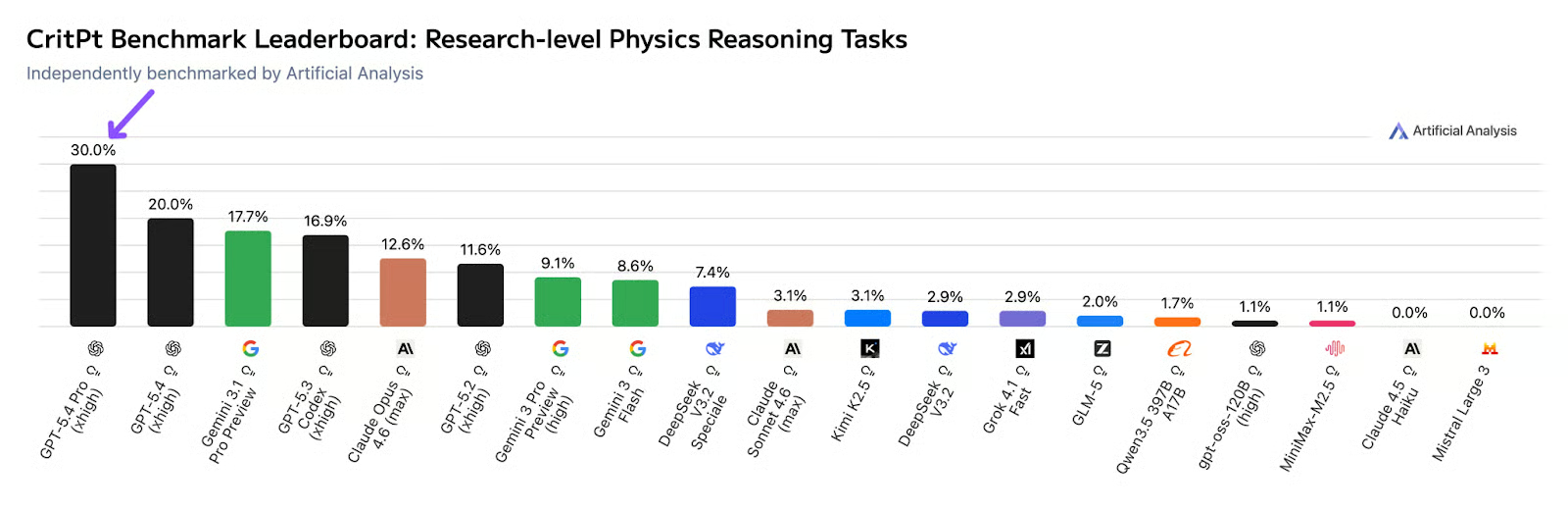

- A new benchmark, BankerToolBench, tested GPT-5.4, Claude Opus 4.6, and others on junior investment banker tasks.

- None of the outputs were deemed client-ready, with GPT-5.4 leading but still failing nearly half the criteria.

What the Benchmark Tests

BankerToolBench grades actual deliverables a junior banker would hand to a supervisor: Excel financial models with working formulas, PowerPoint decks for client meetings, PDF reports, and Word memos. The agents must dig through data rooms, pull from market data platforms like FactSet and Capital IQ, and parse SEC filings.

According to the paper, a single task can trigger up to 539 calls to the language model, with 97 percent tied to tool use or code execution. Each deliverable is checked against a banker-designed rubric averaging 150 individual criteria spanning six areas, among them technical correctness, client readiness, compliance, auditability, and consistency across files.
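To make the rubric idea concrete, here is a minimal sketch of how per-criterion verdicts might roll up into per-area pass rates and a must-pass gate. The `Criterion` class, the `critical` flag, and the sample verdicts are all hypothetical illustrations; the article does not describe the benchmark's actual data format or weighting scheme.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Criterion:
    area: str               # rubric area, e.g. "technical correctness"
    passed: bool            # grader's pass/fail verdict
    critical: bool = False  # hypothetical flag for must-pass criteria


def pass_rate_by_area(rubric):
    """Return the fraction of criteria passed within each rubric area."""
    totals = defaultdict(lambda: [0, 0])  # area -> [passed, total]
    for c in rubric:
        totals[c.area][0] += c.passed
        totals[c.area][1] += 1
    return {area: p / n for area, (p, n) in totals.items()}


def clears_all_critical(rubric):
    """True only if every criterion flagged as critical passed."""
    return all(c.passed for c in rubric if c.critical)


# Made-up verdicts for one deliverable (real rubrics average 150 criteria).
rubric = [
    Criterion("technical correctness", True, critical=True),
    Criterion("technical correctness", False, critical=True),
    Criterion("client readiness", True),
    Criterion("compliance", True, critical=True),
    Criterion("auditability", False),
    Criterion("auditability", True),
]

overall = sum(c.passed for c in rubric) / len(rubric)
print(f"overall pass rate: {overall:.0%}")
print(pass_rate_by_area(rubric))
print("clears every critical criterion:", clears_all_critical(rubric))
```

In this toy example the deliverable passes four of six criteria, yet a single failed critical check is enough to fail the stricter gate, which is why an overall pass rate and an "all critical criteria" rate can diverge sharply.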

Grading is handled by an AI verifier the authors built called Gandalf, based on Gemini 3 Flash Preview. It agrees with human reviewers 88.2 percent of the time, slightly above the 84.6 percent agreement rate between two human reviewers.
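For readers unfamiliar with agreement rates, the sketch below shows one simple way such a figure could be computed: the share of criteria on which two graders give the same pass/fail verdict. This is an assumption for illustration only; the article does not say which agreement statistic the authors used, and the verdict lists are invented.

```python
def agreement_rate(labels_a, labels_b):
    """Fraction of criteria on which two graders give the same verdict."""
    if len(labels_a) != len(labels_b):
        raise ValueError("graders must score the same criteria")
    if not labels_a:
        raise ValueError("no verdicts to compare")
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return matches / len(labels_a)


# Hypothetical verdicts on eight rubric criteria (True = pass).
human_1 = [True, False, True, True, False, True, False, True]
human_2 = [True, False, False, True, False, True, True, True]
verifier = [True, False, True, True, False, True, True, True]

print(f"verifier vs human 1: {agreement_rate(verifier, human_1):.1%}")
print(f"human 1 vs human 2: {agreement_rate(human_1, human_2):.1%}")
```

Raw percent agreement does not correct for matches that would occur by chance, so a chance-corrected statistic such as Cohen's kappa is often reported alongside it.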

Key Results

| Model | Accepted as starting point | Cleared all critical criteria | Client-ready |
| --- | --- | --- | --- |
| GPT-5.4 | 16% | 2% | 0% |
| Claude Opus 4.6 | ~15% | ~1% | 0% |
| Gemini 2.5 Pro | ~14% | 0% | 0% |
| Other models | ~10-13% | ~0-1% | 0% |

GPT-5.4 came out on top but still failed nearly half the criteria. Just 16 percent of its outputs cleared the bar where bankers would accept them as a useful starting point. Require three consistent runs, and that figure drops to 13 percent. Not a single output from any model was deemed ready to submit as is. With GPT-5.4, just 2 percent of tasks cleared every critically weighted criterion. With Gemini 2.5 Pro, that figure was zero.
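The drop from 16 to 13 percent reflects a stricter metric: a task only counts if every repeated run clears the bar. The sketch below contrasts the two measures on invented data. The function names and the `runs` dictionary are hypothetical, and the benchmark's exact aggregation may differ.

```python
def single_run_rate(runs_by_task):
    """Share of all individual runs that cleared the bar."""
    verdicts = [v for runs in runs_by_task.values() for v in runs]
    return sum(verdicts) / len(verdicts)


def consistent_rate(runs_by_task):
    """Share of tasks where every run cleared the bar."""
    passed_all = sum(all(runs) for runs in runs_by_task.values())
    return passed_all / len(runs_by_task)


# Made-up verdicts: three runs per task, True = accepted as a starting point.
runs = {
    "task_a": [True, True, True],
    "task_b": [True, False, True],
    "task_c": [False, False, False],
    "task_d": [False, True, False],
}

print(f"single-run rate: {single_run_rate(runs):.0%}")
print(f"consistent across all runs: {consistent_rate(runs):.0%}")
```

Here half of the individual runs pass, but only one task in four passes on all three attempts. The gap between the two numbers is a rough measure of how much a model's success depends on luck in a given run.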

Polished Surface, Broken Foundations

Claude Opus 4.6's outputs look polished at first glance, according to the researchers. But the Excel models reveal a fundamental flaw: the formulas are often incorrect or produce wrong numbers. The output looks professional but contains errors that would be caught by a junior banker's supervisor.

This pattern — high-quality text generation paired with unreliable numerical computation — has been observed in previous AI benchmarks. It suggests that current models excel at generating plausible-looking outputs but lack the rigorous verification and domain-specific reasoning required for financial work.

Why It Matters

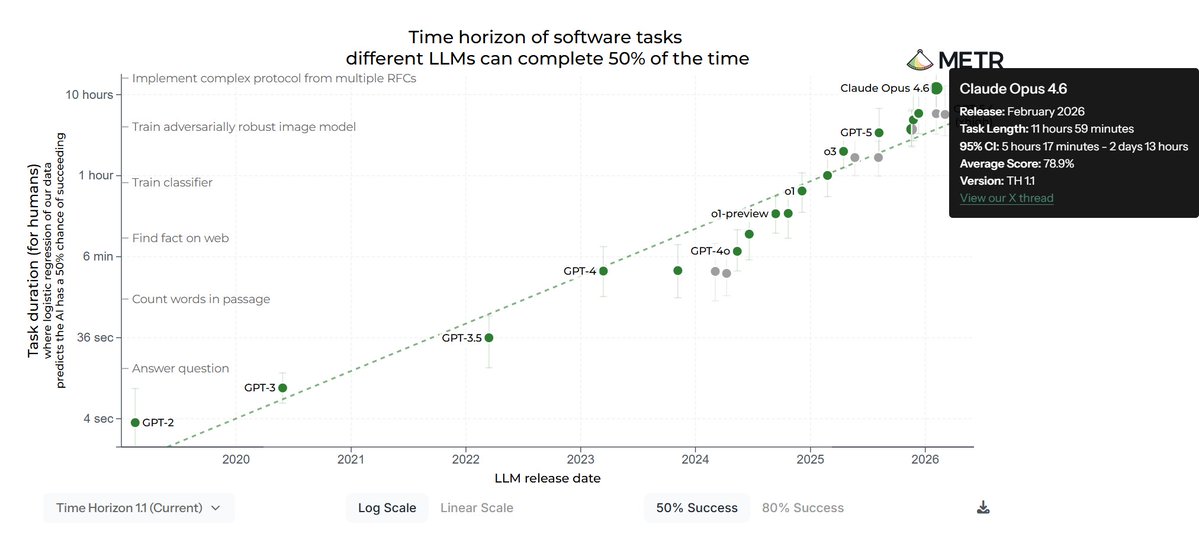

The timing is notable. Just last week, we covered how GPT-5.5 tops benchmarks but still hallucinates. This study provides a more realistic evaluation: benchmarks like MMLU and SWE-Bench measure narrow skills, but BankerToolBench tests the end-to-end workflow that defines a job.

The results align with a broader trend we've tracked. On April 23, Anthropic published a post-mortem on Claude Code quality issues, acknowledging that agentic coding tools produce outputs that look good but require significant human review. Similarly, GPT-5.5's benchmark scores mask persistent hallucination problems.

What This Means in Practice

For investment banks, the implication is clear: AI can accelerate junior bankers' work but cannot replace human oversight. More than half of the bankers said they'd use the AI output as a starting point, but none trusted it for client delivery. This creates a workflow where AI handles first drafts and humans do the verification — a pattern that may define professional services for the next several years.

The study also highlights a gap in current AI evaluation. Most benchmarks test isolated skills, not multi-step workflows with real-world deliverables. BankerToolBench may set a new standard for domain-specific evaluation.

gentic.news Analysis

This study arrives at a critical inflection point for AI in professional services. The knowledge graph shows Claude Opus 4.6 trending with 102 articles this week and Claude Code appearing in 41. The benchmark's results cut against the narrative that frontier models are rapidly approaching human-level performance in knowledge work.

The 88.2% agreement rate between Gandalf and human reviewers is itself a notable finding. Because it slightly exceeds the 84.6% agreement between two human reviewers, it suggests that an AI verifier can be at least as consistent with a human grader as a second human would be, a reversal of the usual dynamic. But the core result, 0% client-ready, underscores that we're still in the "co-pilot" phase, not the "autopilot" phase, for high-stakes financial work.

This follows our coverage of Anthropic's Claude Code post-mortem on April 23, which acknowledged similar quality issues in coding outputs. The pattern is consistent across domains: AI produces outputs that look good to non-experts but fail under expert scrutiny. The gap between "looks right" and "is right" remains the fundamental challenge.

Frequently Asked Questions

What is BankerToolBench?

BankerToolBench is an open-source benchmark developed by Handshake AI and McGill University that tests AI agents on the actual workflows of junior investment bankers. It evaluates Excel models, PowerPoint decks, PDF reports, and Word memos against rubrics averaging 150 criteria each.

Which models were tested in the benchmark?

The study tested GPT-5.2, GPT-5.4, Claude Opus 4.5 and 4.6, Gemini 2.5 Pro, Gemini 3.1 Pro Preview, Grok 4, Qwen-3.5-397B, and GLM-5. GPT-5.4 achieved the highest scores but still failed to produce any client-ready outputs.

Why did AI models fail the banking benchmark?

The models produced outputs that looked polished but contained fundamental errors, particularly in Excel formulas and numerical computations. The results suggest current AI lacks the rigorous verification and domain-specific reasoning required for client-ready financial work.

Can AI still be useful for investment bankers?

Yes. More than half of the bankers said they would use the AI outputs as a starting point. The study suggests AI can accelerate first drafts and initial analysis, but human verification remains essential for client delivery.