Key Takeaways

- OpenAI launched GPT-5.5, an agentic model that tops Terminal-Bench 2.0 at 82.7% and surpasses Claude Opus 4.7 and Gemini 3.1 Pro on coding and math.

- However, independent testing shows higher hallucination rates than competing models, and effective API costs about 20% above GPT-5.4 even though list token prices have doubled.

OpenAI’s GPT-5.5: New Benchmark Leader with a Hallucination Hangover

OpenAI released GPT-5.5 on April 23, 2026, positioning it as a “new class of intelligence for real work and powering agents.” The model is built to handle complex, multi-step tasks autonomously: writing code, running web searches, analyzing data, and switching between tools without human hand-holding. But the progress comes with two caveats. API token prices have doubled on paper, which independent testing translates into effective costs about 20% higher than GPT-5.4, and the same testing found that the model hallucinates more often than its rivals.

What’s New

GPT-5.5 is not a single monolithic model. OpenAI released two variants:

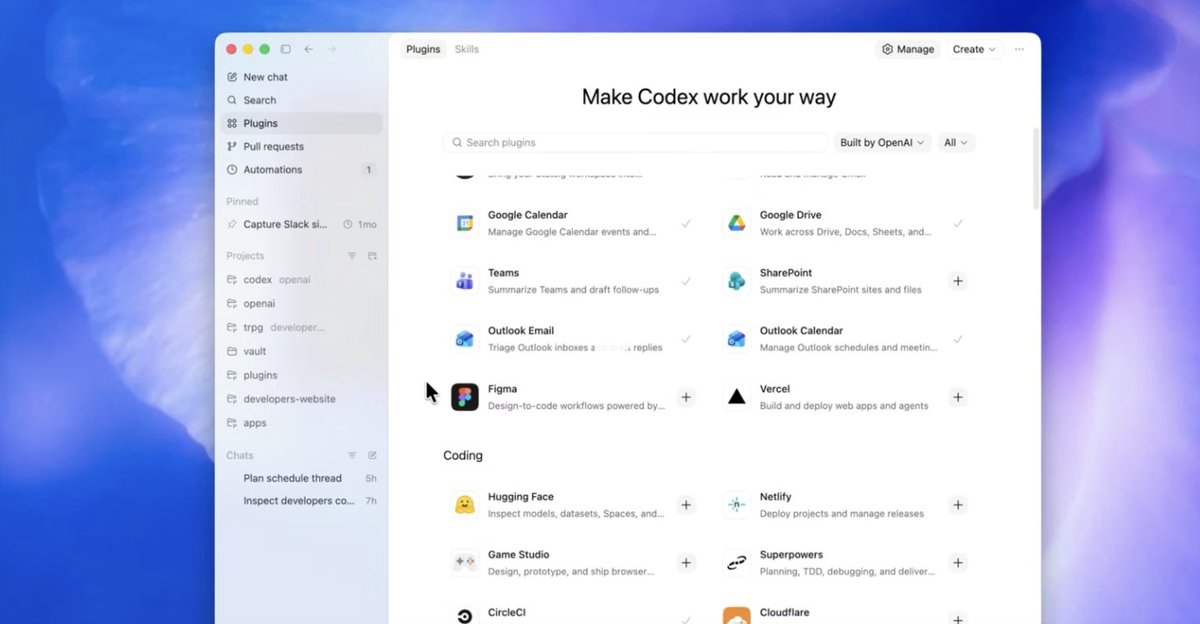

- GPT-5.5: The standard agentic model, available now to ChatGPT Plus, Pro, Business, and Enterprise users, and in Codex 5.3. API access is offered through the Responses and Chat Completions endpoints, with a 1 million token context window.

- GPT-5.5 Pro: A variant that OpenAI says is “designed for higher-accuracy work.” It is available through the same API endpoints, with the same context window.

Both models are now generally available to paying customers. OpenAI claims GPT-5.5 excels in four key areas: agentic coding, computer use, knowledge work, and early scientific research — domains that require sustained reasoning and multi-step action.
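For readers who want to try the API route, the following is a minimal sketch of a Responses endpoint call using the official `openai` Python package. The model identifier `"gpt-5.5"` is an assumption based on the product name, not a confirmed API string, and the helper names `build_request` and `ask` are purely illustrative.

```python
import os


def build_request(prompt: str, model: str = "gpt-5.5") -> dict:
    """Assemble keyword arguments for a Responses API call.

    Assumption: "gpt-5.5" is the API model id; check OpenAI's model list.
    """
    return {"model": model, "input": prompt}


def ask(prompt: str) -> str:
    """Send the prompt to the Responses endpoint and return the text output."""
    # Imported lazily so the rest of this sketch runs without the package.
    from openai import OpenAI  # requires `pip install openai`

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.responses.create(**build_request(prompt))
    return response.output_text


# Build (but do not send) a request, so this runs without network access.
request = build_request("Summarize the failing tests in this repository.")
print(request)
print("API key configured:", bool(os.environ.get("OPENAI_API_KEY")))
```

Calling `ask("...")` with a valid key would perform the actual request; nothing in this sketch sends one on its own.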

Key Benchmark Results

| Benchmark | GPT-5.5 | GPT-5.4 | Claude Opus 4.7 | Gemini 3.1 Pro |
| --- | --- | --- | --- | --- |
| Terminal-Bench 2.0 (coding) | 82.7% | 75.1% | 69.4% | 68.5% |
| Hard Math (OpenAI internal) | Significant lead (no exact number published) | Not reported | Lower | Lower |

On Terminal-Bench 2.0, a coding benchmark designed for agentic workflows, GPT-5.5 scores 82.7%, which is 7.6 points above its predecessor GPT-5.4 (75.1%). Anthropic’s Claude Opus 4.7 and Google’s Gemini 3.1 Pro lag behind at 69.4% and 68.5%, respectively. The gap widens on harder math problems, though OpenAI has not published exact numbers for those evaluations.
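The percentage-point gaps can be checked directly from the reported scores. The short sketch below uses only the Terminal-Bench 2.0 figures quoted in this article; the function name `lead_over` is illustrative.

```python
# Terminal-Bench 2.0 scores as reported in this article (percent).
scores = {
    "GPT-5.5": 82.7,
    "GPT-5.4": 75.1,
    "Claude Opus 4.7": 69.4,
    "Gemini 3.1 Pro": 68.5,
}


def lead_over(leader: str, other: str) -> float:
    """Percentage-point gap between two models, rounded to one decimal."""
    return round(scores[leader] - scores[other], 1)


# Gap between GPT-5.5 and every other model in the table.
gaps = {name: lead_over("GPT-5.5", name) for name in scores if name != "GPT-5.5"}
for name, gap in gaps.items():
    print(f"GPT-5.5 leads {name} by {gap} points")
```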

Independent testing lab Artificial Analysis confirmed that GPT-5.5 takes the overall top spot by a slim margin over Claude and Gemini. However, the lab flagged a notable weakness: GPT-5.5 hallucinates more frequently than its competitors. Effective API costs run about 20% higher than GPT-5.4, according to Artificial Analysis — the doubled token prices on paper are partially offset by lower token usage per task, but the net increase is still significant.

How It Works

GPT-5.5 is an agentic model by design. Unlike previous GPT models that primarily generate text in response to prompts, GPT-5.5 is built to:

- Understand complex goals: Parse multi-step instructions and break them into sub-tasks.

- Use tools autonomously: Switch between coding environments, web browsers, data analysis tools, and document editors without explicit user commands.

- Self-check its output: Evaluate intermediate results and correct errors before proceeding.

- Recover from ambiguity: When encountering unclear instructions or missing information, the model can ask clarifying questions or infer missing context.

OpenAI describes the model as switching between tools “on its own until a task is finished.” This is a significant architectural shift from earlier models that required explicit tool calls at each step.
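The behaviors listed above (decompose the goal, pick a tool, check the result, retry or move on) follow the general shape of an agent loop. The toy sketch below illustrates that shape only. It is not OpenAI's implementation: the planner, the self-check, and both tools are hypothetical stand-ins written as plain Python functions.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical tools: plain functions standing in for a code runner and a search tool.
def run_code(arg: str) -> str:
    return f"ran: {arg}"


def web_search(arg: str) -> str:
    return f"results for: {arg}"


TOOLS: dict[str, Callable[[str], str]] = {"code": run_code, "search": web_search}


@dataclass
class Step:
    tool: str
    arg: str


@dataclass
class AgentRun:
    goal: str
    log: list[str] = field(default_factory=list)


def plan(goal: str) -> list[Step]:
    """Stand-in planner: a real model would decompose the goal itself."""
    return [Step("search", goal), Step("code", f"analyze {goal}")]


def check(output: str) -> bool:
    """Stand-in self-check: accept any non-empty result."""
    return bool(output.strip())


def run_agent(goal: str, max_retries: int = 1) -> AgentRun:
    """Run each planned step, retrying a step when its self-check fails."""
    run = AgentRun(goal)
    for step in plan(goal):
        for _attempt in range(max_retries + 1):
            output = TOOLS[step.tool](step.arg)
            if check(output):
                run.log.append(output)
                break
        else:
            # Every attempt failed the check; record it and continue.
            run.log.append(f"gave up on {step.tool}")
    return run


result = run_agent("quarterly sales data")
print(result.log)
```

In a real system the planner and the check would themselves be model calls, and the retry budget would matter far more than it does here.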

The model runs on NVIDIA GB200 NVL72 rack-scale systems, as confirmed by an NVIDIA blog post. This hardware partnership is notable — NVIDIA has invested in OpenAI and is a key infrastructure provider for frontier AI training and inference.

How It Compares

GPT-5.5 leads on coding and math benchmarks, but it does not dominate across all categories. Key comparisons:

- Coding: GPT-5.5 beats Claude Opus 4.7 by 13.3 points on Terminal-Bench 2.0. This is a meaningful gap for developer tools like Codex.

- Math: OpenAI claims a “significant lead” on hard math problems, though exact numbers are not public.

- Hallucination: This is GPT-5.5’s Achilles’ heel. Artificial Analysis found that the model hallucinates more frequently than Claude Opus 4.7 and Gemini 3.1 Pro. For knowledge work and scientific research — two of OpenAI’s target use cases — this is a serious limitation.

- Cost: The API price has doubled on paper. However, because GPT-5.5 can complete tasks with fewer tokens (thanks to its agentic efficiency), effective costs are only about 20% higher than GPT-5.4. Still, for high-volume users, this adds up.
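The cost claim implies a rough figure for token savings. Assuming effective cost per task is simply price per token times tokens per task, and that input and output prices both doubled uniformly (a simplification, not something Artificial Analysis states), a 2x price and a 1.2x effective cost imply roughly 40% fewer tokens per task. The monthly spend figure below is purely hypothetical.

```python
def implied_token_ratio(price_ratio: float, effective_cost_ratio: float) -> float:
    """Tokens per task relative to the old model, given cost = price * tokens."""
    return effective_cost_ratio / price_ratio


def projected_spend(old_spend: float, effective_cost_ratio: float) -> float:
    """Scale an existing bill by the effective cost ratio for the same workload."""
    return old_spend * effective_cost_ratio


# Figures from the article: list price doubled, effective cost up about 20%.
ratio = implied_token_ratio(price_ratio=2.0, effective_cost_ratio=1.2)
print(f"Tokens per task vs. GPT-5.4: {ratio:.2f}x")
print(f"Implied token reduction: {1 - ratio:.0%}")

# Hypothetical workload costing $10,000 per month on GPT-5.4.
new_spend = projected_spend(10_000, 1.2)
print(f"Projected monthly spend: ${new_spend:,.0f}")
```

For a team with that hypothetical bill, the same workload would cost about $2,000 more per month, which is the sense in which the increase "adds up" for high-volume users.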

What to Watch

- Hallucination rates in production: OpenAI’s claims about “higher-accuracy work” for GPT-5.5 Pro need independent verification. If hallucination rates remain high, the model may not be suitable for regulated industries or customer-facing applications where factual accuracy is critical.

- Agent reliability in the wild: Benchmarks like Terminal-Bench 2.0 measure controlled scenarios. Real-world agentic workflows — especially those involving external APIs, dynamic web content, and multi-step reasoning — may reveal additional failure modes.

- Competitive response: Anthropic and Google will likely respond with updates to Claude Opus and Gemini 3.1 Pro. Given that GPT-5.5’s lead is slim on some metrics, the competitive landscape could shift quickly.

- Cost trajectory: Doubled API prices signal that OpenAI is betting on value-per-task rather than price-per-token. If users see real productivity gains, the higher cost may be acceptable. If not, competitors with lower pricing could gain share.

Frequently Asked Questions

What is GPT-5.5?

GPT-5.5 is OpenAI’s latest frontier model, designed for agentic workflows. It can autonomously write code, browse the web, analyze data, and switch between tools to complete complex tasks. It’s available in two variants: GPT-5.5 (standard) and GPT-5.5 Pro (higher accuracy).

How much does GPT-5.5 cost?

The API token price has doubled compared to GPT-5.4. However, independent testing by Artificial Analysis shows effective costs are about 20% higher than GPT-5.4, because the model uses fewer tokens per task. GPT-5.5 is included in ChatGPT Plus, Pro, Business, and Enterprise subscriptions.

Is GPT-5.5 better than Claude Opus 4.7?

On coding and math benchmarks, yes — GPT-5.5 scores 82.7% on Terminal-Bench 2.0 versus 69.4% for Claude Opus 4.7. However, GPT-5.5 hallucinates more frequently than Claude, making it less reliable for knowledge work and factual tasks.

When is GPT-5.5 available?

GPT-5.5 and GPT-5.5 Pro are available now via the OpenAI API (Responses and Chat Completions endpoints) and in ChatGPT for Plus, Pro, Business, and Enterprise subscribers. Codex users also have access.

gentic.news Analysis

GPT-5.5 arrives at a pivotal moment in the AI agent wars. Our coverage has tracked the rapid maturation of agentic systems — just two days ago, we reported on Cua Driver being open-sourced for macOS agent control, and earlier this month, industry leaders predicted 2026 as the breakthrough year for AI agents. OpenAI’s launch confirms that prediction, but the hallucination weakness is a red flag that echoes our earlier reporting on the reliability threshold for agents.

OpenAI’s relationship with NVIDIA is worth watching. The model runs on NVIDIA GB200 NVL72 systems, deepening the hardware-software integration between the two companies. This follows NVIDIA’s investments in OpenAI and the broader trend of AI labs partnering closely with infrastructure providers — a dynamic we’ve seen play out with Microsoft and OpenAI as well.

The pricing strategy is aggressive. Doubling API prices while claiming lower token usage per task is a bet that the market will pay more for autonomous agents that save developer time. But with DeepSeek V4-Pro offering open weights and 10x cost advantages (as we covered yesterday), the pressure on proprietary pricing is mounting. If GPT-5.5’s hallucination problem proves persistent, cost-conscious enterprises may look to open-source alternatives or Claude.

Finally, the internal framing is notable. GPT-5.5 was described internally as a “GPT-3.5 moment,” a leap that redefines what’s possible. Whether the market agrees will depend on real-world reliability, not just benchmark scores. We’ll be watching the independent evaluations closely.