Key Takeaways

- A developer has ported DeepSeek-V4 to Apple's MLX framework, allowing the large language model to run on Apple Silicon Macs.

- Early results show functional inference with room for optimization.

What Happened

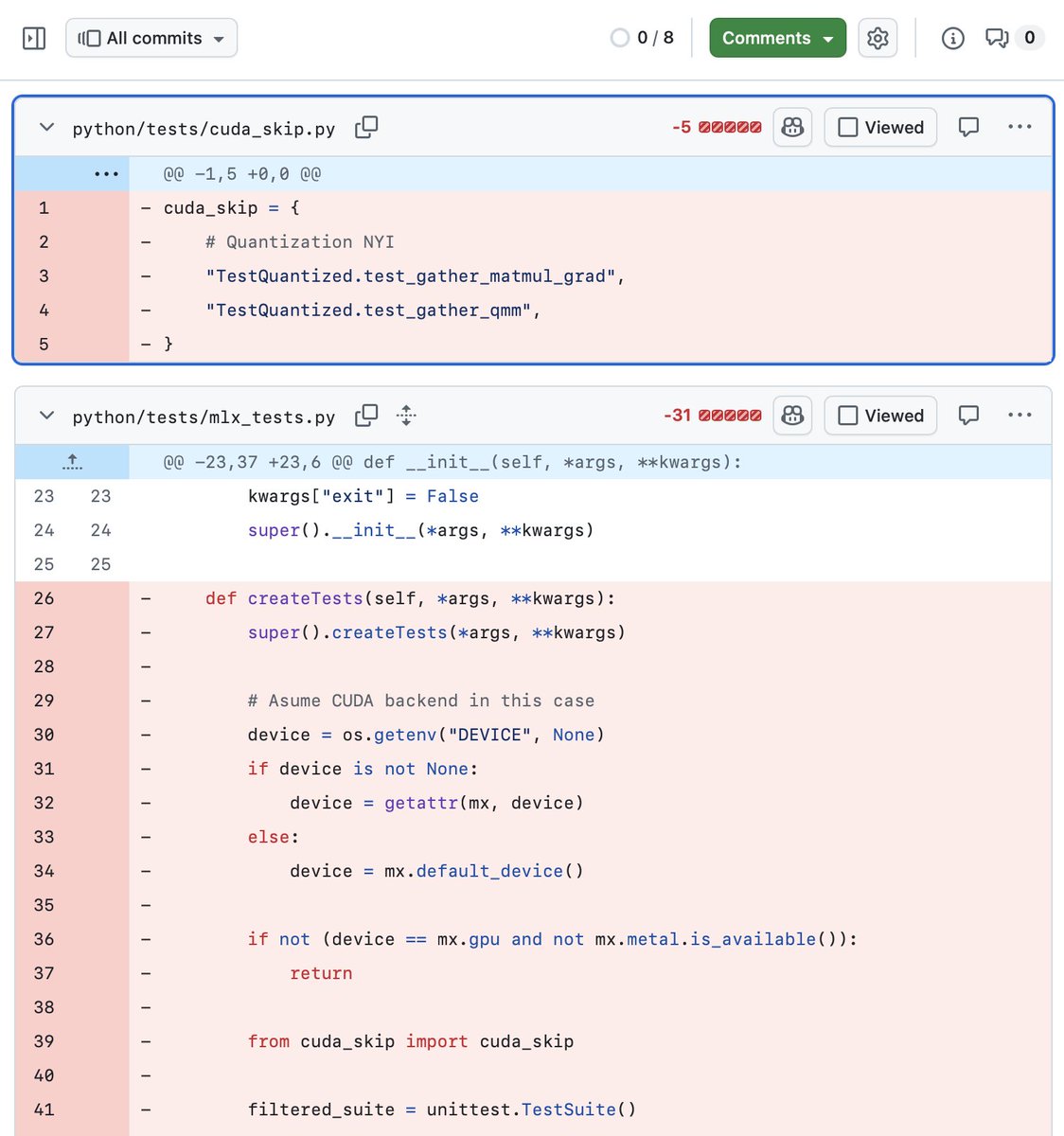

Developer @Prince_Canuma has ported DeepSeek-V4 to Apple's MLX framework, enabling the large language model to run locally on Apple Silicon Macs. According to the developer's tweet, the port is functional but still needs optimization.

Context

DeepSeek-V4 is the latest iteration of DeepSeek's large language model series, known for strong performance on reasoning and coding benchmarks. MLX is Apple's machine learning framework for Apple Silicon, designed to leverage the unified memory architecture of M-series chips for efficient model inference.

This port follows a pattern of community efforts to run large models on consumer hardware. DeepSeek-V4 is a large model, so whether it runs on a given Mac depends on the model size, any quantization applied, and how much unified memory the machine has. MLX's memory design helps, but performance will vary with the hardware configuration.

The developer has not yet published detailed benchmarks or optimization results, but the initial port demonstrates feasibility for local inference of DeepSeek-V4 on Apple Silicon.

gentic.news Analysis

This port is part of a broader trend of making frontier models accessible on consumer hardware. The MLX ecosystem has seen rapid growth, with ports of models like Llama, Mistral, and now DeepSeek-V4. This democratizes access to large models for developers who want to run inference locally without cloud dependencies.

That the port runs at all suggests DeepSeek-V4's architecture maps onto MLX's design principles. That it is not yet optimized means inference speed and memory usage likely trail tuned implementations. For practitioners, local experimentation is possible now, but production use may require further optimization or quantization.

This development also highlights the growing importance of hardware-specific frameworks. While DeepSeek-V4 is typically run on server-grade GPUs, MLX ports open up use cases such as offline coding assistants, privacy-sensitive applications, and educational use on Mac hardware.

Frequently Asked Questions

What is MLX?

MLX is Apple's machine learning framework for Apple Silicon, optimized for the unified memory architecture of M-series chips. It allows efficient model inference and training on Mac hardware.

Can I run DeepSeek-V4 on my Mac?

Yes, with this port, provided your Mac has enough memory. Performance depends on your Mac's unified memory (RAM) and chip generation: larger models need more memory, and inference speed will vary.
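Whether a model fits is mostly arithmetic: the weights alone need roughly parameter count × bits per weight ÷ 8 bytes. The sketch below assumes a hypothetical 7-billion-parameter model (not DeepSeek-V4's actual size, which is not stated here) and a hypothetical 25% headroom rule. It ignores the KV cache, activations, and the small overhead that quantized formats typically add, so treat its numbers as a lower bound.

```python
def estimate_weight_memory_gb(num_params: int, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    if num_params <= 0 or bits_per_weight <= 0:
        raise ValueError("num_params and bits_per_weight must be positive")
    total_bytes = num_params * bits_per_weight / 8  # 8 bits per byte
    return total_bytes / 1e9


def fits_in_memory(weight_gb: float, ram_gb: float, headroom: float = 0.25) -> bool:
    """Hypothetical heuristic: the weights must fit in RAM after reserving a
    fraction (`headroom`) for the OS, the KV cache, and activations."""
    return weight_gb <= ram_gb * (1 - headroom)


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model; NOT DeepSeek-V4's actual size.
    params = 7_000_000_000
    for bits in (16, 8, 4):
        gb = estimate_weight_memory_gb(params, bits)
        print(f"{bits:>2}-bit weights: ~{gb:.1f} GB, fits on a 16 GB Mac: {fits_in_memory(gb, 16)}")
```

Halving the bits per weight halves the weight footprint, which is why quantization is often the difference between a model that fits in a Mac's unified memory and one that does not.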

Is this an official DeepSeek release?

No, this is a community port by developer @Prince_Canuma. It is not officially supported by DeepSeek.

How does this compare to running DeepSeek-V4 on cloud GPUs?

Local inference on Mac hardware will generally be slower than inference on cloud GPUs, but it offers privacy, no per-request API costs, and offline availability.