Developer Prince Canuma has successfully ported the TurboQuant quantization method to Apple's MLX framework, reporting a 75% reduction in memory usage with "nearly no hit on performance" for running large language models on Apple Silicon.

Canuma announced the port in a social media post. If the claim holds up, it would make capable AI models more efficient and more accessible on Apple hardware.

What Happened

Prince Canuma, a developer active in the machine learning optimization space, shared that he has implemented TurboQuant, a post-training quantization technique, within Apple's MLX framework. MLX is an array framework for machine learning research on Apple Silicon, developed by Apple's machine learning research team. The key claim is that this implementation cuts memory usage by 75% while keeping model performance (inference accuracy and speed) nearly equivalent to running the same models at full precision.

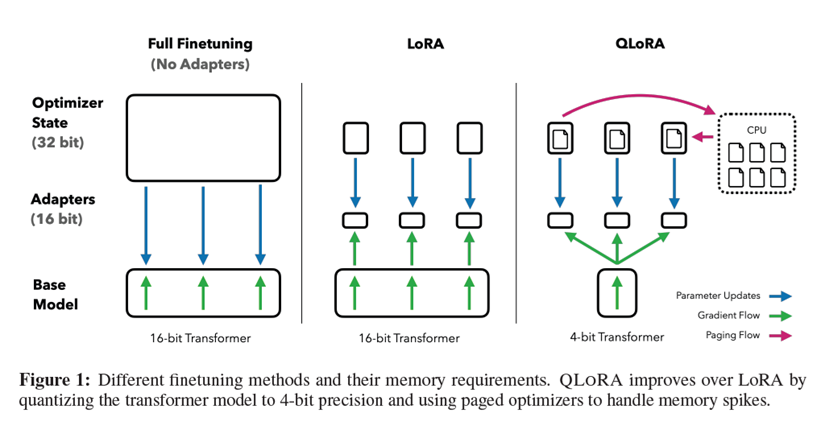

Quantization reduces the numerical precision of a model's weights (e.g., from 16-bit floating point to 4-bit integers). This directly reduces the memory footprint required to load the model, which is a critical bottleneck for deploying large models on consumer devices with limited RAM, like MacBooks and iPhones.
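To make the idea concrete, here is a minimal sketch of plain round-to-nearest affine quantization on one small group of weights. This is the generic textbook scheme, not TurboQuant's algorithm, which the announcement does not describe. The function names and sample weights are hypothetical and chosen only for illustration.

```python
def quantize_group(weights, bits=4):
    """Map a group of floats to integer codes in [0, 2**bits - 1]."""
    levels = (1 << bits) - 1               # 15 distinct steps for 4-bit codes
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels or 1.0      # fall back to 1.0 for a constant group
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize_group(codes, scale, lo):
    """Reconstruct approximate floats from the integer codes."""
    return [c * scale + lo for c in codes]


# Illustrative values only, not taken from any real model.
weights = [-0.42, -0.10, 0.03, 0.27, 0.55, -0.31, 0.12, 0.48]
codes, scale, lo = quantize_group(weights, bits=4)
restored = dequantize_group(codes, scale, lo)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"max abs reconstruction error: {max_error:.4f}")
```

Each weight is stored as a 4-bit code plus a shared scale and offset for the group, and the reconstruction error is bounded by half a quantization step. More sophisticated methods differ mainly in how they choose the codes to keep that error from hurting model output.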

Context: TurboQuant and MLX

TurboQuant is a specific quantization method. While detailed public benchmarks for it are sparse, quantization techniques generally trade some model accuracy for large gains in memory and computational efficiency. Relative to 16-bit weights, a 75% reduction corresponds to roughly 4 bits per parameter; 3-bit weights would save about 81%.
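The relationship between bit width and memory savings is simple arithmetic, sketched below. The second helper assumes a group-wise scheme that stores a 16-bit scale and a 16-bit offset per group of 64 weights; that layout is an assumption for illustration, not something the announcement confirms for this port.

```python
def memory_reduction(baseline_bits, quantized_bits):
    """Fraction of weight memory saved when moving between bit widths."""
    return 1 - quantized_bits / baseline_bits


def effective_bits(code_bits, group_size, scale_bits=16, offset_bits=16):
    """Bits per weight once per-group scale and offset are counted (assumed layout)."""
    return code_bits + (scale_bits + offset_bits) / group_size


for bits in (8, 4, 3):
    print(f"{bits}-bit codes: {memory_reduction(16, bits):.1%} smaller than 16-bit")

with_overhead = effective_bits(4, group_size=64)
print(f"4-bit codes, groups of 64: {with_overhead} bits/weight, "
      f"{memory_reduction(16, with_overhead):.1%} smaller")
```

Under that assumed layout, per-group metadata pushes 4-bit storage slightly above 4 bits per weight, so a measured saving of exactly 75% would depend on how the implementation stores its scales.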

Apple's MLX framework provides a NumPy-like API for machine learning on Apple Silicon, allowing researchers and developers to run models efficiently on the unified memory architecture of M-series chips. Efficient quantization within MLX is a sought-after capability, as it directly enables larger models to run locally.

Canuma's work appears to be an independent integration, porting the TurboQuant methodology into the MLX ecosystem. The post does not specify which models have been tested or provide reproducible benchmark numbers, but the claim aligns with the ongoing industry-wide push for extreme model compression.

Why This Matters for On-Device AI

The primary value is for on-device AI inference. Reducing a model's memory footprint by 75% means:

- A 7B parameter model whose weights normally require ~14GB of RAM at 16-bit precision could potentially run in ~3.5GB.

- This brings capable LLMs into the realm of standard consumer MacBooks and even high-end iPhones/iPads.

- It enables faster loading times and the potential to run multiple models or larger context windows within the same hardware constraints.
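The 14GB and 3.5GB figures above follow directly from parameter count and bits per parameter. The sketch below reproduces them. It counts weights only and ignores activations, the KV cache, and runtime overhead, so real usage will be higher; the function name is hypothetical.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return num_params * bits_per_param / 8 / 1e9


full_precision = weight_memory_gb(7e9, 16)   # 7B parameters at 16 bits each
quantized = weight_memory_gb(7e9, 4)         # same model at 4 bits each

print(f"16-bit weights: {full_precision:.1f} GB")
print(f"4-bit weights:  {quantized:.1f} GB")
print(f"reduction:      {1 - quantized / full_precision:.0%}")
```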

The "nearly no hit on performance" claim is crucial. If validated, it means the memory saving comes without the degradation in answer quality or coherence that usually accompanies aggressive quantization. Achieving both at once is the central goal of model compression.

What We Don't Know Yet

The announcement is preliminary. To assess its real impact, the community will need:

- Code Availability: Is the ported TurboQuant implementation open-sourced or publicly available?

- Benchmarks: Rigorous testing on standard model suites (like Llama 2/3, Mistral) across diverse tasks (reasoning, coding, knowledge) to quantify the exact performance trade-off.

- Comparison: How does it stack up against other quantization methods already available in the MLX ecosystem or the broader landscape (like GPTQ, AWQ, or MLX's own mlx.lm.quantize)?
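One common way to quantify the quality side of that trade-off is to compare perplexity between the full-precision and quantized model on the same text. The sketch below shows only the metric. The log-probability lists are made-up placeholders, not measurements from TurboQuant or any real model; in practice they would come from scoring a held-out dataset with each model.

```python
import math


def perplexity(token_logprobs):
    """Perplexity from natural-log probabilities of each reference token."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


# Placeholder inputs for illustration only; NOT benchmark results.
baseline_logprobs = [-1.20, -0.35, -2.10, -0.80, -0.05]
quantized_logprobs = [-1.26, -0.37, -2.18, -0.85, -0.06]

ppl_base = perplexity(baseline_logprobs)
ppl_quant = perplexity(quantized_logprobs)
relative_increase = (ppl_quant - ppl_base) / ppl_base

print(f"baseline perplexity:  {ppl_base:.3f}")
print(f"quantized perplexity: {ppl_quant:.3f}")
print(f"relative increase:    {relative_increase:.2%}")
```

A small relative increase on a held-out dataset would support a "nearly no hit" claim. Task-level benchmarks for reasoning, coding, and knowledge would still be needed to complete the picture.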

gentic.news Analysis

This development taps directly into two converging, high-trend entities in our knowledge graph: Apple MLX and the broader theme of Model Quantization. MLX has seen a surge in community activity and project integrations since its release, positioning itself as a core tool for the Apple AI developer stack. Canuma's work is a direct contributor to that ecosystem growth.

The push for efficient on-device AI is not new. This follows Apple's own quiet but consistent strategy of enabling powerful ML on its silicon, as we covered in our analysis of MLX's release and its implications for local LLM development. However, most early MLX quantization efforts have been adaptations of widely used, general-purpose methods. A port of TurboQuant with such aggressive memory claims suggests the community is moving beyond those baseline adaptations and toward tuning specialized quantization techniques for Apple hardware.

This aligns with a competitive trend we're tracking: the race to shrink models for edge deployment. It stands in contrast to cloud-focused scaling and complements other efficiency approaches like speculative decoding or mixture-of-experts. If the claims hold, they could put mild pressure on other edge-focused frameworks (like TensorFlow Lite and ONNX Runtime) to demonstrate similar efficiency gains on their respective platforms. The key test will be independent replication and benchmarking, which our technical audience will demand before integrating it into any pipeline.

Frequently Asked Questions

What is TurboQuant?

TurboQuant is a post-training quantization (PTQ) method designed to compress large language models by reducing the bit-width of their parameters (e.g., to 3 or 4 bits). Its stated goal is to achieve high compression ratios with minimal loss in model accuracy, making LLMs more feasible to run on devices with limited memory.

What is Apple MLX used for?

Apple MLX is a machine learning framework built for Apple Silicon. It allows developers and researchers to train and run models, including large language models, efficiently on Macs with M-series chips by leveraging the unified memory architecture. It has become a popular tool for running local, open-source LLMs.

How does a 75% memory reduction help?

A 75% reduction in memory usage means a model requires one-quarter of its original RAM. This allows significantly larger models to run on consumer hardware (like a MacBook Air) or enables existing models to run with larger context windows (processing more text at once). It's critical for practical, responsive local AI applications.

Are there benchmarks available for this MLX TurboQuant port?

As of this initial announcement, no detailed benchmarks have been published. The claim of "nearly no hit on performance" with 75% less memory is based on the developer's testing. The technical community will need access to the code and independent validation on standard tasks to verify the performance trade-offs.