A new open-source project demonstrates how to perform single- or multi-object tracking in videos entirely on a local machine, with no cloud-based vision API involved. The method combines Meta's recently released Segment Anything 3 (Sam3) model with MLX, Apple's machine learning framework optimized for Apple Silicon.

What Happened

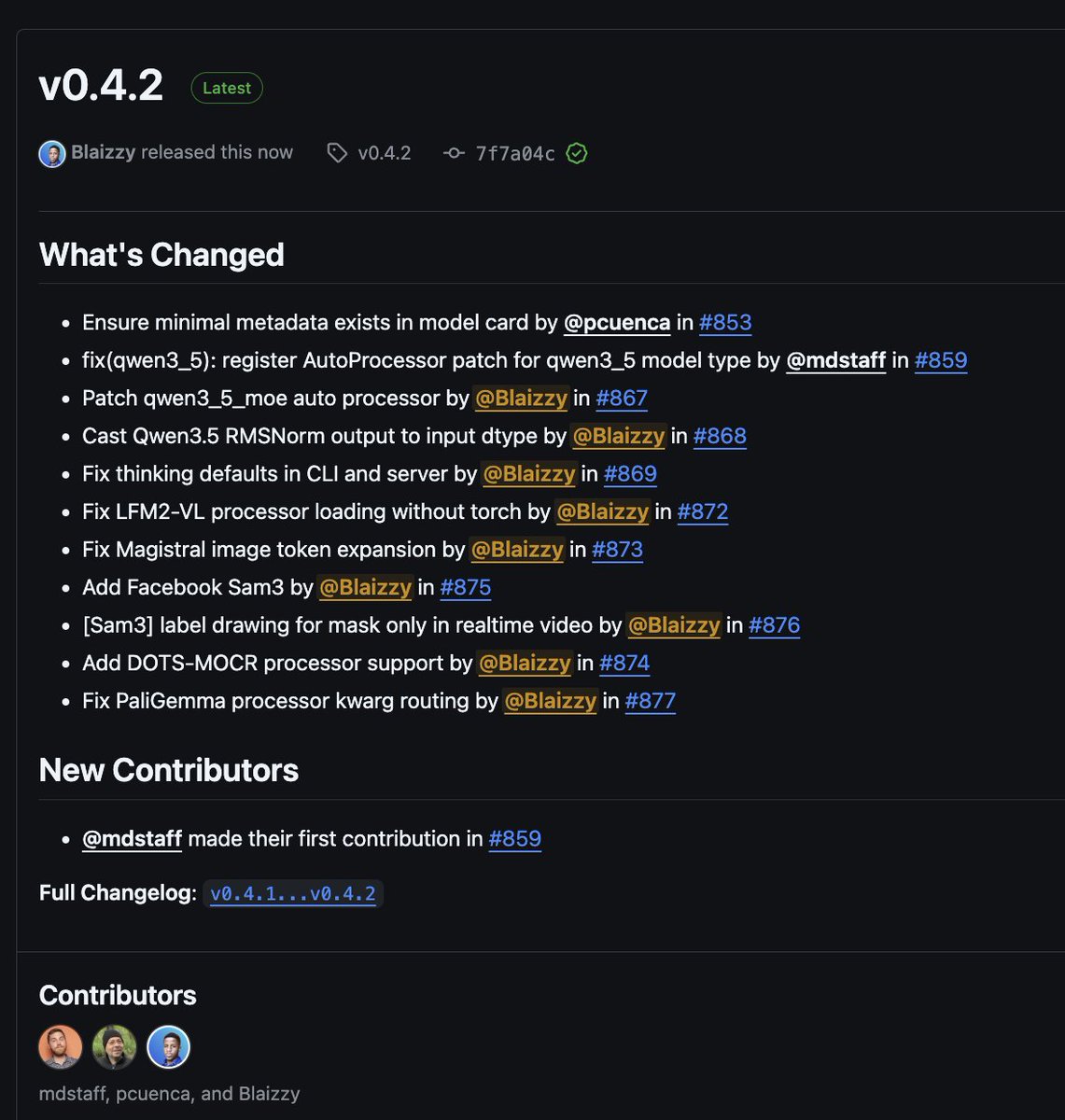

Developer Prince Canuma shared a working implementation that uses Sam3 for initial object segmentation and a tracking algorithm to follow those segments across video frames. The entire pipeline runs locally using MLX, allowing it to execute efficiently on Macs with M-series chips. The project is hosted on GitHub, providing code that users can clone and run without sending video data to external services.

Technical Details

The implementation leverages two key technologies:

- Segment Anything 3 (Sam3): Meta's foundation model for segmenting objects in images and videos from point, box, or text prompts. Released in late 2025, Sam3 improves on its predecessor with better zero-shot performance and stronger video segmentation.

- MLX: Apple's machine learning array framework for Apple Silicon. MLX lets models like Sam3 run efficiently on Mac CPUs and GPUs without cloud compute. Its core array API closely follows NumPy, and its higher-level packages such as `mlx.nn` are modeled on PyTorch.

The workflow is straightforward: a user provides a video and selects an object (or objects) to track in the first frame. Sam3 creates a precise mask of the object. A tracking algorithm then uses this mask to locate the object in subsequent frames, potentially with periodic re-segmentation by Sam3 to correct drift.
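The workflow above can be sketched as a short loop. The version below is a minimal illustration in plain Python, not the project's actual code: `segment` and `propagate` are hypothetical callables standing in for Sam3 and the tracking algorithm, and the `toy_*` functions, the set-of-pixels mask representation, and the `resegment_every` parameter are all assumptions chosen so the example runs without any model.

```python
from typing import Callable, List, Sequence, Set, Tuple

Pixel = Tuple[int, int]            # (row, col)
Mask = Set[Pixel]                  # pixels that belong to the tracked object
Box = Tuple[int, int, int, int]    # (row_min, col_min, row_max, col_max), inclusive
Frame = List[List[int]]            # toy frame: 1 = object pixel, 0 = background


def iou(a: Mask, b: Mask) -> float:
    """Intersection over union of two masks (1.0 if both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def bounding_box(mask: Mask, pad: int = 1) -> Box:
    """Padded bounding box of a non-empty mask, used as a re-segmentation prompt."""
    rows = [r for r, _ in mask]
    cols = [c for _, c in mask]
    return (min(rows) - pad, min(cols) - pad, max(rows) + pad, max(cols) + pad)


def track(
    frames: Sequence[Frame],
    first_box: Box,
    segment: Callable[[Frame, Box], Mask],      # hypothetical stand-in for Sam3
    propagate: Callable[[Mask, Frame], Mask],   # hypothetical stand-in for the tracker
    resegment_every: int = 5,
) -> List[Mask]:
    """Segment the first frame, then propagate, re-segmenting every N frames."""
    masks: List[Mask] = []
    for i, frame in enumerate(frames):
        if i == 0:
            mask = segment(frame, first_box)
        else:
            mask = propagate(masks[-1], frame)
            # Periodic re-segmentation, prompted by the tracked box, to limit drift.
            if resegment_every > 0 and i % resegment_every == 0 and mask:
                mask = segment(frame, bounding_box(mask))
        masks.append(mask)
    return masks


def toy_segment(frame: Frame, box: Box) -> Mask:
    """Toy 'segmenter': every object pixel inside the prompt box."""
    r0, c0, r1, c1 = box
    return {
        (r, c)
        for r, row in enumerate(frame)
        for c, value in enumerate(row)
        if value and r0 <= r <= r1 and c0 <= c <= c1
    }


def toy_propagate(prev_mask: Mask, frame: Frame) -> Mask:
    """Toy 'tracker': object pixels within one pixel of the previous mask."""
    near = {(r + dr, c + dc) for r, c in prev_mask
            for dr in (-1, 0, 1) for dc in (-1, 0, 1)}
    return {
        (r, c) for r, c in near
        if 0 <= r < len(frame) and 0 <= c < len(frame[0]) and frame[r][c]
    }


def make_frame(left_col: int, height: int = 4, width: int = 10) -> Frame:
    """A 2x2 square on rows 1-2 whose left edge sits at left_col."""
    return [[1 if 1 <= r <= 2 and left_col <= c <= left_col + 1 else 0
             for c in range(width)] for r in range(height)]


# The square moves one column to the right per frame.
frames = [make_frame(col) for col in range(6)]
masks = track(frames, first_box=(0, 0, 3, 2), segment=toy_segment,
              propagate=toy_propagate, resegment_every=3)
```

In a real pipeline the two callables would wrap model inference. A metric such as `iou` between the propagated and re-segmented masks is one possible way to decide when re-segmentation is needed, instead of a fixed interval.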

Why Local Tracking Matters

Production-grade object tracking has often relied on cloud APIs from providers such as Google Cloud Vision and Amazon Rekognition, or on dedicated video AI services. This local approach offers several practical advantages:

- Cost Elimination: No per-call API fees or monthly quotas.

- Privacy & Data Sovereignty: Video data never leaves the local device, crucial for medical, security, or proprietary content.

- Latency Reduction: Frames are processed without network round-trips, so latency depends only on local compute.

- Offline Functionality: Usable in environments without reliable internet connectivity.

The trade-off is that all compute, memory, and power come from the local machine, but MLX's optimization for Apple hardware makes this feasible for many applications.
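Whether local processing is feasible for a given application comes down to simple arithmetic: how long one frame takes to process compared with the video's frame rate. The sketch below is one way to estimate that. The function names and the example numbers are illustrative assumptions, not measurements from the project.

```python
import time
from typing import Callable, Iterable


def measure_seconds_per_frame(process: Callable[[object], object],
                              frames: Iterable[object]) -> float:
    """Average wall-clock seconds that `process` spends per frame."""
    frame_list = list(frames)
    if not frame_list:
        raise ValueError("need at least one frame to measure")
    start = time.perf_counter()
    for frame in frame_list:
        process(frame)
    return (time.perf_counter() - start) / len(frame_list)


def realtime_factor(seconds_per_frame: float, video_fps: float) -> float:
    """Processing speed relative to playback; 1.0 or more keeps up with the video."""
    if seconds_per_frame <= 0 or video_fps <= 0:
        raise ValueError("both arguments must be positive")
    return 1.0 / (seconds_per_frame * video_fps)


# Hypothetical numbers: 50 ms per frame on a 30 fps video.
factor = realtime_factor(0.05, 30.0)   # about 0.67, slower than real time
keeps_up = factor >= 1.0
```

A factor below 1.0 does not rule out local use. It means the video has to be processed offline, or at a reduced frame rate or resolution.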

How to Try It

For developers interested in experimenting, the project is available on GitHub. Running it requires:

- A Mac with Apple Silicon (M1/M2/M3/M4)

- Python environment with MLX installed

- The Sam3 weights (available from Meta's repository)

The repository includes example scripts and instructions for processing video files. This is currently a developer tool, not a consumer-facing application.
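Before cloning the repository, the first two requirements can be checked with a few lines of standard-library Python. This is a minimal sketch, not part of the project: the function names are made up for illustration, and it does not check for the Sam3 weights because their location depends on the repository's own instructions. MLX itself is published on PyPI as `mlx`, so `pip install mlx` is the usual way to install it.

```python
import importlib.util
import platform
from typing import Dict, List


def check_environment() -> Dict[str, bool]:
    """Report whether this machine looks ready to run MLX-based code."""
    return {
        # MLX primarily targets macOS.
        "macos": platform.system() == "Darwin",
        # Apple Silicon reports "arm64"; a Python running under Rosetta
        # reports "x86_64" even on an M-series Mac.
        "apple_silicon": platform.machine() == "arm64",
        # True if the `mlx` package can be found, without importing it.
        "mlx_installed": importlib.util.find_spec("mlx") is not None,
    }


def missing_requirements(status: Dict[str, bool]) -> List[str]:
    """Names of the checks that failed, in the order they were run."""
    return [name for name, ok in status.items() if not ok]


status = check_environment()
problems = missing_requirements(status)
if problems:
    print("Missing requirements:", ", ".join(problems))
else:
    print("Environment looks ready for MLX.")
```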

gentic.news Analysis

This project is a tangible example of a broader trend we've been tracking: the democratization and decentralization of foundation model inference. As we covered in our analysis of Apple's MLX 1.0 release, Apple has been strategically building an ecosystem to keep AI workloads on-device, directly challenging the cloud-centric paradigm of OpenAI, Google, and Anthropic. The integration of Sam3, an open-weight model from Meta that is not tied to any particular cloud, fits neatly into this strategy. It creates a viable, local alternative to cloud vision APIs.

Technically, this implementation is more of a clever engineering synthesis than a novel research breakthrough. The real significance is in the stack choice: using MLX rather than PyTorch or ONNX Runtime on Apple hardware. A single project is limited evidence, but it suggests developers are starting to trust MLX for practical tasks beyond experimentation. If this pattern holds, we could see a new category of "MLX-optimized" model ports that bring other foundation models (like vision-language models or audio models) into fully local execution.

From a market perspective, this aligns with Meta's open-weight strategy and Apple's on-device AI focus, two approaches that in effect work together against the closed API model. For practitioners, the immediate implication is that prototyping computer vision features no longer requires cloud credits. The longer-term implication is that cost and privacy concerns may push more real-time video analysis to the edge, reshaping the architecture of applications in security, retail analytics, and media production.

Frequently Asked Questions

What is MLX and do I need a Mac to use this?

MLX is Apple's machine learning framework, designed for its Apple Silicon chips (M1, M2, M3, M4) and primarily intended to run on macOS. To run this specific Sam3 tracking implementation, you therefore need a Mac with an Apple Silicon processor. The framework optimizes computation across the CPU and GPU cores of these chips for efficient local execution.

How does this local tracking compare to cloud APIs like Google Video AI?

Cloud APIs typically offer higher throughput, managed scalability, and often more polished, production-ready features out of the box. This local solution offers no data-transmission latency, no ongoing usage costs, and complete data privacy. Accuracy may be comparable for standard objects, but cloud APIs might still lead in complex scenarios (e.g., heavy occlusion, tiny objects) because they can run larger backbone models on dedicated infrastructure. This local tool is best suited to prototyping, offline applications, or projects with strict privacy requirements.

Can I track any object in a video with this tool?

The capability depends on the Segment Anything 3 (Sam3) model's zero-shot segmentation performance. Sam3 is exceptionally good at segmenting a wide variety of objects based on a user's click or box in the first frame. However, it may struggle with very amorphous objects (e.g., smoke, water), objects that change shape drastically, or objects that are extremely small relative to the frame. The tracking algorithm's ability to maintain the track also depends on factors like motion blur and occlusion.

Is this project ready for commercial use?

As shared, this is a developer demonstration project hosted on GitHub. It provides a working proof-of-concept and codebase that can be adapted. For commercial use, significant engineering would be required to build a robust, user-friendly application with features like a proper UI, batch processing, failure handling, and optimizations for long videos. It serves as an excellent starting point but is not a plug-and-play commercial product in its current form.