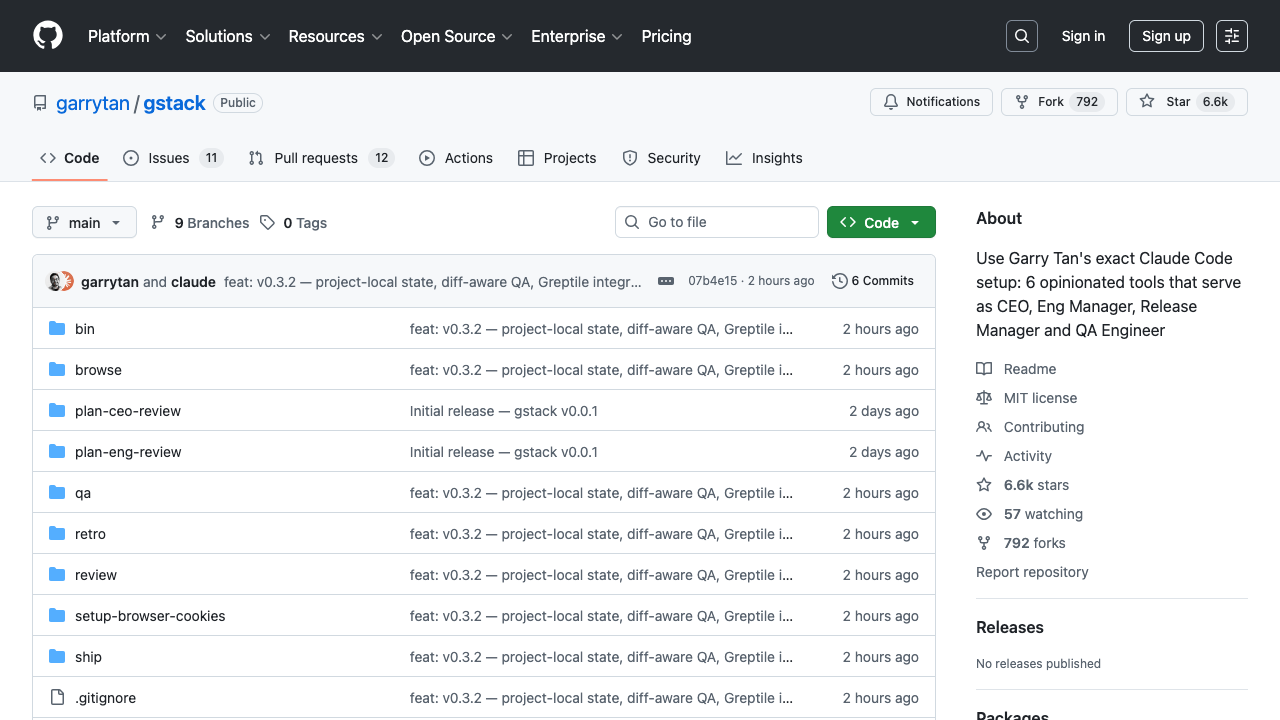

Garry Tan, Y Combinator's CEO, built GBrain as his personal agent memory system. It uses markdown files as the system of record, not a vector store.

Key facts

- Built by Y Combinator CEO Garry Tan

- 34 skills ship with GBrain

- Runs on embedded PGLite, ready in 2 seconds

- About 20 deterministic search techniques layered in concert

- Zero LLM calls for knowledge graph updates

How GBrain Inverts the Agent Memory Stack

Most agent memory tools (Mem0, Zep, LangMem, or a CLAUDE.md file) rely on vector embeddings stored in a database and retrieved by semantic similarity. Some add a knowledge graph on top. According to @akshay_pachaar, GBrain flips this model entirely.

The source of truth is a folder of markdown files: one page per person, one per company, one per concept. Each page has a two-part structure: Compiled truth on top (rewritten as new evidence arrives) and Timeline on the bottom (an append-only evidence trail that never gets edited).
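The two-part page layout can be sketched as a small data structure. This is a minimal illustration, not GBrain's actual code: the `Page` class, its field names, and the markdown headings it emits are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Page:
    """Hypothetical in-memory model of one markdown page (not GBrain's real schema)."""
    title: str
    compiled_truth: str = ""                            # rewritten as new evidence arrives
    timeline: list[str] = field(default_factory=list)   # append-only evidence trail

    def add_evidence(self, day: date, note: str, new_summary: str) -> None:
        # Timeline entries are only ever appended, never edited in place.
        self.timeline.append(f"- {day.isoformat()}: {note}")
        # The compiled truth is replaced wholesale with the updated summary.
        self.compiled_truth = new_summary

    def to_markdown(self) -> str:
        # Assumed layout: compiled truth on top, timeline on the bottom.
        lines = [
            f"# {self.title}", "",
            "## Compiled truth", "", self.compiled_truth, "",
            "## Timeline", "",
        ]
        lines.extend(self.timeline)
        return "\n".join(lines) + "\n"
```

Calling `add_evidence` twice leaves two timeline entries but only the latest summary, which mirrors the "rewrite on top, append on the bottom" split described above.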

"The markdown IS the system of record," the source states. You can open it in VS Code, edit by hand, and gbrain sync picks up changes.

Self-Wiring Knowledge Graph Without Token Spend

Every time a page is written, GBrain extracts entity references and creates typed relationship links: works_at, invested_in, founded, attended, advises. All deterministic, all regex-based, zero LLM calls. "The knowledge graph wires itself on every single write, without spending tokens," per the source.
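A rough sketch of what regex-based, LLM-free link extraction might look like follows. The source does not publish GBrain's actual rules, so the `NAME` pattern, the three phrase patterns, and the `Link` tuple are hypothetical stand-ins chosen only to show the idea.

```python
import re
from typing import NamedTuple


class Link(NamedTuple):
    source: str
    relation: str
    target: str


# Capitalized words separated by single spaces, e.g. "Acme AI" (illustrative only).
NAME = r"[A-Z][A-Za-z0-9]*(?: [A-Z][A-Za-z0-9]*)*"

# Hypothetical phrase patterns; GBrain's real rules are not given in the source.
PATTERNS = [
    ("works_at", re.compile(rf"({NAME}) works at ({NAME})")),
    ("invested_in", re.compile(rf"({NAME}) invested in ({NAME})")),
    ("founded", re.compile(rf"({NAME}) founded ({NAME})")),
]


def extract_links(text: str) -> list[Link]:
    """Return typed links found in text, using regexes only (no model calls)."""
    links = []
    for relation, pattern in PATTERNS:
        for match in pattern.finditer(text):
            links.append(Link(match.group(1), relation, match.group(2)))
    return links
```

Because the same input always yields the same links, extraction of this kind can run on every write at no token cost, at the price of missing phrasings the patterns do not cover.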

For relational queries like "who works at Acme AI?" or "what has Bob invested in this quarter?", the agent walks the graph instead of relying on vector similarity, which struggles with such queries.
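To illustrate why a graph walk suits these questions, here is a minimal sketch of a typed-edge index. The `Graph` class and its method names are assumptions for the example, and it ignores time filters such as "this quarter", which would need dated edges.

```python
from collections import defaultdict


class Graph:
    """Hypothetical typed-edge store, indexed in both directions."""

    def __init__(self) -> None:
        self._out = defaultdict(set)  # (source, relation) -> set of targets
        self._in = defaultdict(set)   # (relation, target) -> set of sources

    def add(self, source: str, relation: str, target: str) -> None:
        self._out[(source, relation)].add(target)
        self._in[(relation, target)].add(source)

    def targets(self, source: str, relation: str) -> list[str]:
        # "What has Bob invested in?" -> follow outgoing edges from Bob.
        return sorted(self._out.get((source, relation), set()))

    def sources(self, relation: str, target: str) -> list[str]:
        # "Who works at Acme AI?" -> follow incoming edges to Acme AI.
        return sorted(self._in.get((relation, target), set()))
```

Each query is an exact lookup on a relation type, so the answer is complete with respect to the stored edges rather than a ranked list of similar-looking passages.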

Search and the Compounding Loop

Search layers roughly 20 deterministic techniques, including intent classification, multi-query expansion, vector search, keyword search, reciprocal rank fusion, cosine re-scoring, compiled-truth boosting, and backlink ranking.
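Of the techniques listed, reciprocal rank fusion is the easiest to show in a few lines. The sketch below uses the standard formulation, in which each document scores the sum of 1 / (k + rank) across result lists. The constant k = 60 is the value commonly used in the literature; how GBrain configures or weights it is not stated in the source.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists into one, best first.

    Each document earns 1 / (k + rank) per list it appears in (rank starts at 1).
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] += 1.0 / (k + rank)
    # Sort by descending score; break ties alphabetically for determinism.
    return sorted(scores, key=lambda doc: (-scores[doc], doc))


vector_hits = ["a", "b", "c"]   # e.g. results from vector search
keyword_hits = ["b", "d", "a"]  # e.g. results from keyword search
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Fusion of this kind uses only rank positions, so it can combine vector and keyword results without having to put their raw scores on a common scale.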

GBrain's signal detector fires on every message, capturing entities in the background. Person mentioned once? Stub page. Three mentions across different sources? Web enrichment kicks in. After a meeting? Full pipeline.
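The escalation rules above can be read as a small decision function. The following is one interpretation, not GBrain's implementation: the function name, the action labels, and the reading of "three mentions across different sources" as at least three distinct sources are all assumptions.

```python
def next_action(mention_sources: list[str], after_meeting: bool = False) -> str:
    """Pick an enrichment tier for an entity (hypothetical labels and thresholds).

    mention_sources holds one source identifier per mention of the entity.
    """
    if after_meeting:
        return "full_pipeline"
    # Assumed reading: mentions must come from at least three distinct sources.
    if len(set(mention_sources)) >= 3:
        return "web_enrichment"
    if mention_sources:
        return "stub_page"
    return "none"
```

Under this reading, three mentions in the same email thread would still yield only a stub page, since they count as a single source.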

The agent runs a dream cycle overnight: scans conversations, enriches missing entities, fixes broken citations, consolidates memory. "You wake up and the brain is smarter than when you went to bed."

Technical Architecture and Distribution

GBrain ships with 34 skills, runs on embedded PGLite (no server, ready in 2 seconds), and works as an MCP server for Claude Code, Cursor, and Windsurf. What sets it apart is the inversion of the standard agent memory architecture: markdown is the canonical store, and vector search is just one of roughly 20 complementary techniques rather than the primary retrieval mechanism.

What to watch

Watch for community adoption metrics: whether GBrain's markdown-as-source-of-truth approach gains traction beyond Garry Tan's personal use, and whether other agent frameworks adopt similar deterministic graph-wiring patterns to reduce token spend.