Key Takeaways

- A developer has shared a backend implementation guide for automating AI model fine-tuning with MLX, Apple's machine learning framework for Apple Silicon.

- This enables private, on-device model customization without cloud dependencies, which is crucial for handling sensitive data.

What Happened

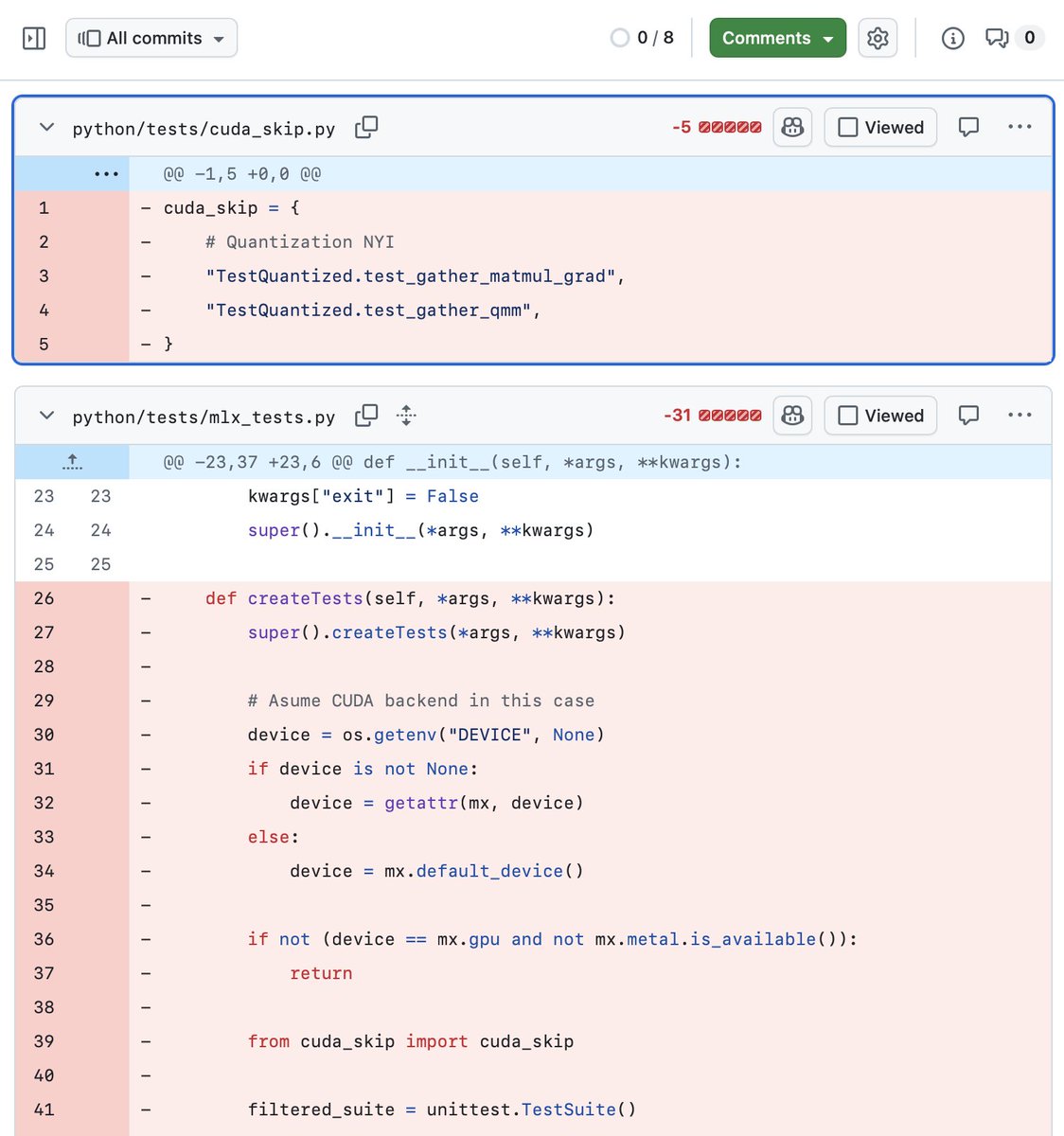

A developer has published a detailed technical guide titled "Technical Implementation: Building the Local Fine-Tuning Engine." The core focus is on implementing the backend logic to automate the process of fine-tuning AI models using MLX, Apple's machine learning framework designed to run efficiently on Apple Silicon (M-series chips).

The article is presented as a "hands-on" guide, suggesting it provides concrete code and architectural patterns rather than just theoretical concepts. The goal is to build an engine that manages the entire fine-tuning pipeline locally, from data preparation and training loop execution to model checkpointing and evaluation. This approach emphasizes privacy, cost control, and reduced latency by eliminating reliance on cloud-based GPU services.
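The pipeline described above (data preparation, training, checkpointing, evaluation) can be pictured as a small orchestrator that runs named stages in order. The following is a minimal sketch under stated assumptions, not the guide's actual code: `RunState`, `run_pipeline`, and every stage function are hypothetical names, and the stage bodies are placeholders where a real engine would call into MLX.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class RunState:
    """Mutable record passed from stage to stage (hypothetical structure)."""
    config: Dict[str, object]
    artifacts: Dict[str, object] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)


Stage = Callable[[RunState], None]


def run_pipeline(state: RunState, stages: Dict[str, Stage]) -> RunState:
    """Run each stage in insertion order and record which ones completed."""
    for name, stage in stages.items():
        stage(state)
        state.log.append(name)
    return state


# Placeholder stages: a real engine would call into MLX at these points.
def prepare_data(state: RunState) -> None:
    examples = state.config["examples"]
    split = int(len(examples) * 0.8)  # 80/20 train/validation split
    state.artifacts["train"] = examples[:split]
    state.artifacts["valid"] = examples[split:]


def train(state: RunState) -> None:
    # Stand-in for the training loop: only counts the steps it would take.
    state.artifacts["steps"] = state.config["epochs"] * len(state.artifacts["train"])


def checkpoint(state: RunState) -> None:
    # Stand-in for saving weights: only records a checkpoint name.
    state.artifacts["checkpoint"] = f"adapter-step-{state.artifacts['steps']}"


def evaluate(state: RunState) -> None:
    # Stand-in for evaluation: only records the validation set size.
    state.artifacts["evaluated_on"] = len(state.artifacts["valid"])


STAGES = {
    "prepare_data": prepare_data,
    "train": train,
    "checkpoint": checkpoint,
    "evaluate": evaluate,
}

state = run_pipeline(RunState(config={"examples": list(range(10)), "epochs": 3}), STAGES)
print(state.log)
print(state.artifacts["checkpoint"])
```

Keeping each stage as a plain function over a shared state object is one possible design choice: a stage can be swapped or re-run on its own, which is what separates an "engine" from a one-off script.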

Technical Details

While the full article is behind a Medium paywall, the premise is clear: it's a build log for a local fine-tuning system. Key technical components likely include:

- MLX Framework: The guide leverages MLX, which is built around Apple's unified memory architecture. The CPU and GPU share a single memory pool, so arrays do not have to be copied between devices. This makes it feasible to fine-tune models on consumer hardware like MacBooks or Mac Studios, within the limits of the machine's memory.

- Automation Logic: The "engine" concept implies moving beyond one-off scripts. The implementation probably covers automating dataset loading, applying parameter-efficient fine-tuning (PEFT) methods like LoRA (Low-Rank Adaptation), managing training runs, and saving results.

- Local-First Architecture: The entire stack is designed to run on a local machine. This addresses data sovereignty concerns and provides a predictable, one-time cost (the hardware) versus variable cloud compute bills.
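To illustrate why LoRA suits memory-constrained local hardware, here is a minimal pure-Python sketch of the idea: the pretrained weight matrix W stays frozen, and only two small factors A and B are trained, with the layer output computed as W x + (alpha / r) B A x. The layer size, rank, and `alpha` values below are illustrative assumptions, and the helper names are hypothetical. This is not MLX code and not taken from the guide.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors B (d_out x r) and A (r x d_in)."""
    return rank * (d_out + d_in)


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def lora_forward(W, A, B, x, alpha: float, rank: int):
    """Compute W x + (alpha / rank) * B (A x); W is frozen, only A and B train."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


# Illustrative layer size (an assumption): one 4096 x 4096 projection, rank 8.
full = 4096 * 4096
adapter = lora_trainable_params(4096, 4096, rank=8)
print(full, adapter, f"{adapter / full:.2%}")

# Tiny numeric check with a rank-1 adapter.
W = [[1.0, 2.0], [3.0, 4.0]]
A = [[0.5, 0.25]]            # shape (1, 2)
x = [1.0, 1.0]

B_zero = [[0.0], [0.0]]      # zero-initialised B: the adapter is a no-op
print(lora_forward(W, A, B_zero, x, alpha=2.0, rank=1))

B_trained = [[1.0], [0.0]]   # a non-zero B shifts the output
print(lora_forward(W, A, B_trained, x, alpha=2.0, rank=1))
```

For this single illustrative layer, the adapter holds 65,536 trainable values against 16,777,216 in the full matrix, which is why gradients and optimizer state for LoRA need far less memory than full fine-tuning.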

Retail & Luxury Implications

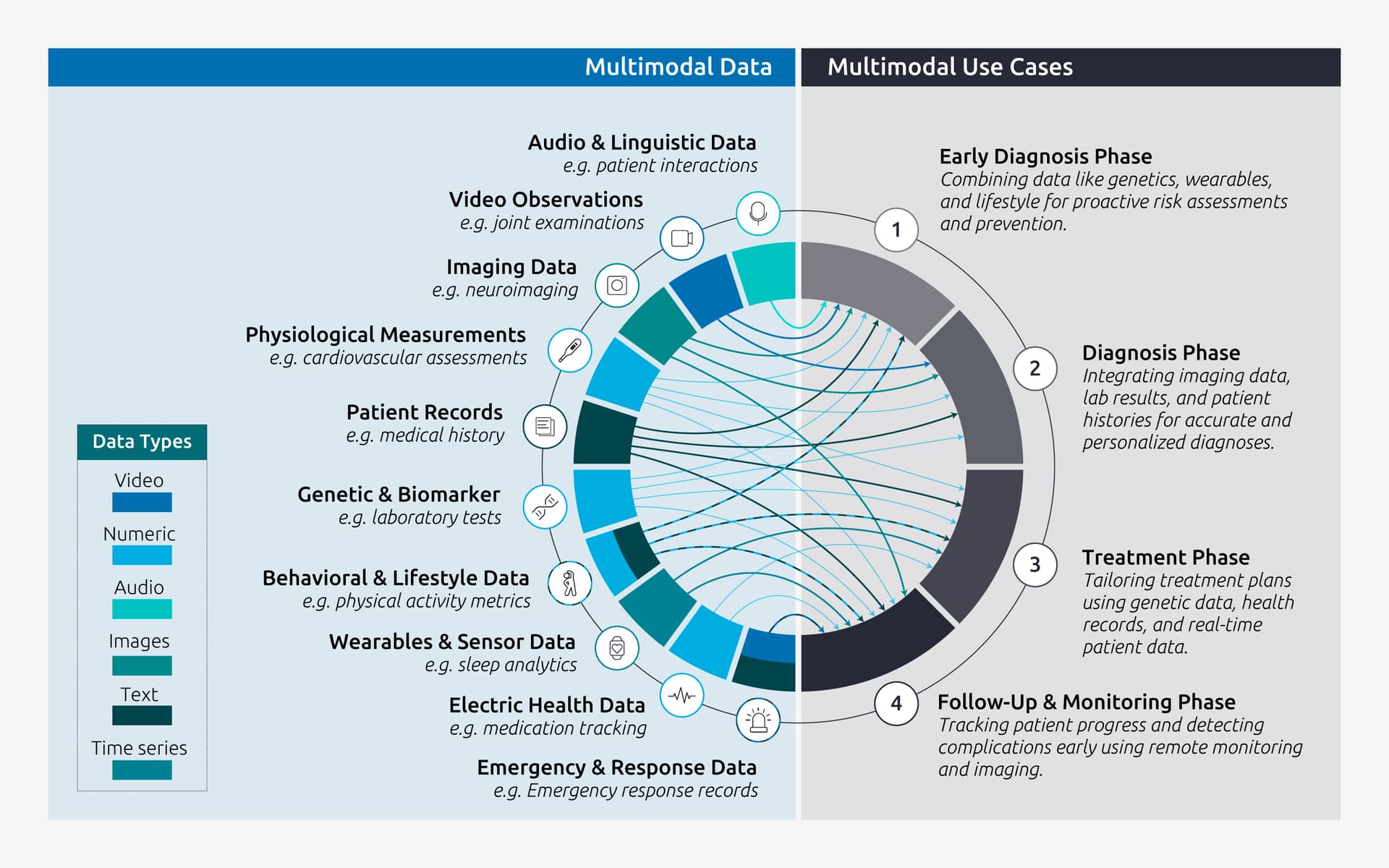

The ability to fine-tune models locally has significant, though nascent, implications for retail and luxury. The primary value is in creating highly specialized, proprietary AI assistants without exposing sensitive internal data.

Private Clienteling Models: A brand could fine-tune a small, efficient language model (e.g., a 7B parameter model) on its private corpus of client notes, purchase histories, and stylist insights. This creates a hyper-personalized clienteling assistant that understands brand-specific terminology and client relationships, all running on a secured company device. No customer data ever leaves the premises.
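Whether a 7B parameter model fits on a given company device depends largely on how its weights are stored. The sketch below is back-of-the-envelope arithmetic, not a measurement: it counts weight storage only and ignores activations, optimizer state, and the small overhead that quantization formats add. `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

Under these assumptions the weights take roughly 14 GB at 16-bit precision and about 3.5 GB at 4-bit, which is the kind of estimate a team would run before choosing hardware for on-premises fine-tuning.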

Internal Process Automation: Local fine-tuning engines can be used to create models that understand complex internal workflows. For example, a model could be tuned on historical inventory reports, supply chain communications, and product descriptions to become an expert assistant for planners or merchandisers, answering complex natural language queries about stock levels or product attributes.

Prototyping and Innovation: A local setup drastically reduces the cost and friction of experimentation. AI teams can rapidly prototype new applications, such as a copywriting assistant tuned on a brand's historical campaign language, without going through lengthy cloud service procurement and data security reviews for each experiment.

The Gap: The current guide is a technical starting point. For a luxury enterprise, the journey from this engine to a production system involves significant additional work: implementing robust data pipelines, establishing model governance and versioning, and integrating the fine-tuned model into existing business applications. This is an enabling technology for advanced AI teams, not an off-the-shelf product.