Google has shifted its open-source AI strategy with the release of the Gemma 4 family, a suite of four models built from the same foundational research as its proprietary Gemini 3. The key departure is licensing: all four models ship under the permissive Apache 2.0 license rather than the previous custom Gemma license, which gives developers significantly more freedom to modify the models and deploy them commercially.

What's New: Four Models, One License

Google is releasing four distinct model sizes, targeting different deployment scenarios:

| Model | Parameters | Architecture | Target deployment |
| --- | --- | --- | --- |
| Gemma 4 2B | 2 Billion | "Effective" (likely distilled) | Smartphones, edge devices |
| Gemma 4 4B | 4 Billion | "Effective" | Edge devices, laptops |
| Gemma 4 26B | 26 Billion | Mixture of Experts (MoE) | Servers, cloud inference |
| Gemma 4 31B | 31 Billion | Dense | High-performance servers |

The 2B and 4B "Effective" models are optimized for on-device inference. The 26B model represents Google's first open-source Mixture-of-Experts (MoE) model in the Gemma line, a sparsely-activated architecture that can maintain a large parameter count while keeping computational costs lower during inference. The 31B model is a traditional dense model.

Technical Details: Performance and Capabilities

Google's primary claim is "an unprecedented level of intelligence-per-parameter." The company cites the Arena AI text leaderboard, where the 31B and 26B models reportedly hold the third and sixth spots respectively, outperforming models up to 20 times their size. Arena AI uses human preference voting, making this a strong indicator of perceived output quality.

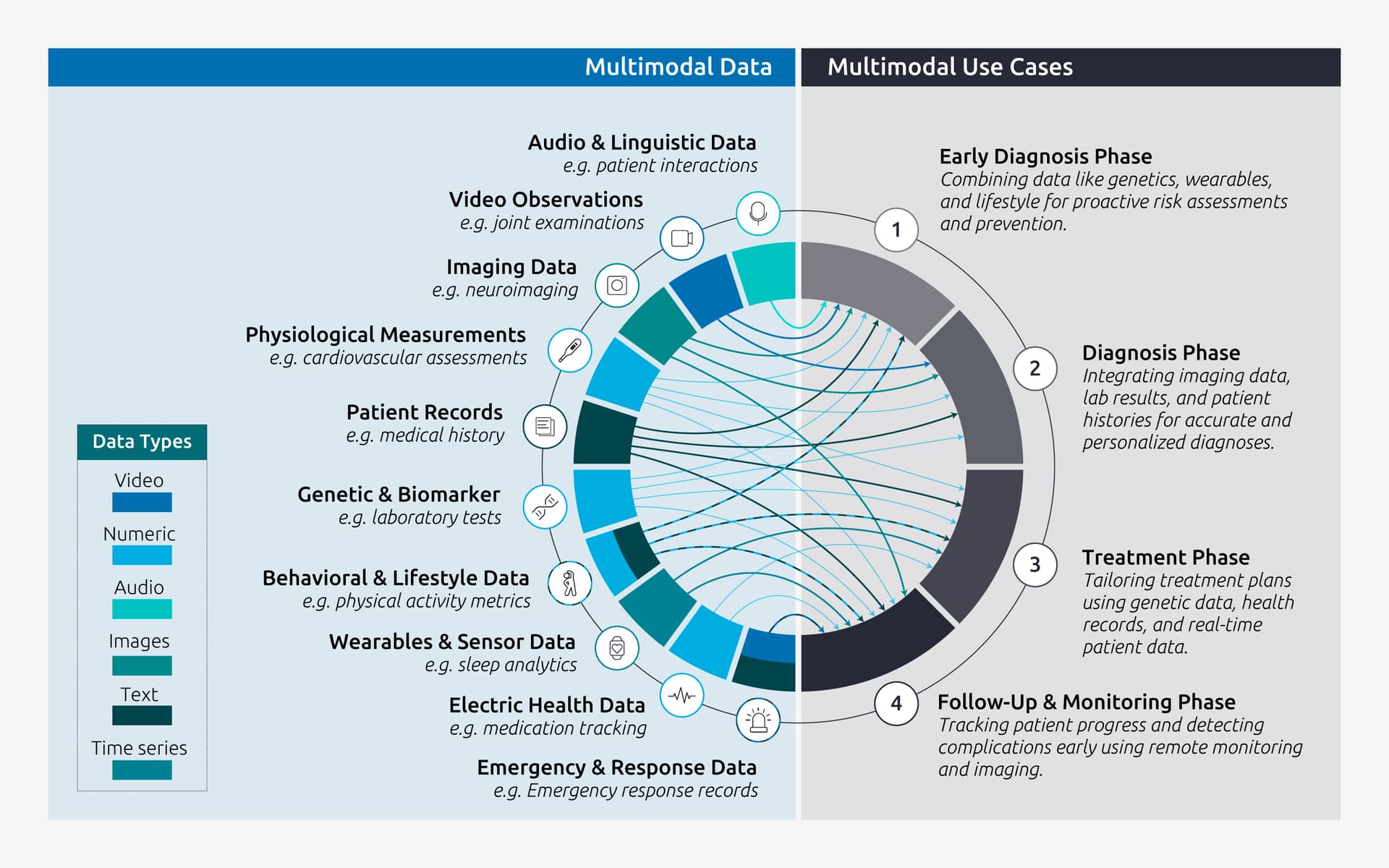

All four models are multimodal from the ground up, capable of processing video, images, and text. This makes them suitable for tasks like visual question answering or optical character recognition (OCR). The two smaller edge models (2B and 4B) add audio input and speech understanding capabilities. Google also states the entire family supports offline code generation and has been trained on data from over 140 languages.

The shift to the Apache 2.0 license is a major change from the previous Gemma license, which included use-case restrictions. Apache 2.0 is a standard, well-understood open-source license that allows for commercial use, modification, distribution, and patent use, providing what Google calls "complete developer flexibility and digital sovereignty."

How It Compares: Filling the Open-Source MoE Gap

The Gemma 4 26B MoE model enters a competitive but sparse segment of the open-source landscape. Until now, high-performing open MoE models have been limited, with leaders like Meta's Llama models being predominantly dense. Google's release provides a direct, Apache-licensed alternative for developers seeking the efficiency benefits of MoE architecture.

The permissive licensing also contrasts with the licensing of other leading open-weight models, many of which use custom licenses (like Meta's Llama) or non-commercial variants. This move could accelerate enterprise adoption for on-premises deployment where data privacy is paramount.

What to Watch: Benchmarks and Real-World Efficiency

While the Arena AI ranking is promising, independent benchmarking on standardized academic suites (like MMLU, GSM8K, HumanEval) will be critical to validate Google's efficiency claims. Practitioners should also test the real-world latency and memory footprint of the 26B MoE model compared to a dense 31B model to quantify the MoE advantage.
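
One way to start on that comparison is a small harness that estimates weight storage and times generation calls. The sketch below is illustrative only: `fake_generate` is a hypothetical stand-in for a real model call, and the 16-bit weight precision (2 bytes per parameter) is an assumption, since the article does not state how the weights are stored.

```python
import statistics
import time


def weight_memory_gb(params_billions, bytes_per_param):
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Ignores KV cache, activations, and runtime overhead.
    """
    return params_billions * bytes_per_param


def measure_latency(generate, prompts, warmup=1, runs=5):
    """Time generate(prompt) over all prompts; return sample count, median, and max."""
    for prompt in prompts[:warmup]:
        generate(prompt)  # warm-up calls are not timed
    samples = []
    for _ in range(runs):
        for prompt in prompts:
            start = time.perf_counter()
            generate(prompt)
            samples.append(time.perf_counter() - start)
    return {
        "n": len(samples),
        "median_s": statistics.median(samples),
        "max_s": max(samples),
    }


def fake_generate(prompt):
    # Hypothetical placeholder: swap in a call to the model under test.
    return prompt[::-1]


# Assumed 16-bit weights (2 bytes per parameter).
for name, params in [("26B MoE", 26), ("31B dense", 31)]:
    print(f"{name}: ~{weight_memory_gb(params, 2):.0f} GB of weights at 16-bit")

stats = measure_latency(fake_generate, ["hello", "world"], warmup=1, runs=3)
print(stats)
```

Under these assumptions the weight estimates differ only modestly between the two models, because an MoE model generally still has to keep every expert loaded. Any MoE advantage would therefore be expected to show up mainly in the latency numbers, which is why both measurements are worth taking on the same hardware.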

The availability of audio capabilities in the small models is notable for edge AI applications. However, the source does not detail the audio model's architecture (e.g., whether it uses a separate encoder or a unified multimodal backbone).

Model weights are available immediately on Hugging Face, Kaggle, and Ollama.

gentic.news Analysis

This release is a clear strategic pivot by Google to regain momentum in the open-source AI community. The shift to Apache 2.0 directly addresses developer complaints about the restrictive nature of the first Gemma license and aligns with the broader industry trend towards more permissive licensing to spur adoption. It follows a week of significant Google AI infrastructure news, including the $5B+ Texas data center investment for Anthropic and the open-sourcing of the TimesFM time-series model, indicating a coordinated push on both infrastructure and accessible model fronts.

The inclusion of a Mixture-of-Experts model is technically significant. MoE architectures, which route each input to a small subset of specialized sub-networks, have been a key differentiator for top-tier proprietary models (Gemini itself and, reportedly, GPT-4) because they reduce the compute spent per token. Google's decision to open-source a 26B MoE model, following the trend set by other releases like NVIDIA's Nemotron-Cascade 2 (which also uses MoE), helps democratize this advanced architecture. It provides researchers and developers with a crucial tool for studying and building upon sparse model designs.

Contextually, this release continues the intense competition between Google, Anthropic, and OpenAI. By strengthening its open-source portfolio, Google creates a funnel: developers start with free, powerful Gemma models for prototyping and on-prem deployment, potentially lowering the barrier to later adopting Google's cloud-based Gemini API for scaling. This "open-first" strategy contrasts with OpenAI's largely closed approach and complements Google's recent moves in agent frameworks, as covered in our article "Top AI Agent Frameworks in 2026." The multimodal and audio features in the small models also hint at Google's focus on the next frontier: ubiquitous, on-device AI agents.

Frequently Asked Questions

What is the difference between the Gemma license and Apache 2.0?

The original Gemma license was a custom agreement created by Google that included specific acceptable use policies and restrictions. The Apache 2.0 license is a standard, permissive open-source license used by thousands of projects. It grants users broad rights to use, modify, distribute, and sublicense the software for any purpose, including commercial, with minimal conditions: primarily preserving the license and attribution notices, marking modified files, and accepting that the patent grant terminates for anyone who files patent litigation over the licensed work. This change gives developers much more legal certainty and flexibility.

How does a Mixture-of-Experts (MoE) model work?

A Mixture-of-Experts model is a type of neural network architecture where the full model comprises many smaller sub-networks (the "experts"). For each input token, a router network selects only a few relevant experts to activate, leaving the rest inactive. This allows the model to have a very large total number of parameters (for knowledge capacity) while using only a fraction of them for any given token, which makes it substantially cheaper in compute per token, and typically lower in latency, than a dense model with the same total parameter count. The savings are in compute rather than storage: all experts generally still have to be held in memory.
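
The routing step can be sketched in a few lines of plain Python. This is a toy illustration of generic top-k routing, not Gemma 4's actual implementation: the expert functions, the router weights, the choice of `k=2`, and normalizing the mixing weights with a softmax over only the selected experts are all assumptions made for the example.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    # Assumption: mixing weights are a softmax over the selected logits only.
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_layer(token, experts, router, k=2):
    """Run only the k selected experts on one token and mix their outputs."""
    routes = top_k_route(router(token), k)
    out = [0.0] * len(token)
    for idx, weight in routes:
        expert_out = experts[idx](token)  # unselected experts are never called
        out = [o + weight * y for o, y in zip(out, expert_out)]
    return out, [idx for idx, _ in routes]


# Toy setup: 8 hypothetical experts, each scaling the input by a different factor.
NUM_EXPERTS = 8
experts = [lambda x, s=i + 1: [s * v for v in x] for i in range(NUM_EXPERTS)]

# Hypothetical router: one random linear scoring row per expert.
rng = random.Random(0)
router_weights = [[rng.uniform(-1, 1) for _ in range(4)]
                  for _ in range(NUM_EXPERTS)]


def router(token):
    return [sum(w * v for w, v in zip(row, token)) for row in router_weights]


output, used = moe_layer([0.5, -1.0, 0.25, 2.0], experts, router, k=2)
print(f"experts used: {used} of {NUM_EXPERTS}")
```

Only two of the eight expert functions run for the token, which is where the compute saving comes from. In a real model the experts are feed-forward blocks and the router is learned jointly with them.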

Where can I download and run the Gemma 4 models?

The model weights are available on several major AI platforms: the Hugging Face Hub, Google's Kaggle platform, and Ollama. This wide availability supports different workflows: Hugging Face for Python developers using the transformers library, Kaggle for notebooks and experiments, and Ollama for running and managing models locally from a command-line interface.

Can the small Gemma 4 models (2B, 4B) run on a smartphone?

Yes, the 2-billion and 4-billion parameter "Effective" models are explicitly designed for edge devices, including smartphones. Their architecture is likely heavily optimized and potentially distilled from larger models to maximize performance per parameter. The inclusion of audio processing in these models makes them particularly suited for on-device voice assistant or real-time translation applications.