DataArc-SynData-Toolkit, an open-source framework from Zhichao Shi and colleagues, targets the data scarcity bottleneck in LLMs. It unifies multimodal, multilingual, and multi-task synthetic data generation into a configuration-driven pipeline.

Key facts

- Submitted to arXiv on May 2, 2026.

- Features a visual interface and a simplified CLI.

- ParallelExecutor design for efficient sample synthesis.

- Targets specialized domains and low-resource languages.

- Open-source framework from Zhichao Shi et al.

Key Takeaways

- DataArc-SynData-Toolkit is an open-source framework for multimodal synthetic data, aiming to lower technical barriers for LLM training.

- It features a configuration-driven pipeline with a visual interface and a modular architecture.

The Problem: Fragmented Synthetic Data Workflows

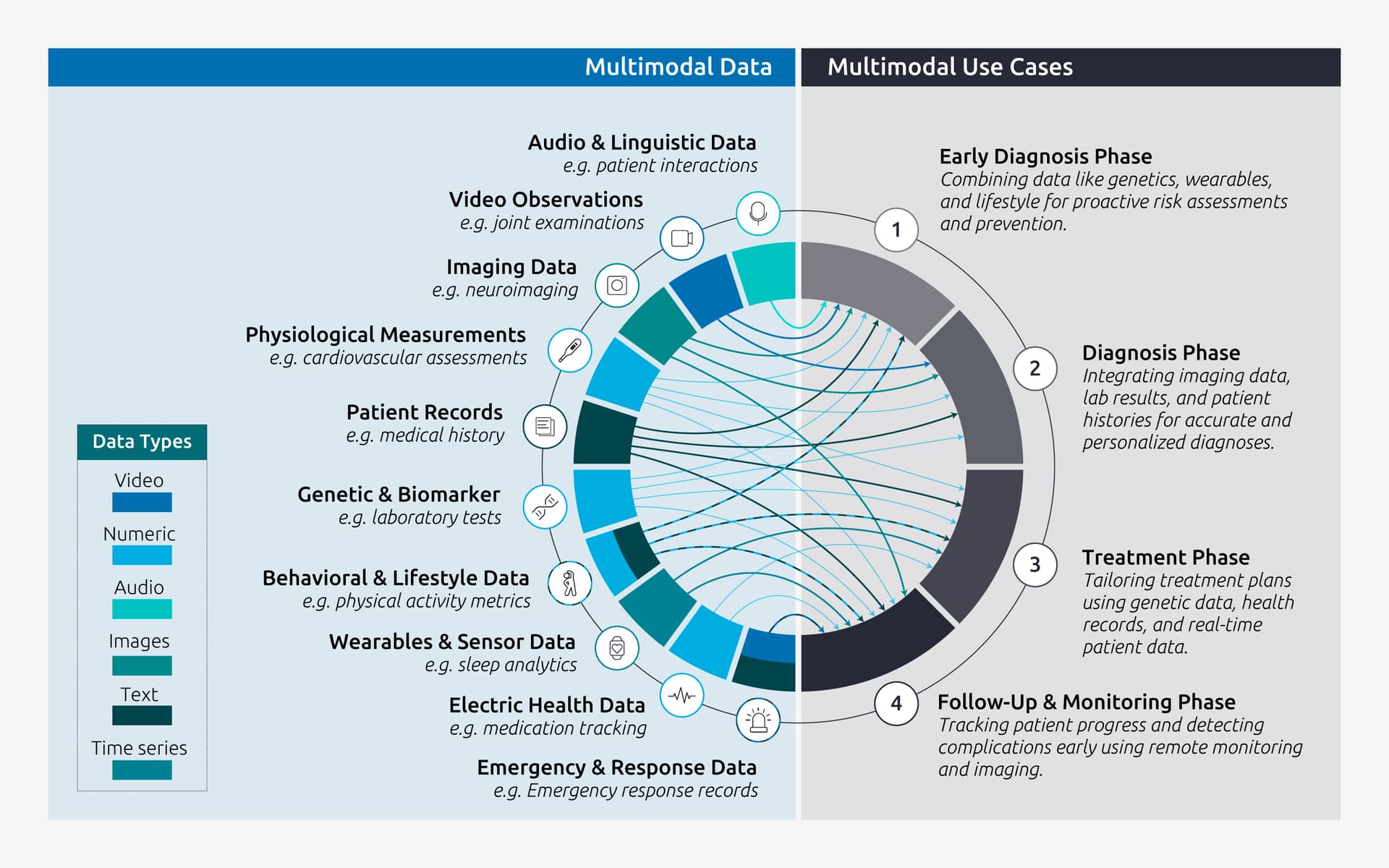

Synthetic data has become a critical tool for training large language models (LLMs), especially for specialized domains and low-resource languages where natural data is scarce. However, existing tools suffer from convoluted workflows, fragmented data standards, and limited scalability across modalities, as noted in the paper submitted to arXiv on May 2, 2026.

The Solution: A Unified, Modular Framework

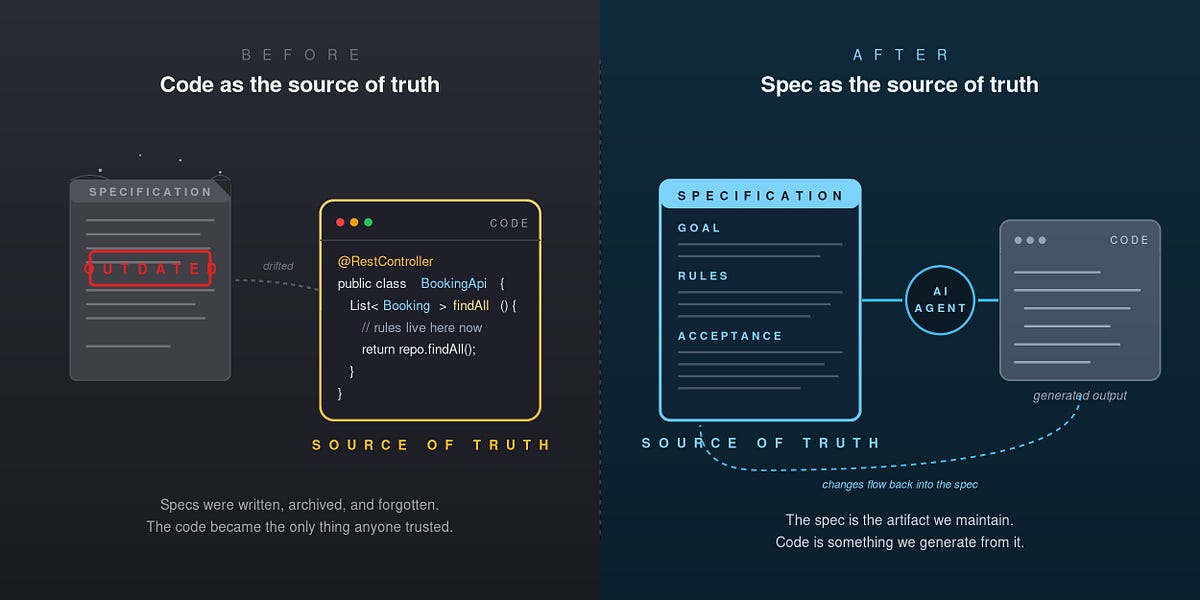

DataArc-SynData-Toolkit addresses these limitations with three core components:

- Configuration-driven pipeline: A visual interface and a simplified CLI drive the whole workflow. Users set options in a configuration file or in the visual interface, and the toolkit produces synthetic data, trained models, and evaluation results.

- Unified, quality-controllable synthesis: Standardizes multi-source data generation to ensure high reusability.

- Highly modular architecture: Designed for seamless multimodal, multilingual, and multi-task adaptation.
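
To make the configuration-driven idea concrete, here is a minimal Python sketch of a pipeline whose behavior is set entirely by one config object. It is not the toolkit's actual schema or API: the keys (`task`, `language`, `num_samples`, `min_quality`, `stages`), the stage functions, and the stub quality score are all hypothetical, chosen only to show how a single configuration could yield data, a model, and an evaluation report with a quality threshold applied along the way.

```python
# Hypothetical configuration; these keys are illustrative assumptions,
# not the toolkit's real schema.
CONFIG = {
    "task": "qa",
    "language": "sw",
    "num_samples": 8,
    "min_quality": 0.5,
    "stages": ["synthesize", "train", "evaluate"],
}


def synthesize(cfg, state):
    # Stub generator: a real system would call an LLM here.
    samples = [
        {"text": f"{cfg['language']}-{cfg['task']}-{i}", "quality": (i % 4) / 3}
        for i in range(cfg["num_samples"])
    ]
    # Quality control: keep only samples at or above the configured threshold.
    state["data"] = [s for s in samples if s["quality"] >= cfg["min_quality"]]
    return state


def train(cfg, state):
    # Stub "model" that only records how many samples it was given.
    state["model"] = {"trained_on": len(state["data"])}
    return state


def evaluate(cfg, state):
    # Stub report: the fraction of generated samples that passed the filter.
    state["report"] = {
        "kept_ratio": state["model"]["trained_on"] / cfg["num_samples"]
    }
    return state


# Registry mapping stage names in the config to their implementations.
STAGES = {"synthesize": synthesize, "train": train, "evaluate": evaluate}


def run_pipeline(cfg):
    """Run the stages named in the config, in order, passing shared state."""
    state = {}
    for name in cfg["stages"]:
        state = STAGES[name](cfg, state)
    return state


result = run_pipeline(CONFIG)
print(result["report"])
```

In this sketch, changing behavior means editing `CONFIG` rather than code, and swapping a stage means registering a different function in `STAGES`, which is one plausible reading of the modularity the authors describe.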

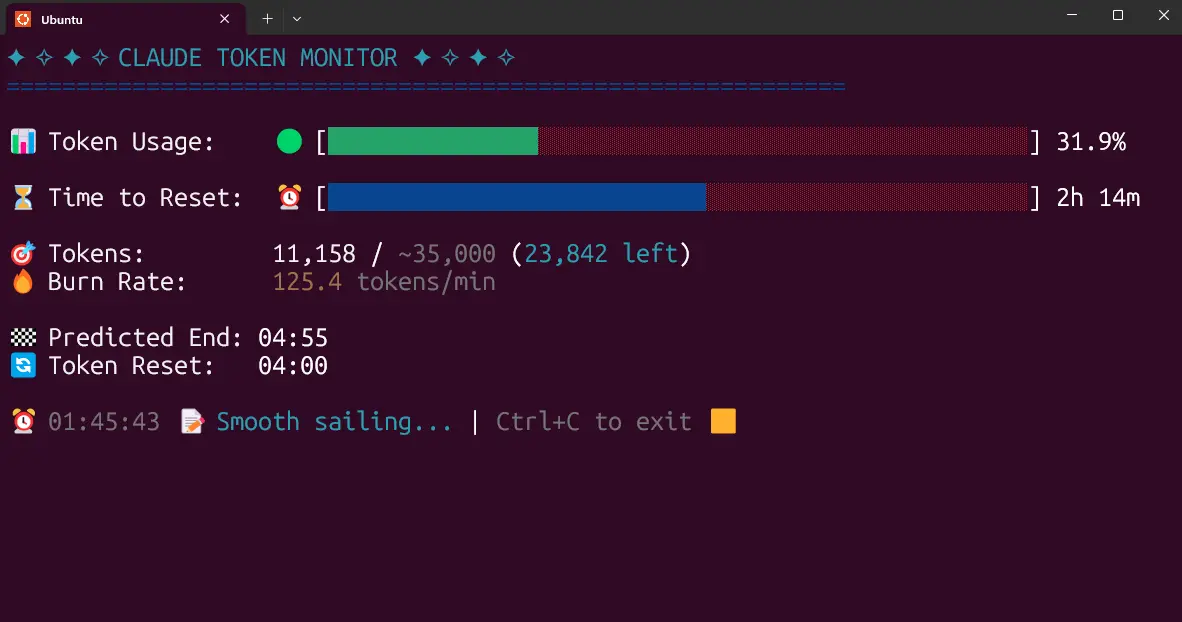

The framework divides the end-to-end pipeline into three stages: data synthesis, model training, and evaluation. A key innovation is the ParallelExecutor design, which the paper reports improves efficiency when synthesizing 500 samples.
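
The source does not describe how ParallelExecutor works internally, so the following is only a generic sketch of parallel sample synthesis using Python's standard `concurrent.futures` module. The function names and the simulated latency are assumptions for illustration. It shows the general reason a parallel executor helps: generation requests are mostly I/O-bound waits, so overlapping them shortens wall-clock time.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def synthesize_one(index: int) -> dict:
    """Hypothetical stand-in for one generation request.

    The short sleep simulates the network latency of a model call.
    """
    time.sleep(0.001)
    return {"id": index, "text": f"synthetic sample {index}"}


def run_sequential(n: int) -> list:
    # Baseline: one request at a time.
    return [synthesize_one(i) for i in range(n)]


def run_parallel(n: int, max_workers: int = 16) -> list:
    # Overlap the waits across worker threads; pool.map returns results
    # in input order, so the output matches the sequential baseline.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(synthesize_one, range(n)))


samples = run_parallel(500)
print(len(samples))
```

Timing `run_sequential(500)` against `run_parallel(500)` with `time.perf_counter` gives a rough feel for the effect, though the real speedup would depend on the model backend, rate limits, and worker count.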

Unique Take: Lowering the Barrier, Not Just Generating Data

While many synthetic data tools focus on raw generation volume or quality, DataArc-SynData-Toolkit's primary contribution is lowering the technical barrier for practitioners. The paper emphasizes that 'broader adoption of existing synthetic data tools is severely hindered by convoluted workflows', a practical bottleneck that can matter more than theoretical data quality concerns. By offering a visual interface alongside a CLI, the toolkit targets both researchers and engineers who may lack deep infrastructure expertise.

The framework's closed-loop design, which generates data, trains models, and evaluates results within a single pipeline, mirrors the iterative approach seen in recent RAG systems that retrieve at multiple reasoning steps [per recent RAG research, May 2026]. This suggests a broader industry trend toward integrated, feedback-driven development cycles.

Limitations and Open Questions

The paper does not disclose specific benchmark results or compare against existing tools like MIT's Recursive Language Models [April 2026]. The claim of 'optimal balance between generation efficiency and data quality' lacks quantitative evidence in the abstract. The authors also specify neither the supported modalities beyond text nor the computational requirements for running the toolkit.

What to watch

Watch for the release of the actual code repository and accompanying benchmarks. The paper's claims about generation efficiency and data quality need quantitative validation against existing tools like those from MIT. The framework's adoption in specialized domains will test its practical utility.