Spec Kit generates test suites from plain-English specifications, then Claude Code iterates on the implementation until the tests pass. The author claims a 90% first-pass acceptance rate on their own project; the claim has not been independently verified.

Key facts

- Spec Kit generates test suites from plain-English specs.

- Author claims a 90% first-pass test acceptance rate on their own project (unverified).

- Open-source, available on GitHub.

- Designed to integrate with Claude Code's agentic loop.

- Author did not disclose downloads or contributors.

A new open-source tool called Spec Kit proposes a spec-driven development workflow for Claude Code, Anthropic's terminal-based coding agent. Spec Kit generates test suites directly from plain-English specifications, then Claude Code iterates on the implementation until the tests pass. The author claims a 90% first-pass acceptance rate on their project [According to the source].

The workflow replaces the traditional 'write code, then write tests' loop with a 'write spec, then generate tests, then write code' sequence. This mirrors test-driven development (TDD) but automates test generation and code iteration. The author reports that the approach caught edge cases that manual coding would have missed.
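The 'write spec, then generate tests, then write code' sequence can be sketched as a small loop. This is an illustrative sketch only, not Spec Kit's or Claude Code's actual API: the function names (`tests_from_spec`, `iterate_until_green`), the example spec, and the `max_rounds` budget are all assumptions made for the example, and the list of attempts stands in for an agent's successive revisions.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Impl = Callable[[int], int]
Test = Callable[[Impl], bool]


def tests_from_spec() -> List[Test]:
    """Hypothetical stand-in for spec-to-test generation.

    Hard-coded tests for the assumed spec:
    "clamp(x) limits x to the range 0..10".
    """
    return [
        lambda f: f(5) == 5,     # value inside the range is unchanged
        lambda f: f(-3) == 0,    # value below the range is raised to 0
        lambda f: f(42) == 10,   # value above the range is lowered to 10
    ]


def iterate_until_green(
    attempts: Iterable[Impl], tests: List[Test], max_rounds: int
) -> Tuple[Optional[Impl], int]:
    """Try candidate implementations in order until one passes every test
    or the round budget is spent. Returns (passing impl or None, rounds used)."""
    rounds = 0
    for impl in attempts:
        if rounds >= max_rounds:
            break
        rounds += 1
        if all(test(impl) for test in tests):
            return impl, rounds
    return None, rounds


# Stand-ins for an agent's successive revisions (assumed for illustration).
attempts = [
    lambda x: x,                   # ignores both bounds
    lambda x: max(x, 0),           # handles the lower bound only
    lambda x: min(max(x, 0), 10),  # satisfies the spec
]

winner, rounds_used = iterate_until_green(attempts, tests_from_spec(), max_rounds=5)
print(rounds_used)  # 3
```

The tests are fixed before any implementation exists, so each revision is judged against the same contract, and the round budget caps how long the loop can run.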

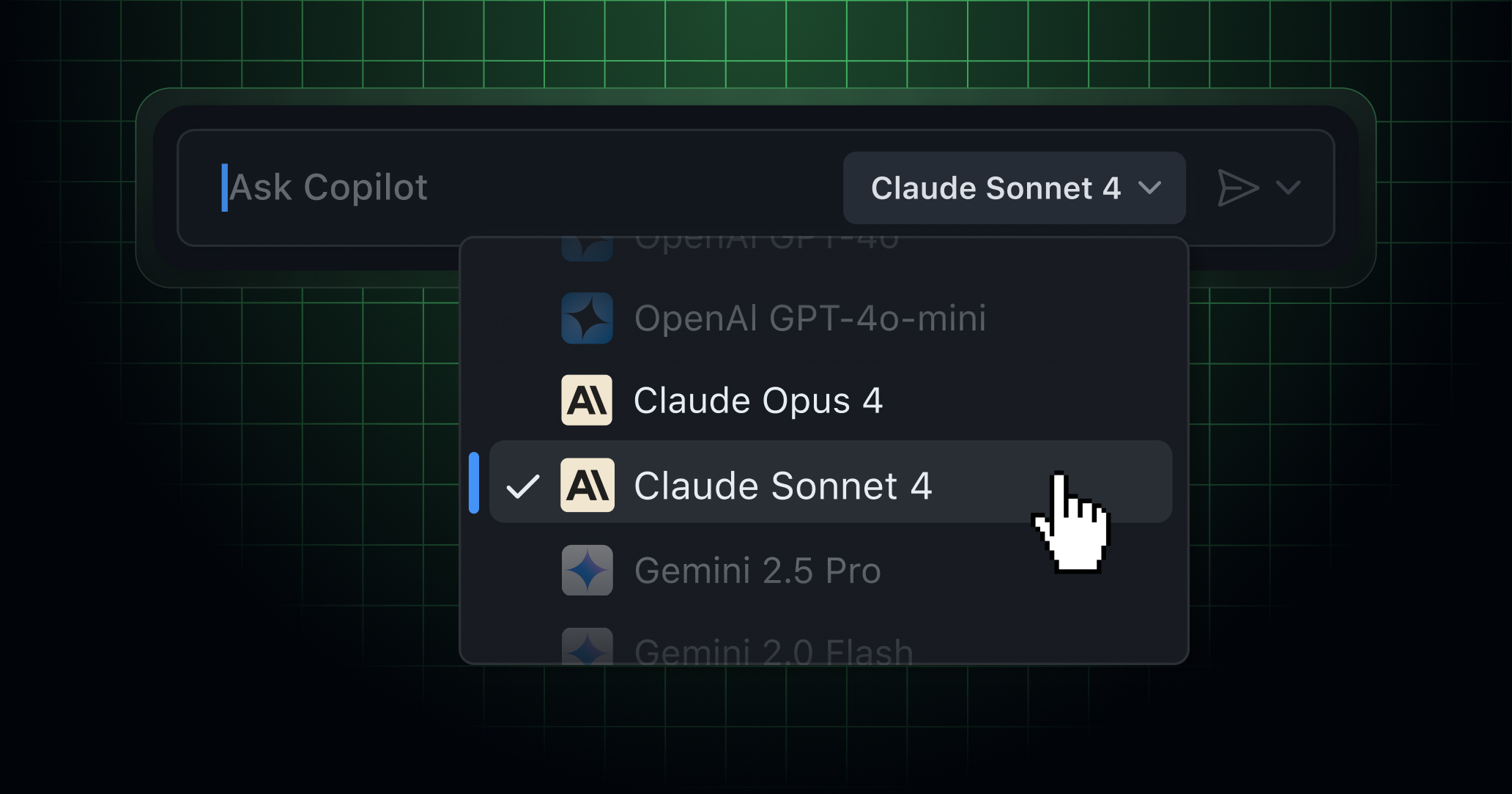

Spec Kit is open-source and available on GitHub, though the author did not disclose the number of downloads or contributors. The project does not state which LLM powers its test generation, but it is designed to integrate with Claude Code's agentic loop. This contrasts with Anthropic's own Claude Agent framework, which orchestrates multiple Claude models for complex tasks [According to the source].

The Unique Take

Spec Kit represents a shift from prompt-driven to spec-driven development. While tools like Cursor and Copilot optimize for inline code completion, Spec Kit forces the developer to define the contract first. This could reduce the 'garbage in, garbage out' problem that plagues agentic coding tools, where vague prompts produce buggy code. However, the 90% figure is a single data point from the author's own project, and no independent benchmarks exist.

Limitations

Spec Kit's effectiveness depends entirely on the quality of the plain-English spec. A poorly written spec will generate poor tests, leading to code that passes tests but fails in production. The tool also assumes Claude Code can reliably iterate until tests pass, a process that may consume a significant token budget on complex projects. Anthropic's recent post-mortem on Claude Code quality issues [2026-04-23] noted regressions in reasoning effort and context retention, which could affect this workflow.
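The "passes tests but fails in production" risk is easy to show with a toy case. The sketch below is hypothetical and not taken from Spec Kit: it assumes a vague spec ("parse_price converts a price string to a number"), two tests that such a spec might plausibly yield, and an invented production input.

```python
def parse_price(text: str) -> float:
    """Implementation that satisfies the tests derived from the vague spec."""
    return float(text)


# Tests a vague spec might produce: only plain numeric strings are covered.
vague_spec_tests = [("10", 10.0), ("3.50", 3.5)]
all_pass = all(parse_price(raw) == expected for raw, expected in vague_spec_tests)


def fails_in_production(raw: str) -> bool:
    """Return True if parse_price rejects an input the spec never mentioned."""
    try:
        parse_price(raw)
        return False
    except ValueError:
        return True


print(all_pass)                          # True
print(fails_in_production("$1,299.00"))  # True
```

Every generated test passes, yet a currency symbol and a thousands separator break the function. A spec that names the accepted formats would give the generated tests a chance to cover them.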

What to watch

Watch for independent benchmarks of Spec Kit's acceptance rate on standard software engineering tasks, and whether Anthropic integrates spec-driven workflows into Claude Code natively. Also track the tool's GitHub star count and contributor growth over the next 90 days.