A Trojan disguised as Anthropic's Claude Code appeared as the first Google search result on May 11, 2026. Windows Defender flagged the malware as Trojan:Win32/Kepavll!rfn after a user downloaded it from a site mimicking the official Claude Code homepage.

Key facts

- Trojan appeared as #1 Google result for 'claude code' on May 11, 2026

- Malware flagged as Trojan:Win32/Kepavll!rfn by Windows Defender

- Victim has been online since 1996, roughly 30 years of internet experience

- Claude Code has direct file system and shell access

- Anthropic has not yet commented on the incident

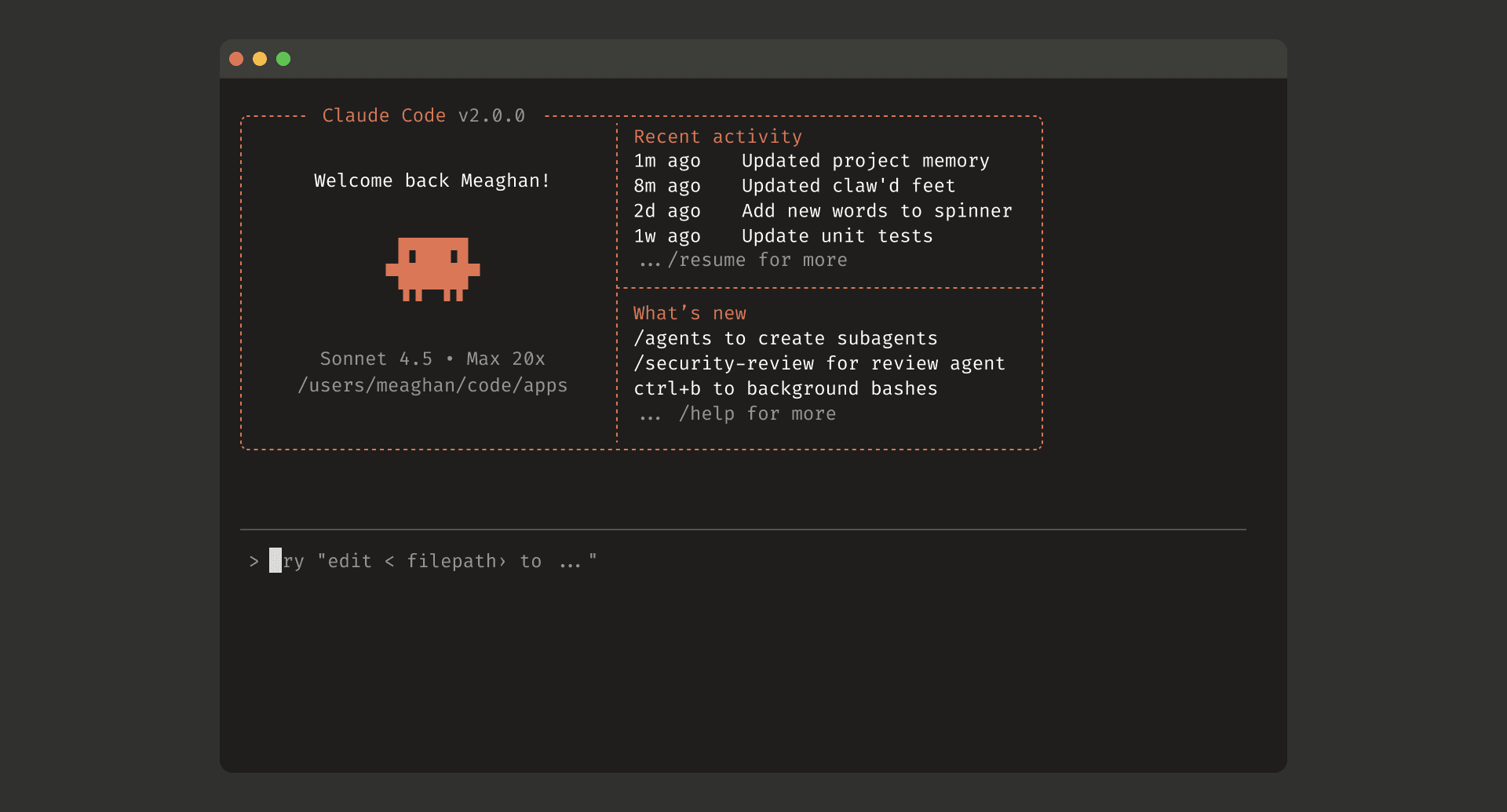

A Reddit user reported that a Google search for "claude code" returned, as its top result, a malicious site impersonating Anthropic's official Claude Code download page. The site replicated the design of the real Anthropic homepage, including its layout and color scheme, making it difficult to distinguish from the legitimate source.

The victim, who has been online since 1996 and works on a Mac, downloaded the installer on a rarely used Windows PC. Windows Defender immediately flagged the file as Trojan:Win32/Kepavll!rfn. The user noted they had previously installed Claude Code via a PowerShell command on another machine and assumed the Google result was safe.

The incident works like a supply-chain attack on the growing ecosystem of AI developer tools, although the compromised link is the distribution channel (search results) rather than Anthropic's own software. Claude Code, released in 2025, is an Anthropic agentic coding tool with direct file system and shell access. A trojanized installer posing as it would run with whatever permissions the user grants it and could exfiltrate source code, API keys, and credentials.
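Because the fake site copied the real page's look, the design says nothing about authenticity; the hostname is the more reliable signal. The following is a minimal sketch of a hostname allowlist check, not an official verification procedure. The function name `is_trusted_download_url` and the contents of `TRUSTED_DOMAINS` are assumptions made for illustration; the actual download hosts should be confirmed in Anthropic's own documentation.

```python
from urllib.parse import urlsplit

# Assumption: an illustrative allowlist. Confirm the real download hosts
# in the vendor's documentation before relying on a list like this.
TRUSTED_DOMAINS = {"anthropic.com", "claude.ai"}


def is_trusted_download_url(url: str, trusted=TRUSTED_DOMAINS) -> bool:
    """Return True only for HTTPS URLs whose host is a trusted domain
    or a subdomain of one."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # .hostname drops any userinfo and port and is already lowercased.
    host = (parts.hostname or "").rstrip(".")
    # Match the exact domain or a dot-separated subdomain, so that
    # "anthropic.com.example.net" does not pass as "anthropic.com".
    return any(host == d or host.endswith("." + d) for d in trusted)
```

A check like this rejects lookalike hosts such as `anthropic.com.example.net`, where the trusted name is only a prefix of the attacker's domain. It does not detect a compromised legitimate host, so it complements rather than replaces antivirus scanning.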

Google's Responsibility

A malicious site ranking first for a branded search query suggests either a sophisticated SEO poisoning campaign or, if the result was a paid placement, a gap in Google's ad quality controls. Google has invested heavily in AI safety, according to its own blog posts, but has not yet commented on this specific incident. The company competes directly with Anthropic through its Gemini models and CodeWiki product, adding an awkward dimension to the story.

Broader Pattern

This incident follows a trend of attackers targeting AI developers. In April 2026, researchers at MIT demonstrated that anyone with a laptop could poison major AI models, including those from Anthropic and Google. The Claude Code trojan targets a different layer: it poisons the distribution channel rather than the model.

Anthropic has not released an official statement about the malicious site. The company's Claude Code product has been rapidly adopted, appearing in 670 articles on this publication alone, making it a high-value target for impersonation.

Key Takeaways

- A Trojan impersonating Claude Code ranked #1 on Google.

- Windows Defender caught it as Trojan:Win32/Kepavll!rfn.

- The victim had 30 years of internet experience.

What to watch

Watch for Anthropic's official response and whether Google removes the malicious site from search results. If the trojan spreads further, expect a security advisory from Anthropic and possibly a broader investigation by Google Trust & Safety into SEO poisoning targeting AI tools.