Run `npx ccmeter` to see per-session spend, cache hit rates, and personalized fix recommendations — no setup, no API keys.

What Changed

Anthropic quietly shortened Claude Code's default prompt-cache TTL from 1 hour to 5 minutes in early March 2026. The rollout was staggered — different users saw it on different days. Anthropic's official line: this shouldn't increase costs because most cached context is one-shot anyway.

Users who analyzed their own session JSONL files disagree. Heavy users report a typical bill increase of 30–60% with zero change in usage.
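Both sides can be right, depending on how long you pause between turns. The sketch below prices one large cached prefix across a session. It assumes Anthropic's published prompt-caching multipliers (a 5-minute cache write costs 1.25× the base input price, a 1-hour write 2×, a cache read 0.1×) and that each cache hit refreshes the TTL. The base price, the `prefix_cost` name, and the gap patterns are hypothetical, and this is not CCmeter's code.

```python
# Sketch: cost of re-sending one cached prompt prefix under a given cache TTL.
# Assumed multipliers (Anthropic's published prompt-caching pricing):
#   5-minute cache write = 1.25x base input, 1-hour write = 2x, cache read = 0.1x.
# BASE_INPUT_PER_MTOK is a hypothetical placeholder, not a real model's price.

BASE_INPUT_PER_MTOK = 3.00           # hypothetical $ per million input tokens
WRITE_MULT = {300: 1.25, 3600: 2.0}  # TTL in seconds -> cache-write multiplier
READ_MULT = 0.1                      # cache-read multiplier


def prefix_cost(gaps_s: list[float], prefix_tokens: int, ttl_s: int) -> float:
    """Dollar cost of the cached prefix over one session.

    The first request always writes the cache. gaps_s holds the idle time in
    seconds before each later request: inside the TTL it is a cheap cache read
    (which also refreshes the TTL); otherwise the entry has expired and the
    whole prefix is written again.
    """
    per_tok = BASE_INPUT_PER_MTOK / 1_000_000
    write = prefix_tokens * per_tok * WRITE_MULT[ttl_s]
    read = prefix_tokens * per_tok * READ_MULT
    cost = write  # initial cache write
    for gap in gaps_s:
        cost += read if gap < ttl_s else write
    return cost


if __name__ == "__main__":
    idle = [480.0] * 10  # ten follow-ups, each after an 8-minute pause
    busy = [60.0] * 10   # ten follow-ups, one minute apart
    for ttl in (300, 3600):
        print(f"TTL {ttl:>4}s  idle ${prefix_cost(idle, 100_000, ttl):.2f}  "
              f"busy ${prefix_cost(busy, 100_000, ttl):.2f}")
```

With these placeholder numbers, the idle pattern costs about 4.6 times as much under the 5-minute TTL, because every follow-up rewrites the prefix. The one-minute-apart pattern comes out slightly cheaper than under the 1-hour TTL.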

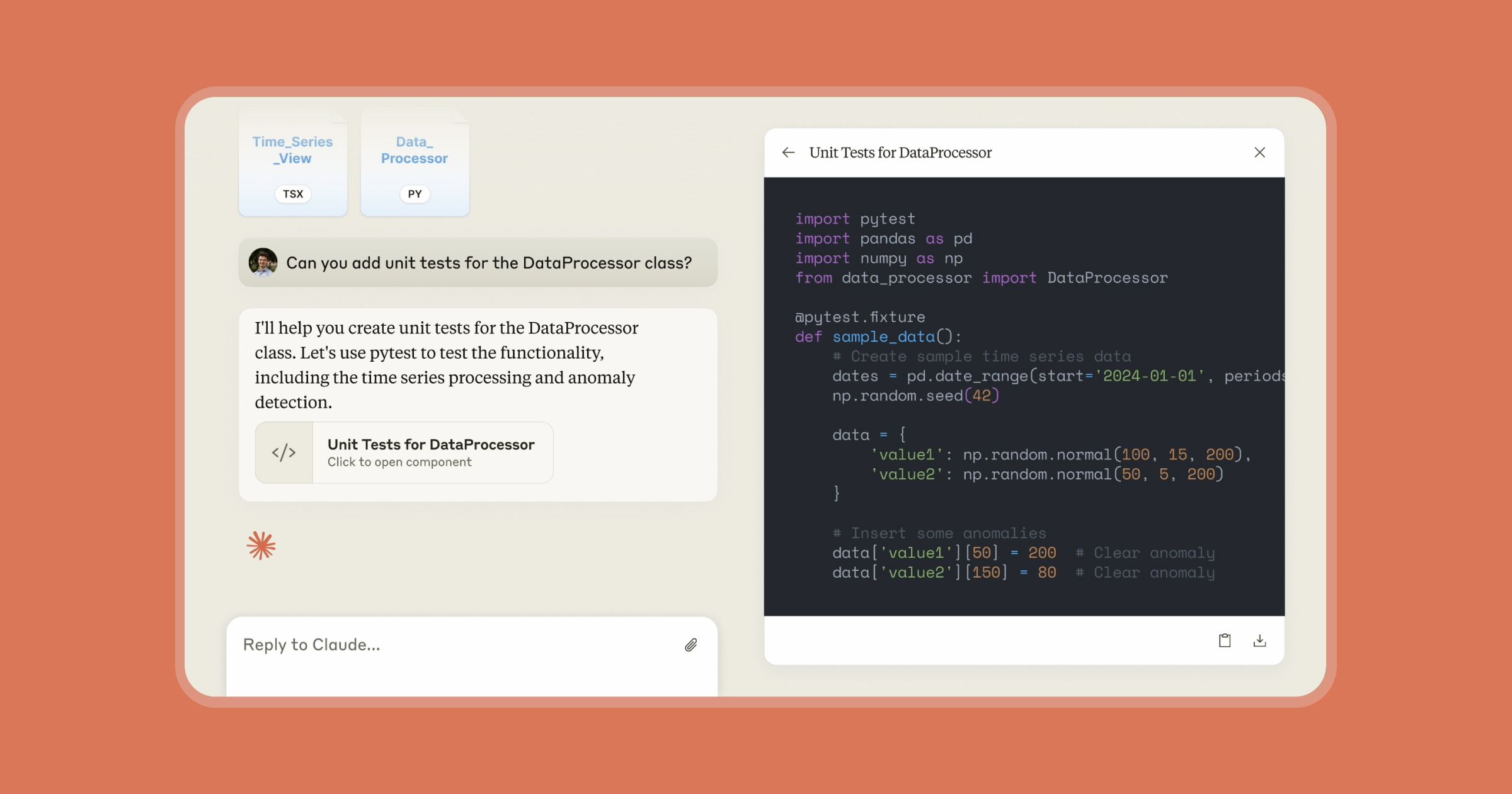

Enter CCmeter — a local-first dashboard that reads the session files Claude Code already writes to ~/.claude/projects and tells you exactly what's costing you. No telemetry, no API key, no setup.
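Because everything starts from those local files, the first step of any such analysis is pulling token counts out of them. The sketch below assumes each session file is JSONL whose assistant entries carry a `message.usage` object with `input_tokens`, `cache_creation_input_tokens`, `cache_read_input_tokens`, and `output_tokens`, plus top-level `sessionId` and `timestamp` fields. Those names reflect the logs as commonly observed, not a documented schema. The `Usage` type and function names are hypothetical, and defining hit rate as cache reads over all prompt tokens is this sketch's assumption; CCmeter may define it differently.

```python
import json
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Usage:
    session_id: str
    timestamp: str
    model: str
    input_tokens: int        # uncached prompt tokens
    cache_write_tokens: int  # prompt tokens written to the cache
    cache_read_tokens: int   # prompt tokens served from the cache
    output_tokens: int


def parse_usage_lines(lines: Iterable[str]) -> Iterator[Usage]:
    """Yield a Usage for each JSONL line whose entry carries message.usage.

    Field names are assumptions about Claude Code's log format, not a
    documented schema. Blank, malformed, or usage-free lines are skipped.
    """
    for raw in lines:
        raw = raw.strip()
        if not raw:
            continue
        try:
            entry = json.loads(raw)
        except json.JSONDecodeError:
            continue
        if not isinstance(entry, dict):
            continue
        message = entry.get("message")
        if not isinstance(message, dict) or not isinstance(message.get("usage"), dict):
            continue
        u = message["usage"]
        yield Usage(
            session_id=entry.get("sessionId", "unknown"),
            timestamp=entry.get("timestamp", ""),
            model=message.get("model", "unknown"),
            input_tokens=u.get("input_tokens", 0),
            cache_write_tokens=u.get("cache_creation_input_tokens", 0),
            cache_read_tokens=u.get("cache_read_input_tokens", 0),
            output_tokens=u.get("output_tokens", 0),
        )


def cache_hit_rate(records: Iterable[Usage]) -> float:
    """Cache reads as a share of all prompt tokens (reads + writes + uncached input)."""
    reads = writes = fresh = 0
    for r in records:
        reads += r.cache_read_tokens
        writes += r.cache_write_tokens
        fresh += r.input_tokens
    total = reads + writes + fresh
    return reads / total if total else 0.0
```

To run it over a single session file, pass the open file handle directly: `with open(path, encoding="utf-8") as f: print(cache_hit_rate(parse_usage_lines(f)))`.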

What It Means For You

The Anthropic Console gives you one number per day. That's useless for debugging. You need per-session, per-project, per-cache-bust breakdowns to:

- Verify which side of the TTL-change argument your data falls on.

- Find which sessions are the new expensive ones.

- Apply the specific behavioral fix that recovers most of the money.

CCmeter is that breakdown. It runs against data already on your machine, surfaces the patterns eating your spend, and recommends concrete fixes ranked by estimated monthly savings.

Try It Now

Quickest start:

npx ccmeter@latest

Permanent install:

npm i -g ccmeter

ccmeter

Requires Node 20+. Works on macOS, Linux, Windows. Reads ~/.claude/projects by default — set CCMETER_LOG_DIR if your logs live elsewhere.
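The documented lookup order (the `CCMETER_LOG_DIR` override, otherwise `~/.claude/projects`) can be sketched as follows. The recursive `*.jsonl` scan assumes session files sit in per-project subdirectories, and both helper names are hypothetical.

```python
import os
from pathlib import Path
from typing import List, Mapping, Optional


def resolve_log_dir(env: Optional[Mapping[str, str]] = None) -> Path:
    """CCMETER_LOG_DIR if it is set and non-empty, otherwise ~/.claude/projects."""
    env = os.environ if env is None else env
    override = env.get("CCMETER_LOG_DIR")
    if override:
        return Path(override).expanduser()
    return Path.home() / ".claude" / "projects"


def session_files(log_dir: Path) -> List[Path]:
    """Every *.jsonl file under log_dir (recursively), sorted for stable output."""
    if not log_dir.is_dir():
        return []
    return sorted(log_dir.rglob("*.jsonl"))
```

Passing the environment in as a mapping keeps the function easy to test without touching the real `os.environ`.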

Key Commands

| Command | What it does |
|---|---|
| `ccmeter` | Summary: total spend, cache hits, today's biggest leak |
| `ccmeter recommend` | Personalized fixes ranked by $/mo saved |
| `ccmeter compare` | Last 7d vs prior 7d: quantify what changed |
| `ccmeter tools` | Which tool calls cost the most (Bash, Read, …) |
| `ccmeter cache` | Cache hit rate trend + TTL-change callout |
| `ccmeter whatif --swap 'opus->sonnet'` | Simulate model swaps on your data (quote the argument so the shell does not treat `>` as a redirect) |
| `ccmeter dashboard` | Local web UI, no network |
| `ccmeter live` | Full-screen ticker |
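`ccmeter compare` reports the last seven days against the seven before. One plausible way to compute that from per-day spend is shown below. Treating "7d" as seven calendar days ending today, and returning `None` when the prior window has no spend, are assumptions of this sketch, not CCmeter's documented behavior.

```python
from datetime import date, timedelta
from typing import Dict, Optional, Tuple


def window_total(daily: Dict[date, float], end: date, days: int) -> float:
    """Sum spend for the `days` calendar days ending on `end` (inclusive)."""
    return sum(daily.get(end - timedelta(days=i), 0.0) for i in range(days))


def compare_windows(
    daily: Dict[date, float], today: date, days: int = 7
) -> Tuple[float, float, Optional[float]]:
    """Return (recent, prior, percent change); percent is None if prior spend is zero."""
    recent = window_total(daily, today, days)
    prior = window_total(daily, today - timedelta(days=days), days)
    pct = (recent - prior) / prior * 100 if prior else None
    return recent, prior, pct
```

Missing days count as zero spend, so gaps in usage lower the window total instead of raising an error.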

Real Example Output

```text
ccmeter — last 30 days
────────────────────────────────────────────────────────────────────────────────
Total spend      $284.10   (↑ +43% vs prior period)
Daily average    $9.47     ≈ $284.10/month
Sessions         127
Cache hit rate   47.3%
Cache busts      89        wasted $24.18
Daily spend      ▁▂▂▃▅▇█▆▄▅█▇▆▄▃

Suggestions:
  ● Idle sessions are busting your cache 41×/week (save $43/mo)
  ● 6 long sessions (>90 min) bled cache value (save $18/mo)
  + 4 more — run `ccmeter recommend`
```
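Two of the summary lines are plain arithmetic: the daily average is total spend divided by the number of days, and the monthly figure is that average times 30 (here $284.10 / 30 = $9.47). The sparkline maps each day's spend onto eight block characters. Below is a minimal sketch of both; min-max scaling for the bars is an assumption (CCmeter's actual scaling isn't described here), and the function names and sample values are hypothetical.

```python
BARS = "▁▂▃▄▅▆▇█"  # eight levels, lowest to highest


def sparkline(values: list[float]) -> str:
    """Render values as block characters using min-max scaling."""
    if not values:
        return ""
    lo, hi = min(values), max(values)
    if hi == lo:
        return BARS[0] * len(values)  # flat series: draw every bar at the lowest level
    top = len(BARS) - 1
    return "".join(BARS[round((v - lo) / (hi - lo) * top)] for v in values)


def daily_average_and_projection(total: float, days: int) -> tuple[float, float]:
    """Average spend per day over the window, and that average projected to 30 days."""
    avg = total / days
    return avg, avg * 30


if __name__ == "__main__":
    avg, monthly = daily_average_and_projection(284.10, 30)
    print(f"Daily average ${avg:.2f} ≈ ${monthly:.2f}/month")
    print(sparkline([2.1, 4.0, 4.2, 6.5, 9.9, 13.0, 15.2]))  # hypothetical daily spend
```

Min-max scaling makes the cheapest day in the window always render as the lowest bar, so the sparkline shows relative swings rather than absolute dollars.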

How to Use CCmeter to Cut Your Bill

- Run `ccmeter` to see your baseline.
- Run `ccmeter recommend` for prioritized fixes.
- Run `ccmeter cache` to check whether the TTL change is hitting you.
- Run `ccmeter whatif --swap 'opus->sonnet'` to see how much you'd save by switching models for specific projects.
- Tag expensive sessions with `ccmeter tag $SID "auth-refactor"` and group by tag in reports.
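The cache check in step three comes down to spotting turns that arrived after the cache entry had expired and had to rewrite it. Below is a minimal sketch, assuming a 5-minute TTL, ISO-8601 turn timestamps, and a per-turn count of cache-write tokens. Pricing the waste as the write premium over a cache read (1.25× vs 0.1× base input, per Anthropic's published rates) is this sketch's assumption, not necessarily how CCmeter arrives at its "wasted" figure; the function names are hypothetical.

```python
from datetime import datetime
from typing import List, Tuple

Turn = Tuple[str, int]  # (ISO-8601 timestamp, cache-write tokens on that turn)


def parse_ts(ts: str) -> datetime:
    # datetime.fromisoformat only accepts a trailing "Z" from Python 3.11 on.
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def find_cache_busts(turns: List[Turn], ttl_s: float = 300) -> List[Turn]:
    """Turns that followed an idle gap longer than the TTL and rewrote the cache."""
    busts: List[Turn] = []
    prev = None
    for ts, written in turns:
        t = parse_ts(ts)
        if prev is not None and (t - prev).total_seconds() > ttl_s and written > 0:
            busts.append((ts, written))
        prev = t
    return busts


def bust_waste(busts: List[Turn], base_input_per_mtok: float,
               write_mult: float = 1.25, read_mult: float = 0.1) -> float:
    """Dollars spent rewriting the cache instead of reading it."""
    per_tok = base_input_per_mtok / 1_000_000
    return sum(w for _, w in busts) * per_tok * (write_mult - read_mult)
```

The first turn of a session always writes the cache, so it is never counted as a bust; only rewrites that follow an idle gap are.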

gentic.news Analysis

This tool arrives at a critical moment. Anthropic's Claude Code ecosystem is exploding — 653 articles have mentioned it on gentic.news, and it appeared in 31 articles just this week. The product is maturing fast, but with that maturity comes cost complexity that the official console doesn't address.

CCmeter fills a gap Anthropic hasn't prioritized: granular, local-first cost observability. It's notable that the tool's creator explicitly calls out the TTL change — a quiet config tweak that, according to user data, can inflate bills 30–60%. This follows a pattern we've seen before: Anthropic makes model-side optimizations (like the recent Claude Opus 4.6 1M context window launch on April 28) that have downstream cost implications for users.

The tool's `whatif` command is particularly clever: it lets you simulate model swaps (e.g., Opus 4.6 → Sonnet 4.6) against your actual usage data. Given Anthropic's aggressive model release cadence (Claude Opus 4.6, Claude Sonnet 4.6, and the Claude Agent framework all launched recently), this kind of forward-looking cost modeling is essential.
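At its core, a model-swap simulation reprices the same token counts at another model's rates. A minimal sketch follows. The price table and model keys are hypothetical placeholders, and the cache multipliers are assumed. Holding token counts fixed ignores that a different model may produce more or fewer output tokens, so treat the result as a rough estimate rather than a forecast.

```python
from typing import Dict, List, Tuple

# Hypothetical $/million-token (input, output) prices; replace with current rates.
PRICES: Dict[str, Tuple[float, float]] = {"opus": (15.00, 75.00), "sonnet": (3.00, 15.00)}
CACHE_WRITE_MULT, CACHE_READ_MULT = 1.25, 0.10  # assumed caching multipliers


def cost(rec: dict, model: str) -> float:
    """Price one usage record as if it had been billed to `model`."""
    in_price, out_price = (p / 1_000_000 for p in PRICES[model])
    return (rec["input_tokens"] * in_price
            + rec["cache_write_tokens"] * in_price * CACHE_WRITE_MULT
            + rec["cache_read_tokens"] * in_price * CACHE_READ_MULT
            + rec["output_tokens"] * out_price)


def whatif_swap(records: List[dict], src: str, dst: str) -> Tuple[float, float]:
    """Return (actual, simulated) spend when every `src` record is repriced at `dst` rates."""
    actual = sum(cost(r, r["model"]) for r in records)
    simulated = sum(cost(r, dst if r["model"] == src else r["model"]) for r in records)
    return actual, simulated
```

Records billed to models other than `src` keep their original price, so the difference between the two totals isolates the effect of the swap.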

For Claude Code users, the actionable takeaway is clear: run `npx ccmeter` today. Even if you're not seeing a bill jump, the cache-hit and idle-session data alone will likely reveal patterns you can fix. The tool is local-first and reads only your existing logs, so no data leaves your machine.