A benchmark shared by the MLX community shows the Gemma 4 26B A4B model achieving a decode speed of 45.723 tokens per second when running on a MacBook Air. The result, posted by community member @50m360dy and reshared by @Prince_Canuma, highlights the ongoing optimization of large language models for local inference on Apple Silicon using the MLX framework developed by Apple's machine learning research team.

What Happened

The source is a social media post reporting a specific performance metric: a decode speed of 45.723 tokens/sec for the Gemma 4 26B A4B model running on a MacBook Air. The post credits the "MLX Community" and uses the colloquial "is cracked!" to indicate impressive or breakthrough performance. No additional technical details about the specific MacBook Air model (M1, M2, M3), memory configuration, quantization method, or exact MLX library version are provided in the source.

Context: MLX and Local Inference

MLX is an array framework for machine learning research on Apple Silicon, developed by Apple ML Research. It allows developers to run models efficiently on Apple's unified memory architecture. The "MLX Community" refers to developers and researchers sharing optimizations, model ports, and benchmarks for running models like Gemma, Llama, and Mistral on Macs.

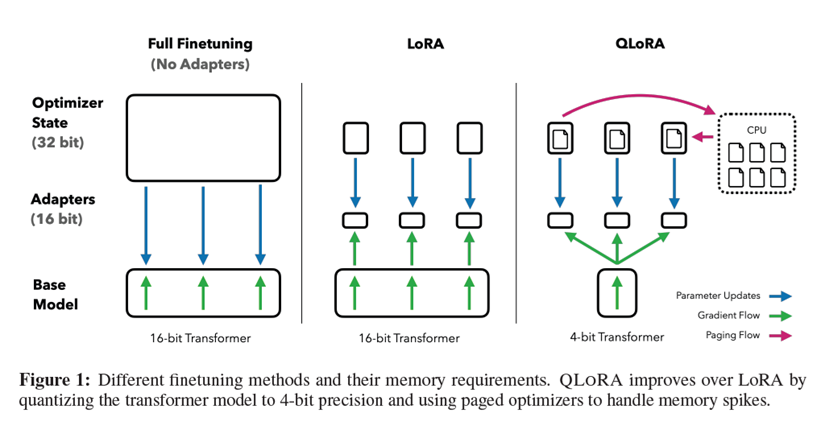

Gemma 4 26B A4B is a 26-billion parameter variant of Google's Gemma 4 model family. The source does not explain the "A4B" suffix. It most plausibly follows the naming convention of mixture-of-experts models such as Qwen3-30B-A3B, where the suffix gives the number of parameters active per token (here, roughly 4 billion), rather than a quantization format. Running a model of this size at interactive speeds on a fanless laptop is a significant engineering challenge.

Why This Benchmark Matters

A decode speed of ~46 tokens/sec is fast enough for interactive applications like chatbots or coding assistants. For comparison, many cloud API services deliver effective speeds in this range once network latency is accounted for. Running the model locally on a MacBook Air removes the network round trip, per-token API costs, and the privacy concerns of sending data to a cloud provider.
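To make the usability claim concrete, the sketch below converts the reported decode rate into wall-clock time for a few response lengths. The response lengths are illustrative assumptions, not figures from the source, and the calculation ignores prompt processing (prefill) time.

```python
# Reported decode rate from the post; everything else here is illustrative.
DECODE_TOKENS_PER_SEC = 45.723


def generation_seconds(num_tokens: int,
                       tokens_per_sec: float = DECODE_TOKENS_PER_SEC) -> float:
    """Time to emit num_tokens at a constant decode rate (prefill excluded)."""
    if tokens_per_sec <= 0:
        raise ValueError("tokens_per_sec must be positive")
    return num_tokens / tokens_per_sec


# Hypothetical response sizes: a short answer, a paragraph, a long code block.
for n in (50, 250, 1000):
    print(f"{n:>5} tokens -> {generation_seconds(n):5.1f} s")
```

At this rate a 250-token reply takes about 5.5 seconds, which is faster than most people read.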

The result suggests the MLX stack and community quantization techniques are maturing rapidly. Last year, similar speeds might only have been achievable on high-end MacBook Pros or with much smaller models.

Limitations and Unknowns

The source provides minimal context. We don't know:

- The exact MacBook Air chip (M1, M2, M3) and memory (8GB, 16GB, 24GB)

- The quantization method (e.g., GPTQ, AWQ, or MLX's native quantization) and bit-depth

- Whether this is a sustained speed or a peak measurement

- The prompt length and generation length for the benchmark

- The temperature/sampling settings

Community benchmarks often focus on peak performance under ideal conditions. Real-world performance with longer contexts or complex sampling may be lower.
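Several of these unknowns come down to how the number was measured. As a minimal sketch, assuming the model exposes its output as an iterable of tokens, the harness below separates time-to-first-token (dominated by prompt processing) from the steady decode rate. The `measure_decode_speed` and `fake_stream` names are hypothetical helpers written for this illustration, not part of MLX, and the fake stream only simulates a model with `time.sleep`.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_decode_speed(
    token_stream: Iterable[object],
    clock: Callable[[], float] = time.perf_counter,
) -> dict:
    """Consume a token stream; report time-to-first-token and decode tokens/sec.

    The first token is attributed to prefill (prompt processing). The decode
    rate is computed only over the tokens that follow it.
    """
    start = clock()
    first_token_time = None
    last_token_time = None
    count = 0
    for _ in token_stream:
        now = clock()
        if first_token_time is None:
            first_token_time = now
        last_token_time = now
        count += 1
    if count < 2:
        raise ValueError("need at least two tokens to measure a decode rate")
    decode_seconds = last_token_time - first_token_time
    if decode_seconds <= 0:
        raise ValueError("clock did not advance between tokens")
    return {
        "tokens": count,
        "time_to_first_token_s": first_token_time - start,
        "decode_tokens_per_sec": (count - 1) / decode_seconds,
    }


def fake_stream(n_tokens: int, prefill_s: float, per_token_s: float) -> Iterator[int]:
    """Hypothetical stand-in for a model: sleeps to mimic prefill, then decode."""
    time.sleep(prefill_s)
    for i in range(n_tokens):
        if i > 0:
            time.sleep(per_token_s)
        yield i


stats = measure_decode_speed(fake_stream(20, prefill_s=0.05, per_token_s=0.005))
print(stats)
```

Reporting both numbers, along with prompt length and generation length, would let readers tell a peak figure from a sustained one.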

gentic.news Analysis

This benchmark is part of a clear trend we've been tracking since early 2025: the democratization of mid-size LLM inference on consumer hardware. In November 2025, we covered Apple's release of MLX 2.0, which introduced deeper CoreML integration and improved memory management. That update laid the groundwork for the kind of performance we're seeing now.

The MLX community's progress directly challenges the premise that useful AI assistance requires cloud connectivity. Google's Gemma family, particularly the 4th generation, has become a favorite for these local deployment experiments due to its competitive performance and relatively permissive license. This activity creates subtle competitive pressure on both Google (to ensure its models run well everywhere) and Apple (to ensure its hardware is the best platform for local AI).

Technically, the 45.7 tokens/sec figure for a 26B parameter model on a MacBook Air suggests aggressive quantization, probably 4-bit or lower, although the post reports no accuracy figures. At 4 bits the weights alone occupy roughly 13GB, which rules out an 8GB machine and points to 16GB or more of unified memory. MLX executes on the GPU through Metal rather than on Apple's Neural Engine, so the gains most likely come from optimized GPU kernels and quantized matrix multiplication. What's notable is that this result isn't coming from Apple's official channels but from community optimization work. This follows the pattern we saw with the llama.cpp community in 2024, but now with first-party framework support.
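One way to sanity-check the figure is a back-of-the-envelope memory-bandwidth bound: during decode, each generated token requires reading the active weights from memory once, so throughput cannot exceed bandwidth divided by bytes read per token. The sketch below applies that bound under assumptions that do not come from the source: 100 GB/s of memory bandwidth (Apple's published figure for the M2 and M3 MacBook Air), about 4.5 bits per weight for a 4-bit format with per-group scale metadata, and two readings of the model name (all 26B parameters read per token, versus about 4B active parameters if "A4B" denotes a mixture-of-experts active count).

```python
def decode_upper_bound_tok_s(params_read_per_token: float,
                             bits_per_weight: float,
                             bandwidth_gb_s: float) -> float:
    """Bandwidth-bound ceiling on decode speed: bytes/sec over bytes/token."""
    bytes_per_token = params_read_per_token * bits_per_weight / 8
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Assumptions for illustration only (none are stated in the source post).
BANDWIDTH_GB_S = 100.0   # assumed M2/M3 MacBook Air memory bandwidth
BITS_PER_WEIGHT = 4.5    # assumed 4-bit weights plus per-group metadata

# Reading 1: every one of the 26B parameters is read for each token.
dense_bound = decode_upper_bound_tok_s(26e9, BITS_PER_WEIGHT, BANDWIDTH_GB_S)
# Reading 2: only ~4B parameters are active per token (mixture-of-experts).
sparse_bound = decode_upper_bound_tok_s(4e9, BITS_PER_WEIGHT, BANDWIDTH_GB_S)

print(f"dense 26B bound:  {dense_bound:.1f} tok/s")
print(f"sparse ~4B bound: {sparse_bound:.1f} tok/s")
```

Under these assumptions the dense reading caps out below 7 tokens/sec, far short of the reported figure, while the sparse reading lands in the mid-40s, the same ballpark as the post. The inputs are rough, so this is a plausibility check rather than an explanation of the result.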

Frequently Asked Questions

What is MLX?

MLX is an array framework for machine learning research on Apple Silicon, developed by Apple's machine learning research team. It provides NumPy-like arrays that live in unified memory, so operations can run on the CPU or GPU without copying data, along with automatic differentiation and computation graph optimization tuned for Apple's hardware.

How does 45.7 tokens/sec compare to cloud APIs?

Typical cloud API throughput for models of this size ranges from 20-60 tokens/sec after accounting for network latency. This local performance matches or exceeds many cloud offerings for single-user interactions, with no network round trip, no per-token usage costs, and data that never leaves the device.

What MacBook Air configuration is needed for this performance?

The source doesn't specify, but based on similar benchmarks, this likely requires at least 16GB of unified memory and an M2 or M3 chip. The 26B parameter model with 4-bit quantization would require approximately 13-14GB of memory for the weights alone, which exceeds an 8GB system outright and leaves little headroom even on a 16GB one.
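The memory estimate can be reproduced with simple arithmetic. The sketch assumes a round 26 billion parameters and counts only weight storage; the KV cache, activations, and the operating system need additional memory. The 4.5 bits-per-weight row is an assumption meant to approximate a 4-bit format that also stores per-group scale metadata.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


NUM_PARAMS = 26e9  # assumed round figure taken from the "26B" in the model name

# Bit-widths are illustrative; the source does not state the one actually used.
for label, bits in (("16-bit", 16), ("8-bit", 8),
                    ("4-bit + metadata", 4.5), ("4-bit", 4)):
    print(f"{label:<18} {weight_memory_gb(NUM_PARAMS, bits):5.1f} GB")
```

Only the 4-bit rows come in under 16GB, and neither comes close to fitting in 8GB.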

Is the Gemma 4 26B A4B model officially supported by MLX?

Not officially. The MLX community creates and shares adapters, conversion scripts, and optimized loading code for popular models like Gemma. The performance shown here is the result of community optimization work rather than official support from Google or Apple.