OpenAI has released GPT-5.5, its latest frontier model, delivering significant improvements across coding, mathematics, scientific reasoning, and long-context recall while matching the latency of GPT-5.4. The model is already rolling out to ChatGPT Plus, Pro, Business, and Enterprise tiers, with API access coming shortly.

Key Takeaways

- GPT-5.5 scores 82.7% on Terminal-Bench 2.0 and 35.4% on FrontierMath Tier 4, while reducing token usage per task.

- The model is rolling out to paid ChatGPT tiers now, with API access to follow. Standard API pricing is $5 per million input tokens and $30 per million output tokens.

Key Results

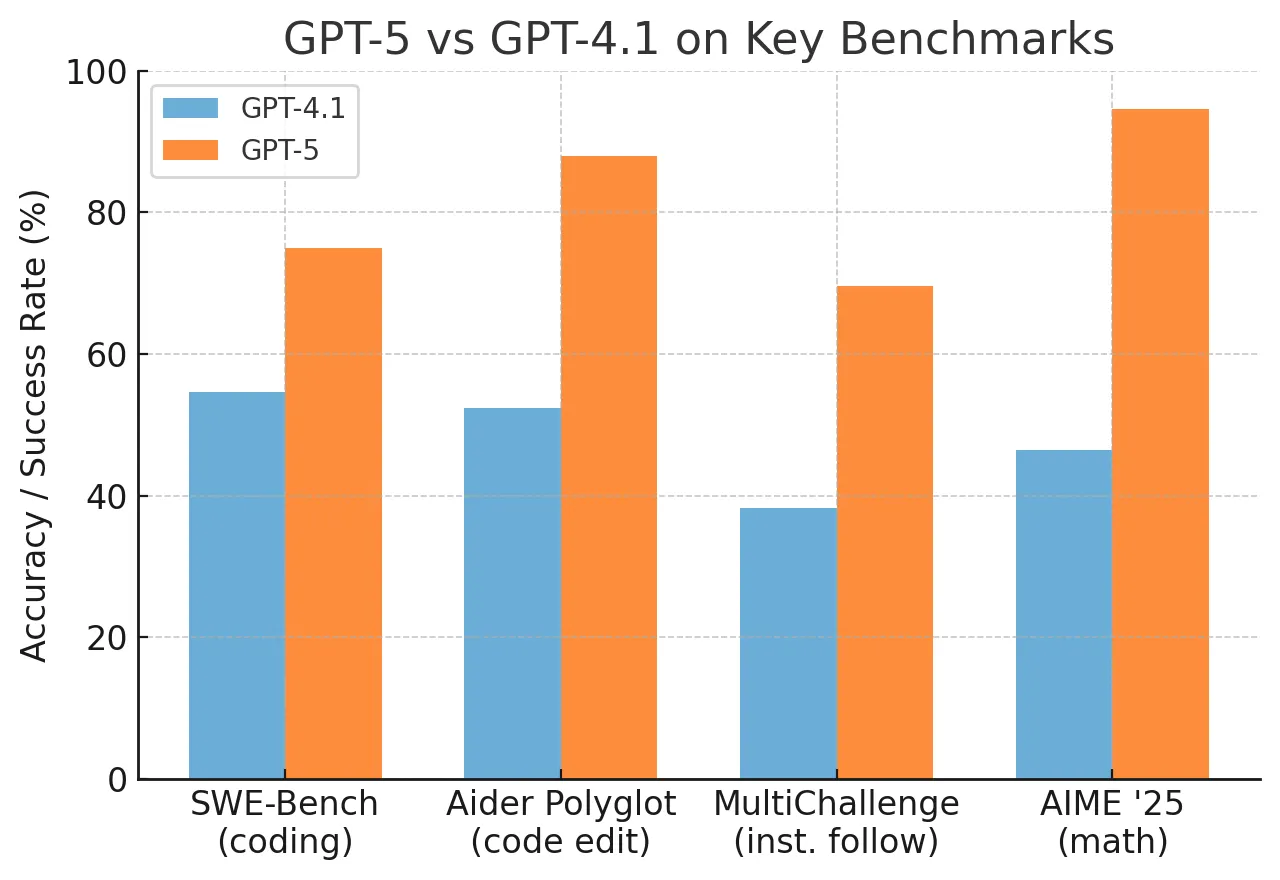

The model achieves substantial gains over GPT-5.4 and Anthropic's Claude Opus 4.7 across multiple benchmarks:

| Benchmark | GPT-5.5 | GPT-5.4 | Claude Opus 4.7 |
| --- | --- | --- | --- |
| Terminal-Bench 2.0 (agentic coding) | 82.7% | 75.1% | 69.4% |
| SWE-Bench Pro | 58.6% | — | 64.3% |
| Expert-SWE (internal, median human 20h) | 73.1% | — | — |
| GDPval (knowledge work) | 84.9% | — | 80.3% |
| OSWorld-Verified | 78.7% | — | 78.0% |
| Tau2-bench Telecom (no prompt tuning) | 98.0% | — | — |
| GeneBench (science) | 25.0% | 19.0% | — |
| BixBench | 80.5% | — | — |
| FrontierMath Tier 4 | 35.4% | 27.1% | 22.9% |
| FrontierMath Tiers 1–3 | 51.7% | — | — |
| MRCR 512K–1M tokens (long context) | 74.0% | 36.6% | — |
| CyberGym (cybersecurity) | 81.8% | — | 73.1% |

What's New

GPT-5.5 is OpenAI's "most capable model yet," designed to match GPT-5.4's latency while delivering higher intelligence across coding, knowledge work, and scientific research. The standout story is efficiency: GPT-5.5 uses fewer tokens to complete the same tasks, making it both smarter and cheaper to run per task.

OpenAI claims the model costs half as much as competitive frontier coding models on Artificial Analysis's Coding Index, with a 20%+ token generation speed boost from AI-optimized load balancing. The infrastructure is co-designed for and served on NVIDIA GB200 and GB300 NVL72 systems.
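To put the speed claim in concrete terms, here is a minimal sketch of what a 20% higher token generation rate means for wall-clock time. The baseline rate of 100 tokens per second and the 2,000-token response are hypothetical values chosen for illustration, not figures from OpenAI, and the model assumes a steady decode rate with no queueing or prefill time.

```python
def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to stream num_tokens at a steady decode rate."""
    return num_tokens / tokens_per_second


BASELINE_TPS = 100.0  # hypothetical baseline rate, not an OpenAI figure
SPEEDUP = 1.20        # the article's "20%+" claim, taken at its lower bound
RESPONSE_TOKENS = 2_000  # hypothetical response length

baseline = generation_time_s(RESPONSE_TOKENS, BASELINE_TPS)
boosted = generation_time_s(RESPONSE_TOKENS, BASELINE_TPS * SPEEDUP)

# A 1.2x rate cuts time by 1 - 1/1.2, which is less than 20%.
time_saved_fraction = 1 - boosted / baseline

print(f"{baseline:.1f}s -> {boosted:.1f}s ({time_saved_fraction:.1%} less time)")
```

Under these assumptions, a 20% throughput gain shortens generation time by about 16.7%, not 20%, because time scales with the inverse of the rate.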

How It Works

While OpenAI has not released full architectural details, the model's efficiency gains appear to stem from a combination of:

- Token efficiency: The model completes tasks with fewer generated tokens, reducing cost per task

- AI-optimized serving: Codex was used to help optimize GPT-5.5's own serving infrastructure, including the load balancing behind the reported 20%+ generation speedup

- NVIDIA GB200/GB300 NVL72: Co-designed hardware-software integration for optimal throughput

OpenAI also uses Codex, its coding agent, in the model's development and deployment pipeline. The company says 85% of its employees already use Codex weekly across all departments.

Surprising Bits

- Claude Opus 4.7 still leads on SWE-Bench Pro (64.3% vs. 58.6%) and some long-context benchmarks

- GPT-5.5 used Codex to help optimize its own serving infrastructure, a meta-optimization loop

- Internal adoption: 85% of OpenAI employees use Codex weekly across all departments

- Mathematical discovery: Helped discover a new proof about Ramsey numbers verified in Lean

Pricing and Availability

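| Tier | Input (per 1M tokens) | Output (per 1M tokens) |
| --- | --- | --- |
| Standard API | $5 | $30 |
| Pro API | $30 | $180 |

Availability: Rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. API access is coming "very soon."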
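As a rough illustration of how these per-million-token prices translate into per-task cost, the sketch below estimates the bill for a single request. The prices come from this article; the token counts (20,000 input, 4,000 output) and the 25% reduction in output tokens are hypothetical, and the `Pricing` class and `task_cost` helper are names invented for this example rather than part of any OpenAI SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# Prices as listed in this article.
STANDARD = Pricing(input_per_million=5.0, output_per_million=30.0)
PRO = Pricing(input_per_million=30.0, output_per_million=180.0)


def task_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request at the given token counts."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


# Hypothetical task: 20k tokens of context, 4k tokens generated.
standard_cost = task_cost(STANDARD, 20_000, 4_000)
pro_cost = task_cost(PRO, 20_000, 4_000)

# Same task finished with 25% fewer output tokens (illustrative only).
leaner_cost = task_cost(STANDARD, 20_000, 3_000)

print(f"Standard: ${standard_cost:.2f}, Pro: ${pro_cost:.2f}, "
      f"Standard with fewer output tokens: ${leaner_cost:.2f}")
```

Because output tokens cost six times as much as input tokens on both tiers, a model that finishes the same task with fewer generated tokens lowers the per-task cost even at an unchanged list price.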

gentic.news Analysis

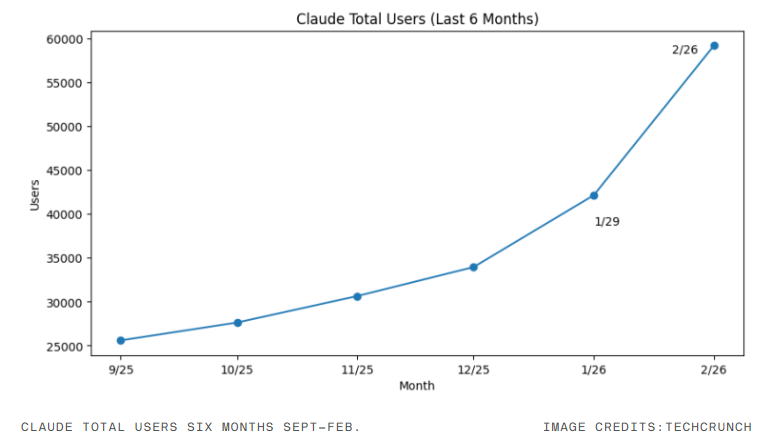

GPT-5.5 represents a notable shift in OpenAI's strategy: rather than chasing raw benchmark supremacy on every metric, the company is optimizing for efficiency and real-world task completion. The 74.0% on MRCR at 512K–1M tokens, roughly double GPT-5.4's 36.6%, is a large jump that may reflect changes to attention mechanisms or context compression, though OpenAI has not confirmed any. This follows a trend we've seen across the industry: Anthropic's Claude 3.5 Sonnet also showed strong long-context performance, and Google reported testing Gemini 1.5 Pro at up to 10M tokens in research, with 1M to 2M tokens available in production.

The model's use of Codex to optimize its own serving infrastructure is a fascinating meta-engineering move. This mirrors the trend we covered in our article on "Self-Improving AI Systems" — where models are increasingly used to optimize their own training and deployment pipelines. However, the fact that Claude Opus 4.7 still leads on SWE-Bench Pro suggests that Anthropic remains competitive in agentic coding tasks, and the gap may be narrowing rather than widening.

The FrontierMath Tier 4 result (35.4%) is particularly striking. Tier 4 problems are designed to be extremely difficult, requiring novel mathematical reasoning rather than pattern matching. The score suggests GPT-5.5 is developing reasoning capabilities that go beyond retrieval and pattern completion. The new Ramsey-number proof, verified in Lean, is a concrete data point in that direction.

Frequently Asked Questions

When will GPT-5.5 be available via API?

OpenAI states API access will come "very soon" but has not given a date. The model is currently rolling out to ChatGPT Plus, Pro, Business, and Enterprise tiers. Based on previous release patterns, API availability within weeks is a reasonable expectation, not a commitment.

How does GPT-5.5 compare to Claude Opus 4.7?

GPT-5.5 leads on many benchmarks (Terminal-Bench 82.7% vs 69.4%, GDPval 84.9% vs 80.3%, FrontierMath Tier 4 35.4% vs 22.9%) but Claude Opus 4.7 still leads on SWE-Bench Pro (64.3% vs 58.6%) and some long-context evaluations. The models are competitive across different task categories.
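For readers who want the head-to-head gaps at a glance, the following sketch computes the percentage-point difference on each benchmark where the results table reports a score for both models. The scores are copied from this article; the variable names are arbitrary, and the comparison says nothing about benchmarks where one model's score is not reported.

```python
# (GPT-5.5 score, Claude Opus 4.7 score) in percent, from the results table.
scores = {
    "Terminal-Bench 2.0": (82.7, 69.4),
    "SWE-Bench Pro": (58.6, 64.3),
    "GDPval": (84.9, 80.3),
    "OSWorld-Verified": (78.7, 78.0),
    "FrontierMath Tier 4": (35.4, 22.9),
    "CyberGym": (81.8, 73.1),
}

# Positive gap: GPT-5.5 leads. Negative gap: Claude Opus 4.7 leads.
gaps = {name: round(gpt - claude, 1) for name, (gpt, claude) in scores.items()}

for name, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    leader = "GPT-5.5" if gap > 0 else "Claude Opus 4.7"
    print(f"{name:<22} {gap:+5.1f} pts  ({leader} leads)")

gpt_leads = [name for name, gap in gaps.items() if gap > 0]
claude_leads = [name for name, gap in gaps.items() if gap < 0]
```

On these six shared benchmarks, GPT-5.5 leads on five and Claude Opus 4.7 leads on one, with the OSWorld-Verified gap under one point.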

What is the pricing for GPT-5.5?

Standard API pricing is $5 per million input tokens and $30 per million output tokens. A Pro tier is available at $30/$180 respectively. OpenAI claims this is half the cost of competitive frontier coding models on Artificial Analysis's Coding Index.

What hardware does GPT-5.5 run on?

GPT-5.5 is co-designed for and served on NVIDIA GB200 and GB300 NVL72 systems. The infrastructure includes AI-optimized load balancing that provides a 20%+ token generation speed boost.

Is GPT-5.5 better than GPT-5.4 at everything?

Not demonstrably. GPT-5.5 improves on GPT-5.4 on every benchmark where both scores are reported (Terminal-Bench 2.0, GeneBench, FrontierMath Tier 4, and MRCR long-context recall), but OpenAI has not released GPT-5.4 comparisons for all tasks. Separately, Claude Opus 4.7 still leads on SWE-Bench Pro, so GPT-5.5 is not the top performer in every domain.