The Technique — Treating AI as a Junior Developer on Steroids

A developer recently detailed how they used AI—specifically Claude Code—to write 70% of a production codebase for a monitoring tool called Sitewatch. The key insight wasn't just about prompt engineering; it was about implementing a rigorous safety net. The developer treated the AI as an incredibly fast, yet occasionally error-prone, junior developer. This meant the human role shifted from writing lines of code to architecting systems, writing exhaustive tests, and implementing robust monitoring.

Why It Works — Compensating for AI's Blind Spots

Claude Code excels at generating functional code from clear specifications, but it can introduce subtle bugs, make incorrect assumptions about dependencies, or miss edge cases. The developer's workflow succeeded because they built processes to catch these issues before they reached users. This is especially critical as AI accelerates development cycles. Shipping faster means bugs can proliferate faster without proper gates. The article emphasizes that production monitoring became more critical, not less, because the volume and speed of code changes increased dramatically.

How To Apply It — Your AI-Assisted Production Checklist

If you're using Claude Code for serious development, integrate these steps into your workflow:

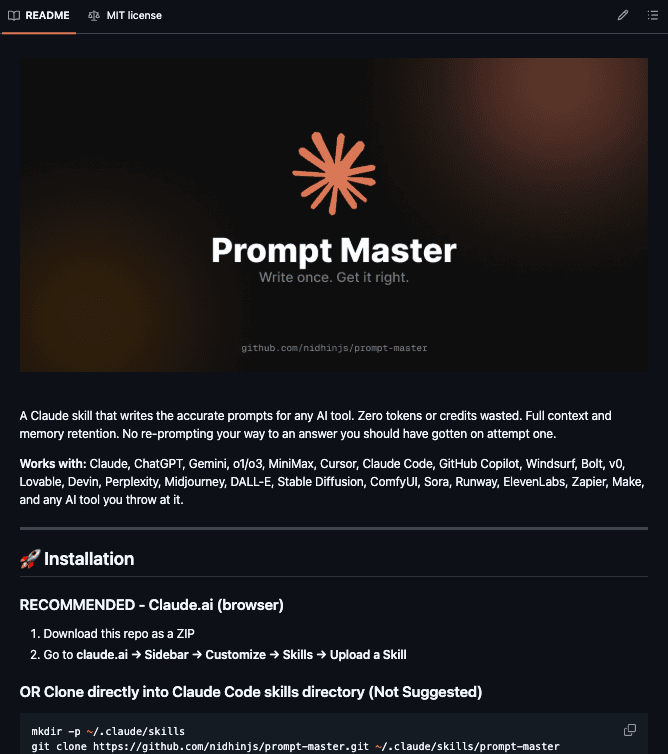

Architect and Prompt in CLAUDE.md: Start every significant feature or module with a detailed spec in your CLAUDE.md file. Define the API contracts, data models, and key functions before asking for code. A spec for a new API endpoint might look like this:

```markdown
<!-- CLAUDE.md snippet for a new API endpoint -->
## Feature: User Notification Service
- **Framework:** Express.js
- **Endpoint:** POST /api/notifications
- **Input:** { userId: string, message: string, type: 'alert' | 'info' }
- **Validation:** userId must exist in DB, message max 500 chars.
- **Edge Cases:** Handle duplicate notifications within 5 minutes.
- **Tests Required:** Validation failure, success, idempotency check.
```

Mandate AI-Generated Tests: For every module Claude Code creates, immediately prompt it to also generate a comprehensive test suite. Claude Code takes instructions as natural-language prompts rather than dedicated test-generation flags, so use a follow-up prompt in the same session, such as:

```
Write a comprehensive test suite for ./src/services/notifier.js covering the validation failure, success, and idempotency cases listed in CLAUDE.md.
```

Review these tests as carefully as the implementation code.
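To make the CLAUDE.md spec concrete, here is a minimal sketch of the core logic it describes, kept outside Express so the spec's required tests can call it directly. It is not the Sitewatch code: `createNotificationService`, the injected `userExists` lookup (standing in for the DB check), the injectable clock, and the rule that a "duplicate" means the same `userId`, `type`, and `message` are all illustrative assumptions.

```javascript
// Hypothetical core of the "User Notification Service" spec, free of Express
// so it can be unit-tested directly. Assumptions: userExists() stands in for
// the DB lookup, and a duplicate means the same userId, type, and message.
const MAX_MESSAGE_LENGTH = 500;
const DUPLICATE_WINDOW_MS = 5 * 60 * 1000; // 5 minutes
const VALID_TYPES = new Set(["alert", "info"]);

function createNotificationService({ userExists, now = () => Date.now() }) {
  // In-memory record of recently accepted notifications (key -> timestamp).
  // Entries are never evicted here, and several server instances would need
  // a shared store instead of this per-process Map.
  const recent = new Map();

  async function send(input) {
    const { userId, message, type } = input ?? {};

    // Validation rules from the spec.
    if (typeof userId !== "string" || userId === "") {
      return { status: 400, error: "userId must be a non-empty string" };
    }
    if (typeof message !== "string" || message.length > MAX_MESSAGE_LENGTH) {
      return { status: 400, error: `message must be a string of at most ${MAX_MESSAGE_LENGTH} characters` };
    }
    if (!VALID_TYPES.has(type)) {
      return { status: 400, error: "type must be 'alert' or 'info'" };
    }
    if (!(await userExists(userId))) {
      return { status: 400, error: "userId does not exist" };
    }

    // Edge case from the spec: a repeat within 5 minutes is accepted as a no-op.
    const key = JSON.stringify([userId, type, message]);
    const last = recent.get(key);
    const t = now();
    if (last !== undefined && t - last < DUPLICATE_WINDOW_MS) {
      return { status: 200, duplicate: true };
    }
    recent.set(key, t);
    // Actual delivery and persistence are omitted from this sketch.
    return { status: 201, duplicate: false };
  }

  return { send };
}
```

An Express route would then just call `service.send(req.body)` and translate `status` into the response. Because the clock and the user lookup are injected, each of the spec's three required tests (a 501-character message is rejected, a first send returns 201, an identical send one minute later comes back with `duplicate: true`) runs without a database or real timers.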

Implement Synthetic Monitoring Immediately: For any new service or endpoint, create a simple canary test or synthetic transaction before deployment. The Sitewatch developer used their own tool for this, but you can start with a simple cron job that hits your health check endpoints and alerts on failure.
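The "simple cron job" option could start as small as the script below: a minimal sketch, assuming Node 18+ (for the global `fetch`) and two hypothetical environment variables, `HEALTHCHECK_URLS` (comma-separated health endpoints) and `ALERT_WEBHOOK_URL` (any webhook that accepts a JSON body with a `text` field). It treats non-2xx responses, network errors, and timeouts all as failures.

```javascript
// Minimal synthetic check intended to be run from cron.
// Assumptions (hypothetical): HEALTHCHECK_URLS lists endpoints separated by
// commas; ALERT_WEBHOOK_URL, if set, accepts POST {"text": "..."}.
async function checkEndpoint(url, { fetchImpl = fetch, timeoutMs = 5000 } = {}) {
  const started = Date.now();
  const controller = new AbortController();
  const timer = setTimeout(() => controller.abort(), timeoutMs);
  try {
    const res = await fetchImpl(url, { signal: controller.signal });
    return { url, ok: res.ok, status: res.status, ms: Date.now() - started };
  } catch (err) {
    // Network errors and aborted (timed-out) requests count as failures.
    return { url, ok: false, status: null, ms: Date.now() - started, error: String(err) };
  } finally {
    clearTimeout(timer);
  }
}

function runChecks(urls, options) {
  return Promise.all(urls.map((url) => checkEndpoint(url, options)));
}

function formatAlert(failures) {
  return failures
    .map((f) => `${f.url} failed (${f.status ?? f.error}) after ${f.ms}ms`)
    .join("\n");
}

async function main() {
  const urls = (process.env.HEALTHCHECK_URLS ?? "")
    .split(",")
    .map((u) => u.trim())
    .filter(Boolean);
  const failures = (await runChecks(urls)).filter((r) => !r.ok);
  if (failures.length === 0) return;

  const text = formatAlert(failures);
  console.error(text);
  if (process.env.ALERT_WEBHOOK_URL) {
    await fetch(process.env.ALERT_WEBHOOK_URL, {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ text }),
    });
  }
  process.exitCode = 1; // a non-zero exit lets cron or a wrapper notice the failure
}

// Only run when configured, so the helpers can be imported and tested safely.
if (process.env.HEALTHCHECK_URLS) {
  main().catch((err) => {
    console.error(err);
    process.exitCode = 1;
  });
}
```

A crontab entry such as `* * * * * HEALTHCHECK_URLS=https://example.com/health node healthcheck.js` (file name and URL are placeholders) would run it every minute. Injecting `fetchImpl` is a deliberate choice: it lets the check logic itself be tested with a fake, which matters when the script is also AI-generated.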

Review for Integration, Not Syntax: Your code review focus changes. Spend less time on style (enforce it with Prettier/ESLint) and more on how the AI-generated module integrates with the existing system. Check data flow, state management, and error handling across boundaries.
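One concrete boundary question is whether failures from a generated module reach the rest of the system in the shape it expects. The sketch below assumes a hypothetical existing contract in which every service failure is a `ServiceError` carrying an HTTP `status` and a `retryable` flag; `ServiceError` and `atBoundary` are illustrative names, not part of Express or the original workflow.

```javascript
// Hypothetical error contract assumed to exist in the current codebase.
class ServiceError extends Error {
  constructor(message, { status, retryable, cause } = {}) {
    super(message);
    this.name = "ServiceError";
    this.status = status;
    this.retryable = retryable;
    this.cause = cause;
  }
}

// Boundary adapter: wraps an async function from a newly generated module so
// that anything it throws is translated into the contract instead of leaking
// raw dependency errors (DB drivers, HTTP clients) to callers.
function atBoundary(fn, moduleName) {
  return async (...args) => {
    try {
      return await fn(...args);
    } catch (err) {
      if (err instanceof ServiceError) throw err; // already follows the contract
      // Timeouts (e.g. from AbortSignal.timeout() or a socket) are worth retrying.
      const timedOut =
        Boolean(err) && (err.name === "TimeoutError" || err.code === "ETIMEDOUT");
      throw new ServiceError(`${moduleName} failed: ${err?.message ?? err}`, {
        status: timedOut ? 503 : 500,
        retryable: timedOut,
        cause: err,
      });
    }
  };
}
```

In review, the useful questions are less about the adapter itself and more about the wiring: does every call from existing code into the generated module go through it, and do callers actually honour `retryable` instead of retrying everything or nothing?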

This approach turns the velocity of AI coding from a risk into a superpower. You're not just writing code faster; you're building a more resilient system by baking validation and observation into your development loop from the first prompt.