Developer Alex Albert, in a recent social media post, highlighted a significant shift in how AI agents are being built and deployed. He stated that "Managed Agents" have become "both the fastest way to hack together a weekend agent project and the most robust way to ship one to millions of users."

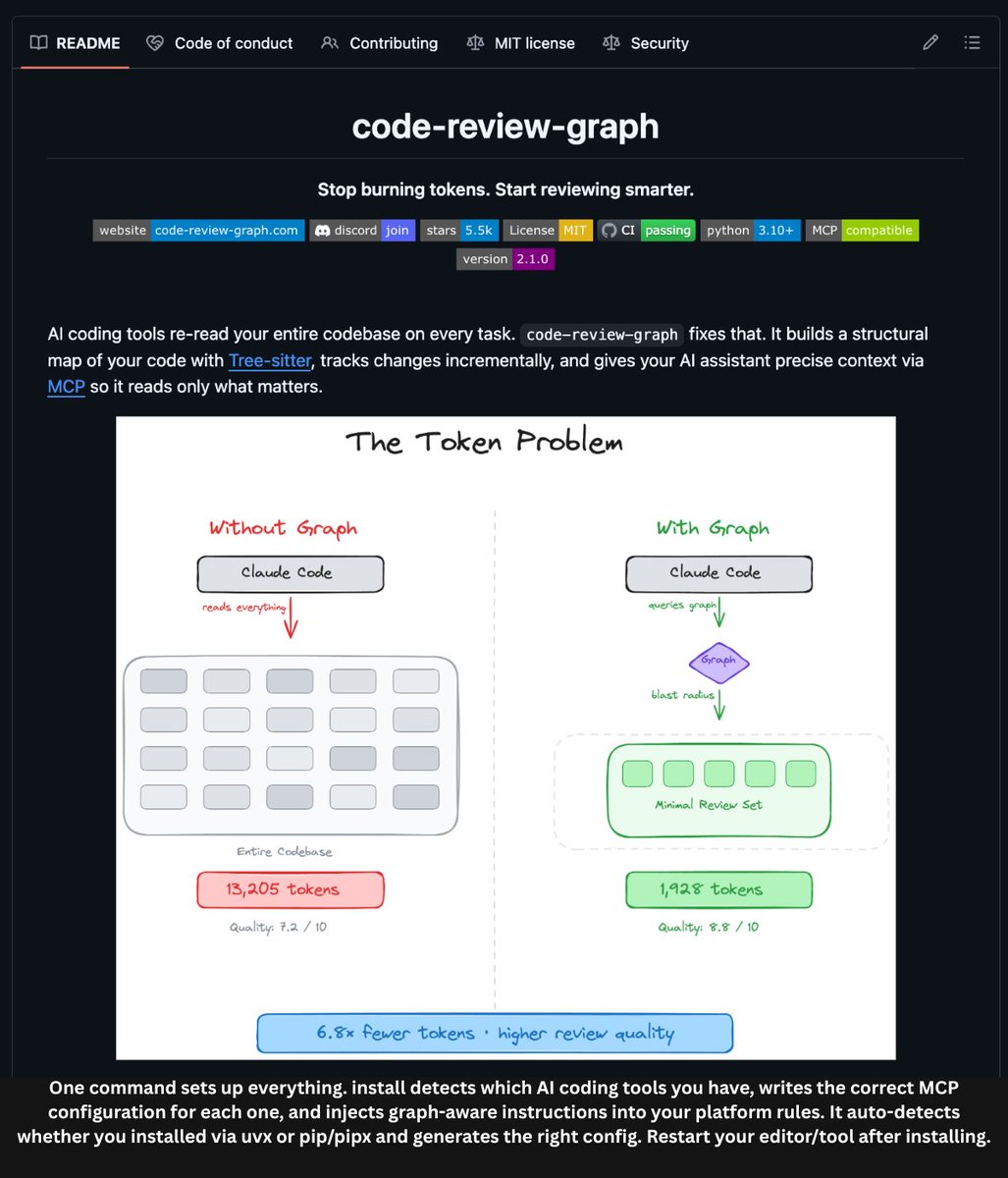

This observation points to the maturation of a new category of developer tooling. Instead of wrestling with the infrastructure required to self-host complex agent systems—orchestrating LLM calls, managing tool execution, handling state, and ensuring reliability—developers can now leverage fully managed platforms.

What Are Managed Agents?

Managed Agent platforms are cloud-based services that provide the core infrastructure for building, testing, and running AI agents. They abstract away the underlying complexity, offering developers a higher-level API or interface to define an agent's behavior, tools, and reasoning loops.

As Albert notes, the key advantage is that they "eliminate all the complexity of self-hosting an agent but still allow a great degree of flexibility with setting up your harness, tools, skills, etc." This means developers can focus on the agent's logic and capabilities rather than the plumbing required to make it run reliably at scale.

The Developer Experience Shift

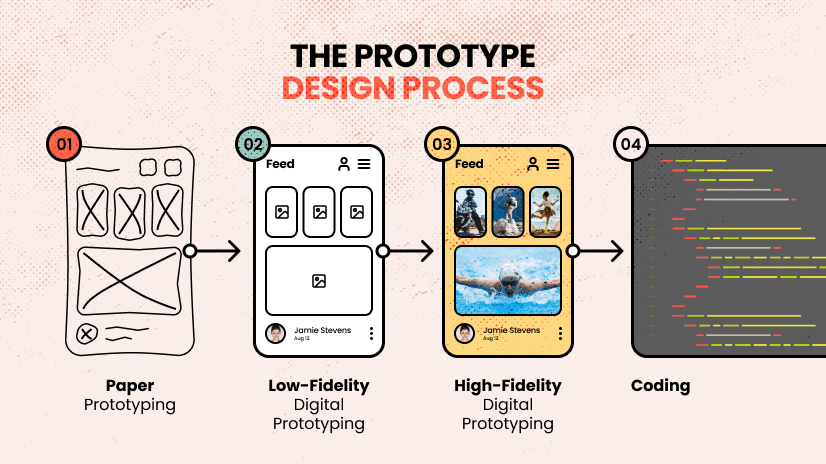

The traditional path for building an AI agent involved:

- Selecting and integrating an LLM provider (OpenAI, Anthropic, etc.).

- Building a custom orchestration layer to manage prompts, tool calls, and state.

- Deploying and scaling this system, which often resembles a complex distributed application.
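To make the second step concrete, here is a minimal sketch of the kind of loop a custom orchestration layer has to implement. It is illustrative only: `fake_model`, `TOOLS`, `AgentState`, and `run_agent` are hypothetical names, and the model is a stub rather than a real provider call.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
}


@dataclass
class AgentState:
    """State the orchestration layer must carry between steps."""
    messages: list[dict] = field(default_factory=list)


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for an LLM call (assumption: no real provider API is used).

    It requests the 'add' tool once, then answers using the tool result.
    """
    last = messages[-1]
    if last["role"] == "tool":
        return {"type": "final", "content": f"The answer is {last['content']}."}
    return {"type": "tool_call", "name": "add", "argument": "2+3"}


def run_agent(user_input: str, model=fake_model, max_steps: int = 5) -> str:
    """Loop: call the model, execute any requested tool, feed the result back."""
    state = AgentState()
    state.messages.append({"role": "user", "content": user_input})
    for _ in range(max_steps):
        reply = model(state.messages)
        if reply["type"] == "final":
            return reply["content"]
        tool = TOOLS[reply["name"]]  # dispatch the requested tool
        result = tool(reply["argument"])
        state.messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


if __name__ == "__main__":
    print(run_agent("What is 2 + 3?"))
```

Even this toy version has to handle tool dispatch, state, and a step limit. A production system would also need retries, persistence, and concurrency control on top of it.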

Managed Agent platforms collapse this stack. A developer can define an agent's purpose, equip it with specific tools (like web search, code execution, or API calls), and deploy it with a few commands. The platform handles the runtime, scaling, monitoring, and often provides built-in features like memory, human-in-the-loop workflows, and evaluation tools.
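As a rough illustration of what "defining an agent" can look like, the sketch below describes an agent as plain data and serializes it into a payload. It does not reflect any specific provider's API: `AgentSpec`, its fields, and `to_deploy_payload` are all assumptions made for this example.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical declarative description of an agent (not a real platform schema)."""
    purpose: str
    tools: list[str] = field(default_factory=list)
    memory_enabled: bool = False
    # Tools that should pause for human approval before running.
    human_approval_for: list[str] = field(default_factory=list)


def to_deploy_payload(spec: AgentSpec) -> str:
    """Validate the spec and serialize it to JSON for a hypothetical deploy call."""
    unknown = set(spec.human_approval_for) - set(spec.tools)
    if unknown:
        raise ValueError(f"approval requested for undeclared tools: {sorted(unknown)}")
    return json.dumps(asdict(spec), indent=2, sort_keys=True)


if __name__ == "__main__":
    spec = AgentSpec(
        purpose="Answer questions about our product docs",
        tools=["web_search", "code_execution"],
        memory_enabled=True,
        human_approval_for=["code_execution"],
    )
    print(to_deploy_payload(spec))
```

The point of the sketch is the division of labor: the developer supplies a description like this, and the runtime, scaling, and monitoring are the platform's responsibility.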

This dramatically lowers the barrier to entry, enabling the "weekend project" pace Albert mentions. More importantly, it also addresses the production challenge. Scaling a self-built agent system to handle millions of users requires significant DevOps and MLOps expertise. A managed service takes on much of that scaling and reliability work on the developer's behalf, which can make the path from prototype to production shorter and less risky.

The Competitive Landscape

While Albert did not name a specific provider, this space has seen rapid growth. Companies like CrewAI, LangChain (with LangGraph Cloud), and Vercel (through its AI SDK, in partnership with model providers) offer varying levels of managed agent infrastructure. Larger cloud providers like Google Cloud (Vertex AI Agent Builder) and AWS (Amazon Bedrock Agents) also provide enterprise-grade managed agent services.

The trend is clear: the infrastructure layer for AI agents is becoming a productized, commoditized service, similar to how managed databases (like AWS RDS) abstracted away the pain of running PostgreSQL or MySQL at scale.

gentic.news Analysis

Alex Albert's observation is a signal of a maturing market. For the past two years, the dominant narrative in AI tooling has been about frameworks (LangChain, LlamaIndex) that help developers build agents. Albert's point indicates we are now entering the deployment phase, where the focus shifts from "how do I make this work?" to "how do I make this work for everyone, all the time?"

This aligns with a broader trend we've covered: the rise of agentic AI platforms offered as a service. It represents a natural evolution in the tech stack. After a wave of innovation at the model and framework layers, the next wave of value comes from simplifying operations and scaling. It also creates a clearer business model for AI infrastructure companies beyond just selling API credits for model inference.

For practitioners, the implication is strategic. Building a custom agent orchestration platform from scratch is increasingly difficult to justify for most use cases, barring extreme requirements for control or differentiation. The competitive edge will come from the unique tools, skills, and domain-specific logic an agent possesses, not from the underlying scheduler that runs it. The rise of managed agents allows teams to reallocate engineering resources from infrastructure to application logic, accelerating the pace of AI-powered product development.

Frequently Asked Questions

What is a Managed Agent platform?

A Managed Agent platform is a cloud service that provides the complete infrastructure needed to build, run, and scale AI agents. It handles the complex orchestration of language model calls, tool execution, memory, and state management, allowing developers to focus solely on defining the agent's behavior and capabilities through a higher-level API or interface.

How do Managed Agents differ from just using an LLM API?

Using a raw LLM API (like OpenAI's Chat Completions API) gives you a stateless completion engine: each request sees only the messages you send with it. Building an agent requires creating a stateful system that can plan, use tools, and maintain context across multiple steps. Managed Agent platforms provide this full agent runtime as a service, so you don't have to build the orchestration, memory, and tool-calling logic yourself.
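The difference between a stateless call and a stateful session can be sketched in a few lines. This is a toy under stated assumptions: `stateless_complete` is a stub that imitates a completion endpoint, and `Session` is a hypothetical wrapper, not any vendor's class.

```python
def stateless_complete(messages: list[dict]) -> str:
    """Stand-in for a raw completion call: it only sees what is passed in."""
    names = [
        m["content"].split()[-1]
        for m in messages
        if m["role"] == "user" and m["content"].startswith("My name is")
    ]
    return f"Hello, {names[-1]}" if names else "I don't know your name"


class Session:
    """Hypothetical stateful wrapper that resends the full history on every turn."""

    def __init__(self, complete=stateless_complete):
        self._complete = complete
        self.history: list[dict] = []

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = self._complete(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


if __name__ == "__main__":
    # Two independent calls: the second has no knowledge of the first.
    print(stateless_complete([{"role": "user", "content": "My name is Ada"}]))
    print(stateless_complete([{"role": "user", "content": "What is my name?"}]))

    # The session carries context across turns.
    session = Session()
    session.send("My name is Ada")
    print(session.send("What is my name?"))
```

Keeping that history, and deciding what to store, trim, or persist, is part of the work a managed runtime takes over.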

Are Managed Agent platforms flexible enough for complex applications?

According to developers like Alex Albert, yes. The leading platforms are designed to offer "a great degree of flexibility" in setting up your agent's control logic (harness), external capabilities (tools), and specialized behaviors (skills). They provide the robust, scalable foundation while exposing the knobs needed to create sophisticated, custom agent workflows.

When should a team choose a Managed Agent platform vs. building their own?

Choose a managed platform to accelerate development, ensure production reliability from day one, and avoid the ongoing maintenance burden of a custom distributed system. Consider building your own only if you have highly unique, proprietary orchestration requirements that cannot be met by existing platforms, and you have the dedicated MLOps/DevOps resources to build and maintain that infrastructure indefinitely.