Key Takeaways

- Meta released Tuna-2, an encoder-free multimodal model that understands and generates images from raw pixels.

- According to Meta, it beats encoder-based models on fine-grained perception benchmarks, challenging the dominant VAE/vision encoder paradigm.

What Happened

Meta has released Tuna-2, an encoder-free multimodal model that directly processes raw pixels—no VAE, no vision encoder, just patch embeddings. The model both understands and generates images from raw pixel data, and according to Meta, it outperforms encoder-based models on fine-grained perception benchmarks.

The announcement, shared via Hugging Papers on X, positions Tuna-2 as a significant departure from the current multimodal architecture standard, which typically relies on variational autoencoders (VAEs) or dedicated vision encoders like CLIP to compress and represent visual information before feeding it into a language model.

Technical Details

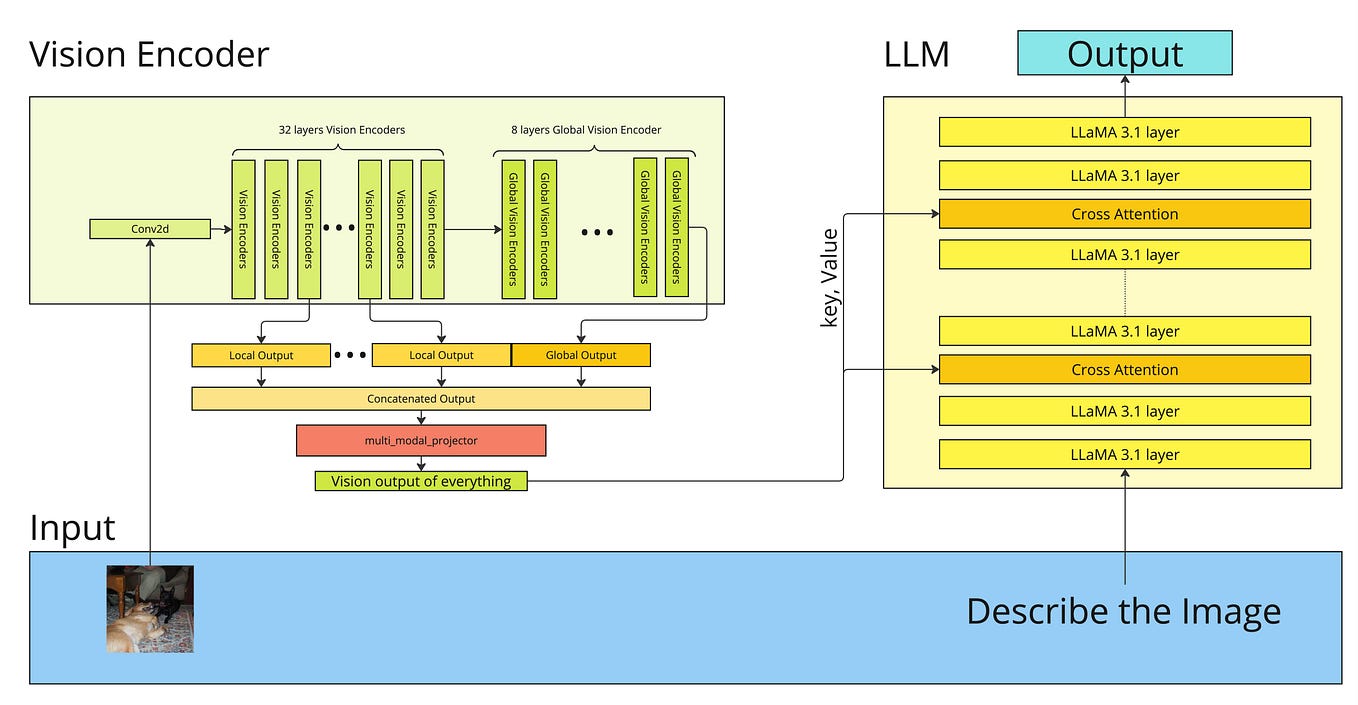

Tuna-2's key architectural innovation is its encoder-free design. Most open multimodal models today, such as LLaVA, use a vision encoder (typically a pretrained ViT, often CLIP-trained) to convert images into embeddings that can be fused with text embeddings. Proprietary systems like GPT-4V and Gemini are generally assumed to follow a similar pattern, though their architectures have not been fully disclosed. Some models also use VAEs for image generation tasks.

Tuna-2 bypasses this entirely by operating directly on raw pixel patches. The model processes image patches as sequences of pixel values, similar to how language models process token sequences. This means:

- No lossy encoder stage (VAEs and pretrained vision encoders compress the image before the language model sees it, which can discard fine-grained details)

- Unified architecture for both understanding and generation

- Simpler pipeline with fewer components to train and maintain
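To make the patch-sequence idea concrete, here is a minimal sketch of turning raw pixels into a token sequence with a single linear projection. It is not Tuna-2's implementation: the function names `patchify` and `embed`, the 4-pixel patch size, the 16-dimensional embedding width, and the random weights are all assumptions chosen for illustration.

```python
import random

def patchify(image, patch):
    """Split an H x W x C image (nested lists) into flattened patches, row-major."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image dimensions must be divisible by the patch size")
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            flat = []
            for y in range(top, top + patch):
                for x in range(left, left + patch):
                    flat.extend(image[y][x])  # append this pixel's C channel values
            patches.append(flat)
    return patches

def embed(patches, weights, bias):
    """Apply one shared linear projection to every flattened patch."""
    return [
        [sum(p * w for p, w in zip(patch_vec, row)) + b
         for row, b in zip(weights, bias)]
        for patch_vec in patches
    ]

# Hypothetical sizes, not taken from the Tuna-2 announcement.
H, W, C, P, D = 8, 8, 3, 4, 16
rng = random.Random(0)
image = [[[rng.random() for _ in range(C)] for _ in range(W)] for _ in range(H)]

patches = patchify(image, P)  # (H/P) * (W/P) patches of P*P*C raw values each
weights = [[rng.gauss(0.0, 0.02) for _ in range(P * P * C)] for _ in range(D)]
bias = [0.0] * D
tokens = embed(patches, weights, bias)  # one D-dimensional vector per patch

print(len(tokens), len(tokens[0]))  # 4 16
```

The point of the sketch is that the only thing standing between the pixels and the sequence model is one learned projection, rather than a separately pretrained encoder or a VAE latent space.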

How It Compares

Meta claims Tuna-2 beats encoder-based models on fine-grained perception benchmarks. While specific benchmark numbers were not provided in the announcement, the claim is notable because encoder-based models have dominated multimodal AI for years. The VAE/encoder approach has been considered table stakes for handling high-dimensional image data efficiently.

If Tuna-2's performance holds up under independent evaluation, it suggests that the computational cost of processing raw pixels directly is offset by the preservation of fine-grained visual information that encoders typically discard.

| Approach | Encoder | Information loss | Image generation |
| --- | --- | --- | --- |
| Traditional multimodal (LLaVA, GPT-4V) | Yes (CLIP/ViT) | High (compressed embeddings) | Limited (separate decoder) |
| VAE-based (Stable Diffusion variants) | Yes (VAE) | Medium (latent compression) | Yes (built-in decoder) |
| Tuna-2 (encoder-free) | No | Minimal (raw pixels) | Yes (unified) |

What This Means in Practice

For practitioners building multimodal systems, Tuna-2 suggests a potential simplification of the stack. Instead of maintaining separate encoders, decoders, and fusion modules, a single encoder-free model could handle both understanding and generation tasks. This could reduce system complexity, simplify training pipelines, and potentially improve performance on tasks requiring fine-grained visual reasoning—such as medical imaging, document analysis, or any domain where pixel-level detail matters.

The trade-off is likely increased computational cost during training and inference, as processing raw pixel sequences is more expensive than processing compressed embeddings. However, if the performance gains are substantial, the trade-off may be worth it for high-stakes applications.
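A rough way to reason about that cost is to count tokens. The sketch below assumes square, non-overlapping patches and plain self-attention, where the number of pairwise interactions grows with the square of the sequence length. The helper names and the 16-pixel stride are hypothetical; the announcement does not state Tuna-2's patch size or resolution.

```python
def num_tokens(height, width, stride):
    """Tokens produced when each token covers a stride x stride pixel area."""
    if height % stride or width % stride:
        raise ValueError("image dimensions must be divisible by the stride")
    return (height // stride) * (width // stride)

def attention_pairs(n_tokens):
    """Pairwise interactions in one full self-attention layer."""
    return n_tokens * n_tokens

# Illustrative stride only, not Tuna-2's actual configuration.
for side in (512, 1024, 2048):
    n = num_tokens(side, side, stride=16)
    print(f"{side}x{side}: {n} tokens, {attention_pairs(n)} attention pairs")
```

Doubling the image side quadruples the token count and multiplies the attention pairs by 16. Note that token count depends on the effective stride, not on whether an encoder is present: an autoencoder with 8x downsampling followed by 2x2 latent patches also has an effective stride of 16 pixels. Whether a raw-pixel model ends up with longer sequences therefore depends on the patch size it uses, which the announcement leaves open.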

Limitations and Caveats

- No public benchmarks: The announcement claims Tuna-2 beats encoder-based models but doesn't provide specific numbers or comparisons

- No model weights: As of the announcement, Tuna-2 has not been released as open-source

- Scalability unknown: It's unclear how encoder-free architectures scale to very high-resolution images or very large model sizes

- Inference cost: Processing raw pixels directly likely requires more compute per image than compressed embeddings

Frequently Asked Questions

What makes Tuna-2 different from other multimodal models?

Tuna-2 eliminates the traditional vision encoder (like CLIP or ViT) and VAE, processing raw pixels directly as patch embeddings. Most multimodal models compress images through encoders, losing fine-grained detail; Tuna-2 preserves this information by working with raw pixel data.

Does Tuna-2 outperform GPT-4V or Gemini?

The announcement claims Tuna-2 beats encoder-based models on fine-grained perception benchmarks, but does not specifically name GPT-4V or Gemini. Without a direct comparison or public benchmark numbers, it's unclear how it stacks up against proprietary models.

Is Tuna-2 open-source?

Meta has not released Tuna-2 weights or code as of this announcement. The model was shared as a research paper and announcement, with no immediate plans for open-source release mentioned.

What tasks is Tuna-2 good for?

Given its encoder-free design, Tuna-2 is likely strongest at tasks requiring fine-grained visual understanding—such as precise object detection, detailed image captioning, document analysis, and medical imaging. The unified architecture also allows it to generate images from scratch.

gentic.news Analysis

Tuna-2 represents a genuine architectural departure from the multimodal status quo. For the past two years, the field has converged on a pattern: take a pretrained vision encoder (CLIP, SigLIP, DINOv2), a pretrained language model (LLaMA, Mistral, Gemma), and train a connector to bridge them. Tuna-2 says: throw out the encoder entirely.

This is reminiscent of the debate in NLP between encoder-decoder models (T5, BART) and decoder-only models (GPT series). The field eventually settled on decoder-only as the simpler, more scalable approach. Tuna-2 suggests a similar simplification may be coming for vision-language models.

The timing is interesting. Meta has been aggressively pushing open-source AI with its Llama models and various multimodal efforts. Tuna-2, if it holds up, could further cement Meta's position as a research leader in efficient architectures, especially if the company eventually open-sources it.

However, the lack of published benchmarks is a red flag. The claim "beats encoder-based models" is vague, and without knowing which benchmarks, at what model scale, and against which baselines, it's impossible to assess the significance. The community will need to see numbers before treating this as a paradigm shift.

For practitioners, the key takeaway is architectural: encoder-free designs are emerging as a credible option for multimodal understanding and generation, pending published numbers. Whether they scale to production workloads remains to be seen, but the research direction is worth watching closely.