In a development at the intersection of artificial intelligence regulation and market competition, Microsoft has publicly stated that its legal team reviewed the European Union's designation of Anthropic as a "gatekeeper" under the Digital Markets Act (DMA). The team concluded that this status does not require Microsoft to restrict access to Anthropic's products, including the Claude AI assistant, on Microsoft platforms. The interpretation, reported by journalist Hadas Gold and amplified by AI researcher Ethan Mollick, could test how the EU's landmark tech regulation applies to the rapidly evolving AI sector.

Understanding the Digital Markets Act and Gatekeeper Designation

The Digital Markets Act, whose obligations for designated gatekeepers became applicable in March 2024, is the European Union's most ambitious attempt to regulate the power of large technology platforms. The legislation designates certain companies as "gatekeepers" based on their size, their role as an important gateway between business users and consumers, and whether that position is entrenched and durable. Gatekeepers face specific obligations, including requirements to ensure interoperability, refrain from self-preferencing, and give business users access to the data they generate on the platform.

Anthropic, the AI safety startup behind Claude, was designated alongside other major tech firms. The designation carries significant implications for how these companies can operate within the EU market. However, Microsoft's legal interpretation suggests there may be flexibility in how these rules apply to partnerships and integrations between designated gatekeepers.

The Microsoft-Anthropic Partnership Context

Microsoft and Anthropic have developed a substantial partnership in recent years. In addition to being a major investor in Anthropic (with commitments totaling billions of dollars), Microsoft has integrated Claude's capabilities into various aspects of its ecosystem, including through its Azure cloud platform. This relationship exists alongside Microsoft's deep partnership with OpenAI, creator of ChatGPT, in which Microsoft has invested approximately $13 billion.

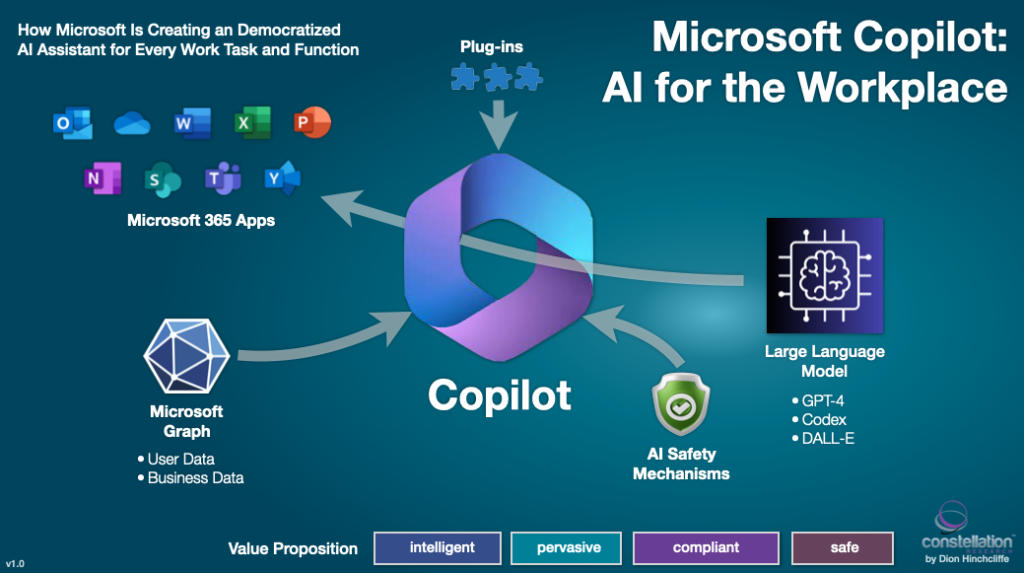

Microsoft's position as both an investor in and a platform for multiple leading AI companies creates complex regulatory considerations. Its conclusion that it can continue offering Claude despite Anthropic's gatekeeper status may indicate that Microsoft reads the DMA as primarily regulating direct consumer-facing platforms rather than business-to-business relationships or cloud infrastructure partnerships.

Legal Interpretation vs. Regulatory Intent

The heart of this development lies in the tension between legal interpretation and regulatory intent. Microsoft's lawyers appear to be making a distinction between different types of gatekeeper obligations. Their analysis likely focuses on specific articles of the DMA that might not explicitly prohibit one gatekeeper from hosting another gatekeeper's services, particularly when those services are offered through enterprise-facing platforms rather than direct consumer interfaces.

This interpretation could have several implications:

- Platform Neutrality: Microsoft may be positioning its Azure cloud platform as a neutral infrastructure provider that can host services from multiple AI providers regardless of their regulatory status.

- Partnership Preservation: The interpretation allows Microsoft to maintain its strategic partnerships with both Anthropic and OpenAI without facing conflicting regulatory requirements.

- Regulatory Precedent: If unchallenged by EU regulators, this interpretation could establish a precedent for how gatekeeper rules apply to AI services hosted on cloud platforms.

Industry Implications and Competitive Landscape

Microsoft's stance could significantly affect the competitive landscape for AI in Europe. If gatekeepers can freely host each other's services, the market may avoid the fragmentation that strict separation requirements could cause. Businesses and consumers would then keep access to multiple AI options through unified platforms.

However, this interpretation may also raise concerns that the DMA's core objectives are being circumvented. If large platforms can maintain tight partnerships with multiple gatekeepers, they could reinforce the market power of existing giants rather than foster the emergence of truly independent competitors.

Regulatory Response and Future Developments

The European Commission, which enforces the DMA, has not yet publicly responded to Microsoft's legal interpretation. Regulators face the challenge of applying rules designed for traditional digital platforms to the novel architecture of AI ecosystems, where services are often layered and interdependent.

Potential regulatory responses could include:

- Clarification of Rules: The Commission might issue further guidance on how gatekeeper status applies to AI services and partnerships.

- Case-by-Case Assessment: Regulators might evaluate specific implementations to determine if they violate the spirit of the DMA.

- Formal Investigation: If the Commission disagrees with Microsoft's interpretation, it could launch proceedings that might result in fines or mandatory changes.

The Broader Context of AI Regulation

This development occurs against a backdrop of increasing regulatory attention on AI globally. The EU's AI Act, which focuses specifically on AI system risks, complements the DMA's competition-focused approach. Meanwhile, other jurisdictions including the United States and United Kingdom are developing their own AI regulatory frameworks.

Microsoft's legal interpretation highlights the challenges regulators face in creating rules that are both effective and adaptable to rapidly changing technologies. It also underscores how large technology companies are actively engaging with new regulations through legal analysis and strategic positioning.

What This Means for AI Developers and Users

For AI companies like Anthropic, Microsoft's interpretation provides continued access to important distribution channels and cloud infrastructure in the EU market. This could be particularly valuable for AI startups that rely on partnerships with larger platforms to reach customers.

For businesses and developers using AI services, the maintenance of multiple AI options on major platforms could mean greater choice and potentially more competitive pricing. However, it also means continued dependence on a small number of large platform providers.

For consumers, the immediate impact may be minimal, but the long-term implications could shape which AI services are available and how they are integrated into the digital tools they use daily.

Conclusion: A Test Case for Tech Regulation in the AI Era

Microsoft's public statement about its legal analysis of Anthropic's gatekeeper status is more than a corporate compliance decision. It is an early test case for how existing digital regulations will adapt to the age of artificial intelligence. Whether the outcome is regulatory acceptance, a challenge, or a compromise, it will help establish the ground rules for AI competition and innovation in Europe and may influence approaches in other markets.

As AI continues to transform the digital landscape, the tension between fostering innovation and preventing anti-competitive concentration will only intensify. Microsoft's position highlights how established technology companies are navigating this new terrain, using legal interpretation as a strategic tool to shape the emerging regulatory environment for AI.

Source: Analysis based on reporting by Hadas Gold and commentary by Ethan Mollick regarding Microsoft's legal assessment of Anthropic's gatekeeper designation under the EU Digital Markets Act.