Key Takeaways

- Microsoft released VibeVoice, an MIT-licensed speech-to-text model with built-in speaker diarization.

- Simon Willison tested a 4-bit MLX conversion on an M5 MacBook, transcribing 1 hour of audio in ~9 minutes using ~60GB RAM.

Microsoft's VibeVoice: Open-Source Speech-to-Text with Diarization

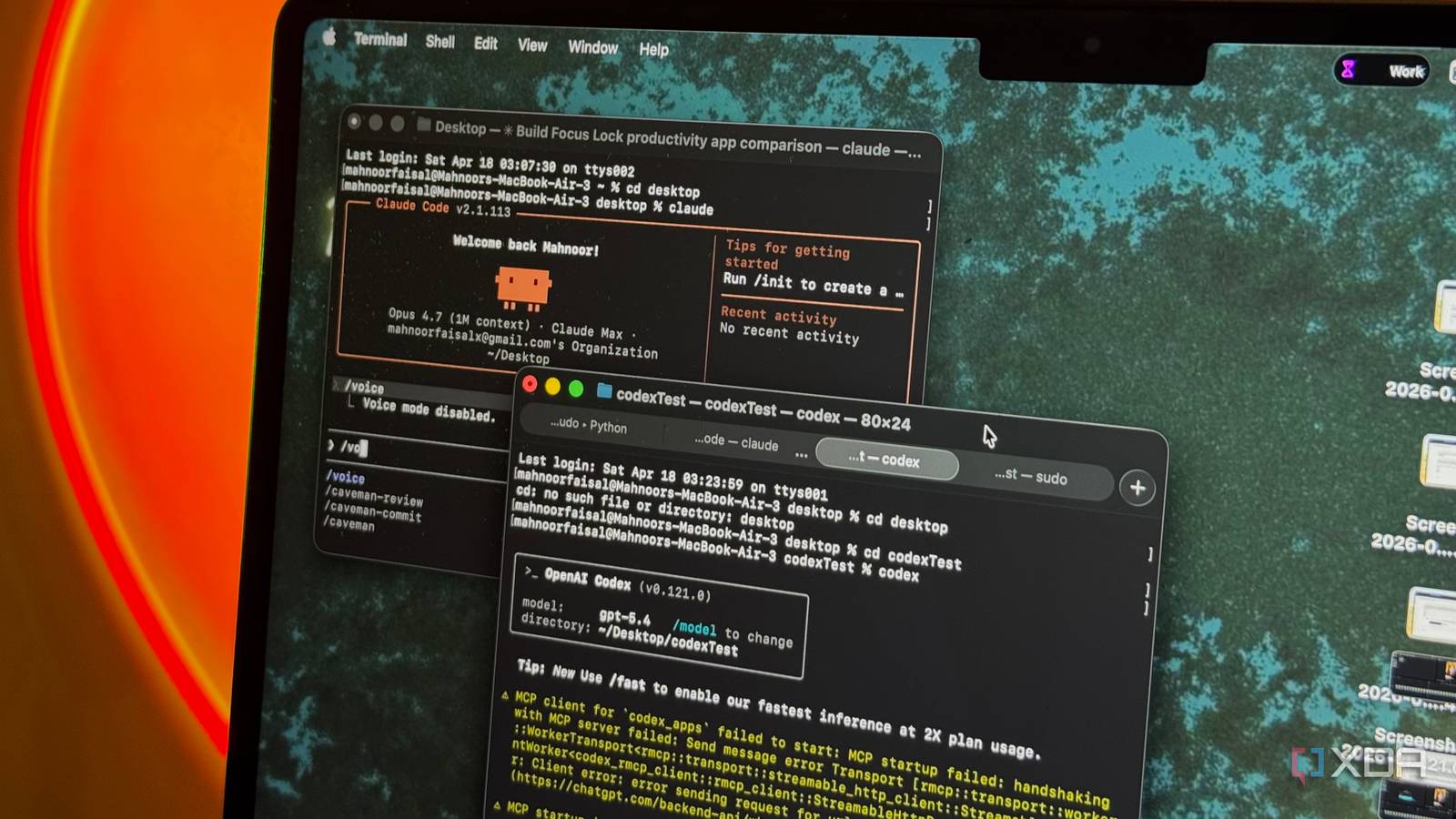

Microsoft has released VibeVoice, a speech-to-text model that combines transcription with speaker diarization — the ability to identify who spoke when — under the permissive MIT license. The model, which can be thought of as "Whisper with speaker diarization," was highlighted by Simon Willison, who shared his experience running a 4-bit MLX conversion on an M5 MacBook.

What's New

VibeVoice is a speech-to-text model that outputs both a transcript and speaker labels, assigning each utterance to a specific speaker. Unlike traditional pipelines that run a separate diarization model after transcription, VibeVoice integrates both tasks into a single model. This reduces complexity and latency, and simplifies deployment.
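To make the idea of a transcript with speaker labels concrete, here is a minimal sketch of how diarized output might be represented and rendered. The `Segment` fields and the `format_transcript` helper are hypothetical illustrations, not VibeVoice's actual output format, which the source does not describe.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One utterance with a speaker label (hypothetical schema)."""
    speaker: str
    start: float  # seconds from the beginning of the audio
    end: float    # seconds from the beginning of the audio
    text: str


def format_transcript(segments: list[Segment]) -> str:
    """Merge consecutive segments from the same speaker into one line each."""
    lines: list[tuple[str, list[str]]] = []
    for seg in sorted(segments, key=lambda s: s.start):
        if lines and lines[-1][0] == seg.speaker:
            lines[-1][1].append(seg.text)
        else:
            lines.append((seg.speaker, [seg.text]))
    return "\n".join(f"{speaker}: {' '.join(parts)}" for speaker, parts in lines)


example = [
    Segment("Speaker 1", 0.0, 2.1, "Welcome back."),
    Segment("Speaker 1", 2.1, 4.0, "Today we talk about speech models."),
    Segment("Speaker 2", 4.2, 6.0, "Thanks for having me."),
]
print(format_transcript(example))
```

With a separate diarization stage, the speaker field would have to be filled in by a second model after transcription; a single-model design would emit both together.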

Key details from Willison's testing:

- Model size: 5.71GB (4-bit MLX conversion)

- Hardware: Apple M5 MacBook

- Peak RAM usage: ~60GB

- Transcription speed: ~9 minutes for 1 hour of audio
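The speed figure above is easier to compare across systems when expressed as a real-time factor (processing time divided by audio duration). A small sketch using the approximate numbers from Willison's test; the function name is just for illustration:

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Processing time divided by audio duration; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


# Approximate figures from the test: ~9 minutes of processing for 1 hour of audio.
rtf = real_time_factor(9 * 60, 60 * 60)
speedup = 1 / rtf
print(f"RTF ~ {rtf:.2f}, about {speedup:.1f}x faster than real time")
```

Because both inputs are rounded, the result is a ballpark figure rather than a benchmark.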

The model is available under the MIT license, meaning it can be used for commercial projects, modified, and redistributed without royalties, provided the copyright and license notice is preserved. OpenAI's Whisper is also released under the MIT license, so the practical difference between the two lies in built-in diarization rather than in licensing terms.

Technical Details

The source does not describe VibeVoice's architecture or training data, so what follows is speculation. A natural design would extend the encoder-decoder architecture popularized by Whisper with a speaker diarization head that predicts a speaker identity for each time step, trained on a large corpus of multi-speaker audio drawn from Microsoft's internal datasets or public sources such as LibriSpeech and VoxCeleb.

The 4-bit MLX conversion that Willison tested reduces the model's memory footprint and inference time on Apple Silicon. MLX is Apple's machine learning framework optimized for M-series chips, and 4-bit quantization allows the model to run on consumer-grade hardware, albeit with high RAM requirements (~60GB peak).
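As a rough illustration of what 4-bit quantization does, the sketch below maps a small group of weights onto 16 integer levels and back. It shows the general idea of group-wise affine quantization only; it is not MLX's implementation, and the function names and sample values are made up for the example.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float, float]:
    """Map a group of floats onto 16 integer levels (0..15) with a scale and offset."""
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / 15 or 1.0  # fall back to 1.0 when all weights are equal
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def dequantize_4bit(codes: list[int], scale: float, lo: float) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


group = [-0.42, -0.10, 0.03, 0.27, 0.55, -0.31, 0.18, 0.49]  # illustrative values
codes, scale, lo = quantize_4bit(group)
restored = dequantize_4bit(codes, scale, lo)
max_error = max(abs(a - b) for a, b in zip(group, restored))
print(codes, f"max error {max_error:.4f}")
```

Each weight is stored in 4 bits instead of 16, plus a small per-group overhead for the scale and offset, which is where the reduction in file size comes from. The rounding error per weight is bounded by half the scale.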

Willison's test used an M5 MacBook, which has a unified memory architecture shared between CPU and GPU. The ~60GB peak RAM usage suggests the model requires significant memory, likely due to the attention mechanism's quadratic scaling with audio length when a full hour is processed at once. For comparison, OpenAI lists about 10GB of VRAM for Whisper's large models, and because Whisper processes audio in 30-second windows, its memory use stays roughly flat as recordings get longer.
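The quadratic scaling mentioned above can be sketched with simple arithmetic. The head count, bytes per value, and token rate below are hypothetical placeholders, not VibeVoice's real configuration, and production inference code typically avoids materializing the full score matrix, so this shows the growth pattern rather than actual memory use.

```python
def attention_scores_bytes(seq_len: int, num_heads: int = 32, bytes_per_value: int = 2) -> int:
    """Size of one layer's full attention score matrix if materialized naively."""
    return num_heads * seq_len * seq_len * bytes_per_value


for minutes in (15, 30, 60):
    tokens = minutes * 60 * 10  # hypothetical rate of 10 tokens per second of audio
    gib = attention_scores_bytes(tokens) / 2**30
    print(f"{minutes:>2} min -> {tokens:>6} tokens -> {gib:6.2f} GiB per layer")
```

Doubling the audio length doubles the token count but quadruples the score matrix, which is why processing a long recording in one pass is so much more demanding than processing it in short windows.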

How It Compares

VibeVoice's main advantage is its integrated diarization. Whisper can be combined with a separate diarization model like pyannote-audio, but this adds complexity and latency. Deepgram's Nova-2 offers built-in diarization but is a proprietary API, not a downloadable model.
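The extra complexity of a two-stage pipeline comes largely from aligning two independent outputs. A minimal sketch of that alignment step, assuming the transcription stage yields `(start, end, text)` tuples and the diarization stage yields `(start, end, speaker)` tuples; these tuple shapes and function names are assumptions for illustration, not the actual Whisper or pyannote-audio APIs.

```python
def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length in seconds of the intersection of two time intervals."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(transcript, turns):
    """Label each (start, end, text) segment with the speaker whose turn overlaps it most."""
    labeled = []
    for start, end, text in transcript:
        best = max(turns, key=lambda t: overlap(start, end, t[0], t[1]), default=None)
        has_overlap = best is not None and overlap(start, end, best[0], best[1]) > 0
        labeled.append((best[2] if has_overlap else "unknown", text))
    return labeled


transcript = [(0.0, 3.0, "How was the launch?"), (3.2, 6.5, "Smoother than expected.")]
turns = [(0.0, 3.1, "SPEAKER_00"), (3.1, 7.0, "SPEAKER_01")]
labeled = assign_speakers(transcript, turns)
print(labeled)
```

Segments that straddle a speaker change or fall into a gap between turns are where this kind of heuristic tends to go wrong, which is part of the appeal of a model that predicts text and speaker jointly.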

What to Watch

- RAM requirements: 60GB peak RAM limits deployment to high-end hardware. Future optimizations (e.g., 2-bit quantization, streaming inference) could reduce this.

- Accuracy: No benchmark numbers were provided in the source. It's unclear how VibeVoice compares to Whisper + pyannote-audio or Deepgram on standard metrics like word error rate (WER) and diarization error rate (DER).

- Language support: Whisper supports 99 languages. VibeVoice's language coverage is unknown.

- Real-world performance: Willison's test used a single 1-hour audio file. Performance on noisy, multi-speaker, or overlapping speech scenarios is untested.
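For readers who want to run their own accuracy comparison, word error rate is straightforward to compute: it is the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. A minimal sketch, with simple lowercasing and whitespace splitting as an assumed normalization (real evaluations usually normalize punctuation and numbers as well):

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the reference prefix seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate("the model labels each speaker", "the model label each speaker now")
print(f"WER = {wer:.2f}")
```

Diarization error rate is computed differently, from the duration of audio attributed to the wrong speaker, missed, or falsely detected, and is best measured with an established evaluation toolkit.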

gentic.news Analysis

Microsoft's release of VibeVoice under MIT license is a strategic move in the open-source AI space. The company has been increasingly active in releasing open-weight models, including Phi-3 and Phi-4, as part of a broader push to compete with Meta's Llama and Google's Gemma. By offering a permissive license, Microsoft lowers the barrier to adoption and positions VibeVoice as a default choice for developers building speech applications.

This also aligns with Microsoft's Azure AI strategy: while the model is open-source, Microsoft can monetize through Azure ML hosting, fine-tuning APIs, and enterprise support. The high RAM requirement (60GB) ensures that many users will still need cloud infrastructure, which Azure can provide.

Competitively, VibeVoice challenges Deepgram's Nova-2 and AssemblyAI, which offer built-in diarization but are closed-source and API-based. However, without published benchmarks, it's unclear if VibeVoice matches their accuracy. Developers should test VibeVoice on their own data before committing.

The timing is notable: OpenAI's Whisper, whose code and weights are also MIT-licensed, has seen widespread adoption but has no built-in diarization. VibeVoice offers the same license terms with speaker labels included, which may attract developers who want a single permissively licensed model instead of a multi-model pipeline.

Frequently Asked Questions

What is VibeVoice?

VibeVoice is a speech-to-text model developed by Microsoft that transcribes audio and identifies different speakers (speaker diarization) in a single step. It is released under the MIT open-source license.

How does VibeVoice compare to OpenAI's Whisper?

VibeVoice integrates speaker diarization directly into the model, whereas Whisper requires a separate diarization pipeline. Both are open-source and released under the MIT license, so neither restricts commercial use.

What hardware do I need to run VibeVoice?

Based on Simon Willison's test, running a 4-bit MLX conversion of VibeVoice on an Apple M5 MacBook required about 60GB of RAM at peak. This suggests high-end hardware is needed, though future optimizations may reduce requirements.

Is VibeVoice free to use commercially?

Yes, the MIT license permits commercial use, modification, and redistribution without royalties, as long as the copyright and license notice is kept with copies of the software. This makes it suitable for integration into proprietary products.