The Innovation — What the Source Reports

A new research paper, "Forecasting Supply Chain Disruptions with Foresight Learning," introduces a specialized framework for training large language models (LLMs) to predict high-impact, low-frequency supply chain events. The core challenge addressed is the inability of general-purpose models to reason reliably about rare disruptions from noisy, unstructured data inputs—a common scenario in global logistics.

The proposed "Foresight Learning" method is an end-to-end framework that uses realized disruption outcomes as direct supervision to train an LLM. Rather than relying on prompt engineering or asking a model like GPT-5 to "think step-by-step," the approach fine-tunes the model's weights to produce calibrated probabilistic forecasts. The model does not just predict whether a disruption will happen; it assigns a probability (e.g., a 15% chance of a port closure) that is calibrated, meaning that events forecast at 15% should occur roughly 15% of the time.
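
To make "calibrated" concrete, here is a minimal Python sketch of two common checks: the Brier score and a binned expected calibration error. This is not the paper's evaluation code; the function names, the bin count, and the toy data are assumptions chosen for illustration.

```python
from typing import List, Tuple


def brier_score(probs: List[float], outcomes: List[int]) -> float:
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)


def reliability_table(
    probs: List[float], outcomes: List[int], n_bins: int = 5
) -> List[Tuple[float, float, int]]:
    """Group forecasts into equal-width bins.

    Returns one (mean forecast, observed frequency, count) row per non-empty bin.
    """
    bins: List[List[Tuple[float, int]]] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    rows = []
    for b in bins:
        if b:
            mean_p = sum(p for p, _ in b) / len(b)
            freq = sum(y for _, y in b) / len(b)
            rows.append((mean_p, freq, len(b)))
    return rows


def expected_calibration_error(
    probs: List[float], outcomes: List[int], n_bins: int = 5
) -> float:
    """Count-weighted average gap between forecast and observed frequency."""
    n = len(probs)
    return sum(
        count / n * abs(mean_p - freq)
        for mean_p, freq, count in reliability_table(probs, outcomes, n_bins)
    )


if __name__ == "__main__":
    # Toy data (hypothetical): 20 port-closure forecasts at 15%, 3 closures occurred.
    probs = [0.15] * 20
    outcomes = [1] * 3 + [0] * 17
    print("Brier score:", brier_score(probs, outcomes))
    print("ECE:", expected_calibration_error(probs, outcomes))
```

In the toy data the observed frequency (3 of 20) matches the forecast, so the calibration error is essentially zero. A model that said 90% for events that occurred half the time would show a gap of about 0.4.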

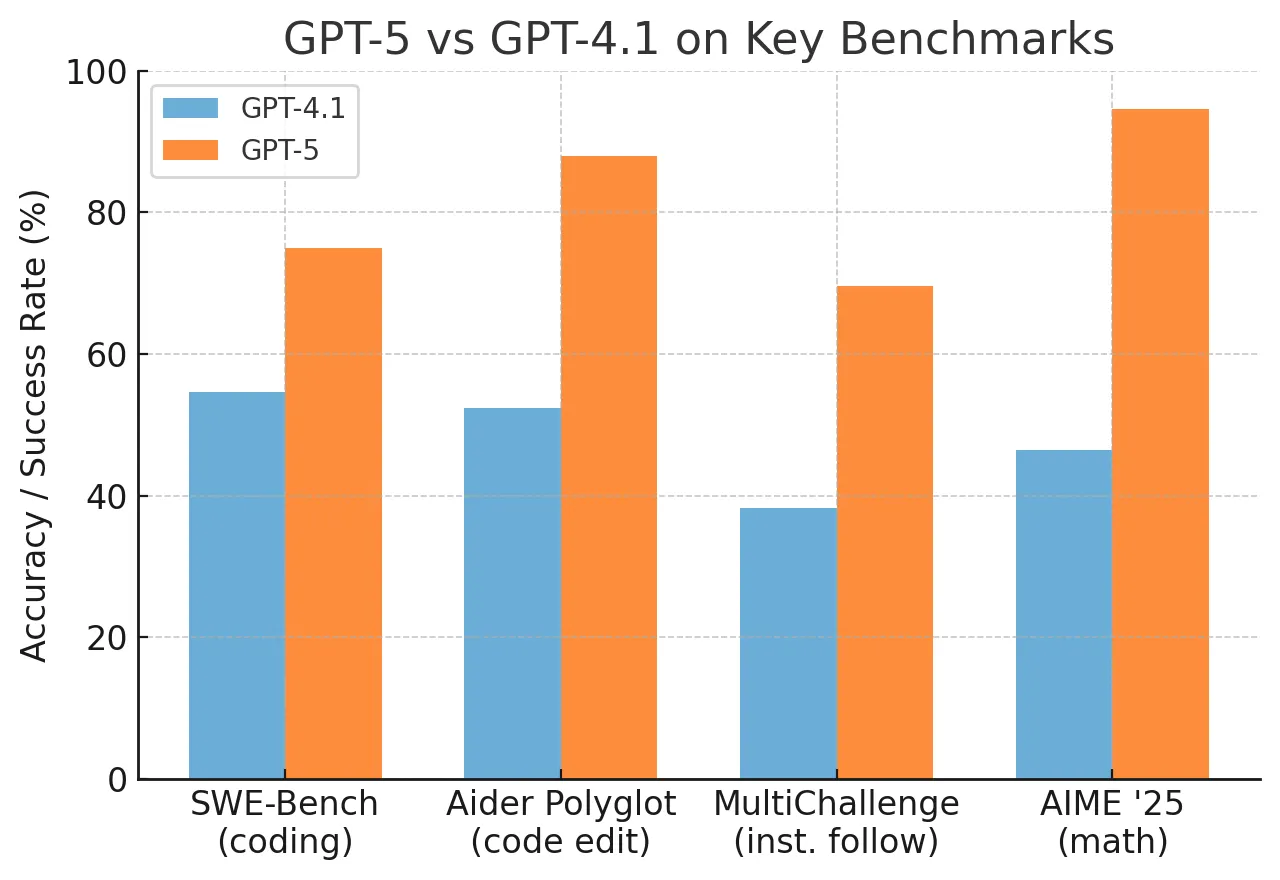

The results are striking: the fine-tuned model "substantially outperforms strong baselines - including GPT-5 - on accuracy, calibration, and precision." The research also shows that this training process induces more structured and reliable probabilistic reasoning intrinsically, without the need for explicit chain-of-thought prompting. The authors have open-sourced their evaluation dataset on Hugging Face to support transparency and further research.

Why This Matters for Retail & Luxury

For luxury and retail groups with complex, global, and high-value supply chains (think Italian leather, Swiss watch movements, or rare fabrics), unanticipated disruptions are a primary operational and financial risk. A delayed shipment can mean missing a crucial launch window or a holiday season, directly impacting revenue and brand prestige.

Current forecasting often relies on historical trend analysis or human intuition, which struggles with "black swan" events or novel correlations. A model that can ingest unstructured data—news reports, weather forecasts, supplier emails, port congestion logs, geopolitical briefings—and output a calibrated probability of a disruption is a powerful decision-support tool. It enables proactive mitigation: rerouting shipments, pre-ordering buffer stock, or qualifying alternative suppliers before a crisis hits.

Key departments that would benefit include:

- Supply Chain & Logistics: For dynamic routing and inventory buffer planning.

- Procurement: For risk-scoring suppliers and raw material sources.

- Finance: For more accurate contingency budgeting and risk modeling.

- Sustainability/ESG Teams: For assessing environmental, social, and governance risks in the supply chain.

Business Impact

The paper does not provide quantified business metrics (e.g., "reduced losses by X%"), so the business impact described here is inferred rather than measured. Moving from reactive firefighting to probabilistic, data-driven foresight could protect margin, support product availability, and strengthen brand resilience. For a sector where exclusivity and timeliness are paramount, the ability to safeguard the journey from artisan workshop to store shelf is a competitive advantage.

The open-source dataset provides a starting point, but the real value for a luxury conglomerate would come from fine-tuning a model on its proprietary, internal data—order logs, supplier performance histories, and qualitative risk assessments—creating a unique, defensible AI capability.

Implementation Approach

Implementing this is non-trivial and sits at the intersection of data science, supply chain expertise, and MLOps.

- Data Foundation: The first step is aggregating and structuring internal and external disruption signals. This includes structured data (lead times, OTIF metrics) and, crucially, unstructured data (supplier communications, news, logistics reports).

- Labeling Historical Disruptions: A historical timeline of "realized disruption outcomes" must be created to serve as training labels. This requires domain experts to define and label what constitutes a disruptive event.

- Model Selection & Fine-Tuning: An open-source LLM (e.g., Llama, Mistral) would likely serve as the base model. The "Foresight Learning" framework would then be applied, requiring significant GPU resources and machine learning engineering expertise to fine-tune the model on the proprietary dataset.

- Integration & Actionability: The model's probabilistic outputs need to be integrated into existing Supply Chain Management (SCM) and ERP systems, likely via an API. The biggest challenge is designing workflows that translate a "25% probability of air freight delay" into a concrete, cost-effective action.
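
One simple way to turn a forecast probability into an action is an expected-cost comparison: act when the probability times the loss avoided exceeds the cost of mitigation. The sketch below is an illustration only and does not come from the paper; the `Mitigation` class, the function names, and all cost figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Mitigation:
    name: str
    cost: float          # cost of acting now (hypothetical figure)
    loss_avoided: float  # loss prevented if the disruption does occur


def break_even_probability(m: Mitigation) -> float:
    """Probability above which acting is cheaper in expectation."""
    return m.cost / m.loss_avoided


def should_act(p_disruption: float, m: Mitigation) -> bool:
    """Act when the expected loss avoided exceeds the mitigation cost."""
    return p_disruption * m.loss_avoided > m.cost


if __name__ == "__main__":
    # Hypothetical example: rebook on an alternative carrier ahead of a launch.
    rebook = Mitigation(name="rebook air freight", cost=20_000, loss_avoided=100_000)
    print("Break-even probability:", break_even_probability(rebook))
    print("Act at 25%?", should_act(0.25, rebook))
    print("Act at 15%?", should_act(0.15, rebook))
```

With these assumed figures the break-even point is 20%, so a 25% forecast justifies rebooking and a 15% forecast does not. The rule only works if the probabilities are well calibrated, which is why calibration matters more than raw accuracy here.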

Governance & Risk Assessment

- Data Privacy & Sovereignty: Training on internal communications and supplier data raises significant privacy and contractual concerns. Federated learning or strict data anonymization protocols would be essential.

- Model Bias & Calibration Drift: A model trained on past disruptions may fail to anticipate novel risks (e.g., a new type of trade sanction). Continuous monitoring and re-calibration are required to maintain reliability.

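- Over-reliance & Alert Fatigue: Poorly implemented, a system like this could generate excessive false alarms, leading to alert fatigue and ignored warnings. The opposite risk is over-reliance, where teams treat a single probability as a verdict rather than as one input to a decision. The emphasis on calibrated probabilities is key to building trust.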

- Maturity Level: This is cutting-edge academic research, not a commercial product. The leap from a published paper on arXiv to a stable, production-grade system is substantial and would likely require a dedicated, skilled team working for an estimated 12-18 months.
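
The continuous monitoring mentioned under calibration drift could be sketched as a rolling Brier score compared against a baseline measured at deployment. This is an assumed design, not something the paper describes; the `CalibrationMonitor` class, the window size, and the tolerance are hypothetical choices.

```python
from collections import deque


class CalibrationMonitor:
    """Tracks a rolling Brier score and flags drift against a baseline."""

    def __init__(self, baseline_brier: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline_brier
        self.tolerance = tolerance
        # Keep only the most recent `window` squared errors.
        self.errors = deque(maxlen=window)

    def record(self, forecast: float, outcome: int) -> None:
        """Store the squared error once the outcome (0 or 1) is known."""
        self.errors.append((forecast - outcome) ** 2)

    def rolling_brier(self) -> float:
        if not self.errors:
            raise ValueError("no resolved forecasts recorded yet")
        return sum(self.errors) / len(self.errors)

    def drifted(self) -> bool:
        """True once the window is full and the score exceeds baseline + tolerance."""
        window_full = len(self.errors) == self.errors.maxlen
        return window_full and self.rolling_brier() > self.baseline + self.tolerance


if __name__ == "__main__":
    monitor = CalibrationMonitor(baseline_brier=0.10, window=4)
    for _ in range(4):
        monitor.record(0.1, 0)  # low forecasts, no disruptions: well behaved
    print("Drift after quiet period:", monitor.drifted())
    for _ in range(4):
        monitor.record(0.1, 1)  # low forecasts, but disruptions occurred
    print("Drift after missed events:", monitor.drifted())
```

A drift flag would be a prompt for human review and possible re-calibration or retraining, not an automatic action.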