What Happened

NVIDIA has released Nemotron-Cascade 2 on the Hugging Face Hub. It is a 30-billion-parameter Mixture-of-Experts (MoE) model that activates approximately 3 billion parameters per token during inference.

The accompanying announcement claims the model achieves "gold medal performance" on problems from the 2025 International Mathematical Olympiad (IMO) and the 2025 International Olympiad in Informatics (IOI). These are annual competitions for pre-university students, not standardized benchmarks. Because their problems demand long chains of reasoning in mathematics and competitive programming, they are increasingly used to evaluate the reasoning abilities of language models.

Technical Details

Based on the provided specifications:

- Total Parameters: 30 billion

- Activated Parameters per Token: ~3 billion (about 10% of the total)

- Architecture: Mixture-of-Experts (MoE)

- Availability: Hugging Face Hub
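The specifications imply some quick arithmetic. The sketch below assumes the rounded 30B/3B counts from the announcement and standard byte sizes for common weight formats; the format list is illustrative, not NVIDIA's published deployment options. It shows that only about 10% of parameters participate per token (90% sparsity), yet all 30B must stay in memory. It also applies the common rough estimate of about 2 FLOPs per active parameter per token, and it ignores KV cache, activations, and framework overhead.

```python
# Back-of-the-envelope sizing for a sparse MoE model.
# Assumptions: rounded counts from the announcement (30B total, ~3B active)
# and standard bytes-per-parameter for each format (not published figures).

TOTAL_PARAMS = 30e9
ACTIVE_PARAMS = 3e9

BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}


def active_fraction(total: float, active: float) -> float:
    """Fraction of all parameters used for any single token."""
    return active / total


def weight_memory_gb(total: float, bytes_per_param: float) -> float:
    """Memory for the weights alone: every expert must be resident, not just the active ones."""
    return total * bytes_per_param / 1e9


def flops_per_token(active: float) -> float:
    """Rough forward-pass cost using the ~2 FLOPs per active parameter rule of thumb."""
    return 2 * active


if __name__ == "__main__":
    frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
    print(f"active fraction: {frac:.0%}, sparsity: {1 - frac:.0%}")
    for fmt, size in BYTES_PER_PARAM.items():
        print(f"{fmt}: ~{weight_memory_gb(TOTAL_PARAMS, size):.0f} GB of weights")
    print(f"~{flops_per_token(ACTIVE_PARAMS) / 1e9:.0f} GFLOPs per token (forward pass)")
```

Running it reports a 10% active fraction, roughly 60 GB of weights in bf16 (30 GB in fp8, 15 GB in int4), and about 6 GFLOPs per token: memory scales with the total count, compute with the active count.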

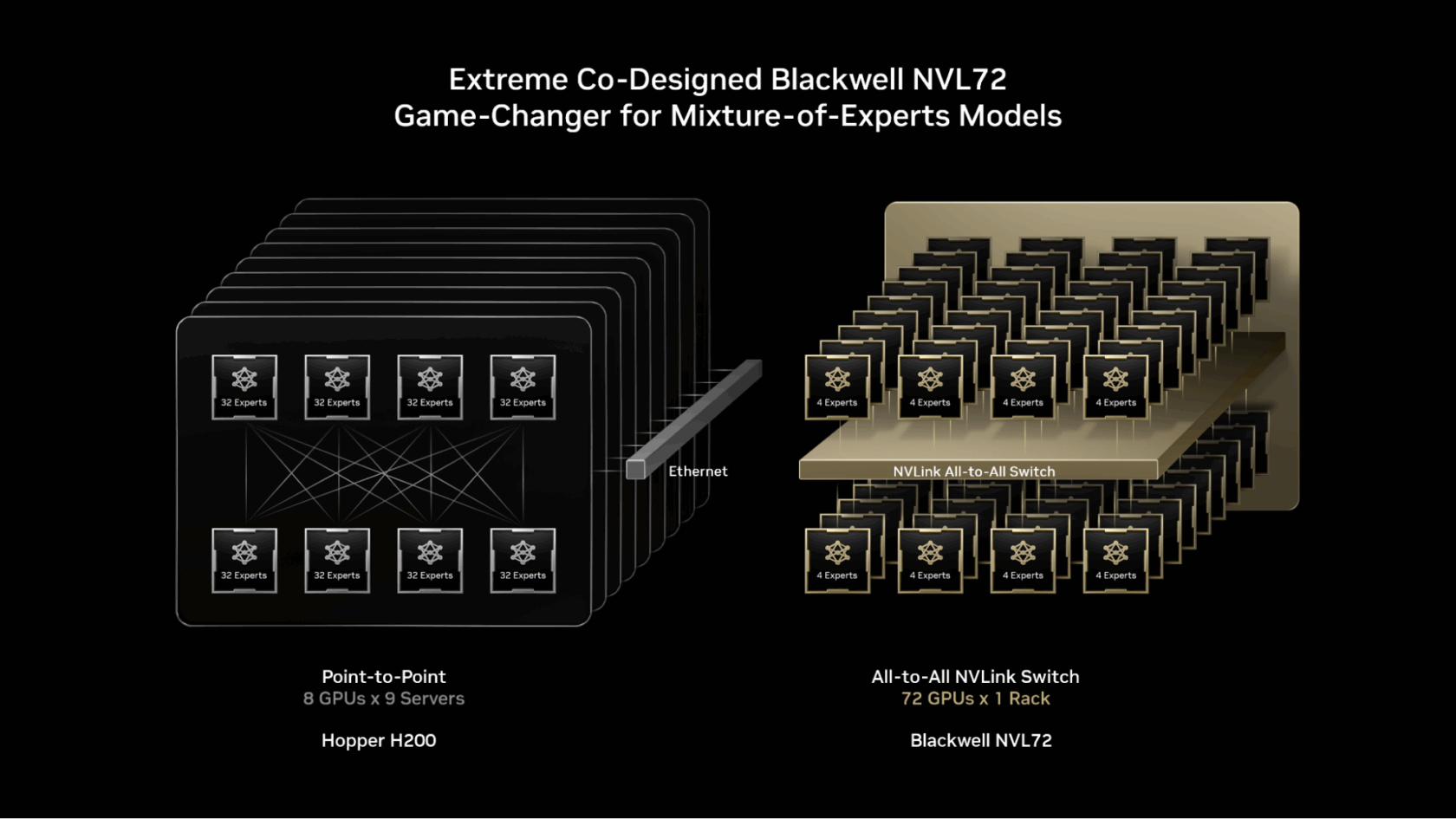

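Mixture-of-Experts models replace some dense feed-forward layers with a set of expert subnetworks and use a learned router to send each token to only a few of them. The parameters used for a given token (the selected experts plus shared components such as attention and embeddings) are the "activated parameters", so the total parameter count can grow without a proportional increase in per-token compute. A roughly 10:1 ratio of total to active parameters is in line with other recent MoE language models of this size, such as Qwen3-30B-A3B.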
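The routing idea can be illustrated with a generic top-k gated layer in plain Python. This is a sketch, not Nemotron-Cascade 2's actual router: the dot-product router, k=2, the toy scaling "experts", and all function names are assumptions for illustration. What it shows is that only the k selected experts execute for a token, which is why the active parameter count is a small fraction of the total.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_moe_layer(
    token: Vector,
    router_weights: List[Vector],  # hypothetical: one scoring vector per expert
    experts: List[Callable[[Vector], Vector]],
    k: int = 2,
) -> Vector:
    # 1. Router: score every expert with a dot product against the token.
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # 2. Keep only the k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # 3. Normalize gate weights over the chosen experts only.
    gates = softmax([logits[i] for i in chosen])
    # 4. Run only the chosen experts and mix their outputs; the rest are never called.
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        y = experts[i](token)
        out = [o + gate * v for o, v in zip(out, y)]
    return out


def demo() -> Tuple[Vector, List[int]]:
    calls = [0, 0, 0, 0]

    def make_expert(idx: int, scale: float) -> Callable[[Vector], Vector]:
        def expert(x: Vector) -> Vector:
            calls[idx] += 1  # record how often each expert actually runs
            return [scale * v for v in x]
        return expert

    experts = [make_expert(i, float(i + 1)) for i in range(4)]
    router = [[0.1, 0.0], [0.5, 0.0], [0.9, 0.0], [0.3, 0.0]]
    out = top_k_moe_layer([1.0, 0.0], router, experts, k=2)
    return out, calls


if __name__ == "__main__":
    out, calls = demo()
    print("output:", out)
    print("expert calls:", calls)  # only experts 1 and 2 run
```

Some MoE designs apply the softmax over all experts before selecting the top k, or add load-balancing losses during training; those variations change the gate values but not the basic property that unselected experts are skipped.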

Context

The release follows NVIDIA's earlier Nemotron models, which include instruction-tuned and reward models aimed at uses such as synthetic data generation and code completion. Targeting IMO and IOI places the model in the high-end reasoning evaluation space, where models such as OpenAI's o1, DeepSeek-R1, and Google's Gemini series have been the main reference points.

No detailed benchmark scores, evaluation methodology, or training data details were provided in the initial announcement. Rigorous performance comparisons will require the model card or an associated technical report.

What to Watch

Practitioners should look for:

- Published results on the IMO and IOI 2025 problems and on standard coding and math benchmarks (e.g., MATH, HumanEval, SWE-bench).

- Inference performance and hardware requirements for the 30B MoE architecture.

- License details and any commercial use restrictions.

- Comparison against similarly sized dense models and other MoE models (like Mixtral 8x22B) on throughput and quality.

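The claim of "gold medal performance" requires verification against the official IMO and IOI evaluation criteria. IMO answers are complete written proofs, scored out of 7 points per problem, and IOI answers are programs scored automatically against hidden test cases under time and memory limits. In both competitions, medal cutoffs are set relative to that year's human contestants, so a model's result is only comparable if it was graded to the same standard and under comparable conditions, such as time limits and the number of attempts allowed.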
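To make the relative nature of the IMO medal bar concrete, here is a simplified sketch. It assumes the IMO format of 6 problems worth 7 points each and the guideline that medals go to at most half of contestants in a gold:silver:bronze ratio of about 1:2:3, so gold goes to roughly the top twelfth of the field. The helper names are hypothetical, and the real jury sets cutoffs at score boundaries and handles ties, which this sketch does not model.

```python
from typing import List

NUM_PROBLEMS = 6
MAX_POINTS_PER_PROBLEM = 7


def approx_gold_cutoff(human_totals: List[int]) -> int:
    """Lowest total reached by roughly the top 1/12 of human contestants (simplified)."""
    ranked = sorted(human_totals, reverse=True)
    n_gold = max(1, len(ranked) // 12)
    return ranked[n_gold - 1]


def is_gold(model_problem_scores: List[int], human_totals: List[int]) -> bool:
    """Compare a model's per-problem scores against the field-relative gold bar."""
    if len(model_problem_scores) != NUM_PROBLEMS:
        raise ValueError("expected one score per problem")
    if not all(0 <= s <= MAX_POINTS_PER_PROBLEM for s in model_problem_scores):
        raise ValueError("each problem is scored from 0 to 7")
    return sum(model_problem_scores) >= approx_gold_cutoff(human_totals)
```

The same model total can clear the bar one year and miss it the next, because the cutoff depends on how that year's human contestants performed.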